by tyler garrett | May 8, 2025 | Solutions

As organizations grow, the complexity and diversity of data operations quickly escalate. It’s no longer viable to rely solely on traditional query acceleration techniques or singular database implementations—modern organizations need strategic query routing that optimizes data flows. Enter Query Mesh Optimization: a powerful paradigm for streamlining data operations, distributing workloads strategically, and dramatically enhancing query performance. Whether you’re facing slower analytics, stale dashboards, or burdensome data pipelines, adopting an optimized query mesh architecture can provide you with the agility, efficiency, and speed to remain competitive. Let’s unravel how strategic query mesh optimization can be your game-changing solution, ensuring your data-driven initiatives provide maximum value with minimal latency.

Understanding Query Mesh and Its Significance in Modern Data Environments

At the heart of improved data operation performance is the concept of a query mesh, a distributed data access layer overlaying your existing data infrastructure. Think of it as efficiently managing complex data queries across multiple data sources—whether data warehouses, relational databases, polyglot persistence architectures, or cloud-based platforms. Unlike monolithic database solutions that struggle to maintain performance at scale, a query mesh dynamically routes each query to the ideal source or processing engine based on optimized routing rules that factor in latency, scalability, data freshness, and workloads.

In today’s multifaceted digital landscape, where organizations integrate data from diverse systems like social media APIs, CRM applications, and ERP solutions like Procore, the significance of efficient querying multiplies. Inefficient data access patterns or suboptimal database query plans often lead to performance degradation, end-user frustration, and reduced efficiency across business intelligence and analytics teams.

Adopting query meshes provides a responsive and intelligent network for data operations—a game-changer for IT strategists who want competitive edges. They can draw insights across distributed data environments seamlessly. Consider the scenario of querying large-scale project data through a Procore API: by optimizing routing and intelligently distributing workloads, our clients routinely achieve accelerated project analytics and improved reporting capabilities. If your firm uses Procore, our Procore API consulting services help ensure rapid optimized queries, secure connections, and reduced processing overhead on critical applications.

Key Benefits of Implementing Query Mesh Optimization

Enhanced Performance and Scalability

Query mesh architectures significantly enhance the performance of analytics tasks and business intelligence dashboards by effectively distributing queries based on their nature, complexity, and required data freshness. By breaking free from traditional constrictive data systems, query mesh routing enables targeted workload distribution. Queries demanding near-real-time responses can be routed to specialized, fast-access repositories, while large historical or analytical queries can route to batch-processing or cloud-based environments like Google’s BigQuery. Organizations routinely achieving these efficiencies note improved query response times, increased scalability, and a markedly better user experience.
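To make the routing idea concrete, here is a minimal sketch of a rule-based router. The backend names (`fast_store`, `warehouse`), their lag figures, and the `Query` fields are hypothetical and chosen only for illustration; a real mesh would also weigh cost, load, and access controls.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Query:
    name: str
    max_staleness_s: int   # how old the data may be, in seconds
    scans_history: bool    # True for large historical or analytical scans


# Hypothetical backends mapped to their typical data lag in seconds.
BACKENDS = {
    "fast_store": 5,       # e.g. an operational replica or cache
    "warehouse": 3600,     # e.g. a batch-loaded cloud warehouse
}


def route(query: Query) -> str:
    """Pick a backend from two simple rules: workload type, then freshness."""
    if query.scans_history:
        return "warehouse"
    # Backends whose data is fresh enough for this query.
    eligible = [b for b, lag in BACKENDS.items() if lag <= query.max_staleness_s]
    if not eligible:
        # Nothing meets the freshness target, so fall back to the freshest backend.
        return min(BACKENDS, key=BACKENDS.get)
    # Among acceptable backends, prefer the one with the most lag,
    # assuming it is the cheaper place to run the query.
    return max(eligible, key=BACKENDS.get)


live = route(Query("live_dashboard", max_staleness_s=30, scans_history=False))
trend = route(Query("yearly_trend", max_staleness_s=86_400, scans_history=True))
```

Under these assumptions, the dashboard query lands on `fast_store` and the yearly trend scan lands on `warehouse`.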

Reduced Infrastructure and Operational Costs

By offloading complex analytical queries to appropriate storage such as data lakes or data warehouses, a query mesh can reduce operational expenses. Traditional single-database models can require expensive hardware upgrades or additional software licenses. With a deliberately planned routing strategy, each workload runs on infrastructure sized for it, which keeps infrastructure overhead down. Implementing modern query mesh solutions can help decision-makers control technical debt, streamline their data infrastructure, and reduce IT staffing overhead. As we’ve emphasized previously, the real expense isn’t expert consulting services; it’s constantly rebuilding and maintaining inefficient systems.

Greater Data Flexibility and Interoperability

Another major advantage is achieving data interoperability across various platforms and data storage mediums within an organization. Query mesh optimization allows stakeholders to integrate heterogeneous data faster. It enables faster prototypes, smoother production deployment, and fewer bottlenecks. Such optimization dramatically simplifies the integration of diverse data types—whether stored in simple formats like Google Sheets or elaborate corporate data lakes—with flexible adapters and data connectors. For instance, if you face roadblocks with large-scale Google Sheet data integration, specialized querying and integration techniques become crucial, ensuring you access vital data quickly without compromising user experiences.

Strategies for Optimizing Query Routing for Maximum Efficiency

Implement Polyglot Persistence Architectures

The first strategic step toward query mesh optimization is adopting polyglot persistence architectures. Rather than forcing every business-specific dataset into a single relational database, organizations benefit from choosing specialized databases or storage solutions tailored to each purpose. Real-time operational queries, transactional operations, and analytical batch queries are each served by a store optimized for that query pattern, which improves responsiveness and reduces latency for end users.

Virtualization through SQL Views

Creating effective virtualization layers with SQL views can help ease complexity within query routing strategies. These convenient and powerful features enable analysts and developers to query complex data structures through simplified interfaces, effectively masking underlying complexity. Building virtual tables with SQL views contributes significantly toward maintaining query simplicity and managing performance-intensive data operations fluidly, enabling your query mesh strategy to distribute queries confidently.
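As a small illustration, the sketch below uses Python's built-in `sqlite3` module to define a view that hides a join and an aggregation behind a single name. The tables, columns, and the view name `revenue_by_region` are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE customers (id INTEGER PRIMARY KEY, region TEXT);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL);

    INSERT INTO customers VALUES (1, 'west'), (2, 'east');
    INSERT INTO orders VALUES (1, 1, 100.0), (2, 1, 50.0), (3, 2, 70.0);

    -- The view hides the join and aggregation behind a simple name.
    CREATE VIEW revenue_by_region AS
        SELECT c.region AS region, SUM(o.amount) AS revenue
        FROM orders AS o
        JOIN customers AS c ON c.id = o.customer_id
        GROUP BY c.region;
    """
)

# Consumers query the view as if it were a table.
rows = conn.execute(
    "SELECT region, revenue FROM revenue_by_region ORDER BY region"
).fetchall()
```

Analysts only need to know `revenue_by_region`; the underlying tables can be reorganized later as long as the view keeps returning the same columns.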

Predictive Query Routing and Intelligent Query Optimization

Predictive query routing uses machine learning-driven algorithms to anticipate query processing bottlenecks and make routing decisions automatically. The router continuously analyzes query behavior patterns and data availability across different silos or databases, then adjusts its routing and prioritization parameters. Tools that employ intelligent routing decisions allow faster query delivery and smoother business intelligence outcomes, which feed directly into better business decisions. Embracing automation technologies for query routing can become a major differentiator for firms committed to advanced data analytics and innovation.
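A full machine learning model is beyond the scope of a short example, so the sketch below substitutes a much simpler stand-in: an exponentially weighted moving average (EWMA) of observed latency per backend. The class name, backend names, and smoothing factor are assumptions made for illustration only.

```python
class LatencyAwareRouter:
    """Routes to the backend with the lowest predicted latency (EWMA estimate)."""

    def __init__(self, backends, alpha=0.5):
        self.alpha = alpha  # weight given to the newest observation
        self.estimate = {b: None for b in backends}

    def observe(self, backend, latency_ms):
        """Fold a newly measured latency into the running estimate."""
        prev = self.estimate[backend]
        if prev is None:
            self.estimate[backend] = latency_ms
        else:
            self.estimate[backend] = self.alpha * latency_ms + (1 - self.alpha) * prev

    def choose(self):
        """Try unmeasured backends first, then pick the lowest estimate."""
        for backend, est in self.estimate.items():
            if est is None:
                return backend
        return min(self.estimate, key=self.estimate.get)


router = LatencyAwareRouter(["replica", "warehouse"])
router.observe("replica", 100)
router.observe("warehouse", 200)
first_choice = router.choose()   # replica looks faster so far

router.observe("replica", 400)   # replica slows down: estimate becomes 250
second_choice = router.choose()  # routing shifts to the warehouse
```

The same interface could later be backed by a learned model that also takes query shape and time of day into account.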

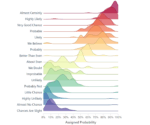

Visual Analytics to Communicate Query Mesh Optimization Insights

Query mesh optimization isn’t merely a backend technical decision; it’s vital that stakeholders across business operations clearly grasp the value of improvements delivered by data strategies. This understanding grows through intuitive, impactful visuals representing performance metrics and query improvements. Selecting suitable visualization tools and tactics can drastically elevate stakeholder and leadership perception of your company’s analytical capabilities.

Thoughtfully chosen charts within dashboards help demonstrate query improvements over time. Select visualizations that simplify complex analytics signals, offer intuitive context, and enable quick decision-making, and make sure the chart type suits the data so the optimization results come across clearly. Additionally, visualizations like sparklines give stakeholders immediate insight into performance gains, reduced query latency, and throughput improvements. Learn how to build these efficiently by exploring how to create a sparkline chart in Tableau Desktop.

Final Thoughts: Aligning Query Mesh Optimization with Strategic Business Goals

Strategically optimized query routing should always align with broader business objectives: lowering operational costs, enhancing user experiences, creating faster analytics pathways, and empowering stakeholders with richer, prompt insights. By harnessing Query Mesh Optimization, businesses elevate their data analytics culture, dramatically improving productivity and accelerating growth through insightful, data-informed decisions.

A carefully architected query routing architecture helps businesses maintain operational flexibility, innovate faster, and consistently outperform competitors. Reducing latency, cutting infrastructure costs, achieving performance scalability, and ensuring data interoperability will undoubtedly make your company more agile, adaptive, and responsive to market conditions. At Dev3lop, we specialize in leveraging proven methodologies to deliver maximum value from your data infrastructure, helping you future-proof your technology investments and gain competitive advantages in highly demanding data-driven environments.

Curious about deploying query mesh optimization within your organization? Let’s discuss your unique data challenges and opportunities ahead.

by tyler garrett | May 8, 2025 | Solutions

As enterprises increasingly rely on a tangled web of APIs, platforms, and microservices, ensuring consistency, quality, and clarity is becoming critical. DataContract-driven development is the forward-thinking approach that cuts through complexity by aligning development, analytics, and operational teams around clearly defined data practices. By establishing explicit expectations through DataContracts, teams not only streamline integration but also maximize value creation, fostering collaborative innovation that scales. Let’s unpack what DataContract-driven development entails, why it matters, and how your enterprise can leverage it to revolutionize data-driven practices.

What is DataContract-Driven Development?

At its core, DataContract-driven development revolves around explicitly defining the structure, quality, and expectations of data exchanged between different teams, APIs, and services. Think of it like a legally binding agreement, but in the context of software engineering. These contracts clearly specify how data should behave, the schema to adhere to, acceptable formats, and interactions between producer and consumer systems.

Historically, teams faced conflicts and misunderstandings due to ambiguous data definitions, inconsistent documentation, and frequent schema changes. Adopting DataContracts eliminates these uncertainties by aligning stakeholders around consistent definitions, encouraging predictable and maintainable APIs and data practices. It’s similar to how well-designed API guidelines streamline communication between developers and end users, making interactions seamless.

When teams explicitly define their data agreements, they empower their analytics and development groups to build robust solutions confidently. Data engineers can reliably construct scalable pipelines, developers see streamlined integrations, and analysts benefit from clear and dependable data structures. In essence, DataContract-driven development lays the groundwork for efficient collaboration and seamless, scalable growth.

Why DataContract-Driven Development Matters to Your Business

The increasing complexity of data ecosystems within organizations is no secret; with countless services, APIs, databases, and analytics platforms, maintaining reliable data flows has become a significant challenge. Without proper guidance, these tangled data webs lead to costly errors, failed integrations, and inefficient data infrastructure. DataContract-driven development directly addresses these challenges, delivering vital clarity, efficiency, and predictability to enterprises seeking competitive advantages.

Aligning your teams around defined data standards facilitates faster problem-solving, minimizes mistakes, and enhances overall collaboration—enabling businesses to pivot more quickly in competitive markets. By explicitly detailing data exchange parameters, DataContracts offer enhanced systems integration. Teams leveraging these well-defined data agreements significantly reduce misunderstandings, data quality issues, and integration errors, maximizing productivity and making collaboration painless.

Furthermore, adopting this model fosters data democratization, providing enhanced visibility into data structures, enabling ease of access across teams and driving insightful analysis without intensive oversight. DataContracts directly support your organization’s role in delivering value swiftly through targeted API engagements, solidifying collaboration, consistency, and efficiency across the business landscape.

The Pillars of a Strong DataContract Framework

Building a reliable, impactful DataContract framework inevitably involves several foundational pillars designed to manage expectations and drive positive outcomes. Let’s explore the key elements businesses should consider when venturing down a DataContract-driven pathway:

Clearly Defined Data Schemas

Foundational to DataContracts are explicit schemas that dictate precise data formats, types, cardinality, and structures. Schemas eliminate guesswork, ensuring everyone accessing and producing data understands expectations completely. By leveraging clear schema definitions early, teams prevent confusion, potential integration conflicts, and unnecessary maintenance overhead later in the process.
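One way to picture a schema contract is as a list of field definitions plus a function that reports violations. The sketch below is a hand-rolled illustration, not a specific contract tool; `Field`, `ORDER_CONTRACT`, and `violations` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Field:
    name: str
    type: type
    required: bool = True


# Hypothetical contract for an "order" record.
ORDER_CONTRACT = [
    Field("order_id", int),
    Field("amount", float),
    Field("coupon", str, required=False),
]


def violations(record: dict, contract) -> list[str]:
    """Return human-readable contract violations (an empty list means valid)."""
    problems = []
    for field in contract:
        if field.name not in record:
            if field.required:
                problems.append(f"missing required field: {field.name}")
            continue
        value = record[field.name]
        if not isinstance(value, field.type):
            problems.append(
                f"{field.name}: expected {field.type.__name__}, "
                f"got {type(value).__name__}"
            )
    known = {field.name for field in contract}
    for extra in sorted(record.keys() - known):
        problems.append(f"unexpected field: {extra}")
    return problems


ok = violations({"order_id": 1, "amount": 9.5}, ORDER_CONTRACT)
bad = violations({"order_id": "1"}, ORDER_CONTRACT)
```

In practice a team would more likely express the same contract in an established schema format and let a validator enforce it; the point here is only that the expectations are explicit and machine-checkable.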

Versioning and Lifecycle Management

Strong DataContract frameworks maintain robust version control to regulate inevitable schema evolution and gradual expansions. Effective data governance requires transparency around changes, backward compatibility, systematic updates, and straightforward transition periods. This responsible approach keeps schema drift in check and minimizes disruptions during inevitable data transformations.
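Backward compatibility can be checked mechanically when a new contract version is proposed. The sketch below assumes one possible definition of a breaking change: removing a field, changing a field's type, or adding a new required field. The schema layout (field name mapped to a type name and a required flag) is hypothetical.

```python
def breaking_changes(old: dict, new: dict) -> list[str]:
    """Compare two schema versions; each maps field name -> (type name, required)."""
    problems = []
    for name, (old_type, _) in old.items():
        if name not in new:
            problems.append(f"removed field: {name}")
        elif new[name][0] != old_type:
            problems.append(f"type changed: {name} {old_type} -> {new[name][0]}")
    for name, (_, required) in new.items():
        if name not in old and required:
            problems.append(f"new required field: {name}")
    return problems


v1 = {"order_id": ("int", True), "amount": ("float", True)}
# v2 only adds an optional field, so existing consumers keep working.
v2 = {**v1, "coupon": ("str", False)}
# v3 changes a type, drops a field, and adds a required one.
v3 = {"order_id": ("str", True), "currency": ("str", True)}

safe = breaking_changes(v1, v2)
unsafe = breaking_changes(v1, v3)
```

A check like this could run in continuous integration so that a breaking change forces an explicit major version bump and a transition period rather than slipping through unnoticed.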

Data Quality and Validation Standards

Reliable data quality standards embedded within DataContracts help businesses ensure data accuracy, consistency, and fitness for intended use. Teams agree upon validation standards, including defined checks, quality tolerances, and metrics to measure whether data meets quality expectations. Implemented correctly, these frameworks protect stakeholders from inadvertently consuming unreliable or unstable data sources, improving decision-making integrity.
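Validation standards become enforceable once the checks and tolerances are written down as code. The following sketch assumes two illustrative checks on a single numeric field: a maximum null rate and an allowed value range. The function name and thresholds are hypothetical.

```python
def quality_report(rows, field, max_null_rate, min_value, max_value):
    """Measure null rate and range violations for one field against agreed tolerances."""
    total = len(rows)
    nulls = sum(1 for row in rows if row.get(field) is None)
    out_of_range = sum(
        1
        for row in rows
        if row.get(field) is not None and not (min_value <= row[field] <= max_value)
    )
    null_rate = nulls / total if total else 0.0
    return {
        "null_rate": null_rate,
        "out_of_range": out_of_range,
        "passed": null_rate <= max_null_rate and out_of_range == 0,
    }


rows = [{"amount": 10.0}, {"amount": None}, {"amount": 25.0}, {"amount": 12.5}]

lenient = quality_report(rows, "amount", max_null_rate=0.30, min_value=0, max_value=1000)
strict = quality_report(rows, "amount", max_null_rate=0.10, min_value=0, max_value=1000)
```

The same batch passes the lenient tolerance and fails the strict one, which is why the tolerance itself belongs in the contract rather than in each consumer's code.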

Implementing DataContracts: Best Practices for Success

Transitioning towards DataContract-driven development is an exciting journey promising considerable organizational upside but demands careful implementation. Adhering to certain best practices can drastically improve outcomes, smoothing the path towards successful adoption:

Collaborative Cross-Functional Alignment

A successful DataContract initiative cannot exist in isolation. Stakeholder buy-in and cross-functional collaboration remain essential for sustainable success. Leaders must clearly outline data expectations and discuss DataContracts transparently with developers, analysts, engineers, and business personnel alike. Collaborative involvement ensures consistency, support, and accountability from inception to successful implementation, leveraging perspectives from multiple vantage points within your organization.

Utilize Automation and Tooling

Automation plays a vital role in implementing and maintaining DataContract frameworks consistently. Businesses should leverage testing, schema validation, and continuous integration tooling to automatically enforce DataContracts standards. Tools like schema registries, API validation platforms, and automated testing frameworks streamline validation checks, reducing human error, and offering real-time feedback during product rollouts.

Offer Education and Support to Drive Adoption

Education and coaching remain vital considerations throughout both the initial adoption period and continuously beyond. Teams need proper context to see tangible value and prepare to adhere reliably to your new DataContract standards. Offering detailed documentation, well-structured training sessions, interactive workshops, or partnering with experts in API and data consulting can significantly reduce the barrier of entry, ensuring seamless, rapid adoption by optimizing organizational learning.

The Strategic Value of DataContracts for Analytics and Innovation

The strategic importance of DataContracts cannot be overstated, especially regarding analytics initiatives and innovative pursuits within businesses. These defined data frameworks ensure both accuracy and agility for analytics teams, offering clarity about data definitions and streamlining the development of ambitious analytics solutions or data-driven products.

Advanced analytics disciplines, including predictive modeling, machine learning, and artificial intelligence, require pristine datasets, consistency, and stability for operating in complex environments. Without clearly defined DataContracts, analysts inevitably experience frustration, wasted time, and reduced productivity as they navigate unexpected schema changes and unreliable data. Embracing DataContract-driven practices amplifies the potency of your data mining techniques and empowers analytics professionals to deliver meaningful insights confidently.

Moreover, innovation accelerates considerably when teams operate from a solid foundation of reliable, consistent data standards. DataContracts remove organizational noise, allowing streamlined experimentation efforts such as A/B testing, rapid pilot programs, and quick iteration on solutions. Enterprises seeking an edge benefit greatly from adopting structured data governance frameworks, which bolster agility and help deliver tangible results. This foundation accelerates enterprise initiatives by combining coherent data management with streamlined analytics integration, which translates into competitive advantages that help you stay ahead.

Future-Proofing Your Business with DataContract-Driven Development

Looking ahead, technology landscapes become increasingly data-centric, shaping lasting data engineering trends. Mastering robust data-centric strategies using DataContracts sets organizations apart as forward-looking and innovation-ready. Keeping pace with ever-changing technology demands strong foundations around data standards, agreements, and operational simplicity.

Implementing comprehensive DataContracts early not only delivers value immediately but also prepares you for future industry shifts, giving teams across your organization confidence in their data infrastructure. It frees professionals to work at the leading edge, proactively leveraging trends and exploring future data opportunities.

Enterprises pursuing long-term growth must adopt visionary approaches that ensure data trustworthiness and agility. DataContract-driven development is exactly that framework, setting clear guardrails encouraging targeted innovation, offering accurate risk management, accountability, standardization, and increased transparency. It positions your organization strategically to embrace whatever industry disruption emerges next, ensuring continual alignment and ease of scalability, proving DataContracts a cornerstone for growth-minded businesses.

Ready to create your unique DataContract-driven roadmap? Explore our in-depth exploration of 30 actionable data strategies and understand the nuances between grassroots consultancy vs enterprise partnerships to help kickstart your transformational journey.

by tyler garrett | May 7, 2025 | Solutions

Businesses confront immense volumes of complex and multi-dimensional data that traditional analytics tools sometimes struggle to fully harness.

Enter hyperdimensional computing (HDC), a fresh paradigm offering breakthroughs in computation and pattern recognition.

At the crossroads of artificial intelligence, advanced analytics, and state-of-the-art processing, hyperdimensional computing promises not merely incremental progress, but revolutionary leaps forward in capability.

For organizations looking to transform data into actionable insights swiftly and effectively, understanding HDC principles could be the strategic advantage needed to outperform competitors, optimize resources, and significantly enhance outcomes.

In this post, we’ll explore hyperdimensional computing methods, their role in analytics, and the tangible benefits that organizations can reap from deploying these technological innovations.

Understanding Hyperdimensional Computing: An Overview

At its core, hyperdimensional computing (HDC) refers to computational methods that leverage extremely high-dimensional spaces, typically thousands or even tens of thousands of dimensions. Unlike traditional computing models, HDC taps into the capacity to represent data as holistic entities within massive vector spaces. In these high-dimensional frameworks, data points naturally gain unique properties that are incredibly beneficial for memory storage, pattern recognition, and machine learning applications.

But why does dimensionality matter so significantly? Simply put, high-dimensional vectors exhibit useful mathematical characteristics such as robustness, ease of manipulation, and remarkable tolerance of noise and errors. For example, two randomly chosen vectors in such a space are almost always nearly orthogonal, so distinct items rarely get confused. These properties enable hyperdimensional computations to handle enormous datasets, provide accurate pattern predictions, and even improve computational efficiency. Because its core operations are simple element-wise vector operations, HDC is also well suited to parallel processing environments, which directly benefits analytics speed and performance.
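The sketch below illustrates these properties with bipolar hypervectors (entries of +1 or -1) in plain Python. The dimension of 10,000, the seed, and the helper names `random_hv`, `bind`, `bundle`, and `similarity` are choices made for this example; other HDC variants use binary or real-valued vectors.

```python
import random

DIM = 10_000
rng = random.Random(0)  # fixed seed so the example is repeatable


def random_hv():
    """A random bipolar hypervector."""
    return [rng.choice((-1, 1)) for _ in range(DIM)]


def bind(a, b):
    """Element-wise multiplication: associates two hypervectors."""
    return [x * y for x, y in zip(a, b)]


def bundle(*vectors):
    """Element-wise majority vote: superimposes hypervectors (ties go to +1)."""
    return [1 if sum(column) >= 0 else -1 for column in zip(*vectors)]


def similarity(a, b):
    """Normalized dot product: 1.0 for identical vectors, near 0 for unrelated ones."""
    return sum(x * y for x, y in zip(a, b)) / len(a)


a, b, c = random_hv(), random_hv(), random_hv()

unrelated = similarity(a, b)                  # close to 0: random vectors are nearly orthogonal
recovered = bind(bind(a, b), b)               # binding with b twice undoes itself
member = similarity(bundle(a, b, c), a)       # a bundle stays similar to each of its members
```

Binding produces a vector that resembles neither input, while bundling produces one that resembles all of its inputs; most HDC encodings are built from combinations of these two operations.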

Businesses looking to keep pace with the exponential growth of big data could benefit tremendously by exploring hyperdimensional computing. Whether the operation involves intricate pattern detection, anomaly identification, or real-time predictive analytics, hyperdimensional computing offers a significantly compelling alternative to conventional computational frameworks.

The Real Advantages of Hyperdimensional Computing in Analytics

Enhanced Data Representation Capabilities

One notable advantage of hyperdimensional computing is its exceptional capability to represent diverse data forms effectively and intuitively. With traditional analytic methods often limited by dimensional constraints and computational complexity, organizations commonly find themselves simplifying or excluding data that may hold vital insights. Hyperdimensional computing counters this limitation by encoding data into high-dimensional vectors that preserve semantic meaning, relationships, and context exceptionally well.

Thus, hyperdimensional methods greatly complement and amplify approaches like leveraging data diversity to fuel analytics innovation. Organizations become empowered to align disparate data streams, facilitating holistic insights rather than fragmented perspectives. In such scenarios, complex multidimensional datasets—ranging from IoT sensor data to customer behavior analytics—find clarity within ultra-high-dimensional vector spaces.

Inherently Robust and Noise-Resistant Computations

The curse of data analytics often rests with noisy or incomplete datasets. Hyperdimensional computing inherently provides solutions to these problems through its extraordinary tolerance to error and noise. Within high-dimensional vector spaces, small random perturbations and inconsistencies scarcely affect the outcome of data representation or computation. This makes hyperdimensional systems particularly robust, enhancing the credibility, accuracy, and reliability of the resulting insights.
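A small experiment shows this tolerance directly. The sketch below stores three random bipolar hypervectors, corrupts one of them by flipping 3,000 of its 10,000 components, and checks which stored item the noisy vector most resembles. The item names, seed, and noise level are arbitrary choices for illustration.

```python
import random

DIM = 10_000
rng = random.Random(42)


def random_hv():
    return [rng.choice((-1, 1)) for _ in range(DIM)]


def flip_noise(vector, n_flips):
    """Return a copy of `vector` with `n_flips` randomly chosen components negated."""
    noisy = list(vector)
    for i in rng.sample(range(len(vector)), n_flips):
        noisy[i] = -noisy[i]
    return noisy


def similarity(a, b):
    return sum(x * y for x, y in zip(a, b)) / len(a)


# Hypothetical item memory: three stored patterns.
memory = {name: random_hv() for name in ("alpha", "beta", "gamma")}

noisy = flip_noise(memory["beta"], n_flips=3_000)   # corrupt 30% of the components
scores = {name: similarity(vec, noisy) for name, vec in memory.items()}
best_match = max(scores, key=scores.get)
```

Even with 30% of its components flipped, the noisy vector keeps a similarity of 0.4 to its original, while its similarity to the unrelated items stays near zero, so nearest-neighbor lookup still recovers `beta`.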

For instance, organizations implementing complex analytics in finance need meticulous attention to accuracy and privacy. By leveraging hyperdimensional computing methodologies—combined with best practices outlined in articles like protecting user information in fintech systems—firms can maintain stringent privacy and provide robust insights even when dealing with large and noisy datasets.

Practical Use Cases for Hyperdimensional Computing in Analytics

Real-Time Anomaly Detection and Predictive Analytics

An immediate application for hyperdimensional computing resides in real-time anomaly detection and predictive analytics. These tasks require performing sophisticated data analysis on large, rapidly changing datasets. Traditional approaches often fall short due to computational delays and inefficiencies in handling multidimensional data streams.

Hyperdimensional computing alleviates these bottlenecks, efficiently transforming real-time event streams into actionable analytics. Enterprises operating complex microservices ecosystems can greatly benefit by combining robust data architecture patterns with hyperdimensional approaches to detect unusual activities instantly, prevent downtime, or predict infrastructure challenges effectively.

Efficient Natural Language Processing (NLP)

Another promising hyperdimensional computing application lies in natural language processing. Due to the sheer abundance and diversity of linguistic information, NLP tasks can significantly benefit from HDC’s capabilities of representing complex semantic concepts within high-dimensional vectors. This approach provides rich, computationally efficient embeddings, improving analytics processes, such as sentiment analysis, chatbot conversations, or intelligent search behaviors.
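One common way to encode text in HDC is to build a hypervector per character n-gram and sum them. The sketch below does this for character trigrams, using a cyclic shift to mark each letter's position. The dimension, seed, sample strings, and helper names are assumptions for this example, and it captures surface similarity only, not deep semantics.

```python
import math
import random

DIM = 4_000
rng = random.Random(7)
_letters = {}  # item memory: one random bipolar hypervector per character


def letter_hv(ch):
    if ch not in _letters:
        _letters[ch] = [rng.choice((-1, 1)) for _ in range(DIM)]
    return _letters[ch]


def rotate(vector, k):
    """Cyclic shift by k positions; marks a letter's position within the trigram."""
    k %= len(vector)
    return vector[-k:] + vector[:-k] if k else list(vector)


def encode(text):
    """Sum the hypervectors of all character trigrams in `text`."""
    total = [0] * DIM
    for i in range(len(text) - 2):
        a, b, c = (letter_hv(ch) for ch in text[i:i + 3])
        ra, rb = rotate(a, 2), rotate(b, 1)
        for j in range(DIM):
            total[j] += ra[j] * rb[j] * c[j]  # bind the three position-tagged letters
    return total


def cosine(u, v):
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(y * y for y in v))
    return dot / (norm_u * norm_v)


query = encode("the quick brown fox")
near = cosine(query, encode("the quick brown cat"))     # shares most trigrams
far = cosine(query, encode("lorem ipsum dolor sit"))    # shares none
```

Texts that share many trigrams end up with a high cosine similarity, while unrelated texts score near zero, which is the basis for simple similarity search or classification over such encodings.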

With hyperdimensional computing powering NLP analytics, organizations can transform textual communications and user interactions into valuable insights rapidly and accurately. For decision-makers keen on deploying solutions like NLP-powered chatbots or enhancing ‘data-driven case studies,’ incorporating strategies highlighted in this guide on creating analytics-driven narratives becomes decidedly strategic.

Integration Strategies: Bringing Hyperdimensional Computing Into Your Analytics Stack

Once you recognize the potential of hyperdimensional computing, the next essential phase is integrating this methodology into existing analytics infrastructure. Successful integration requires solid foundational preparation such as data consolidation, schema alignment, and robust data management practices, especially the methodologies described in articles like ETL’s crucial role in data integration.

Integrating hyperdimensional computing alongside foundational analytic data solutions such as dependable PostgreSQL database infrastructures supports smooth transitions and comfortable scaling to future data-processing demands. Moreover, pairing these integrations with modern identity and data security standards like SAML-based security frameworks ensures security measures accompany the rapid analytical speed HDC provides.

Educational and Talent Considerations

Implementing hyperdimensional computing effectively requires specialized skill sets and theoretical foundations distinct from traditional analytics. Fortunately, institutions like The University of Texas at Austin actively train new generations of data professionals versed in innovative data approaches like hyperdimensional theory. Organizations seeking competitive analytical advantages must, therefore, invest strategically in recruiting talent or developing training programs aligned to these cutting-edge methodologies.

Simultaneously, simplified yet robust automation solutions like Canopy’s task scheduler provide efficiency and scalability, enabling analytics teams to focus more on value-driven insights rather than repetitive operational tasks.

Conclusion: Embracing the Future of Advanced Analytics

Hyperdimensional computing stands as a compelling approach reshaping the landscape of analytics, opening substantial opportunities ranging from enhanced data representations and noise-resistant computations to real-time anomaly detection and advanced language processing operations. To remain competitive in an evolving technological scenario, adopting practices such as hyperdimensional computing becomes more a necessity than an option. By consciously integrating HDC with robust infrastructures, fostering specialized talent, and embracing cutting-edge data management and security practices, organizations carefully craft competitive edges powered by next-generation analytics.

Hyperdimensional computing isn’t merely innovation for tomorrow—it’s innovation your business can leverage today.

by tyler garrett | May 7, 2025 | Solutions

In today’s fast-moving landscape of data innovation, harnessing the power of your organization’s information assets has never been more crucial. As companies ramp up their analytical capabilities, decision-makers are grappling with how to ensure their data architectures are robust, trustworthy, and adaptable to change. Enter immutable data architectures—a strategic solution serving as the foundation to build a resilient, tamper-proof, scalable analytics environment. In this comprehensive guide, we’ll unpack exactly what immutable data architectures entail, the significant advantages they offer, and dive deep into proven implementation patterns your organization can tap into. Let’s take the journey toward building data solutions you can rely on for mission-critical insights, innovative analytics, and agile business decisions.

Understanding Immutable Data Architectures: A Strategic Overview

An immutable data architecture is fundamentally designed around the principle that data, once created or recorded, should never be modified or deleted. Instead, changes are captured through new, timestamped records, providing a complete and auditable history of every piece of data. This approach contrasts sharply with traditional data systems, where records are routinely overwritten and updated as information changes, often leading to a loss of critical historical context.
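The core idea fits in a few lines. The sketch below is an in-memory, append-only store in which a "change" is just a new record; the class and method names are hypothetical, and a sequence number stands in for the timestamp to keep the example deterministic.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)  # frozen: a record cannot be modified after creation
class Record:
    key: str
    value: Any
    version: int  # monotonically increasing; stands in for a timestamp here


class AppendOnlyStore:
    """Every write appends a new record; nothing is updated or deleted."""

    def __init__(self):
        self._log: list[Record] = []

    def put(self, key, value) -> Record:
        record = Record(key, value, version=len(self._log) + 1)
        self._log.append(record)
        return record

    def latest(self, key):
        """Current value: the most recently appended record for the key."""
        for record in reversed(self._log):
            if record.key == key:
                return record.value
        raise KeyError(key)

    def history(self, key):
        """Every value the key has ever had, oldest first."""
        return [record.value for record in self._log if record.key == key]


store = AppendOnlyStore()
store.put("price", 10)
store.put("price", 12)  # a change is a new record, not an overwrite

current = store.latest("price")
past = store.history("price")
```

Reading the current state costs a scan in this toy version; production systems typically keep an index or a materialized current-state view alongside the immutable log.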

At Dev3lop, a reliable practitioner in advanced Tableau consulting services, we’ve seen firsthand how industry-leading organizations use immutable architectures to drive trust and accelerate innovation. Immutable architectures store each transaction and operation as an individual record, transforming data warehouses and analytics platforms into living historical archives. Every data mutation generates a new immutable entity that allows your organization unparalleled levels of transparency, reproducibility, and compliance.

This strategic architecture aligns flawlessly with modern analytical methodologies such as event-driven design, data mesh, and DataOps. By implementing immutability in your systems, you set the stage for robust analytics solutions, empowering teams across your organization to gain clarity and context in every piece of data and ensuring decision-makers have accurate, comprehensive perspectives.

Key Benefits of Immutable Data Architectures

Data Integrity and Reliability

Implementing an immutable data architecture dramatically improves data integrity. Because data points are never overwritten or deleted, every change remains visible and errors are easier to trace. Analysts and decision-makers benefit from a data source that is robust, reliable, and inherently trustworthy. Organizations adopting immutable data architectures avoid common data problems such as accidental overwrites, versioning confusion, and loss of historical records, allowing teams to make insightful, impactful decisions quickly and confidently.

This enhanced reliability is critical in high-stakes fields such as healthcare, finance, and compliance-sensitive industries. For example, in healthcare, immutable data structures coupled with data analytics platforms and visualization tools can drastically improve patient outcomes and practitioner decision-making processes. Our analysis of how Data Analytics is Transforming the Healthcare Industry in Austin highlights powerful examples of this synergy.

Enhanced Compliance and Auditability

Immutable data architectures provide valuable support to compliance and governance efforts. By preserving historical data in immutable form, you create a clear, auditable track record that simplifies regulatory reporting and audits. Compliance teams, auditors, and management all benefit from complete transparency, because the audit trail is built into the design rather than maintained as a separate system.

Moreover, when coupled with efficient data analytics or reporting solutions, immutable architectures enable organizations to respond quickly to regulatory inquiries, audits, or compliance verifications. Together, these capabilities reduce manual data reconciliation and lower the risk of regulatory non-compliance and fines.

Empowered Versioning and Collaboration

Immutable architecture provides detailed, always-accessible version control by design. Each entry is timestamped, so anyone in the organization can return to a precise data snapshot to understand past states or recreate past analytical outcomes. Embracing immutability means the team can confidently share data, collaborate freely, and iterate quickly without fearing data corruption.
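As an illustration of returning to a past snapshot, the sketch below rebuilds state "as of" a given moment from an append-only history. The `history` rows and the `snapshot_as_of` function are assumptions made for this example, not part of any specific product.

```python
from datetime import datetime

# Hypothetical append-only history: (recorded_at, key, value), never updated in place.
history = [
    (datetime(2025, 1, 1), "price", 100),
    (datetime(2025, 2, 1), "price", 120),
    (datetime(2025, 2, 15), "stock", 40),
    (datetime(2025, 3, 1), "price", 90),
]


def snapshot_as_of(records, moment):
    """Rebuild the state as it was at `moment` by replaying older records."""
    state = {}
    for recorded_at, key, value in sorted(records, key=lambda r: r[0]):
        if recorded_at > moment:
            break
        state[key] = value  # later versions supersede earlier ones
    return state


february_view = snapshot_as_of(history, datetime(2025, 2, 20))
current_view = snapshot_as_of(history, datetime(2025, 12, 31))
```

Because nothing is overwritten, the February view can be recreated at any later date, which is what makes past analytical outcomes reproducible.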

The advantages of robust version control are clear. Our earlier blog post, “We Audited 10 Dashboards and Found the Same 3 Mistakes,” highlights common pitfalls that result from a lack of data consistency and reproducibility.

Proven Implementation Patterns for Immutable Architectures

Event Sourcing and Streams

Event sourcing is a robust architectural pattern for integrating immutability directly into application logic. Rather than saving just a single representation of state, event sourcing captures every change as an immutable “event.” Each event is appended to an ordered event log, which serves both as an audit mechanism and as the primary source of truth; current state is derived by replaying the log. Modern platforms like Apache Kafka have further matured stream processing technology, making this method increasingly viable and scalable.
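The pattern can be sketched in a few lines. This is an in-memory illustration only: the `Event` and `EventLog` classes and the bank-account event types are hypothetical, and a production system would typically back the log with a durable streaming platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """An immutable fact about something that happened."""
    sequence: int
    kind: str      # "deposited" or "withdrawn" (illustrative event types)
    amount: int


class EventLog:
    """Append-only, ordered log that acts as the source of truth."""

    def __init__(self):
        self._events = []

    def append(self, kind, amount):
        event = Event(sequence=len(self._events) + 1, kind=kind, amount=amount)
        self._events.append(event)
        return event

    def replay(self):
        return tuple(self._events)  # read-only view for consumers


def balance(events):
    """Derive current state by folding over every event in order."""
    total = 0
    for event in events:
        if event.kind == "deposited":
            total += event.amount
        elif event.kind == "withdrawn":
            total -= event.amount
    return total


account_log = EventLog()
account_log.append("deposited", 100)
account_log.append("withdrawn", 30)
account_log.append("deposited", 5)

current_balance = balance(account_log.replay())
balance_after_two = balance(account_log.replay()[:2])
```

Replaying only a prefix of the log yields the state at that earlier point, which is why the same log can serve as both the audit trail and the source of truth.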

For analytical purposes, event-sourced architectures can feed data streams directly into visualization solutions such as Tableau, enabling real-time dashboards and reports. It’s crucial to maintain optimal coding practices and architecture principles—check out our “SQL vs Tableau” article for a deep comparison in choosing tools complementary to event-driven analytics.

Zero-Copy and Append-Only Storage

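Storing data in an append-only fashion on services such as Amazon S3 or HDFS is a straightforward, practical way to implement immutable data sets. Note that object stores like S3 allow an object to be overwritten by default, so immutability comes from the write pattern (always writing new objects) together with features such as S3 versioning or Object Lock. With this approach, data entries are recorded sequentially, greatly reducing the risk of overwriting important historical context.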
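The write-once idea can be sketched on a local filesystem, used here as a stand-in for cloud storage. The `write_once` helper and the file naming scheme are assumptions for illustration; object stores expose their own mechanisms for the same guarantee.

```python
import json
import tempfile
from pathlib import Path


def write_once(directory: Path, name: str, payload: dict) -> Path:
    """Write a new file, refusing to overwrite one that already exists."""
    target = directory / name
    # Mode "x" is exclusive creation: open() raises FileExistsError if the path exists.
    with open(target, "x", encoding="utf-8") as handle:
        json.dump(payload, handle)
    return target


with tempfile.TemporaryDirectory() as tmp:
    store = Path(tmp)
    write_once(store, "orders-000001.json", {"order": 1, "status": "placed"})
    # A later change is a new file with the next sequence number, not an edit.
    write_once(store, "orders-000002.json", {"order": 1, "status": "shipped"})

    try:
        write_once(store, "orders-000001.json", {"order": 1, "status": "tampered"})
        overwrite_blocked = False
    except FileExistsError:
        overwrite_blocked = True

    stored_files = sorted(p.name for p in store.iterdir())
    first_payload = json.loads((store / "orders-000001.json").read_text(encoding="utf-8"))
```

The rejected third write leaves the original file untouched, so the earlier state of the order stays available.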

Furthermore, a zero-copy architecture lets multiple analytical applications and microservices read the same data without duplicating it, and immutability makes that sharing safe because no consumer can change what the others see. Check out our exploration of “Micro Applications: The Future of Agile Business Solutions” to grasp the power of immutable data patterns in modern agile software ecosystems.

Blockchain and Distributed Ledger Technology

Blockchain technology provides a tamper-evident ledger through cryptographic hashing and distributed consensus algorithms: each block includes the hash of the previous one, so altering an earlier record invalidates every block after it. Because of this, businesses can use blockchain to ensure critical data remains intact and verifiable across their decentralized networks and ecosystems.
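The hashing half of that design can be illustrated with a simple hash chain. This sketch deliberately omits distributed consensus and is not a blockchain implementation; the `append_block` and `is_valid` helpers are hypothetical names.

```python
import hashlib
import json


def block_hash(index, data, previous_hash):
    """Hash a block's contents together with the hash of the previous block."""
    payload = json.dumps(
        {"index": index, "data": data, "previous_hash": previous_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_block(chain, data):
    previous_hash = chain[-1]["hash"] if chain else "0" * 64
    index = len(chain)
    chain.append({
        "index": index,
        "data": data,
        "previous_hash": previous_hash,
        "hash": block_hash(index, data, previous_hash),
    })


def is_valid(chain):
    """Recompute every hash and check each link back to its predecessor."""
    for position, block in enumerate(chain):
        expected_previous = chain[position - 1]["hash"] if position else "0" * 64
        if block["previous_hash"] != expected_previous:
            return False
        if block["hash"] != block_hash(block["index"], block["data"], block["previous_hash"]):
            return False
    return True


ledger = []
append_block(ledger, "alice pays bob 10")
append_block(ledger, "bob pays carol 4")
valid_before = is_valid(ledger)

ledger[0]["data"] = "alice pays bob 1000"  # simulate tampering with history
valid_after = is_valid(ledger)
```

Editing the first entry changes its recomputed hash, so validation fails and the tampering is detectable.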

Blockchain is especially relevant in sensitive transaction environments and smart contracts, where proof of precise historical activity is essential. Our recent blog “Exploring the Exciting World of Quantum Computing” touches upon future technologies that complement these immutable infrastructures.

Scaling Immutable Architectures for Advanced Analytics

Scaling an immutable architecture efficiently requires strategic storage management and optimized queries, because append-only data keeps accumulating. When working with data warehousing tools or subset extracts, row-limiting SQL patterns like the “SELECT TOP Statement” help retrieve only the rows an analysis needs rather than the full history.
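Here is a small sketch of row limiting against an insert-only table, using Python's built-in `sqlite3` module. The `readings` table and its columns are made up for the example, and SQLite spells the clause `LIMIT` where SQL Server uses `SELECT TOP`.

```python
import sqlite3

connection = sqlite3.connect(":memory:")
connection.execute(
    "CREATE TABLE readings (id INTEGER PRIMARY KEY, sensor TEXT, value REAL, recorded_at TEXT)"
)
# Insert-only workload: rows are added, never updated.
connection.executemany(
    "INSERT INTO readings (sensor, value, recorded_at) VALUES (?, ?, ?)",
    [("s1", float(i), f"2025-01-{i:02d}") for i in range(1, 31)],
)

# SQLite spells row limiting as LIMIT; SQL Server would use SELECT TOP (3) ...
latest_three = connection.execute(
    "SELECT recorded_at, value FROM readings ORDER BY recorded_at DESC LIMIT ?",
    (3,),
).fetchall()
connection.close()
```

Ordering by the timestamp and limiting the result returns just the most recent versions, which is a common access pattern once history is never deleted.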

Maintaining optimal architecture goes beyond storage and analytics. Immutable patterns make systems inherently ready for powerful APIs. Check out our “Comprehensive API Guide for Everyone” to understand how API-centric designs are complemented profoundly by immutability patterns.

Visualizing Immutable Data: The Importance of Effective Design

Effective data visualization is critical when working with immutable datasets. As data accumulates, visualization clarity becomes essential to unlocking insights. In our recent article “The Role of Color in Data Visualization,” we demonstrate how creative visualization principles clarify scale and context within expansive immutable data sources.

Conclusion: Prepare for the Future with Immutable Architecture

As organizations face greater demands for transparency, accuracy, and agility in analytical decision-making, immutable data architectures offer compelling advantages. By leveraging event sourcing, append-only storage, and even blockchain methodology, companies building these immutable environments can expect their investments to pay off in speed, auditability, regulatory compliance, and reliable innovation, strengthening their competitive edge for the future.

At Dev3lop, our team stands ready to guide you successfully through your strategic implementation of immutable architectures, aligning perfectly with your innovation-led analytics goals.

by tyler garrett | May 7, 2025 | Solutions

Imagine a world where information is transformed seamlessly into actionable insights at the exact point where it originates.

No waiting, no latency, no unnecessary routing back and forth across countless data centers—only real-time analytics directly at the data source itself.

This approach, known as Edge Analytics Mesh, isn’t merely an ambitious innovation; it’s a fundamental shift in how companies leverage data.

From improving speed and reducing complexity in proactive decision-making to enhancing privacy and optimizing infrastructure costs, Edge Analytics Mesh is redefining data strategy.

For businesses and leaders seeking agile, scalable solutions, understanding the promise and implications of processing data precisely where it’s created has never been more critical.

Understanding Edge Analytics Mesh: A New Paradigm in Data Processing

Edge Analytics Mesh is a sophisticated architecture designed to decentralize analytics and decision-making capabilities, placing them closer to where data is actually generated—commonly referred to as “the edge.” Rather than funneling massive amounts of raw data into centralized servers or data warehouses, businesses now rely on distributed analytical nodes that interpret and process data locally, significantly lowering latency and network congestion.

Traditional data analytics architectures often function as centralized systems, collecting immense volumes of data from disparate locations into a primary data lake or data warehouse for subsequent querying and analysis. However, this centralized approach increasingly presents limitations such as delayed insights, greater exposure to network issues, higher bandwidth demand, and inflated data transfer costs. By adopting Edge Analytics Mesh, companies effectively decentralize their analytics process, allowing the edge nodes at IoT devices, factories, point-of-sale systems, or autonomous vehicles to analyze and act upon data in real time while spreading the computational load across many network nodes.

Additionally, Edge Analytics Mesh aligns naturally with modern hybrid and multi-cloud strategies, effectively complementing traditional centralized analytics. As data and workloads grow increasingly decentralized, processing data where it is produced can help companies reduce operational complexity, a theme we discussed at length in the article “SQL Overkill: Why 90% of Your Queries Are Too Complicated”. Adopting edge-based analytical architectures thus supports agility and scalability for future growth.

Benefits of Implementing Analytics at the Edge

Real-time Decision Making and Reduced Latency

When analytical processes are performed near the source, latency dramatically decreases, resulting in faster, real-time decisions. Consider scenarios such as self-driving vehicles, industrial control systems, or smart city implementations. In these contexts, decision-making that occurs within milliseconds can be crucial to overall operational success and safety. With centralized analytics, these critical moments can quickly become bottlenecks as data travels back and forth between site locations and cloud servers. Edge analytics drastically mitigates these risks, delivering instant data insights precisely when they’re most actionable and impactful.
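A local decision loop might look like the sketch below. The `EdgeController` class and its temperature threshold are hypothetical; the point is only that the rule runs on the device, with no call to a remote service.

```python
from dataclasses import dataclass


@dataclass
class EdgeController:
    """Hypothetical controller that decides locally, with no network round trip."""
    max_temperature_c: float
    shutdowns: int = 0

    def on_reading(self, temperature_c: float) -> str:
        # The rule is evaluated on the device itself, so the response time
        # does not depend on connectivity to a central service.
        if temperature_c > self.max_temperature_c:
            self.shutdowns += 1
            return "shutdown"
        return "ok"


controller = EdgeController(max_temperature_c=90.0)
actions = [controller.on_reading(t) for t in (71.5, 88.0, 93.2, 85.0)]
```

The third reading exceeds the threshold and triggers the action immediately, even if the link to the cloud is slow or unavailable.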

Decreased Cost and Enhanced Efficiency

Implementing Edge Analytics Mesh significantly reduces the need to transmit large data volumes across networks or to cloud storage repositories, cutting infrastructure expenses and alleviating network bandwidth congestion. This cost saving is essential, particularly as companies discover that Software as a Service (SaaS) platforms grow more expensive as they scale and as business needs evolve. Edge-focused analytics helps businesses minimize unnecessary data movement, creating a leaner, more cost-effective alternative.
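To see where the savings come from, consider an edge node that uploads a summary instead of every raw reading. The window size, the integer readings (tenths of a degree), and the `summarize` helper below are illustrative assumptions.

```python
import json
import statistics


def summarize(readings):
    """Reduce a window of raw readings to a small summary for upload."""
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": round(statistics.fmean(readings), 2),
    }


# Illustrative window: 600 readings in tenths of a degree,
# e.g. one per second for ten minutes.
raw_window = [200 + (i % 10) for i in range(600)]

summary = summarize(raw_window)
raw_bytes = len(json.dumps(raw_window).encode("utf-8"))
summary_bytes = len(json.dumps(summary).encode("utf-8"))
```

Comparing `raw_bytes` with `summary_bytes` shows how much less data crosses the network when aggregation happens at the source.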

Improved Data Security, Governance, and Compliance

Edge-based analytics keeps sensitive data close to its point of origin, reducing exposure and improving overall data governance and compliance. By processing data at the edge, businesses gain better control over how sensitive information moves across their infrastructure, simplifying compliance efforts while mitigating the risk of data loss or cyber-attacks. Consequently, Edge Analytics Mesh proves particularly compelling for businesses in heavily regulated sectors such as healthcare and finance, or in secure IoT ecosystems.
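One way to keep sensitive fields at the edge is to pseudonymize and trim records before anything is uploaded. The sketch below uses a keyed hash from Python's standard library; the `SITE_KEY` value, field names, and helper functions are hypothetical, and real deployments would need proper key management and a review against the applicable regulations.

```python
import hashlib
import hmac

# Hypothetical per-site secret; in practice it would come from a secure store.
SITE_KEY = b"example-site-key"


def pseudonymize(identifier: str) -> str:
    """Replace an identifier with a keyed hash so the raw value never leaves the site."""
    return hmac.new(SITE_KEY, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def prepare_for_upload(record: dict) -> dict:
    """Keep only the fields the central platform needs, with the ID pseudonymized."""
    return {
        "patient_ref": pseudonymize(record["patient_id"]),
        "heart_rate": record["heart_rate"],
    }


local_record = {"patient_id": "P-10293", "name": "Jane Doe", "heart_rate": 72}
outbound = prepare_for_upload(local_record)
```

The name and raw identifier stay on the local node, while the central platform still receives a stable reference it can use to group readings.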

Typical Use Cases and Industry Implementations for Edge Analytics Mesh

Smart Cities and Sustainable Urban Development

In smart cities, sensors and IoT devices spread across the urban environment can analyze data in real time and respond immediately. Edge Analytics Mesh can be used to optimize traffic management, enhance public safety, and improve energy distribution. We’ve previously discussed how analytics can shape better urban ecosystems in our explorations of data analytics addressing Austin’s housing affordability crisis. Edge computing can add a direct layer of responsiveness to such analytical thinking.

Manufacturing and Industrial IoT (IIoT)

Manufacturers benefit greatly from Edge Analytics Mesh, particularly through Industrial IoT solutions. Intelligent machinery equipped with edge analytics capabilities can deliver immediate feedback loops that enable predictive maintenance, intelligent supply chain optimization, and real-time quality control. Implementing edge analytics enhances efficiency by catching potential disruptions early, maintaining production levels, and reducing operational costs.
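A very simple form of on-machine monitoring is a rolling-baseline check, sketched below. The `VibrationMonitor` class, window size, and threshold are illustrative assumptions rather than a recommended predictive maintenance model.

```python
from collections import deque
import statistics


class VibrationMonitor:
    """Flags readings that deviate strongly from a rolling baseline."""

    def __init__(self, window=5, threshold=3.0):
        self.recent = deque(maxlen=window)
        self.threshold = threshold

    def check(self, value):
        is_anomaly = False
        if len(self.recent) == self.recent.maxlen:
            mean = statistics.fmean(self.recent)
            spread = statistics.pstdev(self.recent)
            # Guard against a zero spread when the baseline is perfectly flat.
            if spread > 0 and abs(value - mean) > self.threshold * spread:
                is_anomaly = True
        if not is_anomaly:
            self.recent.append(value)  # keep anomalies out of the baseline
        return is_anomaly


monitor = VibrationMonitor(window=5, threshold=3.0)
readings = [1.0, 1.1, 0.9, 1.0, 1.1, 1.0, 4.8, 1.0]
flags = [monitor.check(r) for r in readings]
```

Only the spike is flagged, and because the check runs next to the machine, the alert does not wait on a round trip to a central platform.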

Retail and Customer Experiences

The retail industry can deploy edge analytics to detect purchase patterns, facilitate real-time customer interactions, and enable personalized experiences. For example, retail stores leveraging real-time inventory analytics at the edge can offer customers instant availability information, enhancing the overall shopping experience while reducing the inventory errors and inefficiencies caused by the latency of centralized processing.

Integrating Edge Analytics Mesh with Existing Data Strategies

Edge Analytics Mesh doesn’t require businesses to discard their current analytical stacks. Instead, the approach complements existing infrastructures such as data lakes, data warehouses, and, more recently, data lakehouses, which combine the structure of data warehouses with the flexibility of large-scale data lakes. Our previous guide on Data Lakehouse Implementation explores intelligent integration of cutting-edge architectures, underscoring strategic resilience. By coupling Edge Analytics Mesh with centralized analytical platforms, companies gain operational agility and scalability.
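One way edge and central layers can cooperate is for each node to upload a mergeable summary that the central platform combines. The summary fields and the `merge_summaries` function below are hypothetical and stand in for whatever format a given stack uses.

```python
def merge_summaries(summaries):
    """Combine per-node summaries into one fleet-wide view without raw data."""
    total_count = sum(s["count"] for s in summaries)
    total_sum = sum(s["sum"] for s in summaries)
    return {
        "count": total_count,
        "sum": total_sum,
        "min": min(s["min"] for s in summaries),
        "max": max(s["max"] for s in summaries),
        # Carrying sum and count (not just a mean) keeps the merged mean exact.
        "mean": total_sum / total_count,
    }


# Hypothetical summaries uploaded by two edge nodes.
store_a = {"node": "store-a", "count": 4, "sum": 40, "min": 5, "max": 15}
store_b = {"node": "store-b", "count": 6, "sum": 90, "min": 10, "max": 20}

fleet = merge_summaries([store_a, store_b])
```

The central warehouse or lakehouse still gets an accurate overall picture, while the detailed readings stay at the edge.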

Similarly, businesses must evaluate the roles of open-source versus commercial data integration tools, such as ETL (Extract, Transform, Load) platforms, as highlighted in our deeper dive into open-source and commercial ETL solutions. Companies integrating edge analytics must select solutions that balance cost-efficiency, feature-richness, and compatibility. In doing so, organizations ensure a unified data processing environment across edge and central infrastructures, fully leveraging their analytics potential.

Challenges and Considerations in Adopting Edge Analytics Mesh

While Edge Analytics Mesh clearly offers value, several challenges exist that company strategists must consider when weighing its adoption. The primary consideration is the complexity inherent in geographically dispersed analytics implementations. Businesses must decide which analytical functions belong at the edge and which are better handled centrally, establish troubleshooting and support structures, and ensure interoperability across decentralized settings and data ecosystems.

Organizations must handle data precisely in distributed environments and clearly delineate which scenarios require edge evaluation and which require centralized evaluation. Embracing various analytics objectives means understanding the distinctions between descriptive, diagnostic, predictive, and prescriptive analytics, a topic we explored in depth in our comprehensive guide on data analytics types. Companies adopting edge analytics must ensure processes remain secure, seamless, and fully capable of integrating predictive intelligence effectively.

Adopting Edge Analytics Mesh: A Strategic Move Toward Data Innovation

The rapid pace of business today demands immediate insights with minimal latency. Edge Analytics Mesh is a game-changer, empowering business leaders who seek strategic advantage through agile, data-driven decisions made at the point where data originates. It allows businesses to harness the potential of distributed data environments and innovate where it counts, with practical benefits that include reduced latency, increased savings, enhanced compliance, and improved security. As analytics experts, our team continues to provide robust advice, solutions, and data visualization consultant services to ensure seamless adoption and optimal integration. When properly implemented, Edge Analytics Mesh positions your business confidently at the forefront of technological evolution.