by tyler garrett | May 30, 2025 | Data Management

Companies accumulate vast volumes of data that hold operational, strategic, and competitive value. Protecting sensitive information while still giving stakeholders appropriate access remains a constant challenge. Time-limited access control addresses it by granting permissions that expire on a defined schedule, which keeps data access granular, secure, and adaptable. Business leaders need to balance flexibility with precision so that data accessibility stays aligned with changing organizational objectives, laws, and user roles. In this blog, we look at how to implement time-limited access control and how it can smooth operations, support regulatory compliance, and enable business analytics. Companies that master these practices are better positioned in data-driven markets and give their employees timely access to the insights they need.

Why Time-Limited Access Control Matters

Organizations increasingly rely upon dynamically generated data streams to inform critical decisions and business processes. With this growing reliance comes the intricacy of balancing rapid and secure accessibility against potential risks arising from unauthorized or prolonged exposure of sensitive information. Time-limited access control systems uniquely serve this need by facilitating granular permission management, ensuring resources are available strictly within defined temporal scope. This solution mitigates risks such as unauthorized access, accidental information leaks, and regulatory non-compliance.

Consider collaborative research projects, where external stakeholders must securely access proprietary data sets within predefined timelines. Utilizing time-limited access control systems allows clear boundary management without the manual overhead of revoking permissions—one example of how data-centric organizations must evolve their pipeline infrastructure to embrace smarter automation. Not only does this practice protect intellectual property, but it also fosters trust with external collaborators and partners.
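As a minimal sketch of the idea above, a grant can carry its own validity window so that access lapses without a manual revocation step. The `AccessGrant` class, its field names, and the example user and dataset are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AccessGrant:
    user: str
    resource: str
    starts_at: datetime
    expires_at: datetime

    def is_active(self, now: datetime) -> bool:
        # Half-open window: valid from starts_at up to, but not including, expires_at.
        return self.starts_at <= now < self.expires_at


def grant_for(user, resource, start, duration):
    """Build a grant that expires automatically after `duration`."""
    return AccessGrant(user, resource, start, start + duration)


start = datetime(2025, 6, 1, tzinfo=timezone.utc)
grant = grant_for("external-researcher", "trial_dataset", start, timedelta(days=30))
```

Every access check calls `is_active` with the current time, so an expired grant simply stops matching and nobody has to remember to remove it.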

Further, time-bound permissions prevent prolonged exposure of sensitive data, which is particularly important in industries such as financial services and healthcare, where regulations impose strict penalties for data exposure. Keeping employee access aligned with job duties that change frequently reduces vulnerability while keeping your organization’s information posture agile. Time-limited access control thus becomes a core component of modern data strategy, securing assets while staying responsive to rapid operational shifts.

The Key Components of Time-Limited Access Control Implementation

Dynamic Identity Management Integration

To implement time-limited access controls effectively, an organization first needs to integrate them with a dynamic identity management solution. Identity management systems provide a standardized record of user identities and roles, so that time-based restrictions and permissions stay aligned with changing personnel responsibilities and projects. Integrated identity management platforms, enhanced by artificial intelligence capabilities, allow rapid onboarding, delegation of temporary roles, and automated revocation of permissions after set intervals.
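Automated revocation after a set interval can be sketched as a periodic sweep over temporary role assignments. The dictionary layout and the `revoke_expired` helper below are assumptions for illustration, not the interface of any particular identity platform.

```python
from datetime import datetime, timedelta, timezone


def revoke_expired(assignments, now):
    """Split temporary role assignments into (active, revoked) lists."""
    active, revoked = [], []
    for assignment in assignments:
        if assignment["expires_at"] <= now:
            revoked.append(assignment)
        else:
            active.append(assignment)
    return active, revoked


now = datetime(2025, 6, 1, 12, 0, tzinfo=timezone.utc)
assignments = [
    {"user": "contractor_1", "role": "analyst", "expires_at": now - timedelta(hours=1)},
    {"user": "employee_7", "role": "reviewer", "expires_at": now + timedelta(days=7)},
]
active, revoked = revoke_expired(assignments, now)
```

In practice the revoked list would be handed to whatever system actually removes the role; here it is only returned so the outcome can be inspected.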

Organizations interested in modernizing their identity management infrastructure can leverage robust frameworks such as those discussed in our article on AI agent consulting services, where intelligent agents help streamline identity audits and compliance monitoring. By combining strong user authentication practices with dynamic identity frameworks, companies effectively minimize risk exposure and ensure elevated data security standards.

Context-Aware Policies and Permissions

Defining context-aware policies involves creating dynamically adaptable permissions that shift appropriately as roles, conditions, or situational contexts evolve. Organizations with ambitious data initiatives, such as those leveraging analytics for smart cities, detailed in our case study on data analytics improving transportation in Austin, rely heavily on context-driven privileges. Permissions may adapt following external triggers—such as specific points in project lifecycles, contractual deadlines, regulatory changes, or immediate modifications to job responsibilities.

Adopting technologies focused on context-awareness vastly enhances security posture. Policy administrators find significantly improved workflows, reducing manual intervention while boosting data governance quality. Ultimately, a context-driven permissions system paired with time constraints creates the rigor necessary for modern, complex data assets.
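A context-driven permission paired with a time constraint can be expressed as a single check, as in the sketch below. The policy fields (`roles`, `phases`, `not_before`, `not_after`) and the example values are hypothetical.

```python
from datetime import datetime, timezone


def is_allowed(policy, context, now):
    """Allow access only if role, project phase, and time window all match."""
    return (
        context["role"] in policy["roles"]
        and context["project_phase"] in policy["phases"]
        and policy["not_before"] <= now < policy["not_after"]
    )


policy = {
    "roles": {"auditor"},
    "phases": {"review"},
    "not_before": datetime(2025, 6, 1, tzinfo=timezone.utc),
    "not_after": datetime(2025, 7, 1, tzinfo=timezone.utc),
}
during = datetime(2025, 6, 15, tzinfo=timezone.utc)
```

When the project moves out of the `review` phase or the contractual deadline passes, the same request is refused without anyone editing the user's account.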

Technical Foundations for Implementing Time-Based Controls

Customizable Data Pipeline Architectures

Flexible and highly customizable data pipeline architectures represent another foundational requirement enabling effective and seamless integration of time-limited access controls. By creating pipelines able to branch effectively based on user roles, company permissions, or time-dependent access cycles—as elaborated in our comprehensive guide on data pipeline branching patterns—organizations can implement automated and sophisticated permissioning structures at scale.

Pipeline architecture integrated with flexible branching logic helps isolate data scopes per audience, adjusting dynamically over time. Organizations benefit immensely from leveraging such structured pipelines when implementing temporary project teams, third-party integrations, or fluid user roles. Ensuring the underlying pipeline infrastructure supports effective branching strategies reduces errors associated with manual intervention, tightening security and compliance measures effortlessly.
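One simple way to picture branching by audience is a pipeline step that emits a differently scoped copy of each record per branch. The audiences and column names below are illustrative assumptions.

```python
# Columns each audience is allowed to receive (hypothetical mapping).
BRANCH_COLUMNS = {
    "internal": {"order_id", "customer_email", "amount"},
    "partner": {"order_id", "amount"},
}


def branch(records, audience):
    """Return a copy of each record limited to the columns its audience may see."""
    allowed = BRANCH_COLUMNS[audience]
    return [{k: v for k, v in r.items() if k in allowed} for r in records]


records = [{"order_id": 1, "customer_email": "a@example.com", "amount": 40}]
partner_view = branch(records, "partner")
```

Because the scoping lives in one mapping, adding a temporary project team means adding one entry rather than editing each downstream consumer.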

Automated Testing and Infrastructure Validation

With complex permissioning models like time-limited access coming into place, manual verification introduces risk and scale bottlenecks. Thus, implementing robust and automated testing strategies broadly improves implementation effectiveness. Our resource on automated data testing strategies for continuous integration provides useful methodologies to systematically validate data pipeline integrity and access management rules automatically.

Automated testing verifies that access control definitions match organizational policy and reduces human error. Incorporating continuous automated testing into your data pipeline infrastructure helps create consistent compliance and significantly reduces security vulnerabilities related to misconfigured access privileges. Automation therefore becomes a backbone of robust time-limited control management.
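A check like the following could run in continuous integration to catch misconfigured grants before they reach production. The 90-day limit, the grant layout, and the `find_violations` helper are assumptions made for this sketch.

```python
from datetime import timedelta

MAX_DURATION = timedelta(days=90)  # assumed organizational limit


def find_violations(grants):
    """Return (grant id, reason) pairs for grants that break the policy."""
    violations = []
    for g in grants:
        if g.get("duration") is None:
            violations.append((g["id"], "no expiry"))
        elif g["duration"] > MAX_DURATION:
            violations.append((g["id"], "exceeds maximum duration"))
    return violations


grants = [
    {"id": "g1", "duration": timedelta(days=30)},
    {"id": "g2", "duration": None},
    {"id": "g3", "duration": timedelta(days=365)},
]
violations = find_violations(grants)
```

A CI job would fail the build whenever `violations` is non-empty, so a grant without an expiry never ships unnoticed.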

Advanced Considerations and Strategies

Language-Aware Data Processing and Controls

For global enterprises or businesses operating across languages and international borders, custom collators and language-aware controls become important. As highlighted within our piece about custom collators for language-aware processing, internationalization approaches make string comparison and sorting behave correctly for each locale, which helps access rules that match on names or labels apply consistently across languages. Locally adapted, language-aware access management components also help accommodate differing cultural and jurisdictional regulations.

Analytical Visualizations for Monitoring and Compliance

To effectively oversee time-limited access implementations, visual analytics plays a meaningful role in compliance and monitoring practices. Utilizing analytics dashboards, organizations can achieve real-time insights into data usage, access frequency, and potential anomalies—gaining transparency of user engagement across multiple confidentiality zones or functions. Our detailed exploration on visualization consistency patterns across reports reveals how unified visual analytics help decision-makers efficiently monitor access measures and policy adherence over time.

Optimizing Content and Data Structures for Time-Based Controls

Strategic Data Binning and Segmentation

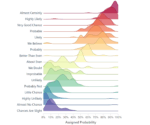

Techniques such as those discussed in our blog about visual binning strategies for continuous data variables help align the data content itself with access paradigms. When data is grouped into well-defined bins or segments, permissions can be enforced per segment rather than per record, which makes dynamic enforcement at granular functional levels easier and saves processing time and computing resources.

SQL Practices for Time-Limited Data Joins

Implementing robust SQL practices, as recommended in the article SQL Joins Demystified, facilitates efficient management of time-bound analytical queries. Joining data tables against time-bounded grant or validity windows lets teams build temporary views that expose only the rows a stakeholder is currently entitled to see, enabling secure but temporary data sharing arrangements at scale.
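To make the join idea concrete, the sketch below uses Python's built-in `sqlite3` module with an in-memory database. The `sales` and `grants` tables, their columns, and the sample rows are invented for illustration; dates are stored as ISO-8601 text so that string comparison matches chronological order.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE sales (region TEXT, amount INTEGER);
    CREATE TABLE grants (grantee TEXT, region TEXT, valid_from TEXT, valid_to TEXT);
    INSERT INTO sales VALUES ('east', 100), ('west', 250);
    INSERT INTO grants VALUES
        ('partner_a', 'east', '2025-06-01', '2025-06-30'),
        ('partner_a', 'west', '2025-01-01', '2025-01-31');
    """
)


def visible_sales(grantee, as_of):
    """Return only the sales rows covered by a grant that is valid on `as_of`."""
    return conn.execute(
        """
        SELECT s.region, s.amount
        FROM sales AS s
        JOIN grants AS g ON g.region = s.region
        WHERE g.grantee = ? AND ? BETWEEN g.valid_from AND g.valid_to
        ORDER BY s.region
        """,
        (grantee, as_of),
    ).fetchall()


june_rows = visible_sales("partner_a", "2025-06-15")
```

Here `june_rows` contains only the `east` row, because the `west` grant ended in January. The sharing arrangement ends when the window closes, with no change to the underlying data.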

Conclusion: Securing Data Innovation Through Time-Limited Controls

Effectively implementing time-limited access controls is crucial in modernizing data infrastructure—protecting your organization’s intellectual capital, managing compliance effectively, and driving actionable insights securely to stakeholders. Organizations achieving mastery in these cutting-edge solutions position themselves significantly ahead in an increasingly data-centric, competitive global marketplace. Leveraging strategic mentorship from experienced analytics consultants and best practices outlined above equips forward-thinking companies to harness and innovate successfully around their protected data assets.

Thank you for your support, follow DEV3LOPCOM, LLC on LinkedIn and YouTube.

by tyler garrett | May 30, 2025 | Data Management

Imagine piecing together fragments of a puzzle from different boxes, each set designed by a different person, yet each containing sections of the same overall picture. Cross-domain identity resolution is much like this puzzle, where disparate data points from multiple, isolated datasets must be accurately matched and merged into cohesive entities. For enterprises, successful entity consolidation across domains means cleaner data, superior analytics, and significantly better strategic decision-making. Let’s delve into how tackling cross-domain identity resolution not only streamlines your information but also unlocks transformative opportunities for scalability and insight.

Understanding Cross-Domain Identity Resolution and Why It Matters

At its core, cross-domain identity resolution is the process of aggregating and harmonizing multiple representations of the same entity across varied data sources, platforms, or silos within an organization. From customer records stored in CRM databases, transactional logs from e-commerce systems, to engagement statistics sourced from marketing tools, enterprises often face inconsistent portrayals of the same entity. Failing to consolidate results in fragmented views that compromise decision-making clarity and reduce operational efficiency.

This lack of consistent identity management prevents your organization from fully realizing the power of analytics to visualize holistic insights. For example, your analytics pipeline could misinterpret a single customer interacting differently across multiple platforms as separate individuals, thus missing opportunities to tailor personalized experiences or targeted campaigns. Bridging these gaps through effective identity resolution is pivotal for data-driven companies looking to build precise customer-centric strategies. Learn more about how effective visualization approaches such as visual analytics for outlier detection and exploration can leverage accurate consolidated identities to produce clearer actionable insights.

The Technical Challenges of Entity Consolidation Across Domains

Despite its immense value, entity consolidation presents unique technical challenges. Data from distinct domains often vary substantially in schema design, data relevance, data velocity, accuracy, and completeness. Different data owners maintain their own languages, definitions, and even encoding standards for similar entities, posing complications for integration. Additionally, unstructured datasets and data volumes skyrocketing in real-time transactional environments significantly complicate straightforward matching and linking mechanisms.

Another vital concern involves data latency and responsiveness. To keep identity resolution responsive at scale, organizations often use methods such as approximate query processing (AQP) for interactive data exploration, trading a small amount of precision for faster interactive operations. Because reconciling sensitive customer or transactional data demands strict accuracy thresholds, that trade-off must be managed carefully, which increases demand for proven technical practices and seasoned guidance.

Approaches and Techniques to Achieve Efficient Identity Resolution

To effectively consolidate entities across multiple domains, organizations must blend algorithmic approaches, human expertise, and strategic data integration techniques. The fundamental step revolves around establishing robust mechanisms for matching and linking entities via entity-matching strategies. Advanced machine-learning algorithms including clustering, decision trees, and deep learning models are widely employed. Organizations are increasingly integrating artificial intelligence (AI) techniques and sophisticated architectures like hexagonal architecture (also known as ports and adapters) to create reusable and robust integration designs.
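Before reaching for machine-learning models, the matching step can be pictured with a much simpler rule-based sketch: an exact match on a normalized email, with a fuzzy name comparison as a fallback. This uses the standard library's `difflib.SequenceMatcher`; the record layout and the 0.85 threshold are assumptions, and a production system would likely use richer features.

```python
from difflib import SequenceMatcher


def normalize(text):
    """Lowercase and collapse whitespace so trivial differences do not block a match."""
    return " ".join(text.lower().split())


def same_entity(a, b, threshold=0.85):
    # An exact match on normalized email wins; otherwise fall back to name similarity.
    if a.get("email") and b.get("email"):
        if normalize(a["email"]) == normalize(b["email"]):
            return True
    score = SequenceMatcher(None, normalize(a["name"]), normalize(b["name"])).ratio()
    return score >= threshold


crm_record = {"name": "Jane Doe", "email": "Jane.Doe@Example.com"}
shop_record = {"name": "J. Doe", "email": "jane.doe@example.com "}
matched = same_entity(crm_record, shop_record)
```

The two records match on email even though the names differ, which is the situation described above where one customer appears differently across platforms.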

Moreover, mastering database retrieval operations through range filtering techniques such as the SQL BETWEEN operator can significantly reduce data retrieval and querying times, ensuring better responsiveness to enterprise identity resolution queries. Beyond query tuning, AI assistants can enhance ingestion workflows. Lessons applicable to organizational workflows, like those covered in what we learned building an AI assistant for client intake, can streamline entity consolidation by automating routine identity reconciliation.

The Importance of Non-blocking Data Loading Patterns

As data volumes escalate and enterprise demands for analytics near real-time responsiveness, traditional blocking-style data loading patterns significantly limit integration capability and flexibility. Non-blocking loading techniques, as explored thoroughly in our piece Non-blocking data loading patterns for interactive dashboards, are essential building blocks to enable agile, responsive identity resolution.

By adopting patterns that facilitate seamless asynchronous operations, analytics initiatives integrate cross-domain entity data continuously without interruption or latency concerns. Non-blocking architecture facilitates greater scalability, effectively lowering manual intervention requirements, reducing the risk of errors, and increasing the consistency of real-time decision-making power. This enables highly responsive visualization and alerting pipelines, empowering stakeholders to take immediate actions based on reliably consolidated entity views.
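The asynchronous pattern can be sketched with Python's `asyncio`: each domain is loaded concurrently instead of one after another. The domain names, delays, and records are placeholders, and `asyncio.sleep` stands in for real I/O.

```python
import asyncio


async def load_domain(name, delay_s, records):
    # asyncio.sleep stands in for a non-blocking network or database call.
    await asyncio.sleep(delay_s)
    return name, records


async def load_all_domains():
    # The three loads run concurrently; total wait is roughly the slowest one.
    results = await asyncio.gather(
        load_domain("crm", 0.03, [{"id": "c-1"}]),
        load_domain("ecommerce", 0.01, [{"id": "o-9"}]),
        load_domain("marketing", 0.02, [{"id": "m-4"}]),
    )
    return dict(results)


by_domain = asyncio.run(load_all_domains())
```

`asyncio.gather` returns results in the order the calls were passed in, regardless of which finishes first, so downstream matching code sees a stable layout.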

Innovative API Strategies and Leveraging APIs for Consolidated Identities

Effective cross-domain identity resolution frequently demands robust interaction and seamless integration across diverse platform APIs. Strategically structured APIs help bridge data entities residing on disparate platforms, enabling streamlined entity matching, validation, and consolidation workflows. For teams aiming at superior integration quality and efficiency, our comprehensive API guide provides actionable strategies to maximize inter-system communication and data consolidation.

Additionally, developing API endpoints dedicated specifically to cross-domain identity resolution can significantly enhance the governance, scalability, and agility of these processes. Advanced API management platforms and microservices patterns enable optimized handling of varying entities originating from disparate sources, ensuring reliable and fast identity reconciliation. Empowering your identity resolution strategy through well-designed APIs increases transparency and enables more informed business intelligence experiences, critical for sustainable growth and strategy refinement.

Addressing the Hidden Risks and Opportunities in Your Data Assets

Data fragmentation caused by inadequate cross-domain identity resolution can result in unnoticed leaks, broken processes, duplicated effort, and significant revenue loss. Recognizing the importance of entity consolidation means understanding and remedying critical inefficiencies across your data asset lifecycle. Our analytics team has found, for instance, that unseen inefficiencies within data silos can become major obstacles affecting organizational agility and decision accuracy, as discussed in our popular piece on Finding the 1% in your data that’s costing you 10% of revenue.

Ultimately, consolidating identities efficiently across platforms not only addresses individual tactical elements but also facilitates strategic growth opportunities. Together with an experienced consulting partner, such as our specialized Power BI Consulting Services, enterprises turn consolidated identities into robust analytical insights, customer-focused innovations, and superior overall market responsiveness. A methodical approach to cross-domain identity resolution empowers leaders with reliable data-driven insights tailored around unified stakeholder experiences and competitive analytics solutions.

The Bottom Line: Why Your Organization Should Invest in Cross-Domain Identity Resolution

Fundamentally, cross-domain identity resolution enables enterprises to generate clean, cohesive, integrated data models that significantly enhance analytical reporting, operational efficiency, and decision-making clarity. Investing strategically in sophisticated entity resolution processes establishes a platform for data excellence, optimizing information value and driving customer-centric innovations without friction.

Achieving authenticated and harmonized identities across multiple domains can revolutionize your organization’s analytics strategy, positioning your organization as an adaptive, insightful, and intelligent industry leader. With clearly managed and consolidated entities in hand, leaders can confidently plan data-driven strategies, mitigate risks proactively, maximize profitability, and pursue future-focused digital acceleration initiatives.

At Dev3lop, we specialize in translating these complex technical concepts into achievable solutions. Learn how cross-domain identity resolution adds clarity and strategic value to your analytics and innovation efforts—from visualization platforms to API management and beyond—for a more insightful, informed, and empowered organization.

by tyler garrett | May 30, 2025 | Data Management

In today’s rapidly evolving data environment, organizations face unprecedented complexities in maintaining compliance, ensuring security, and leveraging insights effectively. Context-aware data usage policy enforcement represents a crucial strategy to navigate these challenges, providing dynamic, intelligent policies that adapt based on real-time situations and conditions. By embedding awareness of contextual variables—such as user roles, data sensitivity, geographic locations, and operational scenarios—organizations can ensure data governance strategies remain robust yet flexible. In this blog, we explore the strategic importance of employing context-aware policies, how they revolutionize data handling practices, and insights from our extensive experience as a trusted advisor specializing in Azure Consulting Services, data analytics, and innovation.

Why Context Matters in Data Governance

Traditional static data policies have clear limitations. Often, they lack the agility organizations require to handle the dynamic nature of modern data workflows. Data has become fluid: accessible globally, increasingly diverse in type, and integral to decision-making at every level of the organization. Context-awareness infuses adaptability into policy frameworks, enabling businesses to set more nuanced, pragmatic policies. For instance, data access rules may differ depending on whether the user is internal or remote, whether they are operating in a sensitive geographical or regulatory context, or what the user’s immediate action or intent is.

Consider an analytics professional building a business dashboard. The capabilities and data accessibility needed likely vary significantly compared to a business executive reviewing sensitive metrics. Contextual nuances like the type of analytics visualization—whether users prefer traditional reporting tools or are comparing Power BI versus Tableau—can determine data security implications and governance requirements. Context-aware policies, therefore, anticipate and accommodate these varying requirements, ensuring each stakeholder receives compliant access perfectly aligned with operational roles and requirements.

Moreover, leveraging context-aware data policies is beneficial in regulatory environments such as GDPR or HIPAA. By incorporating geographic and jurisdictional contexts, policies dynamically adapt permissions, access controls, and data anonymization practices to meet regional directives precisely, significantly minimizing compliance risks.

How Context-Aware Policies Improve Data Security

Data security is far from a one-size-fits-all problem. Appropriately managing sensitive information relies upon recognizing context—determining who accesses data, how they access it, and the sensitivity of the requested data. Without precise context consideration, data access mechanisms become overly permissive or too restrictive.

Context-aware policies can automatically adjust security levels, granting or revoking data access based on factors such as user role, location, or even the network environment. A biotech employee connecting from within the secured network should face less friction accessing specific datasets compared to access requests from less-secure remote locations. Adjusting to such contexts not only enhances security but also optimizes operational efficiency—minimizing friction when not needed and increasing vigilance when required.
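The biotech example above can be sketched as a small decision function that adds friction only when the context calls for it. The roles, network labels, sensitivity levels, and the three outcomes are hypothetical values for illustration.

```python
def access_decision(role, network, sensitivity):
    """Return 'allow', 'step_up' (extra verification), or 'deny'."""
    if role not in {"scientist", "admin"}:
        return "deny"
    if network == "corporate":
        return "allow"
    # Remote requests: low-sensitivity data needs extra verification, the rest is refused.
    return "step_up" if sensitivity == "low" else "deny"


on_site = access_decision("scientist", "corporate", "high")
remote = access_decision("scientist", "remote", "high")
```

The same user gets `allow` from inside the secured network and `deny` from a remote location for the same high-sensitivity dataset.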

Moreover, managing data access well requires attention to technical implementation. For databases, context-aware enforcement involves both determining permissions and understanding the SQL queries that govern data extraction and usage. For example, knowing the differences between UNION and UNION ALL in SQL (UNION removes duplicate rows from the combined result, while UNION ALL keeps them) helps teams implement more precise contextual policies that align with business needs without sacrificing security.
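The difference matters when combining access records from two contexts. The sketch below uses the standard `sqlite3` module; the `internal_access` and `remote_access` tables and their rows are invented for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE internal_access (user_id TEXT);
    CREATE TABLE remote_access (user_id TEXT);
    INSERT INTO internal_access VALUES ('u1'), ('u2');
    INSERT INTO remote_access VALUES ('u2'), ('u3');
    """
)

# UNION removes duplicates: one row per distinct user across both contexts.
distinct_users = conn.execute(
    "SELECT user_id FROM internal_access "
    "UNION SELECT user_id FROM remote_access ORDER BY user_id"
).fetchall()

# UNION ALL keeps every row: user 'u2' appears once per context.
all_rows = conn.execute(
    "SELECT user_id FROM internal_access "
    "UNION ALL SELECT user_id FROM remote_access ORDER BY user_id"
).fetchall()
```

A policy that counts distinct users needs `UNION`, while an audit that must preserve every access event needs `UNION ALL`.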

Real-Time Adaptability Through Context-Aware Analytics

Real-time adaptability is one of the most compelling reasons organizations are shifting toward context-aware data usage policy enforcement. With data arriving from multiple sources and at increasing velocities, ensuring contextual policy adherence in real time becomes a cornerstone of effective data governance strategies. This shift towards real-time policy evaluation empowers immediate responses to shifting contexts such as market fluctuations, customer behavior anomalies, or network security incidents.

Advanced analytics and data processing paradigms, like pipeline implementations designed with context-awareness in mind, can utilize techniques like the distributed snapshot algorithm for state monitoring. Such real-time analytics support context-aware monitoring for dynamic policies, allowing companies to respond swiftly and appropriately to emerging data circumstances.

Real-time adjustment is critical in anomaly detection and threat mitigation scenarios. If a policy detects unusual data transfer patterns or suspicious user activity, contextual assessment algorithms can instantly alter data access permissions or trigger security alerts. Such adjustments help in proactively managing threats, protecting sensitive information, and minimizing damage in real time.
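A very simple version of such a contextual assessment is a threshold on transfer volume relative to a user's recent history. The mean-plus-three-standard-deviations rule, the function names, and the sample volumes are assumptions for this sketch; real systems typically use more robust detectors.

```python
from statistics import mean, stdev


def is_anomalous(history_mb, latest_mb, k=3.0):
    """Flag a transfer more than k sample standard deviations above the mean."""
    return latest_mb > mean(history_mb) + k * stdev(history_mb)


def react(permissions, user, history_mb, latest_mb):
    """Return a copy of the permissions with the user suspended if flagged."""
    updated = dict(permissions)
    if is_anomalous(history_mb, latest_mb):
        updated[user] = "suspended"
    return updated


history = [100, 120, 110, 90, 105]
after_spike = react({"u1": "read"}, "u1", history, 900)
after_normal = react({"u1": "read"}, "u1", history, 115)
```

The 900 MB transfer suspends the user's access, while the 115 MB transfer leaves the permission unchanged.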

Self-Explaining Visualization to Enhance Policy Compliance

Enforcing context-aware policies also involves adopting transparent communication approaches towards stakeholders affected by these policies. Decision-makers, business users, and IT teams must understand why specific data usage restrictions or privileges exist within their workflows. Self-explaining visualizations emerge as an effective solution, providing dynamic, embedded contextual explanations directly within data visualizations themselves. These interfaces clearly and logically explain policy-driven access restrictions or data handling operations.

Our approach at Dev3lop integrates methodologies around self-explaining visualizations with embedded context, greatly simplifying understanding and boosting user compliance with policies. When users explicitly grasp policy implications embedded within data visualizations, resistance decreases, and intuitive adherence dramatically improves. In scenarios involving sensitive data like financial analytics, healthcare metrics, or consumer behavior insights, intuitive visual explanations reassure compliance officers, regulators, and decision-makers alike.

Transparent visualization of context-aware policies also enhances audit readiness and documentation completeness. Clarity around why specific restrictions exist within certain contexts reduces confusion and proactively addresses queries, enhancing decisions and compliance.

Optimizing Data Pipelines with Contextual Policy Automation

Optimizing data pipelines is a necessary and strategic outcome of context-aware policy enforcement. Automation of such policies ensures consistency, reduces human intervention, and enables technical teams to focus on innovation instead of constant manual management of compliance standards. Implementing context-driven automation within data engineering workflows dramatically improves efficiency in handling massive data volumes and disparate data sources.

Pipelines frequently encounter operational interruptions—whether due to infrastructure limitations, network outages, or transient errors. Context-aware policy automation enables rapid system recovery by leveraging techniques like partial processing recovery to resume pipeline steps automatically, ensuring data integrity remains uncompromised. Moreover, integrating context-sensitive session windows, discussed in our guide on session window implementations for user activity analytics, further empowers accurate real-time analytics and robust pipeline operations.
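Partial processing recovery can be illustrated with a checkpoint that records completed steps, so that a rerun resumes where the failure happened. The step names, the in-memory `checkpoint` set, and the simulated outage are illustrative assumptions; a real pipeline would persist the checkpoint durably.

```python
def run_pipeline(steps, checkpoint):
    """Run steps in order, skipping any already recorded in the checkpoint."""
    executed = []
    for name, fn in steps:
        if name in checkpoint:
            continue
        fn()
        checkpoint.add(name)  # only reached if the step succeeded
        executed.append(name)
    return executed


attempts = {"load": 0}


def extract():
    pass  # placeholder for real extraction work


def load():
    attempts["load"] += 1
    if attempts["load"] == 1:
        raise ConnectionError("transient outage")


steps = [("extract", extract), ("load", load)]
checkpoint = set()
try:
    run_pipeline(steps, checkpoint)  # fails at "load" on the first attempt
except ConnectionError:
    pass
resumed = run_pipeline(steps, checkpoint)  # skips "extract", reruns "load"
```

The second run executes only `load`, because `extract` was already recorded as complete before the outage.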

A pipeline adapted to context-aware policies becomes resilient and adaptive, aligning technical accuracy, real-time performance, and policy compliance seamlessly. Ultimately, this yields a competitive edge through improved responsiveness, optimized resource utilization, and strengthened data governance capabilities.

How Organizations Can Successfully Implement Context-Aware Policies

Successful implementation requires a multi-pronged approach involving technology stack selections, stakeholder engagement, and integration with existing policies. Engaging with analytics and data consultancy experts like Dev3lop facilitates defining clear and actionable policy parameters that consider unique organizational needs, regional compliance demands, and complexities across technical and business domains.

Collaborating with professional technology advisors skilled in cloud computing platforms, such as our Azure Consulting Services, organizations can construct robust infrastructure ecosystems supporting context-awareness enforcement. Azure offers versatile tools to manage identity, access control, data governance, and innovative analytics integration seamlessly. Leveraging these technologies, organizations effectively unify analytics-driven contextual awareness with decisive governance capabilities.

Implementing a continuous monitoring and feedback loop is vital in refining context-awareness policies. Organizations must consistently evaluate real-world policy outcomes, using monitoring and automated analytics dashboards to ensure constant alignment between intended policy principles and actual utilization scenarios. Adopting an ongoing iterative process ensures policy frameworks stay adaptive, optimized, and fit-for-purpose as operational realities inevitably evolve.

Conclusion: Context-Aware Policies—Strategic Advantage in Modern Data Governance

The strategic application of context-aware data usage policy enforcement marks an evolutionary step—transitioning businesses from reactive, static policies to proactive, responsive frameworks. Context-driven policies elevate security levels, achieve greater compliance readiness, and enhance real-time data handling capabilities. Partnering with trusted technology experts, such as Dev3lop, empowers your organization to navigate complexities, leveraging advanced analytics frameworks, innovative pipeline implementations, and robust visualization methodologies—delivering an unmatched competitive edge.

by tyler garrett | May 30, 2025 | Data Management

In the modern enterprise landscape, evolving complexity in data and exploding demand for rapid intelligence mean organizations face significant challenges ensuring disciplined semantics in their analytics ecosystem. A semantic layer implementation, structured thoughtfully, acts as a centralized source of truth, clarifying business terminology across technical boundaries, and ensuring alignment across stakeholders. The power of a semantic layer is that it bridges the gap often present between technical teams focused on databases or coding routines and executive-level decision-makers looking for clear and consistent reporting. To truly harness analytics effectively, implement an intuitive semantic layer that is tailored to your unique business lexicon, promoting data integrity and efficiency across all stages. As pioneers in the field of advanced analytics consulting services, we understand that businesses thrive on clarity, consistency, and ease of information access. In this blog post, we share valuable insights into semantic layer implementation, helping decision-makers and stakeholders alike understand the essentials, benefits, and considerations critical to long-term analytics success.

Why Does Your Organization Need a Semantic Layer?

When multiple teams across an enterprise handle various datasets without standardized business terminology, discrepancies inevitably arise. These inconsistencies often lead to insights that mislead rather than inform, undermining strategic goals. By implementing a semantic layer, organizations mitigate these discrepancies by developing a unified, dimensionally structured framework that translates highly technical data models into meaningful business concepts accessible to all users. Over time, this foundational clarity supports strategic decision-making processes, complexity reduction, and improved operational efficiencies.

A well-designed semantic layer empowers businesses to speak a universal analytics language. It encourages collaboration among departments by eliminating confusion over definitions, metrics, and reporting methodologies. Furthermore, when embedded within routine operations, it serves as a vital strategic asset that streamlines onboarding of new reports, eases collaboration with remote teams, and supports self-service analytics initiatives. A robust semantic layer becomes essential as enterprises experience rapid growth or face increasing regulatory scrutiny. It ensures that terms remain consistent even as datasets expand dramatically, analytics teams scale, and organizational priorities evolve rapidly, aligning closely with best practices in data pipeline dependency resolution and scheduling.

It’s more than a tool; a semantic layer implementation represents an essential strategic advantage when facing a complex global landscape of data privacy regulations. Clearly understandable semantic structures also reinforce compliance mechanisms and allow straightforward data governance through improved accuracy, clarity, and traceability, solidifying your enterprise’s commitment to responsible and intelligent information management.

Critical Steps Toward Semantic Layer Implementation

Defining and Aligning Business Terminology

The foundational step in a semantic layer implementation revolves around precisely defining common business terms, metrics, and KPIs across departments. Gathering cross-functional stakeholders—from executive sponsors to analysts—into data working groups or workshops facilitates clearer understanding and alignment among teams. Clearly documenting each term, its origin, and the intended context ultimately limits future misunderstandings, paving the way for a harmonious organization-wide adoption.

By clearly aligning terminology at the outset, enterprises avoid mismanaged expectations and costly rework during advanced stages of analytics development and operations. Developing this standardized terminology framework also proves invaluable when dealing with idempotent processes, which demand consistency and repeatability, a topic we explore further in our blog post about idempotent data transformations. Through upfront alignment, the semantic layer evolves from simply translating data to becoming a value driver that proactively enhances efficiency and accuracy throughout your analytics pipeline.
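To make the idea of a documented, shared terminology concrete, here is a minimal Python sketch of a glossary that records each term with its definition, owner, and origin, and refuses a second, conflicting definition. The class names, fields, and the sample term are hypothetical and chosen only for illustration; a real glossary would likely live in a data catalog rather than in code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BusinessTerm:
    """One agreed-upon definition in the shared glossary (hypothetical structure)."""
    name: str
    definition: str
    owner: str   # department accountable for the definition
    source: str  # where the underlying data originates


class Glossary:
    """Registry that refuses conflicting definitions for the same term."""

    def __init__(self) -> None:
        self._terms: dict[str, BusinessTerm] = {}

    def register(self, term: BusinessTerm) -> None:
        # Term names are matched case-insensitively.
        key = term.name.strip().lower()
        existing = self._terms.get(key)
        if existing is not None and existing != term:
            raise ValueError(
                f"Conflicting definition for '{term.name}': "
                f"already owned by {existing.owner}"
            )
        self._terms[key] = term

    def lookup(self, name: str) -> BusinessTerm:
        return self._terms[name.strip().lower()]


glossary = Glossary()
glossary.register(BusinessTerm(
    name="Active Customer",
    definition="Customer with at least one paid order in the last 90 days",
    owner="Sales Operations",
    source="orders table",
))
```

If another department later tries to register "Active Customer" with a different meaning, `register` raises a `ValueError`, which surfaces the disagreement at definition time instead of in a report.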

Leveraging Advanced Technology Platforms

Identifying and utilizing a capable technology platform is paramount for effective semantic layer implementation. Modern enterprise analytics tools now provide powerful semantic modeling capabilities, including simplified methods for defining calculated fields, alias tables, joins, and relational mappings without needing extensive SQL or programming knowledge. Leaders can choose advanced semantic layer technologies within recognized analytics and data visualization platforms like Tableau, Power BI, or Looker, or evaluate standalone semantic layer capabilities provided by tools such as AtScale or Cube Dev.
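The platforms named above each have their own modeling syntax, so the following is not any vendor's API. It is a small Python sketch, using the standard library's sqlite3 module, of what a semantic model does in principle: business-friendly names for dimensions and calculated measures are mapped to SQL expressions, and a query is compiled from those names. The model dictionary, the `compile_query` helper, and the sample `orders` table are all assumptions made for illustration.

```python
import sqlite3

# Hypothetical semantic model: business-friendly names mapped to SQL expressions.
SEMANTIC_MODEL = {
    "table": "orders",
    "dimensions": {"Region": "region"},
    "measures": {
        "Total Revenue": "SUM(quantity * unit_price)",
        "Order Count": "COUNT(*)",
    },
}


def compile_query(model: dict, dimension: str, measure: str) -> str:
    """Translate business terms into a SQL statement using the model's mappings."""
    dim_sql = model["dimensions"][dimension]
    measure_sql = model["measures"][measure]
    return (
        f'SELECT {dim_sql} AS "{dimension}", {measure_sql} AS "{measure}" '
        f'FROM {model["table"]} GROUP BY {dim_sql} ORDER BY {dim_sql}'
    )


# Small in-memory dataset so the compiled query can actually run.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (region TEXT, quantity INTEGER, unit_price REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [("East", 2, 10.0), ("East", 1, 5.0), ("West", 3, 4.0)],
)

sql = compile_query(SEMANTIC_MODEL, "Region", "Total Revenue")
rows = conn.execute(sql).fetchall()
print(rows)  # [('East', 25.0), ('West', 12.0)]
```

The point of the design is that a report author asks for "Total Revenue" by "Region" and never needs to know that revenue is computed as quantity times unit price; changing that formula in one place changes it for every report.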

Depending on enterprise needs or complexities, cloud-native solutions leveraging ephemeral computing paradigms offer high scalability suited to the modern analytics environment. These solutions dynamically provision and release resources based on demand, making them ideal for handling seasonal spikes or processing-intensive queries—a subject further illuminated in our exploration of ephemeral computing for burst analytics workloads. Selecting and implementing the optimal technology platform that aligns with your organization’s specific needs ensures your semantic layer remains responsive, scalable, and sustainable well into the future.

Incorporating Governance and Data Privacy into Your Semantic Layer

Effective semantic layer implementation strengthens your organization’s data governance capabilities. By standardizing how terms are defined, managed, and accessed, organizations can embed data quality controls seamlessly within data operations, transitioning beyond traditional governance. We provide a deeper dive into this subject via our post on ambient data governance, emphasizing embedding quality control practices throughout pipeline processes from inception to consumption.

The adoption of a semantic layer also supports data privacy initiatives by building trust and transparency. Clear, standardized terminologies translate complex regulatory requirements into simpler rules and guidelines, simplifying the compliance burden. Simultaneously, standardized terms reduce ambiguity and help reinforce effective safeguards, minimizing sensitive data mishandling or compliance breaches. For industries that handle sensitive user information, such as Fintech organizations, clear semantic layers and disciplined governance directly bolster the enterprise’s capability to protect data privacy—this aligns perfectly with concepts detailed in our post on the importance of data privacy in Fintech. When your semantic layer architecture incorporates stringent governance controls from the start, it not only simplifies regulatory compliance but also strengthens customer trust and protects the organization’s reputation.

Ensuring Successful Adoption and Integration Across Teams

An effective semantic layer implementation requires more than technology; it requires organizational change management strategies and enthusiastic team adoption. Your data strategy should include targeted training sessions tailored to different user groups emphasizing semantic usability, ease of access, and self-service analytics benefits. Empowering non-technical end-users to leverage business-friendly terms and attributes dramatically enhances platform adoption rates around the enterprise and reduces pressure on your IT and analytics teams.

To encourage smooth integration and adoption, ensure ongoing feedback loops across teams. Capture analytics users’ suggestions for refinements continuously, regularly revisiting and adjusting the semantic layer to maintain alignment with changing business strategies. Additionally, user feedback might highlight potential usability improvements or technical challenges, such as service updates presenting issues—referenced more thoroughly in the resource addressing disabled services like Update Orchestrator Service. Cultivating a sense of co-ownership and responsiveness around the semantic layer fosters greater satisfaction, adoption, and value realization across teams, maintaining steadfast alignment within an evolving organization.

Building for Scalability: Maintaining Your Semantic Layer Long-Term

The modern data ecosystem continually evolves due to expanding data sources, changing analytic priorities, and new business challenges. As such, maintenance and scalability considerations remain as critical as initial implementation. Efficient semantic layer management demands continuous flexibility, scalability, and resilience through ongoing reassessments and iterative improvements.

Build governance routines into daily analytics operations to periodically review semantic clarity, consistency, and compliance. Regular documentation, schema updates, automation processes, and self-service tools can significantly simplify long-term maintenance. Organizations may also benefit from standardizing their analytics environment by aligning tools and operating systems for optimal performance, explored thoroughly in our insights on Mac vs Windows usability with JavaScript development. In essence, designing your semantic layer infrastructure with an adaptable mindset future-proofs analytics initiatives. It lets you adopt critical advances like real-time streaming analytics, machine learning, or interactive dashboards resiliently, ensuring long-term strategic advantage despite ongoing technological and organizational shifts.
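One way a periodic review routine could be automated is sketched below in Python: a function that flags terms whose definitions have not been confirmed within a chosen window. The review log, the term names, and the 180-day default are hypothetical values picked for the example, not a recommended policy.

```python
from datetime import date, timedelta

# Hypothetical review log: term name -> date its definition was last confirmed.
last_reviewed = {
    "Active Customer": date(2025, 1, 10),
    "Net Revenue": date(2024, 3, 2),
}


def stale_terms(review_log, today, max_age_days=180):
    """Return terms whose last review is older than the allowed window."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(name for name, reviewed in review_log.items() if reviewed < cutoff)


# The reference date is passed in explicitly so the check is reproducible.
overdue = stale_terms(last_reviewed, today=date(2025, 5, 30))
print(overdue)  # ['Net Revenue']
```

A scheduled job could run a check like this and open a review task for each overdue term.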

Thank you for your support, follow DEV3LOPCOM, LLC on LinkedIn and YouTube.

by tyler garrett | May 30, 2025 | Data Management

Innovative organizations today are increasingly harnessing data analytics, machine learning, and artificial intelligence to stay ahead of their competition. But unlocking these powerful insights relies critically on not only accurate data collection and intelligent data processing but also responsible data management and privacy protection. Now more than ever, progressive market leaders understand that maintaining trust through customer consent compliance and transparency leads directly to sustained business growth. This blog sheds light on consent management integration with data processing, assisting decision-makers in confidently navigating their path towards data-driven innovation, trustworthy analytics, and long-lasting customer relationships.

The Importance of Consent Management in Modern Data Strategies

In an era marked by increased awareness of data privacy, consent management has emerged as a crucial component of modern business operations. Integrating consent management into your broader data warehousing strategy is not merely about adhering to regulatory requirements; it’s about building trust with your customers and ensuring sustainable growth. When effectively deployed, consent frameworks aid organizations in clearly and transparently managing user permissions for data collection, storage, sharing, and analytics purposes. Without robust consent processes, your enterprise risks operational bottlenecks, data breaches, and ethical pitfalls.

Efficient consent management works hand-in-hand with your organization’s existing strategies. For example, when employing data warehousing consulting services, consultants will design systems that proactively factor in consent validation processes and data usage tracking. This synergy empowers businesses to maintain data accuracy, support compliance audits effortlessly, and establish clear customer interactions regarding privacy. Ultimately, embedding privacy and consent from the onset strengthens your organization’s credibility, reduces legal exposures, and significantly drives business value from analytics initiatives.

Integrating Consent Management with Data Processing Workflows

To integrate consent management effectively, businesses must view it as intrinsic to existing data processes—not simply as compliance checkmarks added after the fact. The integration process often begins with aligning consent mechanisms directly within data ingestion points, ensuring granular, purpose-specific data processing. Organizations should map each interaction point—websites, apps, forms, APIs—to associated consent activities following clear protocols.

An essential aspect of successful integration involves understanding how transactional data enters production environments, how it is brought into analytical environments, and how it feeds decision-making. Techniques like transactional data loading patterns for consistent target states provide a standardized approach to maintain data integrity throughout every consent-managed data pipeline. Data engineering teams integrate consent validation checkpoints within cloud databases, API gateways, and stream-processing frameworks, ensuring data queries only run against consent-validated datasets.
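A consent validation checkpoint can be pictured as a filter placed in front of downstream processing. The Python sketch below assumes a simple in-memory consent store keyed by user and purpose; the `Event` type, the `CONSENT` dictionary, and the purpose names are hypothetical, and a production system would query a consent service or database instead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    user_id: str
    purpose: str   # what the record would be used for, e.g. "analytics"
    payload: dict


# Hypothetical consent store: user_id -> purposes the user has agreed to.
CONSENT = {
    "u1": {"analytics", "marketing"},
    "u2": {"analytics"},
}


def consent_checkpoint(events, consent):
    """Split events into those cleared for processing and those held back."""
    allowed, rejected = [], []
    for event in events:
        # Users missing from the store are treated as having granted nothing.
        if event.purpose in consent.get(event.user_id, set()):
            allowed.append(event)
        else:
            rejected.append(event)
    return allowed, rejected


events = [
    Event("u1", "marketing", {"page": "/pricing"}),
    Event("u2", "marketing", {"page": "/pricing"}),
    Event("u3", "analytics", {"page": "/home"}),
]
allowed, rejected = consent_checkpoint(events, CONSENT)
print([e.user_id for e in allowed])   # ['u1']
print([e.user_id for e in rejected])  # ['u2', 'u3']
```

Defaulting unknown users to "no consent" is a deliberate choice in this sketch: a missing record holds data back rather than letting it through.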

Further, aligning consent management practices into your modern data stack safeguards your analytical outputs comprehensively. It ensures accumulated data resources directly reflect consumer permissions, protecting your business from unintended compliance violations. Adhering to clear standards optimizes your data stack investments, mitigates compliance-related risks, and positions your company as a responsible steward of consumer data.

Using Data Analytics to Drive Consent Management Improvements

Data-driven innovation is continually reshaping how businesses approach consent management. Advanced analytics—powered by robust data visualization tools like Tableau—can provide transformative insights into consumer behavior regarding consent preferences. By effectively visualizing and analyzing user consent data, organizations gain a detailed understanding of customer trust and trends, leading directly to customer-centric improvements in consent collection methodologies. Interested in getting Tableau set up for your analytics team? Our detailed guide on how to install Tableau Desktop streamlines the setup process for your teams.

Additionally, leveraging analytics frameworks enables compliance teams to identify potential compliance issues proactively. Powerful analytical processes such as Market Basket Analysis bring relevant combinations of consent decisions to the forefront, helping spot patterns that might indicate customer concerns or predictive compliance nuances. Combining these actionable insights with centralized consent systems helps ensure broader adoption. Analytics thus becomes instrumental in refining processes that deliver enhanced privacy communications and strategic privacy management.
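As a rough illustration of applying Market Basket Analysis to consent decisions, the Python sketch below treats each user's set of opted-in purposes as a basket and computes support and confidence for every pair of purposes. The sample baskets and the `pair_rules` helper are invented for this example; real analyses typically use a dedicated library and far more data.

```python
from collections import Counter
from itertools import combinations

# Hypothetical data: each set lists the purposes one user opted in to.
consent_baskets = [
    {"analytics", "email"},
    {"analytics", "email", "ads"},
    {"analytics"},
    {"email", "ads"},
]


def pair_rules(baskets):
    """Return (support, confidence) for every ordered pair of purposes."""
    n = len(baskets)
    item_counts = Counter(item for basket in baskets for item in basket)
    pair_counts = Counter(
        pair for basket in baskets for pair in combinations(sorted(basket), 2)
    )
    rules = {}
    for (a, b), count in pair_counts.items():
        support = count / n
        rules[(a, b)] = (support, count / item_counts[a])  # confidence of a -> b
        rules[(b, a)] = (support, count / item_counts[b])  # confidence of b -> a
    return rules


rules = pair_rules(consent_baskets)
print(rules[("ads", "email")])  # (0.5, 1.0)
```

In this toy data, every user who accepted ads also accepted email, while the reverse holds for only two of three email subscribers. Asymmetries like that are the kind of pattern a compliance or UX team might investigate.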

Leveraging SQL and Databases in Consent Management Practices

SQL remains an essential language in consent management integration, especially considering its wide use and flexibility within relational databases. Mastery of SQL not only enables accurate data alignment but is also critical in the setup of granular consent frameworks leveraged across your organization.

For example, clearly distinguishing between collection and restriction usage scenarios is crucial. Understanding the finer points of SQL, such as how two similar statements differ in the rows they return, can significantly streamline database workflows and help ensure proper data use. To clarify these distinctions in practice, consider reviewing our article on understanding UNION vs UNION ALL in SQL. This foundational knowledge gives your data operations teams confidence and precision as they manage sensitive consent-related data.
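The UNION versus UNION ALL distinction is easy to see on a small consent dataset. The sketch below uses Python's built-in sqlite3 module with two hypothetical tables, `web_consent` and `app_consent`, invented for this example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE web_consent (user_id TEXT);
    CREATE TABLE app_consent (user_id TEXT);
    INSERT INTO web_consent VALUES ('u1'), ('u2');
    INSERT INTO app_consent VALUES ('u2'), ('u3');
""")

# UNION removes duplicate rows: one row per distinct user.
distinct_users = conn.execute(
    "SELECT user_id FROM web_consent UNION SELECT user_id FROM app_consent "
    "ORDER BY user_id"
).fetchall()

# UNION ALL keeps every row: one row per recorded consent.
all_events = conn.execute(
    "SELECT user_id FROM web_consent UNION ALL SELECT user_id FROM app_consent "
    "ORDER BY user_id"
).fetchall()

print(distinct_users)  # [('u1',), ('u2',), ('u3',)]
print(all_events)      # [('u1',), ('u2',), ('u2',), ('u3',)]
```

Counting consenting users calls for UNION, while an audit trail of every consent record calls for UNION ALL. Mixing the two up would either inflate user counts or silently drop audit rows.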

More advanced roles in analytics and data science further capitalize on SQL capabilities, regularly executing audit queries and consent-specific analytics. Much like selecting a vector database for embedding-based applications, refining your database choice significantly improves how efficiently consent data is stored and retrieved, especially when considering consent datasets in big-data contexts.

Visualization Accessibility Ensuring Ethical Consent Management

While collecting robust consent data is essential, presenting data visualization clearly and accessibly is equally critical. Ethical consent management processes increasingly require that insights from consent data analytics be understandable, transparent, and universally accessible. Your ongoing commitment to visualization accessibility guidelines and their implementation plays a key role in maintaining transparency in data practices—directly illustrating to consumers how their consent choices impact data use and subsequent business decisions.

Clear, accessible visual communication amplifies transparency, fostering consumer confidence and making explicit your organization’s ethical positions around consented privacy. Well-designed dashboards fortify your organization’s analytics and consent management efforts, serving executives the insights required to steer change effectively instead of stumbling into potential compliance or data governance crises. Need a strategy reboot for revitalizing your dashboards? Learn more by reviewing our advice on fixing a failing dashboard strategy, and empower your teams with clear, purposeful communication tools.

The Strategic Advantage: Consent Management as an Innovation Enabler

Progressive business leaders align innovation, data privacy, and consent management strategies, realizing effective data reutilization that nurtures brand loyalty and innovation culture. Highly innovative organizations integrate privacy into their strategic roadmap, leveraging insights derived from effective consent management to boost customer experience and competitive business positioning.

Creating a lasting culture of data responsibility also engages your team members—engineers, analysts, and strategists—empowering cross-functional collaboration. Robust consent infrastructure enhances knowledge-sharing dynamics between data science experts and business stakeholders, improving your internal talent development pipeline. To foster more robust interpersonal development within your organization’s scholar-practitioner analytics culture, review our helpful insights on networking with data science professionals.

Ultimately, mature consent management strategies enable your enterprise to seamlessly introduce innovative technologies to your customers without encountering privacy backlash or compliance issues. Align your governance strategy in conjunction with analytics and consent management infrastructures to turn regulatory requirements into innovations and opportunities consistently.

Conclusion

Integrated consent management isn’t simply about compliance or avoiding fines. It is a strategic enabler that fosters trust, ensures ethical data usage, and strengthens competitive advantage. Business leaders who fully leverage analytics and strategic data management build sustainable growth, fuel innovation, and navigate complexities with confidence. Investing deliberately in consent management integration helps unlock powerful insights while ensuring integrity, compliance, and responsible operations across your data landscape.
