by tyler garrett | Jun 12, 2025 | Data Processing

Imagine being able to shave substantial processing time and significantly boost performance simply by mastering serialization techniques. In an environment where analytics, big data, and intelligent data processing are foundational to competitive advantage, optimized serialization methods often remain overlooked—but they could be the key differentiator your strategy needs. Serialization converts structured data from complicated objects into streamlined formats, ready to travel across the network or be stored efficiently. Although many decision-makers tend to trust default configurations and standard formats, custom serialization approaches can unlock massive gains in application speed, performance, and scalability. Today, we’ll guide you through the innovative tactics we use to elevate data workflows, giving you the tremendous speed advantage you’ve been seeking.

Understanding the Significance of Serialization in Modern Systems

In today’s rapidly evolving technological ecosystem, business leaders are no strangers to massive volumes of data and the urgency of extracting actionable insights quickly. Data serialization sits at the crossroads between storage efficiency, network optimization, and rapid data processing—facilitating timely and dependable decision making. As modern applications and artificial intelligence advance, the seamless transmission and storage of enormous, complex structured data are mandatory rather than optional. Yet many default serialization techniques leave substantial performance gains unrealized, offering only generic efficiency. Recognizing the importance of serialization pushes you toward innovative solutions and aligns performance optimization strategies with your larger technological vision.

Serialization directly influences how quickly data can move through your ETL (Extract-Transform-Load) pipelines. Modern platforms often demand powerful extraction, transformation, and loading methodologies to address data bottlenecks effectively. Custom serialization tricks integrate seamlessly with services like Dev3lop’s advanced ETL consulting solutions, creating opportunities to maximize throughput and transactional speed while minimizing storage costs. Effective serialization also increases clarity and consistency in your data schemas, dovetailing nicely with Dev3lop’s approach to implementing performant and reliable versioning explained in their insightful piece on semantic versioning for data schemas and APIs.

Choosing the Optimal Serialization Format

Serialization presents many format options, such as JSON, XML, Avro, Protocol Buffers, and FlatBuffers. Each format has distinct advantages, trade-offs, and scenarios it fits best. JSON, popular for readability and simplicity, can cause unnecessary slowness and increased storage costs due to its verbose nature. XML, an entirely adequate legacy format, tends to introduce unnecessary complexity and reduced parsing speeds compared to binary formats. Smart companies often move beyond these common formats and use binary serialization approaches like Apache Avro, Protobuf, or FlatBuffers to achieve faster serialization and deserialization and smaller payloads, with the size of the gain depending on the shape of the data and the language runtime.

Apache Avro shines for schema evolution, making it an excellent choice when your schemas change frequently, similar to the practices recommended for schema management and evolution outlined in Dev3lop’s in-depth guide to SCD implementation in data systems. Protocol Buffers, designed by Google, offer incredible encoding speed, minimal bandwidth usage, and schema version management that facilitates disciplined, well-defined messaging within production environments. FlatBuffers, another Google innovation, offers extreme speed by allowing direct access to serialized data without parsing overhead—particularly optimal for real-time analytics and data-heavy use cases.
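To make the verbosity trade-off concrete, here is a minimal sketch using only the Python standard library. It is not Avro, Protobuf, or FlatBuffers; it simply compares a JSON encoding with a fixed-width binary layout for one record. The record fields (`sensor_id`, `timestamp`, `reading`) and the `<Iqd` layout are assumptions made up for illustration.

```python
import json
import struct

# Hypothetical record, invented for this example.
record = {"sensor_id": 1042, "timestamp": 1749686400, "reading": 21.5}

# Text encoding: field names travel with every record.
json_bytes = json.dumps(record).encode("utf-8")

# Binary encoding: "<" = little-endian without padding,
# I = uint32, q = int64, d = float64 (4 + 8 + 8 = 20 bytes).
LAYOUT = struct.Struct("<Iqd")
binary_bytes = LAYOUT.pack(
    record["sensor_id"], record["timestamp"], record["reading"]
)

# The binary form only works if reader and writer agree on the layout,
# which is the job a schema does in Avro or Protobuf.
decoded = LAYOUT.unpack(binary_bytes)

print(len(json_bytes), "bytes as JSON;", len(binary_bytes), "bytes as binary")
```

The saving comes from moving field names and types out of the payload and into a shared schema, which is also why schema management matters so much for binary formats.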

Implementing Zero-Copy Serialization Techniques

When speed is the overriding requirement, zero-copy serialization tactics reduce the expensive overhead of data duplication in your serialization pipeline. Traditional approaches typically copy data between buffers before sending information to the destination system or handing it to the consumer’s parser. Zero-copy serialization bypasses this unnecessary buffer copying, reducing latency and improving throughput. This optimized approach allows for rapid direct reads and significantly accelerates complex analytical data processes.

Zero-copy serialization benefits extend well beyond faster streaming performance: they translate into significantly lower memory usage and enhanced system scalability. For instance, zero-copy access through FlatBuffers removes unnecessary temporary data structures, significantly boosting workloads involving huge real-time data streams such as financial tick data analytics, IoT telemetry, and real-time recommendation engines. Such high-performance requirements resonate well with Dev3lop’s disciplined data services targeting high-throughput analytics scenarios.
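The idea can be sketched without FlatBuffers itself. The example below uses Python's `memoryview` and `struct.unpack_from` to read one field straight out of a shared buffer, without slicing out a copy of the record first. The 16-byte tick layout (`int64` timestamp, `float64` price) and the helper names are assumptions for illustration, not a FlatBuffers API.

```python
import struct

# Hypothetical fixed-width tick record: int64 timestamp, float64 price.
TICK = struct.Struct("<qd")

def build_buffer(ticks):
    """Pack all ticks into one contiguous, preallocated buffer."""
    buf = bytearray(TICK.size * len(ticks))
    for i, (ts, price) in enumerate(ticks):
        TICK.pack_into(buf, i * TICK.size, ts, price)
    return buf

def read_price(view, index):
    """Read one price in place; unpack_from takes an offset, so no slice copy is made."""
    return TICK.unpack_from(view, index * TICK.size)[1]

ticks = [(1, 100.5), (2, 101.25), (3, 99.75)]
buf = build_buffer(ticks)
view = memoryview(buf)

# Slicing a memoryview yields another view over the same memory, not a copy.
last_two = view[TICK.size:]

print(read_price(view, 1), read_price(last_two, 0))
```

Slicing a `bytes` object would allocate a new object for every record; the view-based version touches only the bytes it needs, which is the property zero-copy formats are built around.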

Optimizing Serialization Through Custom Encoding Schemes

The default encoding strategies that come standard with traditional serialization libraries are handy but not always optimal. Customized encoding schemes implemented for your specific format and data types can provide substantial boosts in serialization performance. For instance, numeric compression techniques, such as varint encoding or delta encoding, can significantly reduce the byte-level representation of integer values, decreasing storage requirements and execution times. By carefully assessing and adopting custom encoding strategies, you enable dramatic reductions in serialization size, with direct downstream benefits for network bandwidth and storage expenses.
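A minimal sketch of how the two techniques combine, assuming a sorted list of non-negative integers such as epoch timestamps. The varint here is the unsigned base-128 scheme (7 payload bits per byte, high bit set when more bytes follow); the function names and sample values are illustrative.

```python
def encode_varint(n: int) -> bytes:
    """Unsigned base-128 varint: 7 data bits per byte, high bit = 'more follows'."""
    if n < 0:
        raise ValueError("this sketch handles non-negative integers only")
    out = bytearray()
    while True:
        byte = n & 0x7F
        n >>= 7
        if n:
            out.append(byte | 0x80)
        else:
            out.append(byte)
            return bytes(out)

def decode_varints(data: bytes) -> list[int]:
    """Decode a concatenated run of varints."""
    values, current, shift = [], 0, 0
    for byte in data:
        current |= (byte & 0x7F) << shift
        if byte & 0x80:
            shift += 7
        else:
            values.append(current)
            current, shift = 0, 0
    return values

def delta_encode(values):
    """Store each value as the difference from its predecessor."""
    previous, deltas = 0, []
    for v in values:
        deltas.append(v - previous)
        previous = v
    return deltas

def delta_decode(deltas):
    total, values = 0, []
    for d in deltas:
        total += d
        values.append(total)
    return values

timestamps = [1749686400, 1749686401, 1749686403, 1749686410]
encoded = b"".join(encode_varint(d) for d in delta_encode(timestamps))
restored = delta_decode(decode_varints(encoded))
print(len(encoded), "bytes, versus", 8 * len(timestamps), "as fixed 64-bit integers")
```

Delta encoding turns large, slowly changing values into small ones, and varints then store those small values in a single byte each. Unsorted or negative data would need a signed scheme such as zigzag encoding, which this sketch leaves out.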

Beyond numeric encodings, custom string encoding, including advanced dictionary encoding or specific prefix compression methods, further reduces payload size for large textual datasets. Strategically employing structured dictionary encoding positively impacts both speed and bandwidth allocation, essential when working with massive complex regulatory or industry-specific datasets requiring regular transmission over network channels. Such performance gains pair well with thoughtful, high-performing analytics dashboards and reporting standards, like those recommended in Dev3lop’s article on custom legend design for visual encodings.
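Dictionary encoding can be sketched in a few lines. Each distinct string is stored once, and the column itself becomes a list of small integer codes. The function names and sample values below are assumptions for illustration; production formats pair this with bit-packing or run-length encoding of the codes.

```python
def dictionary_encode(values):
    """Return (dictionary, codes): unique values in first-seen order plus one code per row."""
    dictionary, index, codes = [], {}, []
    for value in values:
        if value not in index:
            index[value] = len(dictionary)
            dictionary.append(value)
        codes.append(index[value])
    return dictionary, codes

def dictionary_decode(dictionary, codes):
    return [dictionary[code] for code in codes]

# Hypothetical low-cardinality column.
jurisdictions = ["US-TX", "DE-BY", "US-TX", "US-TX", "DE-BY", "AU-NSW"]
dictionary, codes = dictionary_encode(jurisdictions)
print(dictionary, codes)
```

The payoff grows with repetition: a column with millions of rows and a few hundred distinct values shrinks to the dictionary plus one small integer per row.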

Combining Serialization Tricks with Strategic Data Purging

Sometimes, the key to ridiculous data speed isn’t just faster serialization—it also involves strategizing what you keep and what you discard. Combining custom serialization tricks with strategic elimination of obsolete data can elevate your analytical speed even further. A robust serialization protocol becomes profoundly more powerful when you’re focused just on relevant, active data rather than sifting through outdated and irrelevant “zombie” records. Addressing and eliminating such “zombie data” effectively reduces pipeline overhead, data storage, and wasted computational resources, as explored in detail in Dev3lop’s insightful piece on identifying and purging obsolete data.

By integrating tools and processes that also conduct regular data hygiene at serialization time, your analytics capabilities become clearer, faster, and more accurate. Applications requiring instantaneous decision-making from large amounts of streaming or stored data achieve significant latency reductions. Likewise, enabling teams with realistic and relevant datasets drastically improves accuracy and efficiency—helping decision-makers understand the necessity of maintaining clean data warehouses and optimized data pipelines.

Measuring the Benefits: Analytics and ROI of Custom Serialization

Custom serialization pays off in tangible analytics performance and measurable ROI. Faster serialization translates directly into shorter pipeline execution times and lower operating expenses. Analytical applications retuned for custom serialization often observe measurable latency reductions, improving strategic decision-making capacity across the enterprise. Once implemented, the business impact is measured not only in direct speed improvements but also in enhanced decision reaction speed, reduction in cloud-storage bills, improved user satisfaction via quicker dashboard report load times, and more transparent schema versioning.

Benchmarking serialization performance is crucial to proving ROI in strategic IT initiatives. By integrating serialization performance metrics into your larger analytics performance metrics, technical stakeholders align closely with business stakeholders—demonstrating in measurable terms the cost-savings and competitive value of custom serialization approaches. This disciplined measurement mirrors excellent practices in analytics strategy: data-driven decision-making rooted in quantitative measures and clear analytics visualization standards, as emphasized by Dev3lop’s inclusive approach to designing accessible visualization systems, and outlined through transparent insights in their informed overview of cost structures seen in Tableau’s pricing strategies.
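As a starting point, a benchmark can be as small as the sketch below, which measures payload size and mean encode and decode time for two standard-library serializers. The payload, the run count, and the choice of `json` and `pickle` are assumptions for illustration; a real benchmark should use your own data and the formats you are actually evaluating.

```python
import json
import pickle
import timeit

# Hypothetical payload: 1,000 small records.
payload = [{"id": i, "value": i * 0.5} for i in range(1000)]

def benchmark(name, dumps, loads, runs=20):
    """Return size plus mean encode/decode seconds for one serializer."""
    blob = dumps(payload)
    if loads(blob) != payload:
        raise ValueError(f"{name} did not round-trip the payload")
    encode_s = timeit.timeit(lambda: dumps(payload), number=runs) / runs
    decode_s = timeit.timeit(lambda: loads(blob), number=runs) / runs
    return {"format": name, "bytes": len(blob),
            "encode_s": encode_s, "decode_s": decode_s}

results = [
    benchmark("json", lambda obj: json.dumps(obj).encode("utf-8"), json.loads),
    benchmark("pickle", pickle.dumps, pickle.loads),
]
for row in results:
    print(row)
```

Checking the round trip before timing anything guards against benchmarking a serializer that silently drops or alters data. Timings vary by machine, so the numbers are only meaningful when compared within one run.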

Serialization — the Unsung Hero of Data Performance

As organizations grapple with ever-increasing data volume and complexity, custom serialization techniques can elevate data processing speed from routine to groundbreaking. Through optimal format selection, zero-copy techniques, custom encoding strategies, data hygiene, and rigorous performance measurement, you can transform serialization from a mundane concern into a competitive advantage. As specialists skilled in navigating complex data and analytics environments, we encourage experimentation, precise measurement, and strategic partnership to achieve unprecedented levels of speed and efficiency in your data workflows.

When deployed strategically, serialization not only boosts performance—it directly unlocks better-informed decisions, lower operational costs, faster analytics workflows, and higher overall productivity. Embrace the hidden potential buried in serialization techniques, and position your analytics initiatives ahead of competitors—because when performance matters, serialization makes all the difference.

Tags: Serialization, Data Optimization, Performance Tuning, ETL pipelines, Data Engineering, Analytics Strategy

by tyler garrett | Jun 12, 2025 | Data Processing

In an age where precise geospatial data can unlock exponential value—sharpening analytics, streamlining logistics, and forming the backbone of innovative digital solutions—precision loss in coordinate systems may seem small but can lead to large-scale inaccuracies and poor business outcomes. As organizations increasingly depend on location-based insights for everything from customer analytics to operational planning, understanding and mitigating precision loss becomes paramount. At Dev3lop’s advanced Tableau consulting, we’ve seen firsthand how slight inaccuracies can ripple into meaningful flaws in analytics and strategic decision-making processes. Let’s dive deeper into why coordinate precision matters, how it commonly deteriorates, and explore solutions to keep your data strategies precise and trustworthy.

Geolocation Data – More Complex Than Meets the Eye

At first glance, geolocation data seems straightforward: longitude, latitude, mapped points, and visualized results. However, the complexities hidden beneath the seemingly simple surface frequently go unnoticed—often by even advanced technical teams. Geospatial coordinates operate within an array of coordinate systems, datums, and representations, each bringing unique rules, intricacies, and potential pitfalls. Latitude and longitude points defined in one datum might temporarily serve your business intelligence strategies but subsequently cause inconsistencies when integrated with data from a different coordinate system. Such inaccuracies, if left unchecked, have the potential to mislead your analytics and result in unreliable insights—turning what seems like minor precision loss into major strategic setbacks.

Moreover, in the transition from manual spreadsheet tasks to sophisticated data warehousing solutions, businesses begin relying more heavily on exact geospatial positions to provide accurate analyses. Precise customer segmentation or efficient supply chain logistics hinge deeply on the reliability of location data, which organizations often assume to be consistent on any platform. Unfortunately, subtle inaccuracies created during the process of transforming or migrating coordinate data across multiple systems can quickly accumulate—leading to broader inaccuracies if not managed proactively from the outset.

Understanding Precision Loss and its Business Implications

Precision loss in geolocation workflows generally arises from the way coordinate data is processed, stored, and translated between systems. Floating-point arithmetic, for example, is susceptible to rounding errors, a common issue software engineers and data analysts face daily. Small variances matter: a change in the fourth decimal place of a latitude value corresponds to roughly 11 meters on the ground, which can significantly affect real-world accuracy in industries where spatial precision is critical. Consider logistics companies whose planning hinges on accurate route mappings: even minor discrepancies may cause unnecessary disruptions, delayed deliveries, or costly rerouting.

Precision loss also carries strategic and analytical implications. Imagine an enterprise relying on geospatial analytics for customer segmentation and market targeting strategies. Small inaccuracies multiplied across thousands of geolocation points can drastically affect targeted advertising campaigns and sales forecasting. As explained further in our article on segmenting your customer data effectively, the highest-performing analytics depend on alignment and accuracy of underlying information such as geospatial coordinates.

At Dev3lop, a company focused on Business Intelligence and innovation, we’ve witnessed precision errors that cause dashboard failures, which ultimately demand comprehensive revisits to strategic planning. Investing in proper validation methods and a robust data quality strategy early prevents costly adjustments later on.

Key Causes of Accuracy Loss in Geospatial Coordinate Systems

Floating-Point Arithmetic Constraints

The common practice of storing geospatial coordinates in floating-point format introduces rounding errors and inaccuracies in precision, especially noticeable when dealing with large geospatial datasets. Floating-point arithmetic inherently carries approximation due to how numbers are stored digitally, resulting in a cumulative precision loss as data is aggregated, processed, or migrated between systems. While this might feel insignificant initially, the accumulation of even tiny deviations at scale can yield drastically unreliable analytics.
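The size of the effect is easy to measure. The sketch below round-trips a latitude through 32-bit and 64-bit floats using the standard `struct` module and converts the difference to an approximate ground distance. The sample coordinate is hypothetical, and 111,320 meters per degree of latitude is a rough average rather than a geodetic constant.

```python
import struct

METERS_PER_DEGREE_LAT = 111_320  # rough average; an approximation

def through_float32(value: float) -> float:
    """Simulate storing a coordinate in a 32-bit float column."""
    return struct.unpack("<f", struct.pack("<f", value))[0]

def through_float64(value: float) -> float:
    """Simulate storing a coordinate in a 64-bit float column."""
    return struct.unpack("<d", struct.pack("<d", value))[0]

latitude = 30.267153  # hypothetical point
error_deg = abs(through_float32(latitude) - latitude)
error_m = error_deg * METERS_PER_DEGREE_LAT

print(f"float32 error: {error_deg:.2e} degrees, about {error_m:.3f} m")
print("float64 preserved the value:", through_float64(latitude) == latitude)
```

For values between 16 and 32 degrees, a 32-bit float can be off by up to about 0.1 m in a single store; for longitudes with magnitudes between 64 and 128 degrees, the worst case is roughly 0.4 m. That is small for one point, but it compounds when coordinates pass through repeated conversions and arithmetic.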

Misalignment Due to Multiple Coordinate and Projection Systems

Organizations often source data from diverse providers, and each data supplier may rely upon different coordinate reference systems (CRS) and projections. Transitioning data points from one CRS to another, such as WGS84 to NAD83 or vice versa, may create subtle positional shifts. Without careful attention or rigorous documentation, these small differences spiral into erroneous decisions downstream. As detailed in our guide on handling late-arriving and temporal data, data integrity is paramount for strategic reliability in analytics.

Data Storage and Transmission Limitations

Data infrastructure also impacts geolocation accuracy, especially noteworthy in large-scale enterprise implementations. Issues like storing coordinates as lower precision numeric types or inaccurately rounded data during database migration workflows directly lead to diminished accuracy. Properly architecting data pipelines ensures precision retention, preventing data quality issues before they occur.
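To judge whether a column definition or migration step is safe, it helps to translate decimal places into ground distance. This sketch rounds a coordinate with `decimal.Decimal` and reports the worst-case rounding error in meters. The helper names are hypothetical, and the conversion reuses the rough figure of 111,320 meters per degree of latitude.

```python
from decimal import Decimal, ROUND_HALF_EVEN

METERS_PER_DEGREE_LAT = 111_320  # rough average; an approximation

def round_coordinate(value: str, places: int) -> Decimal:
    """Round a coordinate given as text to a fixed number of decimal places."""
    quantum = Decimal(1).scaleb(-places)  # places=4 gives 0.0001
    return Decimal(value).quantize(quantum, rounding=ROUND_HALF_EVEN)

def worst_case_error_m(places: int) -> float:
    """Half of the last retained digit, expressed as north-south meters."""
    return 0.5 * 10 ** -places * METERS_PER_DEGREE_LAT

print(round_coordinate("30.267153", 4))
for places in (3, 4, 5, 6):
    print(places, "decimal places: up to", round(worst_case_error_m(places), 3), "m")
```

Keeping coordinates as decimal text or a fixed-precision numeric type until the last possible step avoids adding binary floating-point rounding on top of the rounding you chose deliberately.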

Mitigating Precision Loss for Greater Business Outcomes

Businesses seeking competitive advantage today leverage analytics and strategic insights fueled by accurate geolocation data. Legacy approaches or weak validation methods put precision at risk, but precision can be proactively protected.

One effective mitigation strategy involves implementing rigorous data quality assessments and validations. Organizations can employ precise automated validation rules or even build specialized automation tooling integrated within their broader privacy and data governance protocols. Collaborating with experts such as Dev3lop, who’ve established comprehensive frameworks such as our privacy impact assessment automation framework, can further help identify and remediate geospatial inaccuracies swiftly.

Additionally, organizations can transition from traditional input/output methods to more precise or optimized data processing techniques—such as leveraging memory-mapped files and other efficient I/O solutions. As clearly outlined in our technical comparisons between memory-mapped files and traditional I/O methods, choosing the right storage and processing approaches can help businesses keep geolocation precision intact.

Building Precision into Geolocation Strategies and Dashboards

Maintaining accuracy in geolocation workloads requires a thoughtful and strategic approach from the outset, with significant implications for analytical outcomes—including your dashboards and visualizations. As Dev3lop covered in depth in our article on fixing failing dashboard strategies, geolocation data’s accuracy directly influences business intelligence outputs. Ensuring the precision and reliability of underlying geospatial data improves your analytics quality, increasing trust in your digital dashboards and ultimately enhancing your decision-making.

Achieving geolocation accuracy begins by finding and acknowledging potential points of precision degradation and actively managing those areas. Collaborate with experts from advanced Tableau consulting services like ours—where we identify weak points within analytical workflows, build robust validation steps, and architect solutions designed to preserve coordinate accuracy at each stage.

Finally, regularly scrutinize and reprioritize your analytics projects accordingly—particularly under budget constraints. Learn more in our resource on prioritizing analytics projects effectively, emphasizing that precision-driven analytics improvements can yield significant gains for organizations invested in leveraging location insights precisely and effectively.

Navigating Precision Loss Strategically

Ultimately, organizations investing in the collection, analysis, and operationalization of geospatial data cannot afford complacency with regard to coordinate precision loss. Today’s geolocation analytical frameworks serve as a strategic cornerstone, providing insights that shape customer experiences, operational efficiencies, and innovation capabilities. Decision-makers must account for precision loss strategically: investing in proactive measures, recognizing potential pitfalls, and addressing them ahead of time. Your customers’ experiences, analytical insights, and organizational success depend on it.

Partnering with experienced consultants like Dev3lop, leaders in data-driven transformation, can alleviate the challenges associated with geolocation precision loss and reap considerable rewards. Together we’ll ensure your data strategies are precise enough not just for today, but durable and trustworthy for tomorrow.

by tyler garrett | Jun 12, 2025 | Data Processing

In the digital age, data is the lifeblood flowing through the veins of every forward-thinking organization. But just like the power plant supplying your city’s electricity, not every asset needs to be available instantly at peak performance. Using temperature tiers to classify your data assets into hot, warm, and cold storage helps businesses strike the right balance between performance and cost-effectiveness. Imagine a data strategy that maximizes efficiency by aligning storage analytics, data warehousing, and infrastructure costs with actual usage. It’s time to dive into the strategic data temperature framework, where a smart approach ensures performance, scalability, and your organization’s continued innovation.

What Are Data Temperature Tiers, and Why Do They Matter?

The concept of data temperature addresses how frequently and urgently your business accesses certain information. Categorizing data into hot, warm, and cold tiers helps prioritize your resources strategically. Think of hot data as the data you need at your fingertips—real-time actions, analytics dashboards, operational decision-making data streams, and frequently accessed customer insights. Warm data includes information you’ll regularly reference but not continuously—think monthly sales reports or quarterly performance analyses. Cold data applies to the archives, backups, and regulatory files that see infrequent access yet remain critical.

Understanding the nuances and characteristics of each temperature tier can significantly reduce your organization’s data warehousing costs and improve analytical performance. Adopting the right storage tier methodologies ensures rapid insights when you require immediacy, along with scalable economy for less frequently accessed but still valuable data. Charting a smart data tiering strategy supports the dynamic alignment of IT and business initiatives, laying the foundation to drive business growth through advanced analytics and strategic insights.
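One simple way to operationalize the three tiers is to classify each dataset by how recently it was accessed. The sketch below does that with two cut-offs. The 7-day and 90-day windows and the function name are assumptions chosen for illustration; real thresholds should come from your own access patterns and retrieval-time requirements.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical thresholds; tune them to observed access patterns.
HOT_WINDOW = timedelta(days=7)
WARM_WINDOW = timedelta(days=90)

def classify_tier(last_accessed: datetime, now: datetime) -> str:
    """Assign a storage tier from the time elapsed since the last access."""
    age = now - last_accessed
    if age <= HOT_WINDOW:
        return "hot"
    if age <= WARM_WINDOW:
        return "warm"
    return "cold"

now = datetime(2025, 6, 12, tzinfo=timezone.utc)
datasets = {
    "live_orders": now - timedelta(hours=2),
    "q1_sales_report": now - timedelta(days=45),
    "audit_logs_2019": now - timedelta(days=2000),
}
tiers = {name: classify_tier(ts, now) for name, ts in datasets.items()}
print(tiers)
```

Recency alone is a blunt signal. Access frequency, regulatory retention rules, and acceptable retrieval latency are natural additional inputs to the same decision.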

Navigating Hot Storage: Fast, Responsive, and Business-Critical

Characteristics and Use Cases for Hot Data Storage

Hot storage is built around the idea of instant access—it’s real-time sensitive, responsive, and always reliable. It typically involves the data you need instantly at hand, such as real-time transaction processing, live dashboards, or operational fleet monitoring systems. Leading systems like in-memory databases or solid-state drive (SSD)-powered storage solutions fit this category. Hot storage should be prioritized for datasets crucial to your immediate decision-making and operational procedures—performance here is paramount.

Key Considerations When Implementing Hot Data Tier

When developing a hot storage strategy, consider the immediacy and cost relationship carefully. High-performance solutions are relatively more expensive, thus requiring strategic allocation. Ask yourself these questions: Does this dataset need instant retrieval? Do I have customer-facing analytics platforms benefitting directly from instant data access? Properly structured hot-tier data empowers stakeholders to make split-second informed decisions, minimizing latency and improving the end-user experience. For instance, effectively categorized hot storage drives measurable success in tasks like mastering demand forecasting through predictive analytics, significantly boosting supply chain efficiency.

The Warm Tier: Finding the Sweet Spot Between Performance and Cost

Identifying Warm Data and Its Ideal Storage Scenarios

Warm storage serves data accessed regularly, just not immediately or constantly. This often covers reports, historical financials, seasonal analytics, and medium-priority workloads. Organizations frequently leverage cloud-based object storage solutions, data lakes, and cost-efficient network-attached storage (NAS)-style solutions for the warm tier. Such data assets do require reasonable responsiveness and accessibility, yet aren’t mission-critical on a second-to-second basis. A tailored warm storage strategy provides accessible information without unnecessarily inflating costs.

Implementing Effective Warm Data Management Practices

Effective organization and strategic placement of warm data within your data lake or data fabric can boost analytical agility and responsiveness when tapping into past trends and reports. Employing data fabric visualization strategies enables intuitive stitching of hybrid workloads, making it effortless for stakeholders to derive insights efficiently. The warm data tier is ideal for analytics platforms performing periodic assessments rather than real-time analyses. By properly managing this tier, organizations can significantly decrease storage expenditure without sacrificing essential responsiveness—leading directly toward optimized business agility and balanced cost-performance alignment.

Entering the Cold Data Frontier: Long-Term Archiving and Reliability

The Importance of Cold Data for Regulatory and Historical Purposes

Cold storage comprises data that you rarely access but must retain for regulatory compliance, historical analysis, backup recovery, or legacy system migration. Relevant examples include compliance archives, historical financial records, infrequent audit trails, and logs no longer frequently reviewed. Solutions for this tier range from lower-cost cloud archive storage to offline tape solutions offering maximum economy. Strategically placing historical information in cold storage significantly reduces unnecessary costs, allowing funds to be shifted toward higher-performing platforms.

Successful Strategies for Managing Cold Storage

Effectively managing cold storage involves clearly defining retention policies, backup protocols, and data lifecycle practices such as backfill strategies for historical data processing. Automation here is key—leveraging metadata and tagging makes cold data discoverable and streamlined for infrequent retrieval tasks. Consider adopting metadata-driven access control implementations to manage data securely within cold tiers, ensuring regulatory compliance and sustained data governance excellence. Smart cold-tier management doesn’t just protect historical data; it builds a robust analytical foundation for long-term operational efficiency.

Integrating Temperature Tiers into a Cohesive Data Strategy

Constructing an Adaptive Analytics Infrastructure

Your organization’s success hinges upon leveraging data strategically—and temperature tiering provides this capability. Smart organizations go beyond merely assigning data into storage buckets—they actively integrate hot, warm, and cold categories into a unified data warehousing strategy. With careful integration, these tiers support seamless transitions across analytics platforms, offering intuitive scalability and improved reliability. For example, quick-loading hot data optimizes interactive analytics dashboards using tools like Tableau Desktop. You can easily learn more about installing this essential tool effectively in our guide on installing Tableau Desktop.

Optimizing Total Cost of Ownership (TCO) with Tiered Strategy

An intelligent combination of tiered storage minimizes overall spend while maintaining outstanding analytics capabilities. Deciding intelligently regarding data storage temperatures inherently optimizes the Total Cost of Ownership (TCO). Holistic tiered data integration enhances organizational agility and drives strategic financial impact—direct benefits include optimized resource allocation, improved IT efficiency, and accelerated innovation speed. Our team at Dev3lop specializes in providing tailored data warehousing consulting services, positioning our clients ahead of the curve by successfully adopting temperature-tiered data strategies.

Begin Your Journey with Expert Insights and Strategic Support

Choosing the optimal data storage temperature tier demands strategic foresight, smart technical architecture, and a custom-tailored understanding to maximize business value. Whether you are performing real-time analytics, seasonal performance reviews, or working toward comprehensive regulatory compliance, precise data tiering transforms inefficiencies into innovation breakthroughs. Our expert technical strategists at Dev3lop offer specialized hourly consulting support to help your team navigate storage decisions and implementation seamlessly. Make the most of your infrastructure budget and explore opportunities for strategic efficiency. Learn about right-sizing analytics, platform optimization, and more in our blog post “10 Effective Strategies to Boost Sales and Drive Revenue Growth”.

Your journey toward strategic hot, warm, and cold data management begins today—let’s innovate and accelerate together.

by tyler garrett | Jun 9, 2025 | Data Processing

Imagine you’re an analytics manager reviewing dashboards in London, your engineering team is debugging SQL statements in Austin, and a client stakeholder is analyzing reports from a Sydney office. Everything looks great until you suddenly realize numbers aren’t lining up—reports seem out of sync, alerts are triggering for no apparent reason, and stakeholders start flooding your inbox. Welcome to the subtle, often overlooked, but critically important world of time zone handling within global data processing pipelines. Time-related inconsistencies have caused confusion, errors, and countless hours spent chasing bugs for possibly every global digital business. In this guide, we’re going to dive deep into the nuances of managing time zones effectively—so you can avoid common pitfalls, keep your data pipelines robust, and deliver trustworthy insights across global teams, without any sleepless nights.

The Importance of Precise Time Zone Management

Modern companies rarely function within a single time zone. Their people, customers, and digital footprints exist on a global scale. This international presence means data collected from different geographic areas will naturally have timestamps reflecting their local time zones. However, without proper standardization, even a minor oversight can lead to severe misinterpretations, inefficient decision making, and operational hurdles.

At its core, handling multiple time zones accurately is no trivial challenge—one need only remember the headaches that accompany daylight saving shifts or determining correct historical timestamp data. Data processing applications, streaming platforms, and analytics services must take special care to record timestamps unambiguously, ideally using coordinated universal time (UTC).

Consider how important precisely timed data is when implementing advanced analytics models, like the fuzzy matching algorithms for entity resolution that help identify duplicate customer records from geographically distinct databases. Misalignment between datasets can result in inaccurate entity recognition, risking incorrect reporting or strategic miscalculations.

Proper time zone handling is particularly critical in event-driven systems or related workflows requiring precise sequencing for analytics operations, such as guaranteeing accuracy in solutions employing exactly-once event processing mechanisms. To drill deeper, explore our recent insights on exactly-once processing guarantees in stream processing systems.

Common Mistakes to Avoid with Time Zones

One significant error we see repeatedly during our experience offering data analytics strategy and MySQL consulting services at Dev3lop is reliance on local system timestamps without specifying the associated time zone explicitly. This common practice assumes implicit knowledge and leads to ambiguity. In most database and application frameworks, timestamps without time zone context eventually cause headaches.
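The ambiguity is easy to demonstrate. In this sketch, the same timestamp without a zone is interpreted in three office locations and lands on three different instants. It uses the standard `zoneinfo` module (Python 3.9+, which needs a time zone database on the system or the `tzdata` package); the timestamp and zone choices are illustrative.

```python
from datetime import datetime, timezone
from zoneinfo import ZoneInfo

# A timestamp as it might appear in a log with no zone information.
naive = datetime(2025, 6, 9, 9, 0)

interpretations = {}
for zone in ("Europe/London", "America/Chicago", "Australia/Sydney"):
    # Attach a zone, then express the same instant in UTC.
    as_utc = naive.replace(tzinfo=ZoneInfo(zone)).astimezone(timezone.utc)
    interpretations[zone] = as_utc
    print(f"{zone:18} -> {as_utc.isoformat()}")

spread = max(interpretations.values()) - min(interpretations.values())
print("spread between interpretations:", spread)
```

Nothing in the naive value says which reading is correct, which is why the zone or offset has to be captured at the moment the timestamp is created.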

Another frequent mistake is assuming all servers or databases use uniform timestamp handling practices across your distributed architecture. A lack of uniform practices or discrepancies between layers within your infrastructure stack can silently introduce subtle errors. A seemingly minor deviation—from improper timestamp casting in database queries to uneven handling of daylight saving changes in application logic—can escalate quickly and unnoticed.

Many companies also underestimate the complexity involved with historical data timestamp interpretation. Imagine performing historical data comparisons or building predictive models without considering past daylight saving transitions, leap years, or policy changes regarding timestamp representation. These oversights can heavily skew analysis and reporting accuracy, causing lasting unintended repercussions. Avoiding these pitfalls means committing upfront to a coherent strategy of timestamp data storage, consistent handling, and centralized standards.
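As a small illustration of why historical transitions matter, the sketch below uses Python's standard `zoneinfo` module. It assumes the IANA time zone database is available on the machine (system files or the `tzdata` package), and the dates are chosen purely as examples.

```python
from datetime import datetime, timedelta, timezone
from zoneinfo import ZoneInfo  # requires the IANA tz database to be installed

new_york = ZoneInfo("America/New_York")

# The same wall-clock noon maps to different UTC offsets depending on the date.
winter_offset = datetime(2024, 1, 15, 12, 0, tzinfo=new_york).utcoffset()
summer_offset = datetime(2024, 7, 15, 12, 0, tzinfo=new_york).utcoffset()

# 01:30 on 2024-11-03 occurred twice in New York when clocks fell back.
# `fold` selects the first (0) or second (1) occurrence of that wall-clock time.
first = datetime(2024, 11, 3, 1, 30, tzinfo=new_york, fold=0).astimezone(timezone.utc)
second = datetime(2024, 11, 3, 1, 30, tzinfo=new_york, fold=1).astimezone(timezone.utc)

print(winter_offset, summer_offset)
print(second - first)  # the two occurrences are one hour apart in UTC
```

A pipeline that applies a single fixed offset to years of historical data would misplace roughly half of those records by an hour.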

For a deeper understanding of missteps we commonly see our clients encounter, review this article outlining common data engineering anti-patterns to avoid.

Strategies and Best Practices for Proper Time Zone Handling

The cornerstone of proper time management in global data ecosystems is straightforward: standardize timestamps to UTC upon data ingestion. This ensures time data remains consistent, easily integrated with external sources, and effortlessly consumed by analytics platforms downstream. Additionally, always store explicit offsets alongside local timestamps, allowing translation back to a local event time when needed for end-users.
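One way to apply this at ingestion time is sketched below. The function and field names (`normalize_event_time`, `event_time_utc`, `source_offset_minutes`) are hypothetical, and the sketch assumes incoming timestamps are ISO 8601 strings that carry an explicit offset.

```python
from datetime import datetime, timedelta, timezone

def normalize_event_time(iso_text: str) -> dict:
    """Convert an offset-bearing ISO 8601 timestamp to UTC and keep the original offset."""
    parsed = datetime.fromisoformat(iso_text)
    if parsed.tzinfo is None:
        # Refuse to guess: a naive timestamp is ambiguous by definition.
        raise ValueError(f"timestamp has no offset: {iso_text!r}")
    offset_minutes = int(parsed.utcoffset().total_seconds() // 60)
    return {
        "event_time_utc": parsed.astimezone(timezone.utc).isoformat(),
        "source_offset_minutes": offset_minutes,
    }

def to_local_event_time(record: dict) -> datetime:
    """Rebuild the original local wall-clock time from the stored UTC value and offset."""
    utc_time = datetime.fromisoformat(record["event_time_utc"])
    local_zone = timezone(timedelta(minutes=record["source_offset_minutes"]))
    return utc_time.astimezone(local_zone)

record = normalize_event_time("2025-06-12T08:15:00-05:00")
print(record)
print(to_local_event_time(record).isoformat())
```

Storing the offset as a separate column keeps the UTC value sortable and comparable while still letting you show end users the time they actually experienced.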

Centralize your methodology and codify timestamp handling logic within authoritative metadata solutions. Consider creating consistent time zone representations by integrating timestamps into “code tables” or domain tables; check our article comparing “code tables vs domain tables implementation strategies” for additional perspectives on managing reference and lookup data robustly.

Maintain clear documentation of your time-handling conventions across your entire data ecosystem so that globally distributed teams share the same understanding, and back it with robust documentation practices that support metadata-driven governance. Learn more in our deep dive on data catalog APIs and metadata access patterns, which provide programmatic control suitable for distributed teams.

Finally, remain vigilant during application deployment and testing phases, especially when running distributed components in different geographies. Simulation-based testing and automated regression test cases for time-dependent logic are essential before deployment. By faithfully reproducing global usage scenarios, you catch bugs before release rather than after it, when remediation usually proves significantly more complex.
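A regression suite for time-dependent logic can start as a simple table of edge cases. The sketch below is one possible shape, assuming a hypothetical helper `local_calendar_date` that buckets a UTC instant into the calendar day a user experienced; the cases target year-end, leap-day, and extreme-offset boundaries.

```python
from datetime import date, datetime, timedelta, timezone

def local_calendar_date(event_utc: datetime, offset_minutes: int) -> date:
    """Return the calendar date a user experienced, given a UTC instant and their offset."""
    if event_utc.tzinfo is None:
        raise ValueError("expected a timezone-aware datetime")
    local = event_utc.astimezone(timezone(timedelta(minutes=offset_minutes)))
    return local.date()

# (UTC instant, offset in minutes, expected local date)
CASES = [
    ("2025-01-01T00:30:00+00:00", -300, "2024-12-31"),  # year boundary, west of UTC
    ("2025-01-01T00:30:00+00:00", 540, "2025-01-01"),   # same instant, east of UTC
    ("2024-02-29T23:45:00+00:00", 330, "2024-03-01"),   # leap day with a half-hour offset
    ("2025-06-30T14:00:00+00:00", 840, "2025-07-01"),   # UTC+14:00, the largest offset in use
]

def run_regression_cases() -> list:
    """Run every case and return a list of human-readable failures (empty means all passed)."""
    failures = []
    for utc_text, offset, expected in CASES:
        actual = local_calendar_date(datetime.fromisoformat(utc_text), offset).isoformat()
        if actual != expected:
            failures.append(f"{utc_text} @ {offset}: expected {expected}, got {actual}")
    return failures

print(run_regression_cases())
```

Each production incident involving time can be added to the table as a new row, so the same bug cannot return unnoticed.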

Leveraging Modern Tools and Frameworks for Time Zone Management

Fortunately, organizations aren’t alone in the battle with complicated time zone calculations. Modern cloud-native data infrastructure, globally distributed databases, and advanced analytics platforms have evolved powerful tools for managing global timestamp issues seamlessly.

Data lakehouse architectures, in particular, bring together the schema governance and elasticity of data lakes with structured view functionality akin to traditional data warehousing practices. These systems are designed to enforce timestamp standardization, unambiguous metadata handling, and schema rules in one place. For teams in transition that are wrestling with heterogeneous time data, migrating to an integrated data lakehouse approach can genuinely streamline interoperability and consistency. Learn more about these practical benefits from our detailed analysis on the “data lakehouse implementation bridging lakes and warehouses”.

Similarly, adopting frameworks or libraries that handle time zones consistently, such as Luxon or date-fns (common replacements for Moment.js) in JavaScript applications, or Joda-Time and the java.time API built into Java 8 and later in Java-based apps, can significantly reduce manual overhead and offset-handling errors within your teams. Always aim for standardized libraries that explicitly handle intricate details like daylight saving transitions and historical time zone shifts. Most mainstream date-time libraries do not model leap seconds, so verify that behavior separately if it matters for your workload.

Delivering Global Personalization Through Accurate Timing

One crucial area where accurate time zone management shines brightest is delivering effective personalization strategies. As companies increasingly seek competitive advantage through targeted recommendations and contextual relevance, knowing exactly when your user interacts within your application or website is paramount. Timestamp correctness transforms raw engagement data into valuable insights for creating genuine relationships with customers.

For businesses focusing on personalization and targeted experiences, consider strategic applications built upon context-aware data policies. Accurate timing lets you apply stringent rules, conditions, and filters based on timestamps and user locations to tailor experiences precisely. See our recent article on “context-aware data usage policy enforcement” to learn more about these cutting-edge strategies.
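As one illustration of a timestamp-based rule, the sketch below checks whether a message would land inside a user's local daytime window. The function name, the 09:00 to 21:00 window, and the two offsets are assumptions made for the example, not a prescribed policy.

```python
from datetime import datetime, timedelta, timezone

def within_send_window(event_utc: datetime, offset_minutes: int,
                       start_hour: int = 9, end_hour: int = 21) -> bool:
    """Return True if the UTC instant falls inside [start_hour, end_hour) in the user's local time."""
    if event_utc.tzinfo is None:
        raise ValueError("expected a timezone-aware datetime")
    local = event_utc.astimezone(timezone(timedelta(minutes=offset_minutes)))
    return start_hour <= local.hour < end_hour

now_utc = datetime(2025, 6, 12, 2, 0, tzinfo=timezone.utc)

# Hypothetical users: one at UTC-05:00 (local 21:00), one at UTC+09:00 (local 11:00).
send_to_user_a = within_send_window(now_utc, -300)
send_to_user_b = within_send_window(now_utc, 540)
print(send_to_user_a, send_to_user_b)
```

If the stored offset were wrong or missing, the same rule would happily send a promotion in the middle of someone's night, which is exactly the kind of error that erodes personalization efforts.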

With accurate timestamp handling in place, personalized analytics dashboards, real-time triggered messaging, targeted content suggestions, and personalized product offers become trustworthy automated recommendations that truly reflect consumer behavior based on time-sensitive metrics and events. For more insights into enhancing relationships through customized experiences, visit our article “Personalization: The Key to Building Stronger Customer Relationships and Boosting Revenue”.

Wrapping Up: The Value of Strategic Time Zone Management

Mastering globalized timestamp handling within your data processing frameworks protects the integrity of analytical insights, product reliability, and customer satisfaction. By uniformly embracing standards, leveraging modern frameworks, documenting thoroughly, and systematically avoiding common pitfalls, teams can mitigate confusion effectively.

Our extensive experience guiding complex enterprise implementations and analytics projects has shown us that ignoring timestamp nuances and global data handling requirements ultimately causes severe, drawn-out headaches. Plan deliberately from the start by embracing strong timestamp choices, unified standards, rigorous testing strategies, and careful integration into your data governance frameworks.

Let Your Data Drive Results—Without Time Zone Troubles

With clear approaches, rigorous implementation, and strategic adoption of good practices, organizations can confidently ensure global timestamp coherence. Data quality, reliability, and trust depend heavily on precise time management strategies. Your organization deserves insightful and actionable analytics—delivered on schedule, around the globe, without any headaches.

by tyler garrett | Jun 7, 2025 | Data Processing

Data may appear dispassionate, but there’s a psychology behind how it impacts our decision-making and business insights. Imagine confidently building forecasts, dashboards, and analytics, only to have them subtly fail due to a seemingly invisible technical limitation: integer overflow. The psychological shift occurs when faulty data types produce incorrect insights and teams lose trust in the analytics outputs they’re presented. Decision-makers depend on analytics as their compass, and integer overflow is the silent saboteur waiting beneath the surface of your data processes. If you want your data and analytics initiatives to inspire trust and deliver strategic value, understanding the nature and impact of integer overflow is no longer optional; it’s business-critical.

What Exactly is Integer Overflow and Why Should You Care?

Integer overflow occurs when an arithmetic operation produces a result outside the range that its fixed-width integer data type can represent. It’s a bit like pouring more water into a container than it can hold: eventually the water spills out. Depending on the language or database, the value may silently wrap around (often to a large negative number), be clamped, or raise an error. In the realm of analytics, a silent overflow shifts meaningful numbers into misleading and unreliable data points, disrupting both computations and the strategic decisions derived from them.
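Python's own integers grow as needed and never overflow, so the sketch below borrows `ctypes.c_int32` from the standard library to mimic a signed 32-bit column. The helper name `as_int32` is ours; it simply reinterprets a value the way a fixed-width type would store it.

```python
import ctypes

INT32_MAX = 2**31 - 1  # 2,147,483,647, the largest signed 32-bit value

def as_int32(value: int) -> int:
    """Reinterpret a Python int as a signed 32-bit integer (ctypes performs no overflow check)."""
    return ctypes.c_int32(value).value

at_limit = as_int32(INT32_MAX)
one_past = as_int32(INT32_MAX + 1)

print(at_limit)  # 2147483647
print(one_past)  # -2147483648
```

Adding one to the largest representable value wraps all the way to the smallest, with no warning of any kind. This is the silent behavior many languages and storage engines exhibit by default.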

For data-driven organizations and decision-makers, the implications are massive. Consider how many critical business processes depend upon accurate analytics, such as demand forecasting models that heavily rely on predictive accuracy. If integer overflow silently corrupts numeric inputs, outputs—especially over long data pipelines—become fundamentally flawed. This hidden threat undermines the very psychology of certainty that analytics aim to deliver, causing stakeholders to mistrust or question data quality over time.

Moving beyond manual spreadsheets, like those highlighted in our recent discussion on the pitfalls and limitations of Excel in solving business problems, organizations embracing scalable big data environments on platforms like Google Cloud Platform (GCP) must factor integer overflow into strategic assurance planning. Savvy businesses today are partnering with experienced Google Cloud Platform consulting services to ensure their analytics initiatives produce trusted and actionable business intelligence without the hidden risk of integer overflow.

The Hidden Danger: Silent Failures Lead to Damaged Trust in Analytics

Integer overflow errors rarely announce themselves clearly. Instead, the symptoms appear subtly and intermittently. Revenues or order volumes that spike unexpectedly, or calculations that fail quietly between analytical steps, can escape immediate detection. Overflows may even generate sensible-looking but incorrect data, leading stakeholders unwittingly down flawed strategic paths. This erodes confidence, which is vital to organizational psychological well-being in data-driven decision-making environments, and can irreparably damage stakeholder trust.

When data falls victim to integer overflow, analytics teams frequently face a psychological uphill climb. Decision-makers accustomed to clarity and precision begin to question the accuracy of dashboard insights, analytical reports, and even predictive modeling. This is especially important in sophisticated analytics like demand forecasting with predictive models, where sensitivity to slight calculation inaccuracies is magnified. Stakeholders confronted repeatedly by integer-overflow-influenced faulty analytics develop skepticism towards all information that follows—even after resolving the underlying overflow issue.

Data strategists and business executives alike must acknowledge that analytics quality and confidence are inextricably linked. Transparent, trustworthy analytics demand detecting and proactively resolving integer overflow issues early. Modern analytical tools and approaches—such as transitioning from imperative scripting to declarative data transformation methods—play a crucial role in mitigating overflow risks, maintaining organizational trust, and preserving the psychological capital gained through accurate analytics.

Identifying At-Risk Analytics Projects: Where Integer Overflow Lurks

Integer overflow isn’t confined to any particular area of analytics. Still, certain use cases are particularly susceptible, such as data transformations of large-scale social media datasets like the scenario in our walkthrough of how to send Instagram data to Google BigQuery using Node.js. Large aggregations, sums, running totals, and repeated multiplications can exceed a 32-bit integer’s range very quickly.
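The sketch below simulates a running total kept in a signed 32-bit integer. The daily figures are invented for illustration, and `wrap_int32` is a hypothetical helper that reproduces two's-complement wrap-around in plain Python.

```python
def wrap_int32(value: int) -> int:
    """Reduce a Python int to the value a signed 32-bit integer would hold after wrapping."""
    return (value + 2**31) % 2**32 - 2**31

def running_total_int32(values) -> int:
    """Accumulate values the way a 32-bit accumulator would, wrapping after every addition."""
    total = 0
    for v in values:
        total = wrap_int32(total + v)
    return total

# Five days of 900 million impressions each (made-up numbers).
daily_impressions = [900_000_000] * 5

true_total = sum(daily_impressions)                      # Python ints do not overflow
stored_total = running_total_int32(daily_impressions)    # what a 32-bit total would hold

print(true_total)    # 4500000000
print(stored_total)  # 205032704
```

The wrapped total is positive and looks entirely plausible, yet it understates the real figure by more than four billion. Nothing in the output hints that anything went wrong.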

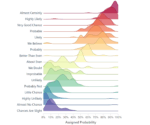

Similarly, complex multidimensional visualizations run the risk of overflow. If you’re creating advanced analytics, such as contour plotting or continuous variable domain visualizations, data integrity is critical. Overflow errors become catastrophic, shifting entire visualizations and undermining stakeholder interpretations. As strategies evolve and analytics mature, integer overflow quietly undermines analytical confidence unless explicitly addressed.

In visualization tools like Tableau, the business intelligence software we explored in depth in our popular blog The Tableau Definition From Every Darn Place on the Internet, overflow may manifest subtly as incorrect chart scaling, unexpected gaps, or visual anomalies. Stakeholders begin interpreting data incorrectly, which affects critical business decisions and erases the strategic advantage the analytics were meant to deliver.

Proactively identifying analytical processes susceptible to integer overflow requires a vigilant strategic approach, experienced technical guidance, and deep understanding of both analytical and psychological impacts.

Simple Solutions to Preventing Integer Overflow in Analytics

Integer overflow seems intimidating, but avoiding this silent analytical killer is entirely achievable. Organizations can incorporate preventive analytics strategies early, ensuring overflow stays far from critical analytical pipelines. One excellent preventive approach involves explicitly choosing data types sized generously enough when dealing with extremely large datasets—like those created through big data ingestion and analytics pipelines.
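Sizing a type generously can begin with a back-of-the-envelope check like the one sketched here. The function name, the worst-case-sum heuristic, and the "numeric" fallback label are assumptions for illustration; real platforms have their own type names and limits.

```python
# Signed integer widths to consider, narrowest first.
SIGNED_TYPES = (("int16", 16), ("int32", 32), ("int64", 64))

def smallest_safe_int_type(max_abs_value: int, expected_rows: int) -> str:
    """Pick the narrowest signed type that can hold the worst-case sum of a column."""
    worst_case_sum = max_abs_value * expected_rows
    for name, bits in SIGNED_TYPES:
        if worst_case_sum <= 2 ** (bits - 1) - 1:
            return name
    # Beyond 64 bits, fall back to an arbitrary-precision or decimal type.
    return "numeric"

# Hypothetical column: order amounts up to 50,000 cents across 10 million rows.
print(smallest_safe_int_type(50_000, 10_000_000))  # int64
print(smallest_safe_int_type(100, 300))            # int16
```

The key design choice is sizing for the aggregate, not the individual value: each order fits comfortably in 32 bits, but their sum does not.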

Moving toward robust, standardized data transformation methods also helps teams ward off overflow risks before they materialize into problems. For example, introducing declarative data transformation approaches, as we’ve discussed in our recent article on moving beyond imperative scripts to declarative data transformation, empowers data operations teams to define desired outcomes safely without the psychological baggage of constant overflow surveillance.

Similarly, in complex multidimensional analytics scenarios, leveraging color channel separation for multidimensional encoding, or other visual-analysis principles, helps detect and isolate abnormalities indicating data calculation irregularities—such as potential overflow—before harming final visualizations.

Finally, ongoing analytical rigor, including regular code audits, proactive overflow testing, and implementing “guardrail” analytical operations ensures strategic vulnerabilities won’t arise unexpectedly. Organizations leveraging professional GCP consulting services enjoy significant support implementing these solutions, providing both technical and psychological reassurance that analytical data is robust and overflow-proofed.
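A guardrail operation can be as simple as an addition that refuses to exceed the target column's range. The sketch below assumes a signed 64-bit target; `checked_add` and `checked_sum` are hypothetical helper names, not functions from any particular library.

```python
INT64_MIN = -(2**63)
INT64_MAX = 2**63 - 1

def checked_add(a: int, b: int, lo: int = INT64_MIN, hi: int = INT64_MAX) -> int:
    """Add two integers and raise instead of silently leaving the allowed range."""
    result = a + b
    if not lo <= result <= hi:
        raise OverflowError(f"{a} + {b} = {result} is outside [{lo}, {hi}]")
    return result

def checked_sum(values, lo: int = INT64_MIN, hi: int = INT64_MAX) -> int:
    """Sum values with a range check after every step, so the failing step is reported."""
    total = 0
    for v in values:
        total = checked_add(total, v, lo, hi)
    return total

print(checked_sum([1, 2, 3]))  # 6

try:
    checked_sum([INT64_MAX, 1])
except OverflowError as err:
    print("guardrail tripped:", err)
```

Turning a silent wrap into a loud failure is the whole point: a pipeline that stops with a clear error is far easier to trust than one that keeps producing plausible but wrong numbers.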

Ensuring Psychological Assurance: Building Analytics You Can Trust

Integer overflow doesn’t merely create technical data challenges; it also creates psychological disruption for stakeholders who rely upon analytics. Leaders need assured, confident analytics, uncompromised by silent overflow errors, that steer strategic execution with clarity and certainty. Analytical efforts and advanced dashboards, like our examples of creating interactive dashboards in Tableau, lose strategic impact if they’re undermined by mistrust.

Preventing integer overflow positions organizations to leverage analytics strategically and psychologically. Confident stakeholders engage fully with analytical insights and trust the conclusions presented by reliable data-driven strategies. Directly confronting integer overflow enhances overall strategic performance, building robust analytics pipelines that embed analytical rigor at every step and generate stakeholder confidence continuously.

Integer overflow is a clear example of psychological sabotage by data, silently harming strategic analytics goals. Now is the time for leaders, from the C-suite to senior analytical teams, to acknowledge and proactively manage integer overflow risk. Doing so builds trust, aligns analytics strategically, and psychologically prepares organizations to excel confidently in today’s analytics-first era.