by tyler garrett | May 25, 2025 | Data Visual

In the contemporary world of analytics, making sense of continuous data variables can be overwhelming without the right approach. Continuous variables can reveal hidden trends, create competitive advantages, and maximize the return on your investment in analytics tools, but only when their insights are communicated clearly. Intelligent binning strategies are how you get there. Visual binning transforms complex numerical data into intuitive, easy-to-grasp visuals, enabling faster, smarter, and more strategic business decisions. In this article, we’ll explain practical visual binning techniques and strategies that help your analytics deliver clarity, accuracy, and actionable insight, turning raw numbers into strategic advantage.

Understanding the Need for Visual Binning in Data Analysis

The abundance of continuous numerical data holds immense potential, yet it often goes untapped because of its inherent complexity. A continuous variable can take any value within its range, so without an effective way to reduce that granularity into understandable, actionable categories, the data becomes difficult to interpret. Visual binning addresses this problem: it segments continuous variables into bins, then presents those bins visually so strategists and stakeholders can understand the data and act on it. Rather than sifting through rows of numbers, stakeholders work with intuitive visual groupings that portray trends, outliers, patterns, and anomalies.

Visual binning supports common predictive analytics scenarios, including demand prediction, profit forecasting, risk assessment, and marketing segmentation. Effective binning helps organizations uncover insights that improve forecasting accuracy, streamline data-driven decisions, and boost marketing efficacy.

For example, when you work with PostgreSQL databases in complex data-handling scenarios, a member of our team specializing in PostgreSQL consulting services can build stored procedures or views that compute bins at the database level (for instance with PostgreSQL’s built-in width_bucket function), so visualizations receive pre-binned data and your analytics process benefits end to end.

Approaches to Visual Binning: Selecting the Right Methodology

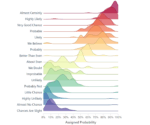

Choosing the right visual binning strategy hinges on understanding the type and distribution of your data and the specific business questions you need it to answer. Common binning methodologies include equal-width binning, equal-frequency (quantile) binning, and custom interval binning.

Equal-width Binning: Simplicity in Visualization

Equal-width binning divides a continuous variable into segments of identical width, for example ages 10 to 20, 20 to 30, and so on. Ideally each bin includes its lower bound and excludes its upper bound, so a value such as 20 falls into exactly one bin. This popular method is straightforward to interpret and highly intuitive to visualize, making it ideal for delivering quick, actionable insights. If your analysis calls for easily understandable breakdowns, particularly for broad decision guidance, equal-width binning provides simplicity and clarity.
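A minimal Python sketch of equal-width binning, assuming you choose the lower bound, upper bound, and bin count yourself. The helper name `equal_width_bins` and the sample ages are hypothetical, not from any particular library. Bins are half-open, and the maximum value is placed in the last bin.

```python
def equal_width_bins(values, lo, hi, num_bins):
    """Count values into num_bins equal-width bins spanning [lo, hi].

    Hypothetical helper for illustration. Each bin is [edge_i, edge_i+1),
    except the last bin, which also includes hi. Out-of-range values are ignored.
    """
    width = (hi - lo) / num_bins
    edges = [lo + i * width for i in range(num_bins + 1)]
    counts = [0] * num_bins
    for v in values:
        if not lo <= v <= hi:
            continue  # out-of-range values are skipped in this sketch
        idx = min(int((v - lo) // width), num_bins - 1)  # clamp hi into the last bin
        counts[idx] += 1
    return edges, counts


ages = [12, 15, 19, 22, 25, 31, 38, 44, 47, 59]  # made-up sample data
edges, counts = equal_width_bins(ages, lo=10, hi=60, num_bins=5)
print(edges)   # [10.0, 20.0, 30.0, 40.0, 50.0, 60.0]
print(counts)  # [3, 2, 2, 2, 1]
```

If the bounds were instead taken from the data’s minimum and maximum, a single extreme value would widen every bin and push most points into the lowest ones, which is the masking risk of this method.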

However, this simplicity can obscure distribution irregularities. When bin edges are derived from the minimum and maximum, a few extreme values can stretch the range so that most observations crowd into one or two bins, hiding business-critical fluctuations inside a single bar. For organizations chasing deeper insights into subtle patterns, for example when weighing operational optimizations like those discussed in predictive pipeline scaling based on historical workloads, equal-width binning should be deployed carefully alongside additional analytical methods.

Equal-frequency (Quantile) Binning: Precise Insights Delivered

Quantile binning divides data into bins that each hold roughly the same number of data points, rather than bins of equal width. Quartiles and percentiles are familiar examples of this approach. Because every bin holds the same share of observations, bins are narrow where data is dense and wide where it is sparse, so the bin boundaries themselves reveal differences in density, and an unusually wide outer bin exposes a long tail. This makes the method popular in advanced analytics, and it works exceptionally well for businesses that must closely monitor distribution shifts, outliers, or competitive scenarios where deeper insights create strategic advantage.
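To make the contrast with equal-width bins concrete, here is a minimal rank-based quantile binning sketch in Python. It assumes at least as many values as bins, and the helper name `quantile_bins` and the income figures are hypothetical. Ties are split by sort order, so identical values can land in adjacent bins; library functions such as pandas’ `qcut` place edges and handle ties differently.

```python
def quantile_bins(values, q):
    """Assign each value to one of q bins holding (nearly) equal counts.

    Hypothetical helper: bins are formed by rank, so bin sizes differ by at
    most one. Assumes len(values) >= q so that no bin is empty.
    """
    n = len(values)
    if n < q:
        raise ValueError("need at least as many values as bins")
    order = sorted(range(n), key=lambda i: values[i])  # indices in value order
    labels = [0] * n
    for rank, i in enumerate(order):
        labels[i] = rank * q // n
    ranges = []
    for b in range(q):
        members = [values[i] for i in range(n) if labels[i] == b]
        ranges.append((min(members), max(members)))  # observed span of bin b
    return labels, ranges


incomes_k = [20, 22, 25, 27, 30, 33, 40, 55, 90, 400]  # made-up, right-skewed
labels, ranges = quantile_bins(incomes_k, q=5)
print(ranges)  # [(20, 22), (25, 27), (30, 33), (40, 55), (90, 400)]
```

Each bin holds two customers, yet the last bin spans 90 to 400, so the long tail is visible from the bin boundaries alone.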

For situations like customer segmentation and profitability analyses, where understanding subtle trends at specific intervals is crucial, quantile binning provides superior granularity. Businesses adopting modern practices, such as those explored in our recent article on real-time data processing using Node.js, would significantly benefit from precise quantile binning.

Custom Interval Binning: Tailored for Your Organization’s Needs

In highly specialized contexts, standard methods won’t suffice. That’s where custom interval binning comes into play, letting organizations define bins based on domain-specific expertise, business logic, or industry standards. Custom binning is common in areas that require precise categorization, such as healthcare analytics, financial credit risk modeling, or customer segmentation based on highly specific metrics, and it provides unmatched flexibility and strategic insight.

Establishing custom bins requires significant domain expertise and a data-driven rationale tied to clear business objectives. Custom intervals keep analytics aligned with those objectives, for example when gathering clear data for case studies, a topic we explore in depth in creating data-driven case studies that convert. Precise control and tailored visualizations are the hallmark advantages of this approach, helping inform complex decisions with precision.
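Custom intervals can be as simple as a sorted list of cut points plus labels. The sketch below uses Python’s standard `bisect` module; the score bands and labels are hypothetical stand-ins for the thresholds your own domain experts define.

```python
from bisect import bisect_right

# Hypothetical, domain-defined cut points: each value starts a new band.
CUT_POINTS = [580, 670, 740, 800]
LABELS = ["Poor", "Fair", "Good", "Very good", "Exceptional"]


def score_band(score):
    """Map a score to its custom band; a score equal to a cut point starts that band."""
    return LABELS[bisect_right(CUT_POINTS, score)]


for s in (550, 580, 700, 799, 820):
    print(s, score_band(s))
```

Keeping the cut points in one list makes the business rule explicit and easy to review when domain experts revise the thresholds.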

Visualization Best Practices: Transforming Insight into Action

No matter which binning methodology you adopt, effective visualization remains crucial. Making binned data accessible to decision-makers depends on a few concrete practices: label bins clearly, state interval boundaries transparently (including whether each endpoint is included), and choose appropriate visual encodings.
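One way to make interval boundaries transparent is to generate labels in standard interval notation, where “[” includes an endpoint and “)” excludes it. This hypothetical helper assumes half-open bins with a closed final bin, the same convention NumPy’s `histogram` uses.

```python
def interval_labels(edges, decimals=0):
    """Render bin edges as interval labels: [a, b) for each bin, [a, b] for the last."""
    labels = []
    last = len(edges) - 2  # index of the final bin
    for i in range(len(edges) - 1):
        closing = "]" if i == last else ")"
        labels.append(f"[{edges[i]:.{decimals}f}, {edges[i + 1]:.{decimals}f}{closing}")
    return labels


print(interval_labels([10, 20, 30, 40]))  # ['[10, 20)', '[20, 30)', '[30, 40]']
```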

Animated transitions, as explored in our guide on animated transitions in interactive visualizations, further improve the user experience. They help stakeholders follow the story your data tells as views change, bridging the gap between analysis and business strategy.

Interactive visualizations also deepen organizational understanding by letting stakeholders drill into the data or adjust binning strategies dynamically. Dashboards that pair visual binning with intuitive interactive controls give non-technical stakeholders real-time, actionable insights tailored to their evolving business context.

Advanced Strategies: Enhancing Your Visual Binning Capabilities

Beyond standard visualization practices, businesses should explore advanced strategies such as implementing data security, optimizing pipelines, and leveraging AI-powered software tools.

For instance, database-level row-level security, as illustrated in our article on row-level security implementation in data transformation flows, ensures that each user’s binned visualizations and analytics include only the rows they are authorized to see, which improves stakeholder trust.

In addition, optimizing your data pipeline with techniques such as those detailed in our guide on Bloom filter applications for pipeline optimization helps accelerate analytics and reduces unnecessary latency in visualizations. AI can also expand your analytic capabilities considerably; our article on 20 use cases where ChatGPT can help small businesses is a starter resource for organizations looking to innovate further.

Staying conscious of software and operational costs is essential too. As highlighted in our insights into escalating SaaS costs, adopting flexible, cost-effective analytics tooling helps sustain long-term success.

Applying Visual Binning to Your Business

Proper implementation of visual binning strategies allows businesses to make smarter decisions, identify underlying risks and opportunities faster, and accelerate stakeholder understanding. Identifying the right methodology, integrating strong visualization practices, adopting strategic security measures, and continuously evaluating operational optimizations together ensure your organization can confidently leverage continuous data variables for sustainable, strategic decision-making.

Are you ready to leverage visual binning strategies in your analytics process? Reach out today, and let our seasoned consultants strategize your analytics journey, unleashing the full potential of your business data.

Thank you for your support! Follow DEV3LOPCOM, LLC on LinkedIn and YouTube.

by tyler garrett | May 24, 2025 | Solutions

In the digital age, organizations are constantly navigating an evolving landscape of data management architectures, striving to extract maximum business value from increasingly large and complex data sets. Two concepts that dominate contemporary data strategy discussions are Data Mesh and Data Lake. While both aim to structure and optimize data utilization, they represent distinct philosophies and methodologies. For decision-makers, navigating these concepts can seem daunting, but understanding their differences and ideal use cases can greatly streamline your analytics journey. At Dev3lop LLC, we specialize in empowering businesses to harness data strategically. Let’s demystify the Data Mesh vs. Data Lake debate, clarifying their fundamental differences and helping you identify the architecture best suited to propel your organization’s analytics and innovation initiatives.

The Fundamental Concepts: What is a Data Lake?

A Data Lake is a centralized repository designed to store vast volumes of raw structured, semi-structured, and unstructured data. Unlike traditional relational databases, which require a schema before data is loaded (schema-on-write), Data Lakes use a schema-on-read approach: data is stored in its original format and only becomes structured when it is queried or processed. This flexibility allows organizations to ingest data rapidly from many sources without extensive pre-processing, a significant advantage in settings that demand agility and speed.
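To illustrate schema-on-read, this Python sketch keeps raw JSON records exactly as they arrived and applies a schema only when reading. The records, field names, and the `read_with_schema` helper are hypothetical; real lakes typically do this with query engines over files in object storage.

```python
import json

# Hypothetical raw records as they might land in the lake: no validation at write time.
RAW_LINES = [
    '{"user": "a1", "amount": "19.99", "ts": "2025-05-01"}',
    '{"user": "b2", "amount": 5, "channel": "web"}',
    '{"user": "c3"}',
]

# Hypothetical read-time schema: field name -> type to cast to.
SCHEMA = {"user": str, "amount": float}


def read_with_schema(lines, schema):
    """Parse raw lines and project them onto `schema`, casting fields at read time."""
    for line in lines:
        record = json.loads(line)
        yield {
            field: (cast(record[field]) if record.get(field) is not None else None)
            for field, cast in schema.items()
        }


rows = list(read_with_schema(RAW_LINES, SCHEMA))
print(rows)
```

Because the raw lines are untouched, another team could later read the same data with a different schema, for example one that includes `channel`.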

The Data Lake architecture rose to popularity with big data technologies such as Apache Hadoop and has since evolved into cloud-based solutions such as Amazon S3, Azure Data Lake Storage, and Google Cloud Storage. Data Lakes are particularly beneficial for machine learning and real-time analytics on extensive data sets, because data scientists and analysts can explore data freely before settling on established schemas. If you’re curious about modern real-time approaches, see our detailed guide on real-time data processing with Node.js.

However, Data Lakes, while powerful and flexible, aren’t without challenges. Without diligent governance and rigorous metadata management, a lake can quickly turn into a “data swamp” that is unwieldy, difficult to manage, and prone to creating silos. Tackling this issue proactively is critical; our article on spotting data silos holding your business back can help you identify and overcome the problem.

Introducing Data Mesh: A Paradigm Shift?

Unlike a centralized Data Lake, a Data Mesh takes a decentralized approach to data architecture, embracing domain-driven design principles and distributed data responsibility. Introduced by Zhamak Dehghani, Data Mesh distributes ownership of data management and governance to the individual business domains within a company. Each domain autonomously manages and produces its data as a product, prioritizing usability across the organization. Rather than concentrating data authority in IT departments alone, a Data Mesh links multiple decentralized nodes across the organization to drive agility, innovation, and faster decision-making.

This distributed accountability encourages precise definitions, versioned datasets, and higher data quality, while giving domain experts, who are often non-technical stakeholders, greater control. Data Mesh also turns data consumers into prosumers who both produce and consume valuable analytical assets, resulting in more effective cross-team collaboration. At Dev3lop, we guide clients toward advanced analytics and innovative data-driven cultures; visit our advanced analytics consulting services page to learn more about our work in this space.
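A hypothetical sketch of what “data as a product” can look like in code: each domain publishes a versioned contract describing its data and validates rows against it before sharing. The class and field names are illustrative assumptions, not a standard Data Mesh API.

```python
from dataclasses import dataclass


@dataclass
class DataProduct:
    """Hypothetical data product contract owned by one business domain."""
    name: str
    owner_domain: str
    version: str
    schema: dict  # column name -> expected Python type

    def validate(self, rows):
        """Return a list of human-readable problems; an empty list means the rows conform."""
        problems = []
        for i, row in enumerate(rows):
            for column, expected in self.schema.items():
                if column not in row:
                    problems.append(f"row {i}: missing '{column}'")
                elif not isinstance(row[column], expected):
                    problems.append(f"row {i}: '{column}' is not {expected.__name__}")
        return problems


orders = DataProduct("orders_daily", "sales", "1.2.0", {"order_id": str, "total": float})
print(orders.validate([{"order_id": "A-1", "total": 42.0}, {"order_id": "A-2"}]))
# ["row 1: missing 'total'"]
```

Publishing the version alongside the schema lets consuming teams pin to a contract while the owning domain evolves it deliberately.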

When Should You Consider a Data Mesh Approach?

A Data Mesh approach proves particularly beneficial for organizations experiencing data scalability challenges, data quality inconsistencies, and slow innovation cycles due to centralized, monolithic data team bottlenecks. Enterprises focusing heavily on complex, diverse data products across departments (marketing analytics, financial forecasts, and customer experience analysis) often thrive under a Data Mesh architecture.

Of course, shifting architecture or embracing decentralization isn’t without its hurdles; established businesses often face challenges innovating within existing infrastructures. To effectively manage this digital transformation, consider reading our expert guidelines on how to innovate inside legacy systems without replacing them.

Comparing Data Lake vs. Data Mesh Architectures: Key Differences Explained

Centralized vs. Distributed Governance

One key difference between Data Lake and Data Mesh architectures is how data governance is handled. Data Lakes traditionally use a centralized governance model, in which a dedicated data team handles quality control, metadata management, and security. Data Mesh, by contrast, decentralizes governance: domain-specific teams manage their own data independently, within a federated model of shared, organization-wide standards, which increases agility across large enterprises.

Adopting decentralized data governance requires a well-understood semantic structure across your organization. Explore our guide entitled What is a Semantic Layer, and Why Should You Care? to better understand the benefits.

Technology Stack and Complexity

Data Lakes have matured technologically and come with clearly defined architectures optimized for rapid scaling, especially in the cloud, along with straightforward implementation. A Data Mesh, in contrast, requires a more intricate set of technologies, domain-specific expertise, and advanced automation tools. Distributed architectures inherently bring higher complexity, both technological and cultural. Organizations pursuing self-service analytics must balance open exploration with tools like Tableau (see our quick guide on how to download Tableau Desktop) against the distributed governance rules that Data Mesh compatibility requires.

Real World Applications: When Does Each Architecture Make the Most Sense?

Data Lakes are ideal when centralization, speed of ingestion, cost-efficiency in handling massive unstructured data, and straightforward implementation are primary objectives. They work exceptionally well for organizations where large-scale analytics, machine learning, and big data experimentation provide strategic wins. If you’re facing situations in which Excel spreadsheets dominate analytical processes, centralized alternatives like Data Lakes could modernize your analytics pipeline—see our discussion on Excel’s limitations from a strategic standpoint in our article “If You Use Excel to Solve a Problem, You’re in a Waterfall Project”.

On the other hand, a Data Mesh best suits complex organizations striving toward a data-driven culture. Multi-domain businesses, enterprises with diverse analytical needs, or organizations launching innovation initiatives benefit greatly from its decentralized approach. Data Mesh encourages continuous innovation through domain expertise and evidence-driven decision-making. For those considering this approach, our piece on strategically growing through data utilization, “Uncovering Hidden Opportunities: Unleashing Growth Potential Through Data Analytics”, provides valuable insights into maximizing your architectural choice.

Best Practices for Choosing Your Ideal Data Architecture

Start by methodically answering questions about your business goals, the complexity of your data domains, your data governance maturity, your operational readiness for decentralization, and your organizational culture. Both architectures can deliver exceptional value in the right context, so select strategically based on your current state and desired analytics trajectory.

In parallel, emphasizing transparency, ethics, and trust in your data architecture is critical, both for meeting today’s regulatory requirements and for achieving good business outcomes. Organizations pursuing innovation and excellence should treat data ethics as core to their roadmap; read more in our detailed discussion on ethical data collection and analysis practices.

Conclusion: Aligning Data Architecture to Your Strategic Goals

Choosing between Data Lake and Data Mesh architectures involves clearly assessing your organization’s unique analytics challenges, governance patterns, scale of analytics efforts, and technological maturity. At Dev3lop, we guide organizations through strategic analytics decisions, customizing solutions to achieve your goals, enhance data visualization capabilities (check out our article on Data Visualization Principles), and foster innovation at all organizational levels.

by tyler garrett | May 24, 2025 | Data Processing

As enterprises grow and data proliferates across global boundaries, ensuring the efficient operation of data pipelines across data centers is no longer just smart—it’s essential. Carefully crafting a cross-datacenter pipeline topology allows businesses to minimize latency, optimize costs, and maintain service reliability. For organizations stepping into international markets or scaling beyond their initial startup boundaries, understanding how to architect data transfers between geographically dispersed servers becomes crucial. At our consultancy, we have witnessed firsthand how effective topology design can dramatically improve operational efficiency, accuracy in analytics, and overall competitive advantage. In this blog, we’ll delve deeper into what businesses should know about cross-datacenter pipeline topology design, including best practices, common pitfalls, innovations like quantum computing, and valuable lessons learned from successful implementations.

The Importance of Datacenter Pipeline Topology

At a basic level, pipeline topology refers to the structured arrangement determining how data flows through various points within a system. When we expand this concept across multiple data centers—potentially spread across regions or countries—a thoughtful topology ensures data pipelines perform efficiently, minimizing latency issues and balancing workloads effectively.

Without a well-designed topology, organizations risk bottlenecks, data inconsistencies, and slow delivery of vital analytics insights. Decision-makers often underestimate the strategic significance of how data centers communicate, yet, as many successful ETL implementations show, a strategic pipeline topology improves an organization’s ability to leverage real-time or near-real-time analytics.

Effective topology design is especially critical where sophisticated visual analytics platforms like Tableau are deployed. As experts in the space—highlighted within our advanced Tableau consulting services—we frequently observe how datacenter topology profoundly impacts dashboard load speeds and overall user satisfaction. Ultimately, topology choices directly affect how quickly analytics become actionable knowledge, influencing both customer-centric decision-making and internal operations efficiency.

Optimizing Data Flow in Cross-Datacenter Pipelines

Optimizing data flow hinges on a few core principles: reducing latency, balancing traffic loads efficiently, and building in redundancy to support consistent uptime. Organizations that choose data center locations wisely can place clusters close to their data sources and users, shortening network distances and significantly cutting latency. For instance, enterprises pursuing analytics to improve community wellness and safety, similar to the initiatives detailed in our featured resource on data analytics enhancing public safety in Austin, depend heavily on real-time data availability, which makes latency reduction crucial.
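A back-of-the-envelope calculation shows why location matters. Light in optical fiber travels at roughly 200,000 km/s (about two-thirds of its speed in a vacuum), or about 200 km per millisecond, which sets a hard floor on round-trip time. The Python sketch below uses that approximation with hypothetical site names and distances; real round trips are longer because of routing, queuing, and processing.

```python
FIBER_KM_PER_MS = 200.0  # approximation: ~200,000 km/s in optical fiber


def min_rtt_ms(distance_km):
    """Lower bound on round-trip time over fiber, ignoring routing and queuing delays."""
    return 2 * distance_km / FIBER_KM_PER_MS


# Hypothetical one-way fiber distances (km) from a data source to candidate sites.
sites = {"dc-east": 1200, "dc-west": 3900, "dc-eu": 7400}
nearest = min(sites, key=sites.get)
print(nearest, min_rtt_ms(sites[nearest]))  # dc-east 12.0
```

Even before any software overhead, the distant site in this example starts with a 74 ms round-trip floor, which is why placement decisions come first.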

A common challenge is keeping data centers synchronized. When data is properly synchronized across sites, tasks such as automated system snapshots and backups become quick, routine operations rather than time-consuming activities. Businesses using solutions such as automated snapshots (as explained in our resource on Tableau Server automated dashboard images) realize substantial gains in operational efficiency and recovery speed.
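One common way to keep sites synchronized is checkpointed, incremental replication: the secondary site remembers the last change-log offset it applied and copies only newer entries. This in-memory Python sketch is a simplified model, not any specific product’s replication protocol; a list stands in for the primary’s change log and a dict for the replica.

```python
def replicate(change_log, replica, checkpoint):
    """Apply change-log entries from `checkpoint` onward to `replica`.

    Simplified model: returns the new checkpoint (the next offset to read),
    so each run only ships changes made since the previous run.
    """
    for offset in range(checkpoint, len(change_log)):
        key, value = change_log[offset]
        replica[key] = value  # later entries overwrite earlier ones
    return len(change_log)


primary_log = [("region", "us"), ("tier", "gold")]
replica = {}
checkpoint = replicate(primary_log, replica, 0)

primary_log.append(("tier", "platinum"))  # a new change on the primary site
checkpoint = replicate(primary_log, replica, checkpoint)
print(replica, checkpoint)  # {'region': 'us', 'tier': 'platinum'} 3
```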

Complexity compounds further when multiple cloud providers are involved. Integrating hybrid and multi-cloud strategies demands a comprehensive understanding of topology best practices, and cloud-native applications can help organizations target critical optimizations and align data flows more effectively. Pipeline architects must continually reassess and fine-tune routing rules based on traffic analytics from production environments.

Harnessing Advanced Technologies for Topology Design

Modern technologies open new opportunities for cross-datacenter pipeline topology design. Traditionally, IT teams built pipelines primarily around conventional relational database technologies and batch processing; increasingly, organizations are adopting event-driven runtimes such as Node.js to streamline these processes. Our insights into streamlining data pipelines with Node.js illustrate the performance improvements possible with event-driven, non-blocking platforms. Integrating Node.js-based pipelines into your topology can lower latency and increase pipeline reliability, key concerns for organizations managing large-scale international data workflows.
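The event-driven, non-blocking model is not unique to Node.js. To keep all examples in one language, this sketch shows the same idea with Python’s `asyncio`: requests to three sites are awaited concurrently, so total time tracks the slowest site rather than the sum. The site names and delays are hypothetical, and `asyncio.sleep` stands in for real network I/O.

```python
import asyncio
import time


async def fetch_from(site, delay_s):
    """Stand-in for a network call; awaiting sleep yields control like non-blocking I/O."""
    await asyncio.sleep(delay_s)
    return f"{site}: ok"


async def fetch_all(sites):
    """Start every request at once and wait for all of them, preserving input order."""
    return await asyncio.gather(*(fetch_from(name, d) for name, d in sites.items()))


sites = {"dc-east": 0.3, "dc-west": 0.2, "dc-eu": 0.25}  # hypothetical sites and delays
start = time.perf_counter()
results = asyncio.run(fetch_all(sites))
elapsed = time.perf_counter() - start
print(results, f"{elapsed:.2f}s")  # roughly 0.3 s, not the 0.75 s of a sequential loop
```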

Beyond traditional server-based approaches, emerging technologies are moving toward commercialization. Quantum computing, for example, is often described as a potentially transformative force for real-time analytics. In our resource on the impact of quantum computing, we explored how it could revolutionize data processing by dramatically improving data-handling speed and computational efficiency for certain workloads. If quantum capabilities mature as expected, pipeline topology designs may become even more sophisticated, using quantum algorithms to process suitable workloads faster and more efficiently than before.

By investing today in modern architectures that leave room for rapid technological advancements, organizations set themselves up for ongoing success and future-proof their infrastructure for new innovations and opportunities.

Avoiding Common Pitfalls in Pipeline Topology Implementations

Effective topology design also involves recognizing mistakes before they impact your organization negatively. One of the most common pitfalls is not fully considering redundancy and failover processes. Reliability is paramount in today’s data-driven market, and system outages often result in significant lost opportunities, damaged reputations, and unexpected expenses. Implementing multiple availability zones and mirrored environments helps teams maintain continuous operation, thereby significantly reducing downtime and mitigating potential disruptions.
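At the application level, failover can be as simple as trying endpoints in priority order. In this Python sketch the endpoint names and the `fetch` callable are hypothetical placeholders for whatever client your pipeline uses; production systems usually add timeouts, retries with backoff, and health checks.

```python
def fetch_with_failover(endpoints, fetch):
    """Try each endpoint in priority order; return the first successful result."""
    errors = []
    for endpoint in endpoints:
        try:
            return fetch(endpoint)
        except ConnectionError as exc:
            errors.append(f"{endpoint}: {exc}")  # record and fall through to the next site
    raise RuntimeError("all endpoints failed: " + "; ".join(errors))


def fake_fetch(endpoint):
    """Hypothetical simulated client: the primary region is down."""
    if endpoint == "primary.us-east.example":
        raise ConnectionError("unreachable")
    return f"data from {endpoint}"


print(fetch_with_failover(["primary.us-east.example", "mirror.us-west.example"], fake_fetch))
# data from mirror.us-west.example
```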

A second notable pitfall is resource misallocation: over- or under-provisioning infrastructure because of inadequate workload forecasting. Decision-makers often assume that adding redundancy or buying excess capacity translates into efficient design. However, this approach can easily increase operating costs without commensurate performance gains. Conversely, undersized architectures frequently lead to performance bottlenecks, frustrating end users and intensifying demands on IT personnel.
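A simple way to avoid both extremes is to size from observed load plus an explicit headroom margin. The Python sketch below uses the highest observed peak as a stand-in for a real forecast; the throughput figures, per-node capacity, and 25% headroom are all hypothetical.

```python
import math


def nodes_needed(observed_peaks, per_node_capacity, headroom=0.25):
    """Nodes required to serve the highest observed peak plus a safety margin."""
    target = max(observed_peaks) * (1 + headroom)
    return math.ceil(target / per_node_capacity)


peaks = [800, 950, 1200, 1100]  # hypothetical events/s at each day's busiest hour
print(nodes_needed(peaks, per_node_capacity=500))  # 3
```

Making the headroom an explicit parameter keeps the over-provisioning decision visible and reviewable; a real forecast would replace `max(observed_peaks)` with a model of trend and seasonality.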

Finally, another frequent oversight is insufficient monitoring and failure to use real-time diagnostics. Businesses need analytics embedded in their pipelines to understand resource usage patterns and data traffic issues. Acting on these insights drives smarter decisions and continuous improvements in pipeline reliability, latency, and resource utilization.
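Embedded monitoring can start small. This Python sketch keeps a sliding window of stage latencies and flags when the 95th percentile (nearest-rank method) exceeds a threshold; the window size and 250 ms threshold are hypothetical values to tune for your pipeline.

```python
import math
from collections import deque


class LatencyMonitor:
    """Sliding-window latency tracker that flags p95 breaches."""

    def __init__(self, window=100, threshold_ms=250.0):  # hypothetical defaults
        self.samples = deque(maxlen=window)  # oldest samples drop off automatically
        self.threshold_ms = threshold_ms

    def record(self, latency_ms):
        self.samples.append(latency_ms)

    def p95(self):
        """Nearest-rank 95th percentile of the current window (requires at least one sample)."""
        ordered = sorted(self.samples)
        return ordered[math.ceil(0.95 * len(ordered)) - 1]

    def breached(self):
        return bool(self.samples) and self.p95() > self.threshold_ms


monitor = LatencyMonitor(window=20)
for _ in range(20):
    monitor.record(100.0)
print(monitor.breached())  # False
monitor.record(1000.0)
monitor.record(1000.0)
print(monitor.breached())  # True: 2 of the last 20 samples are slow
```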

Strategically Visualizing Pipeline Data for Enhanced Decision-Making

Visual analytics take on special importance when applied to datacenter topology design. Effective visualizations allow stakeholders, from C-suite executives to technical architects, to quickly spot potential choke points such as overloaded or underutilized nodes. Insights derived from powerful visualization tools enable faster resolutions and better-informed infrastructure optimizations. Techniques described in our guide to creative ways to visualize your data help both business and technology teams stay aligned and proactive about potential issues.

Organizations investing in thoughtfully created data visualizations enjoy greater agility in handling challenges. They become adept at identifying inefficiencies and planning proactive strategies to optimize communication across geographies. Visual data clarity also enables quicker reactions to unexpected scenario changes, allowing teams to dynamically manage data pipelines and make better-informed capacity-planning decisions.

However, enterprises should also be mindful that visual analytics alone don’t guarantee sound decision-making. Effective visualization should always complement strong underlying data strategies and informed decision processes—an idea elaborated in our analysis on why data-driven doesn’t always mean smart decisions. Deploying contextual knowledge and insight-oriented visualization dashboards accelerates intelligent, purposeful decisions aligned with business goals.

Future-proofing Your Cross-Datacenter Pipeline Strategy

The world of data analytics and technology continuously evolves. Organizations that adopt a forward-looking stance toward pipeline topology ensure their competitive edge remains sharp. Your pipeline topology design should be scalable—ready for regulatory changes, geographical expansion, and increased data volumes. Future-proofing means designing architectures that allow companies to easily incorporate emerging technologies, optimize operations, and handle complexity without significant disruptions or costly system-wide restructuring.

In particular, companies should closely watch emerging tech like quantum computing, new virtualization technologies, and heightened security requirements to shape their strategic roadmap. Being prepared for innovations while maintaining flexibility is the hallmark of intelligent architecture planning.

As a consultancy focused on data, analytics, and innovation, we continually advise clients to adopt industry best practices and incorporate new technology developments strategically. Whether businesses confront specific error-handling scenarios (like those illustrated in our technical article on resolving “This service cannot be started in safe mode” errors) or aim to explore transformative opportunities like quantum computing, prioritizing flexibility helps ensure a robust, future-ready pipeline topology.

Tapping into professional expertise and proactively planning helps businesses to design cross-datacenter pipeline topologies that become intelligent catalysts of growth, efficiency, and innovation—remaining agile despite the inevitable shifts and complexities the future brings.

by tyler garrett | May 24, 2025 | Data Processing

In today’s rapidly innovating technology environment, businesses deal with mountains of streaming data arriving at lightning-fast velocities. Traditional approaches to data processing often stumble when confronted with high-throughput data streams, leading to increased latency, operational overhead, and spiraling infrastructure costs. This is precisely where probabilistic data structures enter the picture—powerful yet elegant solutions designed to approximate results efficiently. Embracing probabilistic approximations allows businesses to enjoy speedy analytics, reliable estimates, and streamlined resource utilization, all critical advantages in highly competitive, real-time decision-making scenarios. Let’s explore how harnessing probabilistic data structures can empower your analytics and innovation, enabling you to extract maximum value from streaming data at scale.

What Are Probabilistic Data Structures and Why Should You Care?

Probabilistic data structures, as the name implies, use randomized techniques (typically hashing) to provide approximate answers with bounded error rather than exact results. While this might seem like a compromise, in practice it lets you drastically reduce your memory footprint, achieve near-real-time processing speeds, and rapidly visualize critical metrics without sacrificing meaningful accuracy. Exact structures such as hash sets need memory that grows linearly with the number of distinct items, whereas probabilistic alternatives typically use a fixed, small amount of memory and answer queries quickly, which makes them ideally suited to immense volumes of real-time streaming data. Businesses that implement probabilistic data structures often realize substantial infrastructure cost savings, greater processing efficiency, and faster analytics turnaround.

As software consultants specializing in data, analytics, and innovation, we often advise clients in sectors from finance and digital marketing to IoT and supply-chain logistics on the strategic use of probabilistic tools. If you’re handling massive user-generated data sets, such as social media data, probabilistic approaches can radically simplify your broader analytics workflows. Investing in solutions like these can streamline practices and deliver immediate value across multiple teams. Whether your goal is reliable anomaly detection or faster decision-making, understanding probabilistic approximations lets you focus resources on what truly matters: applying actionable insight toward effective business strategies.

Commonly Used Probabilistic Data Structures for Stream Processing

Bloom Filters: Efficient Membership Queries

Bloom filters efficiently answer whether a data item is possibly in a dataset or definitely not in it; they never produce false negatives. Because they occupy a remarkably small memory footprint and answer with negligible latency, they suit massive real-time streams, caching layers, and database lookups, scenarios where accepting a small, tunable false-positive rate is a sensible tradeoff for large performance gains. Companies handling high-velocity user streams, such as social media networks or web analytics services, use Bloom filters to check quickly for duplicate items, avoid unnecessary database reads, and filter potentially irrelevant inputs in early processing stages.
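A compact Bloom filter sketch in Python using only the standard library. It sizes the bit array with the standard formulas m = -n ln p / (ln 2)^2 and k = (m / n) ln 2, and derives the k bit positions from one SHA-256 digest by double hashing; those are common textbook choices rather than the only ones. The item names are hypothetical.

```python
import hashlib
import math


class BloomFilter:
    """Bit-array Bloom filter: no false negatives, tunable false-positive rate."""

    def __init__(self, expected_items, fp_rate=0.01):
        # Standard sizing formulas for the bit count m and hash count k.
        self.m = math.ceil(-expected_items * math.log(fp_rate) / math.log(2) ** 2)
        self.k = max(1, round(self.m / expected_items * math.log(2)))
        self.bits = bytearray((self.m + 7) // 8)

    def _positions(self, item):
        # Double hashing: position_i = h1 + i * h2 (mod m), from one SHA-256 digest.
        digest = hashlib.sha256(str(item).encode()).digest()
        h1 = int.from_bytes(digest[:8], "big")
        h2 = int.from_bytes(digest[8:16], "big") | 1  # odd, so it is never zero
        return [(h1 + i * h2) % self.m for i in range(self.k)]

    def add(self, item):
        for pos in self._positions(item):
            self.bits[pos // 8] |= 1 << (pos % 8)

    def __contains__(self, item):
        return all(self.bits[pos // 8] & (1 << (pos % 8)) for pos in self._positions(item))


seen = BloomFilter(expected_items=1000, fp_rate=0.01)
for i in range(1000):
    seen.add(f"event-{i}")

print("event-42" in seen)  # True: added items are always reported as present
false_hits = sum(f"other-{i}" in seen for i in range(10_000))
print(false_hits / 10_000)  # close to the 1% target
```

For 1,000 items at a 1% target, the filter needs under 10,000 bits (about 1.2 KB), regardless of how long each item is.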

Beyond traditional analytics infrastructure, creative use of Bloom filters aids approximate query processing in interactive data exploration scenarios by immediately filtering irrelevant or redundant records from vast data pools. Strategically implementing Bloom filtering mechanisms reduces overhead and enables quicker decision-making precisely when business responsiveness matters most.

HyperLogLog: Rapid Cardinality Estimations

HyperLogLog algorithms estimate distinct counts (cardinality) in massive live data streams quickly and with very little memory. Exact counting methods, such as hashing every value into a large set, become impractical when data volume and velocity explode, because the set grows with every new distinct value. HyperLogLog can instead estimate counts into the billions using only kilobytes of memory, typically within one or two percent of the true count.
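The minimal sketch below illustrates the core HyperLogLog idea in Python. Each item is hashed, the first p bits pick one of m = 2^p registers, and each register keeps the longest run of leading zeros seen in the remaining bits. The class is illustrative and simplified: it stores registers in a Python list and applies only the standard small-range correction. Real implementations pack each register into about 6 bits, so 16,384 registers fit in roughly 12 KB. The standard error is about 1.04/√m, or roughly 0.8% for m = 16,384.

```python
import hashlib
import math


class HyperLogLog:
    """Minimal HyperLogLog with m = 2**p registers (no sparse mode, no empirical bias tables)."""

    def __init__(self, p: int = 14) -> None:
        self.p = p
        self.m = 1 << p
        self.registers = [0] * self.m
        self.alpha = 0.7213 / (1 + 1.079 / self.m)  # bias-correction constant, valid for m >= 128

    def add(self, item: str) -> None:
        x = int.from_bytes(hashlib.sha256(item.encode("utf-8")).digest()[:8], "big")  # 64-bit hash
        w = 64 - self.p
        idx = x >> w                      # first p bits choose a register
        rest = x & ((1 << w) - 1)         # remaining w bits
        rank = w - rest.bit_length() + 1  # 1-based position of the leftmost 1-bit (w + 1 if rest == 0)
        self.registers[idx] = max(self.registers[idx], rank)

    def count(self) -> float:
        raw = self.alpha * self.m * self.m / sum(2.0 ** -r for r in self.registers)
        zeros = self.registers.count(0)
        if raw <= 2.5 * self.m and zeros:
            return self.m * math.log(self.m / zeros)  # small-range correction (linear counting)
        return raw


hll = HyperLogLog(p=14)
for i in range(200_000):
    hll.add(f"user-{i % 100_000}")  # 100,000 distinct users, each seen twice
print(round(hll.count()))  # close to 100,000; the exact value depends on the hash
```

A repeated user always hashes to the same register with the same rank, so re-adding it never changes the estimate. This is why HyperLogLog counts distinct values rather than events.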

For businesses focused on user experience, real-time advertising performance, or counting unique users at scale (as in social media analytics), HyperLogLog is an invaluable tool. It pairs well with the approaches explored in our detailed guide on why you should warehouse your social media data. Acting on accurate approximations, rather than waiting for exact counts, accelerates your analytics and surfaces fresh, high-value insights.

Count-Min Sketch: Efficient Frequency Counting

When streaming data needs frequency estimates under strict memory constraints, Count-Min Sketch is a widely used probabilistic solution. It approximates how often each item appears in a continuous stream. Hash collisions can only add to its counters, so its estimates may overcount but never undercount. This makes it useful for identifying trending products, pinpointing system anomalies in log data, or building highly responsive recommendation systems.
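A Count-Min Sketch is a small grid of counters in which each of d rows has its own hash function mapping items into w columns. The Python sketch below is a minimal illustration under stated assumptions. Row-salted SHA-256 stands in for the pairwise-independent hash family used in the formal analysis, and the class name is illustrative. With w = ⌈e/ε⌉ and d = ⌈ln(1/δ)⌉, each estimate exceeds the true count by at most εN with probability at least 1 - δ, where N is the total number of items added.

```python
import hashlib
import math


class CountMinSketch:
    """Count-Min Sketch: depth x width counters; estimates can overcount but never undercount."""

    def __init__(self, epsilon: float, delta: float) -> None:
        self.width = math.ceil(math.e / epsilon)     # bounds the error size (epsilon * N)
        self.depth = math.ceil(math.log(1 / delta))  # bounds how often that error bound may fail (delta)
        self.table = [[0] * self.width for _ in range(self.depth)]

    def _column(self, item: str, row: int) -> int:
        # Salting the hash input with the row number gives each row a different hash function.
        digest = hashlib.sha256(f"{row}:{item}".encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big") % self.width

    def add(self, item: str, count: int = 1) -> None:
        for row in range(self.depth):
            self.table[row][self._column(item, row)] += count

    def estimate(self, item: str) -> int:
        # Collisions only inflate counters, so the smallest counter is the tightest upper bound.
        return min(self.table[row][self._column(item, row)] for row in range(self.depth))


cms = CountMinSketch(epsilon=0.001, delta=0.01)  # 2,719 columns x 5 rows
for _ in range(1_000):
    cms.add("sku-trending")
for i in range(5_000):
    cms.add(f"sku-{i}")
print(cms.estimate("sku-trending"))  # at least 1,000, and at most slightly above it
```

For trend detection, you would compare `estimate()` against a threshold as items arrive, keeping memory fixed no matter how many distinct products the stream contains.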

Count-Min Sketch is especially relevant for real-time dashboarding, system operations analysis, and AI-powered anomaly detection. If your business analytics rely on frequency-based trend detection, consider implementing it. It complements the schema methodologies we have discussed in detail previously, such as polymorphic schema handling in data lakes, to maximize operational efficiency and analytical effectiveness.

Practical Business Use Cases of Probabilistic Data Structures

To illustrate why businesses increasingly adopt probabilistic data structures, consider a few high-impact scenarios. Online retailers use Bloom filters to speed up product recommendation searches, cache lookups, and shopper-profile checks. Social media firms use HyperLogLog to measure the approximate but highly scalable reach of online campaigns, such as the number of unique users who saw a post. Cybersecurity applications frequently employ Count-Min Sketches to detect anomalous network traffic patterns, such as a sudden surge of requests from one source that may indicate an intrusion attempt.

Beyond technical implementation, probabilistic data structures encourage innovative thinking and faster decision-making. Businesses devoted to exploring causation and fully leveraging data-backed decision processes will also want to explore related methodologies, such as causal inference frameworks for decision support. Layering probabilistic data structures beneath these analytic models supplies them with fast, scalable inputs, which strengthens competitive insight and decision-making across your organization.

Integrating Probabilistic Structures into Your Data Processing Pipeline

Implementing probabilistic structures requires focused expertise, strategic planning, and careful management of the tradeoff between accuracy and resource use: a smaller structure is cheaper and faster, but less accurate. Scalable technology such as Node.js for real-time solutions (see our Node.js Consulting Services for expert guidance) helps businesses keep stream processing performant and aligned with organizational objectives. Integrating probabilistic data structures carefully into live analytic and operational systems ensures you extract their full advantage.
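For Bloom filters, the accuracy and memory tradeoff can be made concrete with the standard sizing formulas. For n expected items and a target false-positive rate p, the optimal bit count is m = -n ln p / (ln 2)^2, and the optimal number of hash functions is k = (m/n) ln 2. The helper below is a hypothetical sketch that applies these formulas. It shows, for instance, that each tenfold reduction in p costs about 4.8 extra bits per item.

```python
import math


def bloom_parameters(expected_items: int, false_positive_rate: float) -> tuple[int, int]:
    """Hypothetical helper: (num_bits, num_hashes) minimizing memory for a target false-positive rate."""
    if expected_items <= 0 or not 0 < false_positive_rate < 1:
        raise ValueError("expected_items must be > 0 and false_positive_rate in (0, 1)")
    # m = -n * ln(p) / (ln 2)^2, rounded up to whole bits
    num_bits = math.ceil(-expected_items * math.log(false_positive_rate) / math.log(2) ** 2)
    # k = (m / n) * ln 2, rounded to the nearest integer and at least 1
    num_hashes = max(1, round(num_bits / expected_items * math.log(2)))
    return num_bits, num_hashes


for p in (0.01, 0.001):
    bits, hashes = bloom_parameters(1_000_000, p)
    print(f"p={p}: {bits / 8 / 1_000_000:.2f} MB, {hashes} hashes")
```

For one million items, a 1% target needs roughly 1.2 MB and 7 hash functions, while 0.1% needs roughly 1.8 MB and 10. That gives a concrete figure to weigh against the cost of false positives in your pipeline.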

Companies on a digital transformation journey position themselves ahead of competitors by pairing these structures with traditional storage and analytic strategies. Examples include the backward and forward schema compatibility mechanisms described in our discussion of schema evolution patterns, and the visualization practices outlined in our comparative analysis of Data Visualization Techniques. A robust data posture built on deliberate use of probabilistic approaches generates meaningful long-term competitive advantage.

The Future: Synergies Between Probabilistic Structures and Advanced Analytics

Looking forward, probabilistic data structures complement the ongoing data analytics revolution, which is most visible in rapidly developing AI and ML solutions. Machine learning workflows for anomaly detection, clustering, prediction, and data categorization routinely rely on probabilistic models, and compact sketches can feed them fast, approximate summaries of high-volume streams. As AI and ML reshape industry trends, probabilistic data structures offer essential tools for accurate yet scalable analytic outputs that do not strain performance or infrastructure.

If you are interested in the deeper connections between probabilistic methods and modern artificial intelligence and machine learning, see our insights on the AI and ML revolution. Integrating these emerging analytics patterns helps you understand complex user behaviors, interpret market trends, and make competitively astute decisions.

by tyler garrett | May 24, 2025 | Data Processing

In today’s rapidly evolving data landscape, the ability to efficiently handle data insertions and updates—known technically as upserts—is crucial for organizations committed to modern analytics, data integrity, and operational excellence. Whether managing customer details, real-time analytics data, or transactional information, a robust upsert strategy ensures consistency and agility. Understanding how upsert implementations differ across various data stores empowers strategic technology leaders to select the optimal platform to sustain data-driven growth and innovation. This blog post provides clarity on common upsert patterns, highlights pertinent considerations, and guides informed decision-makers through the architectural nuances that can shape successful data practices.

What is an Upsert?

An upsert, a blend of “update” and “insert”, is a database operation that inserts a new record if it does not already exist, or updates it if it does. Upserts fold both operations into a single statement. This simplifies application code and reduces round trips. In engines that execute the statement atomically (as PostgreSQL does with its ON CONFLICT clause), it also avoids the race between checking for a row and writing it. Understanding this hybrid command allows technology leaders to build data management solutions that are both simpler and more efficient.

Upsert logic plays a pivotal role in applications ranging from real-time analytics dashboards to complex ETL pipelines. Used well, it speeds up data synchronization, improves data accuracy, and simplifies transaction handling. Teams no longer need separate code paths that first check whether a record exists and then insert or update it. Instead, they can state that intent once and let the database enforce it. This frees development resources for higher-value work focused on business goals rather than routine technical plumbing. An optimized upsert strategy streamlines your data architecture and amplifies operational efficiency.

Upsert Strategies in Relational Databases

Traditional SQL Databases and Upsert Techniques

The relational database landscape is dominated by SQL-based platforms like PostgreSQL, MySQL, SQL Server, and Oracle, and several standard approaches have emerged across them. MySQL uses “INSERT INTO… ON DUPLICATE KEY UPDATE”, while PostgreSQL uses “INSERT INTO… ON CONFLICT … DO UPDATE”. SQL Server uses the “MERGE” statement to combine update and insert branches in a single statement, and Oracle offers similar “MERGE INTO” syntax.
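As a minimal, runnable illustration of the PostgreSQL form, the snippet below uses Python's built-in sqlite3 module. SQLite (version 3.24 and later) accepts the same INSERT … ON CONFLICT … DO UPDATE syntax, including the `excluded` pseudo-table that refers to the row proposed for insertion. The `customers` table and `upsert_customer` function are hypothetical.

```python
import sqlite3


def upsert_customer(conn: sqlite3.Connection, customer_id: int, email: str, tier: str) -> None:
    # Hypothetical helper. PostgreSQL-style upsert: insert the row, or update it if the id already exists.
    conn.execute(
        """
        INSERT INTO customers (id, email, tier)
        VALUES (?, ?, ?)
        ON CONFLICT (id) DO UPDATE SET
            email = excluded.email,
            tier  = excluded.tier
        """,
        (customer_id, email, tier),
    )


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT NOT NULL, tier TEXT NOT NULL)")
upsert_customer(conn, 1, "ana@example.com", "basic")  # no row with id 1 yet: inserted
upsert_customer(conn, 1, "ana@example.com", "gold")   # id 1 exists: updated in place
print(conn.execute("SELECT id, email, tier FROM customers").fetchall())
# [(1, 'ana@example.com', 'gold')]
```

The application never checks whether the customer exists. The primary-key constraint decides which branch runs, which is exactly the boilerplate that built-in upsert syntax removes.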

These built-in relational mechanisms provide reliable transaction processing, enforce data integrity rules such as unique constraints, and remove boilerplate, so data teams can focus on business logic. Decision-makers adopting SQL-centric data architectures benefit from standardized, robust upsert logic that keeps processes streamlined and maintainable.

Related SQL fundamentals matter too. Knowing the difference between UNION and UNION ALL, for example, helps teams structure performant upsert solutions in relational environments when they combine batches of incoming rows before merging them into a target table.
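The hypothetical pair of staging batches below shows the difference, again using Python's sqlite3 module. UNION ALL keeps every row, while UNION removes exact duplicate rows at the cost of an extra deduplication step.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical staging batches that overlap on one identical row.
conn.execute("CREATE TABLE batch_a (id INTEGER, email TEXT)")
conn.execute("CREATE TABLE batch_b (id INTEGER, email TEXT)")
conn.executemany("INSERT INTO batch_a VALUES (?, ?)", [(1, "a@example.com"), (2, "b@example.com")])
conn.executemany("INSERT INTO batch_b VALUES (?, ?)", [(2, "b@example.com"), (3, "c@example.com")])

# UNION ALL keeps every row, including the duplicate (2, 'b@example.com').
all_rows = conn.execute(
    "SELECT id, email FROM batch_a UNION ALL SELECT id, email FROM batch_b ORDER BY id"
).fetchall()
# UNION drops rows that are identical in every column.
distinct_rows = conn.execute(
    "SELECT id, email FROM batch_a UNION SELECT id, email FROM batch_b ORDER BY id"
).fetchall()
print(len(all_rows), len(distinct_rows))  # 4 3
```

UNION only removes rows that match in every column. Two versions of the same key with different values both survive, so a key-based deduplication step is still needed before an upsert.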

NoSQL Databases: Understanding and Optimizing Upserts

MongoDB and Document-Based Stores

NoSQL databases such as MongoDB, Cassandra, and Couchbase favor flexibility, scalability, and agile schema design over the fixed schemas of traditional SQL databases. Among them, MongoDB's upserts are a prominent operational tool. Commands such as “updateOne()”, “updateMany()”, or “findAndModify()” accept an upsert: true option: the command updates matching documents, or inserts a new one when nothing matches.
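The toy in-memory model below, written in plain Python, shows what an upsert does when nothing matches. It is not the MongoDB driver. In that case, MongoDB builds the new document from the filter's equality fields and then applies the update operators; `$setOnInsert` takes effect only on that insert path. The model is deliberately limited to plain equality filters, `$set`, and `$setOnInsert`, and the `toy_update_one` name is hypothetical. The real pymongo call is shown in a comment for comparison.

```python
from typing import Any


def toy_update_one(collection: list[dict[str, Any]], query: dict[str, Any],
                   update: dict[str, dict[str, Any]], upsert: bool = False) -> str:
    """Hypothetical toy model of updateOne: equality filters with $set / $setOnInsert only."""
    for doc in collection:
        if all(doc.get(field) == value for field, value in query.items()):
            doc.update(update.get("$set", {}))  # match: $set applies, $setOnInsert is ignored
            return "updated"
    if not upsert:
        return "unchanged"
    # No match and upsert=True: seed the new document from the filter's equality fields,
    # then apply $set and $setOnInsert (real MongoDB would also generate an _id).
    new_doc = dict(query)
    new_doc.update(update.get("$set", {}))
    new_doc.update(update.get("$setOnInsert", {}))
    collection.append(new_doc)
    return "inserted"


products: list[dict[str, Any]] = []
first = {"$set": {"price": 9.99}, "$setOnInsert": {"stock": 0}}
second = {"$set": {"price": 8.49}, "$setOnInsert": {"stock": 0}}
print(toy_update_one(products, {"sku": "A-100"}, first, upsert=True))   # inserted
print(toy_update_one(products, {"sku": "A-100"}, second, upsert=True))  # updated
print(products)  # [{'sku': 'A-100', 'price': 8.49, 'stock': 0}]

# Equivalent real call with pymongo (requires a running server):
# db.products.update_one({"sku": "A-100"}, first, upsert=True)
```

Putting creation-only defaults such as an initial stock level in `$setOnInsert` keeps later updates from resetting them. This is a common reason to pair it with upserts.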

MongoDB’s efficient handling of native JSON-like document structures supports agile data mapping, enabling rapid development workflows. Development teams often find this dramatically simplifies data ingestion tasks associated with modern applications, real-time analytics, or IoT monitoring scenarios. Moreover, NoSQL upsert capabilities smoothly align with Node.js implementations, where flexible, lightweight data manipulation via MongoDB drivers helps foster streamlined data pipelines. For expert Node.js development guidance, you might explore our specialized Node.js consulting services.

Beyond performance, many NoSQL platforms offer built-in fault tolerance, geographic data replication, and horizontal scalability across large datasets, which are key features for organizations focused on innovation. Purposeful upsert design on these platforms lets you take advantage of that flexibility as business requirements and schemas evolve.

Cloud Data Warehouses: Optimizing Analytics Workflows

Redshift, BigQuery, and Snowflake Upsert Techniques

Cloud-native data warehouses such as Amazon Redshift, Google BigQuery, and Snowflake streamline analytical workflows through massive scalability and distributed computing. Upserting on these platforms usually means merging a staged batch of changes into a target table, using either SQL commands or platform-specific features. For example, BigQuery and Snowflake both support the MERGE statement. It matches staged rows against target rows on a key, then updates or inserts accordingly in a single statement.
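The staged MERGE pattern can be sketched as a small Python helper that renders the SQL. The function name, table names, and column names are hypothetical. The rendered statement follows the general MERGE form accepted by BigQuery and Snowflake, but dialects differ in details, so check your warehouse's MERGE reference before relying on it. Identifiers are assumed to be trusted, because the helper does no quoting or escaping.

```python
def build_merge_sql(target: str, staging: str, key_cols: list[str], value_cols: list[str]) -> str:
    """Hypothetical helper: render a MERGE that upserts staging rows into a target table.

    Assumes trusted, already-valid identifiers; nothing is quoted or escaped here.
    """
    all_cols = key_cols + value_cols
    on_clause = " AND ".join(f"T.{c} = S.{c}" for c in key_cols)  # match rows on the business key
    set_clause = ", ".join(f"{c} = S.{c}" for c in value_cols)    # matched: overwrite value columns
    insert_cols = ", ".join(all_cols)
    insert_vals = ", ".join(f"S.{c}" for c in all_cols)           # not matched: insert the staged row
    return (
        f"MERGE INTO {target} T\n"
        f"USING {staging} S\n"
        f"ON {on_clause}\n"
        f"WHEN MATCHED THEN UPDATE SET {set_clause}\n"
        f"WHEN NOT MATCHED THEN INSERT ({insert_cols}) VALUES ({insert_vals})"
    )


print(build_merge_sql("analytics.customers", "analytics.customers_staging",
                      key_cols=["customer_id"], value_cols=["email", "tier"]))
```

The staging table should hold at most one row per key before the MERGE runs. If several staged rows match the same target row, many warehouses reject the statement or resolve it nondeterministically, so deduplicate to the latest version per key first.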

Upserts in cloud data warehouses are especially valuable in ELT (Extract, Load, Transform) architectures, which have delivered strong results in real-world analytical applications. Our article on real use cases where ELT significantly outperformed ETL explains why this matters. Cloud data warehouses fit ELT workflows well because they can run massive merges and incremental refreshes efficiently.

Strategically selecting modern, cloud-native platforms for enterprise analytics, complemented by carefully planned upsert approaches, empowers analytic teams and improves query performance, data freshness, and overall agility. Effective upsert strategies in cloud environments ultimately drive organizational competitiveness and informed decision-making via timely, actionable insights.

Real-Time Upserts in Streaming Platforms

Apache Kafka and Stream Processing Solutions

Modern businesses increasingly depend on capturing and acting on real-time data to stay competitive. Event-streaming platforms like Apache Kafka are growing in importance, often alongside stream processing frameworks such as Apache Flink, Apache Beam, or Node.js-based tools, and this makes real-time upsert handling critical.

Stream processing solutions let companies blend incoming data streams with existing state. Apache Kafka's KTable abstraction, for example, treats a topic as a changelog. Each new record for a key replaces that key's previous value, so state is updated incrementally rather than rebuilt from scratch. Keeping state current in this way improves user experience, but it must be paired with careful handling of sensitive records, an aspect detailed further in our analysis of data privacy in fintech.
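The table semantics can be modeled in a few lines of plain Python. This model is not the Kafka Streams API, which is a Java/Scala library. Each record for a key upserts that key, and a record with a null value (a tombstone) deletes it. The function name and event shapes are hypothetical.

```python
from typing import Iterable, Optional


def materialize_changelog(events: Iterable[tuple[str, Optional[dict]]]) -> dict[str, dict]:
    """Hypothetical model of KTable semantics: latest value per key wins; None deletes the key."""
    table: dict[str, dict] = {}
    for key, value in events:
        if value is None:
            table.pop(key, None)  # tombstone: remove the key if present
        else:
            table[key] = value    # upsert: insert a new key or replace its previous value
    return table


changelog = [
    ("user-1", {"plan": "free"}),
    ("user-2", {"plan": "pro"}),
    ("user-1", {"plan": "pro"}),  # same key again: replaces the earlier value
    ("user-2", None),             # tombstone: user-2 is removed
]
print(materialize_changelog(changelog))  # {'user-1': {'plan': 'pro'}}
```

Because only the latest value per key matters, the same changelog can be replayed to rebuild state after a failure. This is one reason changelog-backed tables suit real-time upserts.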

Implementing efficient real-time upserts can translate into meaningful benefits ranging from near-instantaneous financial transaction reconciliations to dynamic personalization in user dashboards. Businesses wielding the power of event-driven patterns combined with intelligent upsert practices drastically improve data immediacy, accuracy, and responsiveness.

Upsert Challenges and Best Practices

Avoiding Pitfalls in Implementation

Implementing an efficient upsert strategy requires understanding common challenges such as performance bottlenecks, concurrency conflicts, and schema management. One frequent problem arises when complex data transformations and pipeline dependencies cascade across data ingestion, a topic explored further in our article on fixing failing dashboard strategies. Key considerations include defining clearly when a record should be updated versus inserted, guaranteeing the integrity of unique identifiers, and resolving conflicts predictably with minimal performance impact.

Best practices for handling upsert conflicts include careful management of unique constraints, smart indexing on the conflict keys, transactions for consistency, and choosing the appropriate database or pipeline mechanism. PostgreSQL's ON CONFLICT clause, for instance, requires a unique index or constraint on the conflict columns. Teams benefit from investing time up front to understand how their chosen platform fits core application data needs, to analyze real-world use cases, and to plan for capacity and concurrency limits.
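One way to resolve conflicts predictably is a version-guarded upsert: an update applies only when the incoming record is newer than the stored one, so late or out-of-order events cannot overwrite fresher data. The sketch below shows this with Python's sqlite3 module, whose upsert clause, like PostgreSQL's, accepts a WHERE condition on DO UPDATE. The `accounts` table, its `version` column, and `apply_change` are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical table: each change carries a monotonically increasing version number.
conn.execute(
    "CREATE TABLE accounts (id INTEGER PRIMARY KEY, balance INTEGER NOT NULL, version INTEGER NOT NULL)"
)


def apply_change(conn: sqlite3.Connection, account_id: int, balance: int, version: int) -> None:
    """Insert new accounts; update existing ones only when the incoming version is newer."""
    conn.execute(
        """
        INSERT INTO accounts (id, balance, version) VALUES (?, ?, ?)
        ON CONFLICT (id) DO UPDATE SET
            balance = excluded.balance,
            version = excluded.version
        WHERE excluded.version > accounts.version
        """,
        (account_id, balance, version),
    )


apply_change(conn, 7, 100, 1)  # new id: inserted
apply_change(conn, 7, 150, 3)  # newer version: applied
apply_change(conn, 7, 120, 2)  # late, out-of-order event: skipped by the WHERE guard
print(conn.execute("SELECT id, balance, version FROM accounts").fetchall())  # [(7, 150, 3)]
```

The guard makes replays idempotent: reapplying any already-seen change leaves the row untouched. This also helps when a pipeline retries a batch after a failure.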

Clear policies, well-defined procedures, and explicit analytical goals keep upsert implementations on course. To further build consumer trust in accurate data handling, teams can explore our best-practice advice on enhancing user experience through clear privacy policies.

Conclusion: Strategic Upserts Drive Innovation and Efficiency

An effective upsert strategy transforms analytical workflows, optimizes data-driven agility, and provides businesses with significant competitive advantages. Choosing the correct upsert implementation strategy demands assessing your business goals, evaluating workloads realistically, and understanding both relational and NoSQL data nuances.

When implemented strategically, an optimized upsert solution strengthens data pipelines, enables insightful analytics, and powers impactful innovation across your organization. Explore several practical examples through our detailed report: Case studies of successful ETL implementations.