by tyler garrett | Jun 18, 2025 | Data Visual

Harnessing renewable energy is crucial in today’s energy landscape, yet organizations without robust analytics often struggle to understand how well their solar panels and wind turbines perform and where they fall short. Solar and wind performance dashboards represent a major shift from traditional, opaque decision-making, giving businesses and stakeholders data-driven insights they can act on strategically. Real-time analytics, machine learning integration, and dynamic visualizations turn raw renewable energy performance data into actionable intelligence. As the business world accelerates toward sustainable practices, embracing analytics innovations such as renewable energy performance dashboards is no longer optional; it is imperative. In this article, we’ll break down how stakeholders can leverage comprehensive analytics dashboards to maximize the efficiency, effectiveness, and return on investment of renewable energy projects.

Unlocking Insight with Solar and Wind Performance Dashboards

In an age of sustainability and keen environmental awareness, renewable energy sources like wind and solar have moved from supplementary solutions to primary energy providers. This transition brings a heightened responsibility to ensure maximum efficiency and transparency. Renewable energy dashboards offer visibility, accessibility, and actionable insights into solar arrays and wind farms by aggregating key performance indicators (KPIs), power output metrics, predictive maintenance alerts, and weather trend data, all presented through straightforward visualizations and real-time monitoring.
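As a concrete example of one such KPI, capacity factor compares the energy an asset actually produced with what it would have produced running at its rated power for the whole period. The Python sketch below assumes hourly energy readings in kWh and a nameplate rating in kW; the function name and the sample figures are illustrative, not taken from any particular dashboard product.

```python
def capacity_factor(hourly_kwh, rated_kw):
    """Capacity factor = actual energy / (rated power x hours in the period).

    hourly_kwh: energy produced in each hour of the period (kWh).
    rated_kw:   nameplate capacity of the asset (kW).
    """
    if rated_kw <= 0:
        raise ValueError("rated_kw must be positive")
    hours = len(hourly_kwh)
    if hours == 0:
        return 0.0
    max_possible_kwh = rated_kw * hours
    return sum(hourly_kwh) / max_possible_kwh


# Hypothetical day for a 2,000 kW turbine: 12 hours at 1,000 kWh, 12 hours idle.
day = [1000.0] * 12 + [0.0] * 12
print(f"{capacity_factor(day, 2000.0):.2%}")  # 25.00%
```

The same pattern extends to other ratio KPIs a dashboard might track, such as availability (hours online divided by hours in the period).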

Using structured dashboards, operators can predict hardware maintenance needs, detect performance outliers, and monitor how weather patterns affect energy generation. Consider the role of real-time data aggregation in system responsiveness: a targeted implementation of microservice telemetry aggregation patterns for real-time insights can significantly increase situational awareness. Professionals leading such implementations should weigh real-time analytics against batch processing deliberately. Real-time feeds suit operational monitoring and alerting, while batch processing can be the better choice for historical analysis and large data sets, where accuracy and reliability matter more than latency.
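The idea of aggregating telemetry and flagging performance outliers can be sketched in a few lines of Python. This is a minimal illustration, assuming each reading is a `(timestamp_seconds, asset_id, kw)` tuple: it groups readings into fixed (tumbling) windows, averages output per asset, and flags assets whose average falls well below the fleet median in that window. The 15-minute window and the 70% threshold are arbitrary placeholders, not recommended values.

```python
from collections import defaultdict
from statistics import median

WINDOW_SECONDS = 900          # 15-minute tumbling windows (assumed)
UNDERPERFORM_RATIO = 0.7      # flag assets below 70% of the fleet median (assumed)


def aggregate(readings):
    """Return {window_start: {asset_id: mean_kw}} from (ts, asset_id, kw) tuples."""
    sums = defaultdict(lambda: defaultdict(float))
    counts = defaultdict(lambda: defaultdict(int))
    for ts, asset, kw in readings:
        window = ts - ts % WINDOW_SECONDS  # start of the window this reading falls in
        sums[window][asset] += kw
        counts[window][asset] += 1
    return {
        w: {a: sums[w][a] / counts[w][a] for a in sums[w]}
        for w in sums
    }


def underperformers(window_means):
    """Assets whose mean output is below UNDERPERFORM_RATIO x the fleet median."""
    fleet_median = median(window_means.values())
    return sorted(
        a for a, kw in window_means.items() if kw < UNDERPERFORM_RATIO * fleet_median
    )


readings = [
    (0, "T1", 1500.0), (300, "T1", 1520.0),
    (0, "T2", 1480.0), (300, "T2", 1490.0),
    (0, "T3", 600.0),  (300, "T3", 640.0),
]
for window, means in aggregate(readings).items():
    print(window, underperformers(means))  # 0 ['T3']
```

A streaming engine would maintain these windows incrementally as events arrive; the batch-style function here only shows the grouping and comparison logic.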

With clear dashboards at their fingertips, decision-makers proactively assess and strategize their renewable energy initiatives, aligning infrastructure investments with actual performance insights. From executive stakeholders to technical managers, dashboards democratize data access, facilitating smarter operational, financial, and environmental decisions.

Harnessing the Power of Data Integration and Analytics

Data integration is the backbone of effective solar and wind dashboard systems. Renewable energy operations create immense quantities of real-time and historical data, calling for expert handling, pipeline automation, and robust analytical foundations. Seamlessly integrating hardware telemetry, weather data APIs, energy grid feeds, and compliance systems is a sophisticated data challenge best addressed with proven analytical and integration methodologies.

To keep dashboards accurate in real time, organizations often build integrations through customized APIs, frequently with help from consultants who specialize in a particular technology stack, much as Procore API consulting services focus on a single platform. Such integrations streamline data syncing and improve dashboard responsiveness, reducing the data latency that plagues traditional energy analytics models. Properly implemented data architectures should also embrace immutable storage to protect the data lifecycle; strong immutable data architectures and their implementation patterns keep data accurate and traceable over time.
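One lightweight way to get the traceability that immutable storage promises is an append-only log in which every record carries a hash of the previous one, so any later edit breaks the chain. The Python sketch below is an illustrative assumption, not a description of a specific product: `AppendOnlyLog` is a hypothetical class, and a production system would persist records to write-once storage rather than keep them in memory.

```python
import hashlib
import json


class AppendOnlyLog:
    """In-memory, hash-chained log: records can be added but not silently changed."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first record

    def __init__(self):
        self._records = []

    def append(self, payload):
        prev_hash = self._records[-1]["hash"] if self._records else self.GENESIS
        body = json.dumps(payload, sort_keys=True)
        digest = hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()
        self._records.append({"payload": body, "prev": prev_hash, "hash": digest})
        return digest

    def verify(self):
        """Recompute every hash; returns False if any record was altered."""
        prev_hash = self.GENESIS
        for rec in self._records:
            expected = hashlib.sha256((prev_hash + rec["payload"]).encode("utf-8")).hexdigest()
            if rec["prev"] != prev_hash or rec["hash"] != expected:
                return False
            prev_hash = rec["hash"]
        return True


log = AppendOnlyLog()
log.append({"asset": "inverter-07", "kw": 412.5, "ts": "2025-06-18T12:00Z"})
log.append({"asset": "inverter-07", "kw": 409.1, "ts": "2025-06-18T12:05Z"})
print(log.verify())  # True

# Simulate tampering with a stored reading: verification now fails.
log._records[0]["payload"] = json.dumps({"asset": "inverter-07", "kw": 999.0})
print(log.verify())  # False
```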

Successful analytics implementation depends on a deep understanding of SQL, database structures, and data flows inside analytics platforms. A practical grasp of foundations such as table selection and joins, explained in articles like demystifying the FROM clause in SQL, is invaluable to engineers writing the efficient, accurate queries that trustworthy dashboards depend on.
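To make the point about the FROM clause concrete, the query below joins hypothetical turbine telemetry with weather observations by site and hour, the kind of query a wind dashboard might sit on. It uses Python’s built-in `sqlite3` module with an in-memory database; the table and column names are invented for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE telemetry (site TEXT, hour TEXT, turbine TEXT, kwh REAL);
    CREATE TABLE weather   (site TEXT, hour TEXT, wind_ms REAL);
    INSERT INTO telemetry VALUES
        ('north', '2025-06-18T10', 'T1', 1200), ('north', '2025-06-18T10', 'T2', 1100),
        ('north', '2025-06-18T11', 'T1', 400),  ('north', '2025-06-18T11', 'T2', 350);
    INSERT INTO weather VALUES
        ('north', '2025-06-18T10', 11.2), ('north', '2025-06-18T11', 5.4);
""")

# FROM names the driving table; JOIN ... ON pairs each telemetry row with the
# weather observation recorded for the same site and hour.
rows = conn.execute("""
    SELECT t.hour, w.wind_ms, SUM(t.kwh) AS site_kwh
    FROM telemetry AS t
    JOIN weather AS w
      ON w.site = t.site AND w.hour = t.hour
    GROUP BY t.hour, w.wind_ms
    ORDER BY t.hour
""").fetchall()

for row in rows:
    print(row)
# ('2025-06-18T10', 11.2, 2300.0)
# ('2025-06-18T11', 5.4, 750.0)
```

An inner JOIN silently drops telemetry hours with no matching weather row; a LEFT JOIN would keep them with NULL wind speed, which is often the safer choice for dashboards that should expose data gaps.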

Protecting Data Security in a Renewable Energy Environment

As businesses increasingly rely on renewable energy analytics dashboards, ensuring data privacy and maintaining secure environments becomes paramount. Robust security and compliance methodologies must underpin every aspect of renewable analytics, reducing risk exposure from vulnerabilities or breaches. In light of stringent privacy regulations, analytics leadership must clearly understand and apply rigorous strategies surrounding data privacy and regulatory standards. To implement effective governance, consider exploring deeper insights available within our comprehensive resource, Data privacy regulations and their impact on analytics, to understand compliance requirements thoroughly.

Furthermore, organizations should use machine learning to identify sensitive or personally identifiable information automatically, following best practices like those detailed in automated data sensitivity classification using ML. Machine learning models can continuously scan incoming datasets and flag sensitive fields as they appear, supporting ongoing compliance and regulatory adherence. This matters because renewable energy plants generate large volumes of operational data streams that may contain security-sensitive or compliance-relevant parameters requiring continuous review.
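A full ML classifier is beyond a short example, but the sketch below shows the shape of the task with a rule-based stand-in: it scans sample values per column and flags columns whose values mostly match simple patterns for email addresses or phone numbers. This is deliberately a heuristic baseline, not machine learning; a trained model would replace or supplement the hypothetical `PATTERNS` table, and the 80% match threshold is an assumption.

```python
import re

# Hypothetical detection rules; a trained classifier would replace these.
PATTERNS = {
    "email": re.compile(r"^[\w.+-]+@[\w-]+\.[\w.-]+$"),
    "phone": re.compile(r"^\+?[\d\s().-]{7,}$"),
}
MATCH_SHARE = 0.8  # flag a column if >= 80% of non-empty samples match (assumed)


def flag_sensitive_columns(rows):
    """rows: list of dicts. Returns {column: label} for columns that look sensitive."""
    flagged = {}
    columns = {key for row in rows for key in row}
    for col in sorted(columns):
        values = [str(r[col]).strip() for r in rows if r.get(col) not in (None, "")]
        if not values:
            continue
        for label, pattern in PATTERNS.items():
            hits = sum(1 for v in values if pattern.match(v))
            if hits / len(values) >= MATCH_SHARE:
                flagged[col] = label
                break  # first matching label wins
    return flagged


sample = [
    {"site": "north", "kw": 412.5, "contact": "ops@example.com", "tel": "+1 512 555 0100"},
    {"site": "south", "kw": 390.0, "contact": "field@example.com", "tel": "(512) 555-0199"},
]
print(flag_sensitive_columns(sample))  # {'contact': 'email', 'tel': 'phone'}
```

Simple patterns like these produce false positives (a long numeric ID can look like a phone number), which is one reason the ML-based approach described above is attractive.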

Taking the additional step of clearly establishing roles, permissions, and privileges, such as those laid out within our guide to granting privileges and permissions in SQL, enables organizations to maintain clear accountability and security standards. Clear security practices empower organizations’ analytics teams and reinforce trust when collaborating and sharing actionable insights.

Optimizing Performance with Semantic Layer Implementation

Renewable energy businesses use semantic layers to bridge the gap between raw analytical data and understandable business insights. Integrating a semantic layer into renewable energy dashboards, covering essential KPIs like solar power efficiency, turbine functionality, downtime predictions, and output variation alerts, dramatically simplifies data comprehension and speeds up strategic response. To better understand the semantic layer’s impact, consider reviewing our resource “What is a semantic layer and why should you care?”, designed to clarify adoption decisions for leaders ready to improve their analytics clarity.

Through semantic layers, complicated technical terms and detailed datasets transform into straightforward, intuitive business metrics, facilitating clear communication between technical and non-technical team members. Semantic layers ensure consistent data interpretations across teams, significantly bolstering strategic alignment regarding renewable energy operations and investment decisions. Additionally, data field management within dashboards should include proactive identification and alerts for deprecated fields, guided by practices detailed within our resources such as data field deprecation signals and consumer notification, ensuring the long-term accuracy and usability of your dashboards.
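A semantic layer can be as simple as a registry that maps business-friendly metric names to the SQL that computes them, with a flag on deprecated fields so consumers are warned before a definition disappears. The Python sketch below assumes that design; `METRICS`, `build_query`, and the table and column names are all hypothetical, and dedicated semantic-layer tools offer far richer modeling.

```python
import sqlite3
import warnings

# Hypothetical metric registry: business name -> SQL expression + metadata.
# Expressions come from this trusted registry, never from user input.
METRICS = {
    "total_output_mwh": {"sql": "SUM(kwh) / 1000.0"},
    "avg_output_kwh": {"sql": "AVG(kwh)"},
    "legacy_output": {"sql": "SUM(kwh)", "deprecated": "use total_output_mwh"},
}


def build_query(metric_names, table="telemetry", group_by="site"):
    """Compile metric names into one SQL statement, warning on deprecated ones."""
    select_parts = [group_by]
    for name in metric_names:
        spec = METRICS[name]  # a KeyError for unknown metrics is intentional
        if "deprecated" in spec:
            warnings.warn(f"{name} is deprecated: {spec['deprecated']}", DeprecationWarning)
        select_parts.append(f"{spec['sql']} AS {name}")
    return (f"SELECT {', '.join(select_parts)} FROM {table} "
            f"GROUP BY {group_by} ORDER BY {group_by}")


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE telemetry (site TEXT, kwh REAL)")
conn.executemany("INSERT INTO telemetry VALUES (?, ?)",
                 [("north", 1200), ("north", 800), ("south", 500)])

result = conn.execute(build_query(["total_output_mwh", "avg_output_kwh"])).fetchall()
print(result)  # [('north', 2.0, 1000.0), ('south', 0.5, 500.0)]
```

Because every dashboard asks for `total_output_mwh` by name rather than rewriting `SUM(kwh) / 1000.0`, a definition change happens in one place, and the deprecation warning gives consumers notice before `legacy_output` is removed.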

Adopting semantic layer best practices helps stakeholders maintain confidence in analytics outputs, driving improved operational precision and strategic engagement. Simply put, semantic layers amplify renewable energy analytics capabilities by eliminating ambiguity, fostering shared understanding, and emphasizing accessible clarity.

Driving Futures in Renewable Energy through Intelligent Analytics

In today’s competitive renewable energy landscape, organizations cannot afford to leave their decision-making processes to chance or intuition. The future of solar and wind energy depends heavily on harnessing sophisticated analytics at scale. Solar and wind performance dashboards empower organizations with transparency, actionable insights, and intelligent predictions, democratizing knowledge and unlocking fresh growth opportunities. In doing so, renewable energy stakeholders pivot from being reactive observers to proactive innovators, leading positive change in sustainability and resource management.

Whether you’re strategizing the next upgrade cycle for wind farms, pinpointing locations for optimal solar installation, or supporting green corporate initiatives, embracing advanced analytics vastly increases your competitive edge. Renewable energy is destined to redefine global energy markets, and with intelligent dashboards guiding your decision-making, your organization can confidently pioneer sustainable innovation, economic success, and environmental responsibility.

Ready to unlock the transformative potential of renewable energy analytics within your organization? Contact us today to speak to our experts and discover how cutting-edge analytics empower industry-leading renewable energy performance.

Thank you for your support, follow DEV3LOPCOM, LLC on LinkedIn and YouTube.

by tyler garrett | Jun 18, 2025 | Data Visual

The global pandemic has dramatically underscored the critical need for advanced analytics and visualization tools in managing health crises. Decision-makers must rely on accurate forecasting, timely insights, and clear visualizations to act promptly and effectively. Pandemic preparedness analytics, powered by sophisticated data visualization models, offers powerful capabilities to predict disease spread dynamics and evaluate intervention effectiveness. Leveraging cutting-edge data warehousing and analytics enables organizations to implement proactive strategies, allocate resources efficiently, and ultimately save lives. As we increasingly encounter complex, real-world health challenges, integrating practical analytics solutions becomes non-negotiable. Let’s explore how visualization models are transforming pandemic preparedness and response, bridging gaps between data complexity and actionable insights.

Why Visualizing Disease Spread Matters

Visualizing disease spread is essential because it provides stakeholders clarity amid uncertainty. When facing rapidly escalating infections, incomplete information leads to reactive instead of proactive responses. Visualization models transform raw epidemiological data into understandable maps, heatmaps, temporal trends, and interactive dashboards—enhancing stakeholders’ decision-making abilities. Being equipped with such advanced visualization tools helps policymakers visualize contagion pathways, hotspots, population vulnerability, and resource deficits clearly, facilitating targeted actions and timely initiatives.

Disease visualizations also enable effective communication among data scientists, public officials, healthcare organizations, and the general public. With transparent, straightforward representations, data visualization reduces misinformation and confusion. It empowers communities and institutions to base decisions on scientific insights rather than conjecture and fear. Moreover, real-time visualization supports faster policy adjustments and better situational awareness. Properly implemented data visualization solutions connect critical data points to answer difficult questions promptly, such as how to measure and minimize strain on resources or how effective lockdown measures have been. For organizations seeking expert help harnessing their data effectively, consider exploring professional data warehousing consulting services in Austin, Texas.

Predictive Modeling: Forecasting Future Disease Trajectories

Predictive analytics modeling helps health professionals anticipate infection pathways, potential outbreak magnitudes, and geographic spread patterns before they become overwhelming crises. Using historical and real-time health datasets, statistical and machine learning models assess risk and duration and forecast future hotspots. Predictive visualizations communicate these complex statistical calculations effectively, helping public health leaders act swiftly and decisively. By including variables such as population movement, vaccination rates, climate impacts, and planned interventions, these models can anticipate likely epidemic waves and estimate transmission dynamics weeks ahead, although forecast uncertainty grows the further ahead they project.
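For readers who want to see what can sit underneath such forecasts, the classic SIR (susceptible, infected, recovered) compartmental model is a common starting point. The Python sketch below integrates it with a simple daily Euler step; the transmission rate `beta`, recovery rate `gamma`, and population figures are made-up parameters, and real forecasting models add the extra variables mentioned above (movement, vaccination, climate) and fit their parameters to observed data.

```python
def simulate_sir(population, initial_infected, beta, gamma, days):
    """Discrete-time SIR model with one Euler step per day.

    beta:  average transmissions per infected person per day (assumed)
    gamma: fraction of infected people who recover each day (assumed)
    Returns a list of (day, S, I, R) tuples.
    """
    s = population - initial_infected
    i = float(initial_infected)
    r = 0.0
    history = [(0, s, i, r)]
    for day in range(1, days + 1):
        new_infections = beta * s * i / population
        new_recoveries = gamma * i
        s -= new_infections
        i += new_infections - new_recoveries
        r += new_recoveries
        history.append((day, s, i, r))
    return history


# Hypothetical city of 1,000,000 with basic reproduction number R0 = beta / gamma = 2.5.
run = simulate_sir(1_000_000, 10, beta=0.25, gamma=0.1, days=180)
peak_day, _, peak_infected, _ = max(run, key=lambda row: row[2])
print(f"peak on day {peak_day} with about {peak_infected:,.0f} infected")
```

Plotting the `I` column over time gives the familiar epidemic curve; rerunning with a lower `beta` shows how an intervention flattens and delays the peak.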

With predictive modeling, healthcare authorities can optimize resource allocation, hospital capacity, vaccine distribution strategies, and targeted interventions, minimizing disruption while curbing infection rates. For instance, trend-based contour plots, such as those described in the article on contour plotting techniques for continuous variable domains, give stakeholders detailed visual clarity about affected geographic locations and projected case distributions. Proactive strategies thus become achievable realities rather than aspirational goals. Integrating visualization-driven predictive modeling into public health management strengthens preparedness, leading to earlier containment and reduced health repercussions.

Geospatial Analytics: Mapping Infection Clusters in Real-Time

Geospatial analytics draws on geographic data sources, such as GPS-based tracking, case data, and demographic vulnerability databases, to track epidemics spatially. With spatial analytics tools, epidemiologists can rapidly identify infection clusters, revealing hidden patterns and outbreak epicenters. Heat maps and real-time dashboards surface actionable insights by pinpointing concentrations of disease, timeline progressions, and emerging high-risk areas. This speed of analysis allows policymakers, hospitals, and emergency response teams to redirect resources swiftly to communities facing immediate threats and to prioritize intervention strategies effectively.
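A very simple version of cluster detection bins geocoded cases into a latitude/longitude grid and flags cells whose count stands out. The Python sketch below assumes point data as `(lat, lon)` pairs, a 0.1-degree cell size, and a fixed count threshold; all three are illustrative choices. Production geospatial tools typically use equal-area grids (degree cells shrink toward the poles), population-adjusted rates, and statistical cluster tests rather than a raw count.

```python
import math
from collections import Counter

CELL_DEG = 0.1        # grid cell size in degrees (assumed)
HOTSPOT_MIN = 3       # minimum cases for a cell to count as a hotspot (assumed)


def cell_of(lat, lon):
    """Return the integer (row, column) grid cell containing a point."""
    return (math.floor(lat / CELL_DEG), math.floor(lon / CELL_DEG))


def hotspots(cases):
    """cases: iterable of (lat, lon). Returns [(cell, count)] at or above threshold, busiest first."""
    counts = Counter(cell_of(lat, lon) for lat, lon in cases)
    return [(cell, n) for cell, n in counts.most_common() if n >= HOTSPOT_MIN]


cases = [(30.27, -97.74), (30.26, -97.75), (30.28, -97.71), (30.25, -97.73),
         (29.42, -98.49), (32.78, -96.80)]
print(hotspots(cases))
```

Each flagged cell can then be drawn as a shaded tile on a heat map, with the count or rate driving the color.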

Most importantly, geovisualizations empower users to drill into local data, identifying granular infection rate trends to promote targeted restrictions or redistribution of medical supplies. Tools that leverage strong underlying analytics infrastructure built on hexagonal architecture for data platforms offer flexibility and scalability needed to handle data-intensive geospatial analysis reliably and quickly. Robust spatial visualization dashboards embed historical progression data to understand past intervention outcomes, allowing stakeholders to learn from previous waves. The direct visualization of infection clusters proves indispensable for intervention deployment, significantly shortening response timeframes.

Real-time vs Batch Processing: Accelerating Pandemic Response Through Stream Analytics

Traditional batch processing techniques, while comfortable and widely practiced, can delay the insights a pandemic response needs. By contrast, real-time streaming analytics delivers near-instant insight into disease spread, enabling rapid mitigation that benefits public safety and resource optimization. Treating data as a continuous flow rather than a series of periodic batches allows near-immediate understanding of unfolding situations. For a deeper comparison of the two paradigms, see the article “Batch is comfortable, but stream is coming for your job”.

Real-time streaming enables immediate updates to dashboards, interactive time-series charts, and live alert mechanisms that make milestones, trends, and anomalies explicit. With up-to-the-minute visual analytics, healthcare strategists become more agile and can move to contain outbreaks sooner. Integrating real-time analytics helps policymakers act faster on early warning indicators, curb exposure risks, and improve overall emergency response, delivering decisive health benefits to populations at risk.
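A live alert mechanism of this kind can be approximated with a rolling window over a stream of daily case counts. The Python sketch below keeps a 7-day window in a `deque`, compares each new 7-day average with the one from a week earlier, and emits an alert when growth crosses a threshold. The window length and the 50% growth threshold are assumptions for illustration; a real stream processor would also need to handle late and corrected reports.

```python
from collections import deque

WINDOW = 7              # days in the rolling average (assumed)
GROWTH_ALERT = 1.5      # alert if the average grows by 50% week over week (assumed)


def growth_alerts(daily_counts):
    """Yield (day_index, current_avg, previous_avg) whenever growth crosses the threshold."""
    window = deque(maxlen=WINDOW)   # oldest day drops out automatically
    averages = []                   # averages[k] is the 7-day average ending on day k + WINDOW - 1
    for day, count in enumerate(daily_counts):
        window.append(count)
        if len(window) < WINDOW:
            continue
        avg = sum(window) / WINDOW
        averages.append(avg)
        if len(averages) > WINDOW:
            previous = averages[-1 - WINDOW]  # the average from exactly one week earlier
            if previous > 0 and avg / previous >= GROWTH_ALERT:
                yield day, avg, previous


counts = [100] * 14 + [150, 180, 210, 240, 270, 300, 330]
for day, current, previous in growth_alerts(counts):
    print(f"day {day}: 7-day avg {current:.0f} vs {previous:.0f} a week earlier")
```

Because it is a generator, `growth_alerts` can consume an unbounded feed one value at a time, which is the property that matters for streaming use.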

Tackling Data Challenges: Data Privacy, Storage, and Performance

Incorporating effective visualization modeling faces inherent challenges, including data skewness, computational storage bottlenecks, confidentiality worries, and parallel processing inefficiencies. Addressing these considerations is crucial to real-world deployment success. Safeguarding individual privacy while managing sensitive medical information in analytics pipelines requires stringent adherence to data privacy regulations, such as HIPAA and GDPR. Organizations must ensure all visualization analytics respect confidentiality while deriving accurate insights necessary for informed decision-making processes. Meanwhile, computationally demanding visualizations may benefit from harnessing advanced storage approaches—as outlined in insights about computational storage when processing at the storage layer makes sense.
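One common safeguard when publishing case counts is small-cell suppression: counts below a threshold are hidden so that individuals in small groups are harder to re-identify. The Python sketch below assumes counts keyed by region and a threshold of 11, which is only a placeholder since agencies set their own rules; suppression on its own does not make a dataset HIPAA- or GDPR-compliant.

```python
SUPPRESSION_THRESHOLD = 11  # placeholder; set according to the governing policy


def suppress_small_cells(counts, threshold=SUPPRESSION_THRESHOLD):
    """Replace counts in 1..threshold-1 with None so dashboards render them as 'suppressed'.

    Zero is left visible here; some policies suppress zeros as well.
    """
    return {
        key: (None if 0 < n < threshold else n)
        for key, n in counts.items()
    }


weekly_cases = {"county_a": 240, "county_b": 7, "county_c": 0, "county_d": 11}
print(suppress_small_cells(weekly_cases))
# {'county_a': 240, 'county_b': None, 'county_c': 0, 'county_d': 11}
```

Real suppression schemes usually add complementary suppression as well, hiding extra cells so that a suppressed value cannot be recovered by subtracting the visible cells from a published total.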

Data skewness, particularly prevalent in healthcare datasets due to inaccurate reporting or bias, can distort visualization outcomes. Mitigating these imbalances systematically requires proactive data skew detection and handling in distributed processing. Efficient analytics also hinge on robust parallel processing mechanisms like thread-local storage optimization for parallel data processing, ensuring timely analytic results without computational bottlenecks. Addressing these critical components fosters the smooth delivery of precise, actionable pandemic visualizations stakeholders trust to guide impactful interventions.
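In distributed processing, skew usually means a few partition keys carry a disproportionate share of the rows, so one worker ends up doing most of the work. The Python sketch below measures each key’s share of the records and, for keys above a threshold, appends a random “salt” suffix so their rows spread across several partitions. The 20% threshold, the 4 salt buckets, and the function names are assumptions; distributed engines such as Spark provide their own skew-handling options, and any aggregation over salted keys must be recombined by the original key afterwards.

```python
import random
from collections import Counter

SKEW_SHARE = 0.2     # a key holding more than 20% of rows counts as skewed (assumed)
SALT_BUCKETS = 4     # number of sub-keys a skewed key is split into (assumed)


def skewed_keys(keys, share=SKEW_SHARE):
    """Return the set of keys whose share of all rows exceeds `share`."""
    counts = Counter(keys)
    total = sum(counts.values())
    return {k for k, n in counts.items() if n / total > share}


def salt(records, hot_keys, seed=42):
    """Rewrite (key, value) pairs so each hot key becomes one of key#0 .. key#N-1."""
    rng = random.Random(seed)  # seeded for reproducible partition assignment
    salted = []
    for key, value in records:
        if key in hot_keys:
            key = f"{key}#{rng.randrange(SALT_BUCKETS)}"
        salted.append((key, value))
    return salted


records = [("metro", 1)] * 80 + [("rural_a", 1)] * 10 + [("rural_b", 1)] * 10
hot = skewed_keys(k for k, _ in records)
print(hot)  # {'metro'}
print(Counter(k for k, _ in salt(records, hot)))
```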

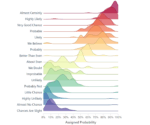

Designing Intuitive Visualizations for Pandemic Preparedness Dashboards

Ultimately, the efficacy of disease visualization models hinges upon intuitive, accessible, and actionable dashboards that effectively leverage preattentive visual processing in dashboard design. Incorporating these cognitive science principles ensures dashboards facilitate fast comprehension amidst crisis scenarios, enabling immediate decision-making. Design considerations include simplicity, clarity, and special emphasis on intuitive cues that quickly inform stakeholders of changing conditions. Pandemic dashboards should accommodate diverse user skills, from public officers and healthcare providers to general community members, clearly indicating actionable insights through color-coding, succinct labels, animation, and clear graphical anchors.

Effective dashboards incorporate interactive elements, allowing flexible customization according to varying stakeholder needs—basic overviews for policy presentations or deep dives with detailed drill-down capabilities for epidemiologists. Employing optimized visualization techniques that leverage preattentive features drives immediate interpretation, significantly reducing analysis paralysis during emergent situations. Ultimately, investing in thoughtful design significantly enhances pandemic preparedness, permitting robust responses that ensure communities remain resilient, informed, and safe.


by tyler garrett | Jun 18, 2025 | Data Visual

In the digital age of satellite-dependent communications, navigation, and global operations, space debris is an accelerating risk factor. From defunct satellites and rocket stages to remnants of past missions, millions of fragments are estimated to orbit Earth, moving fast enough to pose serious threats of collision and catastrophic damage. Sophisticated data analytics, advanced visualization technologies, and predictive algorithms empower decision-makers to address collision risks proactively and manage the orbital environment sustainably. In this guide, we delve into the critical aspects of orbital visualization and collision prediction, showing how strategic investments in analytics and data solutions can revolutionize space asset safety and resource optimization.

Understanding the Complexity of Space Debris

Space debris consists of human-made objects or fragments that orbit Earth but no longer serve a useful purpose. From worn-out satellites to pieces of launch vehicles left from past missions, this debris threatens operational satellites, spacecraft, and even crewed missions aboard the International Space Station. The sheer speed of these objects, up to roughly 17,500 mph, turns even tiny fragments into serious hazards capable of substantial damage upon collision.
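The speeds involved follow from basic orbital mechanics: for a circular orbit, speed is v = sqrt(mu / r), where mu is Earth’s standard gravitational parameter and r is the distance from Earth’s center. The Python sketch below evaluates this at a few low-Earth-orbit altitudes using standard values for mu and Earth’s equatorial radius; it ignores eccentricity and perturbations, so treat the results as approximations.

```python
import math

MU_EARTH_KM3_S2 = 398_600.4418   # Earth's standard gravitational parameter (km^3/s^2)
EARTH_RADIUS_KM = 6_378.137      # Earth's equatorial radius (km)
KM_S_TO_MPH = 2_236.936          # 1 km/s expressed in miles per hour


def circular_velocity_km_s(altitude_km):
    """Speed of a circular orbit at the given altitude: v = sqrt(mu / r)."""
    r = EARTH_RADIUS_KM + altitude_km
    return math.sqrt(MU_EARTH_KM3_S2 / r)


for altitude in (200, 400, 800):
    v = circular_velocity_km_s(altitude)
    print(f"{altitude:>4} km: {v:.2f} km/s, about {v * KM_S_TO_MPH:,.0f} mph")
```

Lower orbits are faster, and two objects on crossing orbits can meet at a relative speed well above either object’s own speed, which is why even centimeter-scale fragments are dangerous.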

Scientific estimates suggest there are currently over 500,000 debris pieces larger than one centimeter orbiting our planet, and millions of smaller fragments remain undetected but dangerous. Visualizing this debris in near real-time requires robust analytics infrastructure and data integration solutions that effectively consolidate diverse data streams. This scenario represents an exemplary use-case for technologies like advanced spatial analytics, ETL processes, and efficient data governance strategies as described in our detailed guide, “The Role of ETL in Data Integration and Data Management”.

By deepening comprehension of the intricate spatial distributions and velocities of debris, analysts and decision-makers gain crucial insights into orbit management. Comprehensive visualization helps identify clusters, anticipate potential collisions well beforehand, and enhance ongoing and future orbital missions’ safety—protecting both investments and human lives deployed in space.

Orbital Visualization Technology Explained

Orbital visualization acts as a window into the complex choreography taking place above Earth’s atmosphere. Advanced software tools utilize data harvested from ground and space-based tracking sensors, combining sophisticated analytics, predictive modeling, and cutting-edge visualization interfaces to vividly depict orbital spatial environments. These visualizations enable managers and engineers to operate with heightened awareness and strategic precision.

Effective visualization tools provide stakeholders with intuitive dashboards that clarify complex scenarios, offering interactive interfaces capable of real-time manipulation and analysis. Leveraging expert consulting solutions, like those we describe in our service offering on advanced Tableau consulting, can further streamline complex data into actionable intelligence. These tools visualize orbital parameters such as altitude, angle of inclination, debris density, and related risks vividly and clearly, facilitating immediate situation awareness.

Orbital visualization technology increasingly relies on SQL databases such as MySQL, which our practical tutorial on how to install MySQL on Mac walks through. These databases store massive volumes of orbital data efficiently, making visualization outcomes more precise and accessible. Stakeholders can run range-based queries easily with tools like the SQL BETWEEN operator, fully explained in our guide Mastering Range Filtering with the SQL BETWEEN Operator.
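As a small illustration of range filtering, the query below uses `BETWEEN` to pull objects whose altitude falls within a band. It runs against Python’s built-in `sqlite3` rather than MySQL so that it is self-contained, but `BETWEEN` is inclusive of both bounds in both systems. The `debris` table and its rows are invented for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE debris (object_id TEXT, altitude_km REAL, inclination_deg REAL)")
conn.executemany(
    "INSERT INTO debris VALUES (?, ?, ?)",
    [("D-001", 410.0, 51.6), ("D-002", 550.0, 53.0),
     ("D-003", 800.0, 98.7), ("D-004", 600.0, 97.4)],
)

# BETWEEN is inclusive on both ends, so the object at exactly 600 km is returned.
low, high = 400, 600
rows = conn.execute(
    "SELECT object_id, altitude_km FROM debris "
    "WHERE altitude_km BETWEEN ? AND ? ORDER BY altitude_km",
    (low, high),
).fetchall()
print(rows)  # [('D-001', 410.0), ('D-002', 550.0), ('D-004', 600.0)]
```

Passing the bounds as query parameters rather than formatting them into the SQL string keeps the query safe when the range comes from a dashboard filter.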

Predictive Analytics for Collision Avoidance

Preventing orbital collisions demands sophisticated analytics far beyond the capability of mere observational solutions. By implementing predictive analytics techniques, organizations can act proactively to minimize risk and prevent costly incidents. Modern collision prediction models fuse orbital tracking data, statistical analytics, and machine learning algorithms to forecast potential collision events days or even weeks in advance.

This capability rests on the quality and integrity of data gathered from tracking sensors and radar arrays globally—a process greatly enhanced through well-designed data pipelines and metadata management. Our informative article on Pipeline Registry Implementation: Managing Data Flow Metadata offers strategic insights for optimizing and maintaining these pipelines to ensure predictive efforts remain effective.

The predictive algorithms themselves rely on mathematical models that estimate positional uncertainties and convert them into collision probabilities. Advanced data analytics frameworks also factor in historical collision records, debris movements, orbital decay trends, and gravitational variables to refine these forecasts. By capitalizing on such insights through advanced analytics consulting, stakeholders can prioritize collision avoidance maneuvers and allocate available resources effectively while safeguarding mission-critical assets, significantly reducing both immediate risk and potential economic losses.
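To illustrate how positional uncertainty becomes a probability, a widely used simplification (the short-encounter approximation) projects the relative position of two objects onto a two-dimensional “encounter plane” and models it as a Gaussian centered on the predicted miss distance. The collision probability is the share of that distribution falling inside a circle whose radius is the objects’ combined size. The Python sketch below integrates this numerically on a grid, assuming uncorrelated errors along the two axes; the function name and inputs are illustrative, and operational tools handle correlated covariance, longer encounters, and data quality far more carefully.

```python
import math


def collision_probability(miss_x, miss_y, sigma_x, sigma_y, radius, steps=400):
    """Probability that the relative position falls within `radius` of the origin.

    The relative position in the encounter plane is modelled as a 2D Gaussian with
    mean (miss_x, miss_y) and independent standard deviations sigma_x, sigma_y.
    All distances share one unit (metres here). Uses midpoint-rule integration over
    a steps x steps grid covering the combined hard-body circle.
    """
    h = 2 * radius / steps
    norm = 1.0 / (2 * math.pi * sigma_x * sigma_y)
    total = 0.0
    for i in range(steps):
        x = -radius + (i + 0.5) * h
        for j in range(steps):
            y = -radius + (j + 0.5) * h
            if x * x + y * y <= radius * radius:  # only cells inside the circle count
                dx = (x - miss_x) / sigma_x
                dy = (y - miss_y) / sigma_y
                total += math.exp(-0.5 * (dx * dx + dy * dy))
    return total * norm * h * h


# Hypothetical conjunction: 20 m combined radius, 100 m uncertainty on each axis.
zero_miss = collision_probability(0.0, 0.0, 100.0, 100.0, 20.0)
near_miss = collision_probability(250.0, 0.0, 100.0, 100.0, 20.0)
closed_form = 1 - math.exp(-20.0**2 / (2 * 100.0**2))  # exact for zero miss, equal sigmas
print(f"{zero_miss:.5f} vs closed form {closed_form:.5f}; 250 m miss: {near_miss:.2e}")
```

The zero-miss, equal-sigma case has the closed form 1 - exp(-R^2 / (2 sigma^2)), which makes a convenient sanity check for the numerical integration.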

Implementing Responsible AI Governance in Space Operations

As artificial intelligence increasingly integrates into collision prediction and debris management, it’s paramount to address AI’s ethical implications through rigorous oversight and clear governance frameworks. Responsible AI governance frameworks encompass methods and processes ensuring models operate fairly, transparently, and accountably—particularly important when safeguarding valuable orbital infrastructure.

In collaboration with experienced data analytics advisors, organizations can deploy responsible AI frameworks efficiently. Interestingly, space operations closely mirror other high-stakes domains in terms of AI governance. Our thorough exploration in Responsible AI Governance Framework Implementation elucidates the foundational principles essential for regulated AI deployments, such as fairness monitoring algorithms, transparency assessment methods, and accountability practices.

Within orbit planning and operations, responsibly governed AI systems enhance analytical precision, reduce potential biases, and improve the reliability of collision alerts. Strategic implementation ensures algorithms remain comprehensible and auditable, reinforcing trust in predictive systems that directly influence multimillion-dollar decisions. Partnering with analytics consulting specialists helps organizations develop sophisticated AI governance solutions, mitigating algorithmic risk while driving data-driven orbital decision-making processes forward.

Data Efficiency and Optimization: Storage vs Compute Trade-offs

Given the vast scale of orbital data streaming from satellites and global radar installations, organizations inevitably confront critical decisions surrounding data management strategy: specifically, storage versus compute efficiency trade-offs. Optimizing between storage costs and computational power proves crucial in maintaining an economically sustainable debris tracking and prediction infrastructure.

As outlined in our comprehensive article on The Economics of Data Deduplication: Storage vs Compute Trade-offs, managing terabytes of orbital data without efficient deduplication and storage optimization rapidly becomes untenable. Sophisticated data management principles, including deduplication and proper ETL workflows, maximize available storage space while preserving necessary computational flexibility for analytics processing.

Implementing intelligent data deduplication methods ensures organizations avoid unnecessary data redundancy. When smart deduplication is coupled with optimal database architecture and effective management practices as emphasized by our expert consultants, stakeholders can drive substantial cost reduction without compromising analytics performance. Decision-makers in growing aerospace initiatives benefit from carefully balancing computing resources with smart storage strategies, ultimately enhancing operational efficiency and maximizing data-driven innovation opportunities.
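A minimal version of hash-based deduplication normalizes each tracking record, hashes the normalized form, and keeps only the first record per hash. The Python sketch below assumes records arrive as dictionaries from several sensors and treats two observations as duplicates when object ID, epoch, and position rounded to the nearest meter match; that matching rule, the rounding precision, and the field names are illustrative assumptions.

```python
import hashlib
import json


def record_fingerprint(record, precision=3):
    """Hash the fields that define 'the same observation' (assumed matching rule)."""
    key = {
        "object_id": record["object_id"].strip().upper(),
        "epoch": record["epoch"],
        "position_km": [round(c, precision) for c in record["position_km"]],
    }
    return hashlib.sha256(json.dumps(key, sort_keys=True).encode("utf-8")).hexdigest()


def deduplicate(records):
    """Keep the first record for each fingerprint, preserving arrival order."""
    seen = set()
    unique = []
    for rec in records:
        fp = record_fingerprint(rec)
        if fp not in seen:
            seen.add(fp)
            unique.append(rec)
    return unique


feed = [
    {"object_id": "d-1042", "epoch": "2025-06-18T00:00:00Z",
     "position_km": [6800.1234, 12.5, -3.25], "sensor": "radar-a"},
    {"object_id": "D-1042", "epoch": "2025-06-18T00:00:00Z",
     "position_km": [6800.1231, 12.5, -3.25], "sensor": "radar-b"},
    {"object_id": "D-2077", "epoch": "2025-06-18T00:00:00Z",
     "position_km": [7100.0, 0.0, 45.0], "sensor": "radar-a"},
]
print(len(deduplicate(feed)))  # 2
```

Storing only the fingerprint set, rather than comparing every new record against all stored records, is what keeps the compute cost of deduplication low; the trade-off is that rounding can occasionally split near-identical values that straddle a rounding boundary.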

The Future of Safe Orbital Management

Moving forward, sustained advancements in analytics technology will continue to shape how debris and maneuvering risk are managed. Increasingly intelligent algorithms, responsive data integration solutions, real-time analytics processing, and intuitive visualization dashboards will redefine standards for safe orbital practice, placing the industry at the forefront of technological innovation.

Adopting proactive collision prediction approaches using cutting-edge visualization technology and smart data management strategies directly addresses core operational risks that challenge satellites, spacecraft, and global space resource utilization. Beyond immediate asset protection, data-driven orbital management solutions help organizations fulfill accountability and sustainability obligations, preserving long-term utilization of invaluable orbital infrastructure.

Strategic investment in knowledge transfer through expertly tailored analytical consulting engagements ensures stakeholders maintain competitive efficiency across their orbit management initiatives. Leveraging expert advice from industry-leading data analytics and visualization specialists translates investments into actionable insights—unlocking safer, smarter, and continually innovative orbit management practices.

Building analytics capability is the first critical step toward long-term orbital sustainability, protecting the value of current and future space assets as orbital environments grow increasingly crowded.

Interested in harnessing analytics innovation for your organization’s strategic needs? Learn how our experienced team delivers solutions to your toughest data challenges with our Advanced Tableau Consulting Services.


by tyler garrett | Jun 18, 2025 | Data Visual

Today’s forward-thinking companies have embraced sustainable practices as a cornerstone of their growth and brand identity. But sustainability isn’t simply about setting goals—it’s about effectively measuring and communicating the impact of your initiatives. With carbon emissions more critical and scrutinized than ever, organizations need actionable insights and clear visuals to showcase their environmental impact transparently. Here, analytical tools become crucial in simplifying complex sustainability data for decision-makers. In this article, we’ll explore the role of data analytics and visualization in corporate sustainability, and specifically how carbon footprint visualization empowers businesses to drive impactful sustainability strategies.

The Importance of Carbon Footprint Visualization for Enterprise Sustainability

As businesses face increasing pressure from regulators, consumers, and investors to lower their carbon emissions, there is a renewed emphasis on transparency and actionable insights. Understanding environmental impact through raw data and lengthy reports is difficult, and such material rarely resonates across stakeholder groups. Effective carbon footprint visualization turns these intricacies into comprehensive, easy-to-understand visuals, bringing clarity to otherwise complex datasets and addressing a common pain point for decision-makers: extracting actionable insights from sustainability data.

The ability to visualize carbon data inherently equips you with the insights required to make informed, strategic decisions. With advanced visualization techniques—such as implementing zoom-to-details in multi-resolution visualizations—leaders can explore granular sustainability metrics across departments, locations, or specific production processes with ease. Visualization not only fosters internal accountability but also amplifies credibility externally, helping your organization communicate your sustainability initiatives clearly to partners, clients, and investors.

Visualization also helps enterprises track progress toward sustainability goals, identify opportunities for improvement, and take measured steps to reduce emissions. For example, with interactive dashboards and scenario simulations, organizations can explore hypothetical changes and their potential impact before committing to them, leading to better-informed and more confident decisions.
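Underneath most carbon footprint figures is a simple calculation: activity data (fuel burned, electricity used) multiplied by an emission factor, summed across sources. The Python sketch below applies that approach and then runs a what-if scenario of the kind described above. The activity quantities and emission factors are hypothetical placeholders; real inventories use published factors appropriate to the region and reporting year.

```python
# Hypothetical emission factors, in kg CO2e per unit of activity.
EMISSION_FACTORS = {
    "grid_electricity_kwh": 0.4,
    "diesel_litre": 2.7,
    "natural_gas_m3": 1.9,
}


def footprint_kg(activity):
    """Sum activity quantity x emission factor across all sources (kg CO2e)."""
    return sum(qty * EMISSION_FACTORS[source] for source, qty in activity.items())


def scenario(activity, changes):
    """Return a copy of `activity` with multiplicative changes applied per source."""
    return {source: qty * changes.get(source, 1.0) for source, qty in activity.items()}


baseline = {"grid_electricity_kwh": 500_000, "diesel_litre": 20_000, "natural_gas_m3": 30_000}
# What if on-site solar cut grid purchases by 30% and route optimization halved diesel use?
what_if = scenario(baseline, {"grid_electricity_kwh": 0.7, "diesel_litre": 0.5})

before, after = footprint_kg(baseline), footprint_kg(what_if)
print(f"baseline {before / 1000:.1f} t, scenario {after / 1000:.1f} t, change {after / before - 1:.1%}")
```

Breaking `footprint_kg` down by source, site, or department instead of summing everything is what lets a dashboard support the drill-down views mentioned earlier.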

Deploying Advanced Analytics to Maximize Sustainability Insights

Effectively leveraging corporate sustainability analytics starts with accurate data acquisition, collection, aggregation, and enrichment. To achieve this, enterprises should implement robust master data survivorship rules, which determine which source’s value is kept when records for the same entity conflict, so that data integrity and consistency hold at scale. Building your analytics practice on high-quality data is essential for delivering meaningful sustainability insights through visualization tools.
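A survivorship rule can be sketched in a few lines. This minimal example assumes one simple policy, and the source names and priority ranking are hypothetical. For each attribute it keeps the non-null value from the most trusted source. When two sources are equally trusted, the most recently updated value wins.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical source-trust ranking: lower number = more trusted.
SOURCE_PRIORITY = {"erp": 0, "facilities_db": 1, "spreadsheet": 2}


@dataclass
class FacilityRecord:
    source: str
    updated: date
    attributes: dict


def survive(records):
    """Build a golden record: for each attribute, keep the non-null value from the
    most trusted source, breaking ties by the most recent update."""
    ranked = sorted(
        records,
        key=lambda r: (SOURCE_PRIORITY.get(r.source, 99), -r.updated.toordinal()),
    )
    golden = {}
    for record in ranked:
        for name, value in record.attributes.items():
            if value is not None and name not in golden:
                golden[name] = value
    return golden


records = [
    FacilityRecord("spreadsheet", date(2025, 5, 1), {"floor_area_m2": 1200, "grid_region": "ERCOT"}),
    FacilityRecord("erp", date(2024, 11, 3), {"floor_area_m2": 1150, "grid_region": None}),
]
print(survive(records))
```

Here the ERP value for floor area survives because the ERP source is ranked as more trusted. The grid region falls back to the spreadsheet because the ERP value is missing.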

Advanced analytics techniques help businesses uncover correlations between operations, emissions levels, energy consumption, and activities across supply chains. With predictive modeling and scenario analysis, leaders can take a proactive approach: forecasting emissions trajectories, pinpointing risks, and devising mitigation strategies before problems materialize. Cloud analytics platforms such as Microsoft Azure can streamline these data solutions by combining scalable cloud infrastructure with built-in AI capabilities. Explore how expert Azure consulting services can support your corporate sustainability analytics roadmap and deliver stronger insights faster.

The combination of sophisticated analytics and intuitive visualizations gives your organization concise, actionable knowledge. Data classification methods, such as robust user-driven data classification implementations, establish accountability and clarity in sustainability data governance. They help keep internal and external reporting aligned with your corporate sustainability goals and standards.

Integrating Carbon Footprint Visualization into Corporate Strategy

Carbon footprint visualization isn’t only a tool for after-the-fact reporting; it’s integral to long-term corporate strategic development. Successful integration begins when sustainability visualization is embedded in executive-level decision-making processes. Integrating these visualizations into your business intelligence environment keeps sustainability part of strategic conversations and planning discussions. Senior leaders can then see not only overall emissions impacts but also detailed, predictive analyses of future sustainability pathways.

Visualizations that combine historical emissions data with projections and targets enable robust strategic comparisons, such as year-over-year emissions performance, departmental carbon intensity, or sustainability investments versus outcomes. Performance matters as well. Vectorized query processing, which operates on batches of column values rather than one row at a time, can significantly accelerate deep analytics pipelines. This lets executives interact with sustainability data quickly, which is essential for strategic-level decision-making.
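The comparisons named above rest on simple arithmetic. The sketch below computes year-over-year change and carbon intensity per department. It assumes revenue as the intensity denominator, which is one common choice; others use output units or headcount. The department figures are invented. The sketch does not demonstrate vectorized query processing, which is an optimization inside the query engine.

```python
def yoy_change_pct(current, previous):
    """Year-over-year change in percent; negative means emissions fell."""
    return (current - previous) / previous * 100


def carbon_intensity(emissions_t, revenue_musd):
    """Emissions per million USD of revenue (one common intensity denominator)."""
    return emissions_t / revenue_musd


# Illustrative figures: (emissions 2023 tCO2e, emissions 2024 tCO2e, revenue 2024 in $M)
departments = {
    "manufacturing": (5200.0, 4940.0, 130.0),
    "logistics": (1800.0, 1890.0, 45.0),
}

for name, (prev, curr, rev) in departments.items():
    print(f"{name}: {yoy_change_pct(curr, prev):+.1f}% YoY, "
          f"{carbon_intensity(curr, rev):.1f} tCO2e/$M")
```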

For sustainability strategies to succeed, data visualization tools must reach multiple levels of operations and decision-making. Strategic dashboards fully integrated with existing analytical tools and workflows strengthen the organization’s sustainability culture and clarify carbon impacts. They also create data-driven accountability for tracking and achieving sustainability commitments.

Enhancing User Experience and Decision-Making Through Advanced Visualization Techniques

At its core, impactful carbon footprint visualization is a user-centric pursuit. Decision-makers often face overwhelming amounts of information, so visualizations should follow clear design principles that enable quick comprehension without sacrificing detail. This is why thoughtful UI/UX practices matter, such as designing visualizations that account for cognitive load in complex data displays. Visual clarity helps decision-makers grasp insights and act on sustainability results quickly.

Advanced visualization approaches such as multi-dimensional views, interactive data exploration, and spatial-temporal mapping support intuitive understanding and engagement. Consider sophisticated GIS methods and spatio-temporal indexing structures for location intelligence. They help teams analyze geographically dispersed emission impacts, track environmental performance over time, and pinpoint sustainability hotspots efficiently.
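Hotspot detection can be sketched with a very simple spatio-temporal index. The example below buckets each reading into a fixed latitude/longitude grid cell plus a year-month key. It then sums emissions per bucket and returns the largest totals. The fixed grid is a simplified stand-in for production spatial indexes such as geohashes or R-trees. The cell size and site readings are illustrative assumptions.

```python
import math
from collections import defaultdict
from datetime import date


def cell_key(lat, lon, when, cell_deg=1.0):
    """Map a point to a (lat cell, lon cell, (year, month)) bucket.

    A fixed lat/lon grid is a simplified stand-in for real spatial indexes;
    cell_deg is an arbitrary illustrative cell size in degrees.
    """
    return (math.floor(lat / cell_deg), math.floor(lon / cell_deg), (when.year, when.month))


def hotspots(readings, top_n=3, cell_deg=1.0):
    """Sum emissions per spatio-temporal bucket and return the largest buckets."""
    totals = defaultdict(float)
    for lat, lon, when, tco2e in readings:
        totals[cell_key(lat, lon, when, cell_deg)] += tco2e
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)[:top_n]


# (lat, lon, date, tCO2e): illustrative site-level readings
readings = [
    (30.27, -97.74, date(2025, 3, 4), 12.0),
    (30.61, -97.10, date(2025, 3, 19), 8.5),
    (29.76, -95.37, date(2025, 3, 7), 4.0),
    (30.27, -97.74, date(2025, 4, 2), 11.0),
]
print(hotspots(readings, top_n=2))
```

Because the bucket key includes the month, the same cell can be compared across time. That comparison is the spatio-temporal view the paragraph above describes.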

Effective user experiences tend to speed adoption across the organization and increase executives’ willingness to engage deeply with sustainability strategies. Over time, this accelerates progress toward defined sustainability goals. Interactive visualizations that are straightforward, immersive, and easy to navigate encourage a culture of transparency and support informed decision-making at every organizational level.

Securing Sustainability Data through Best-In-Class Governance Practices

Sustainability data is a sensitive and critical corporate asset. As enterprises scale their sustainability analytics efforts, including expansive carbon footprint visualizations, proper data governance practices become essential. Comprehensive data security measures, such as time-limited access control for data assets, help maintain data confidentiality and compliance in stringent regulatory environments.
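Time-limited access can be illustrated with a minimal in-memory sketch. Every grant carries an expiry, and access is denied by default once no unexpired grant matches. The user, dataset name, and 48-hour window are hypothetical. A production system would enforce this in the data platform’s own access layer, not in application code.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AccessGrant:
    user: str
    dataset: str
    expires_at: datetime


def grant(user, dataset, hours, now=None):
    """Issue a grant that lapses automatically after `hours`."""
    if now is None:
        now = datetime.now(timezone.utc)
    return AccessGrant(user, dataset, now + timedelta(hours=hours))


def can_read(grants, user, dataset, now=None):
    """Allow access only while a matching, unexpired grant exists (deny by default)."""
    if now is None:
        now = datetime.now(timezone.utc)
    return any(g.user == user and g.dataset == dataset and now < g.expires_at for g in grants)


# Hypothetical auditor receives 48 hours of access to a sensitive emissions dataset.
t0 = datetime(2025, 6, 18, 9, 0, tzinfo=timezone.utc)
grants = [grant("auditor@example.com", "scope3_supplier_emissions", hours=48, now=t0)]

print(can_read(grants, "auditor@example.com", "scope3_supplier_emissions", now=t0 + timedelta(hours=1)))
print(can_read(grants, "auditor@example.com", "scope3_supplier_emissions", now=t0 + timedelta(days=3)))
```

Expiring grants remove the need to remember to revoke access manually. This is the main reason time-limited access suits short-lived needs such as audits.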

Improperly governed sustainability data poses reputational, regulatory, operational, and financial risks, all of which strong data governance oversight can substantially reduce. Leaders must ensure governance standards cover both the accuracy of carbon footprint data and the security of sensitive emissions data. Rigorous security frameworks and robust data asset controls give organizations peace of mind and demonstrate reliability and transparency to key stakeholders.

Additionally, AI-assisted practices such as detailed AI code reviews can improve your sustainability system’s reliability, accuracy, and maintainability. Proactively adopting rigorous data governance measures safeguards the integrity of your sustainability analytics, protects valuable IP, and supports compliance. The result is credible, trusted insight to guide sustainable corporate initiatives.

Conclusion: Visualize, Analyze, and Act Sustainably for the Future

Today’s enterprise decision-makers stand at a pivotal juncture, one at which sustainability commitments must evolve into action and measurable impact. Visualization of corporate carbon footprints has grown beyond reporting requirements; it is now a critical strategic and analytical tool that informs, improves, and accelerates transformative change toward sustainability. Equipped with advanced analytics solutions, strong visualization techniques, powerful governance practices, and expert guidance, organizations are well positioned to pursue their sustainability journeys confidently and purposefully.

The intersection of data analytics, innovative visualization, and sophisticated governance helps make corporate sustainability actionable, accessible, and meaningful across organizational layers. Businesses that invest thoughtfully can not only achieve their sustainability objectives but also gain competitive advantage, a stronger brand reputation, and lasting stakeholder trust. It’s time for your enterprise to use intelligent analytics and creative visualizations to build an informed, transparent, and sustainable future.

Thank you for your support, follow DEV3LOPCOM, LLC on LinkedIn and YouTube.

by tyler garrett | Jun 18, 2025 | Data Visual

In today’s increasingly complex technology landscape, organizations rely heavily on multi-cloud architectures to stay agile, scalable, and competitive. With workloads spread across cloud providers such as AWS, Azure, and Google Cloud Platform, tracking expenses and optimizing spend has become both crucial and daunting. Without a clear understanding of multi-cloud costs, companies risk overpaying, misallocating resources, and losing competitive advantage. Multi-cloud cost visualization offers a compelling, actionable approach. By presenting cloud cost data in a cohesive, easily understandable way, businesses can uncover hidden insights, identify concrete optimizations, and achieve greater operational efficiency. In this guide, we share insights from our experience at Dev3lop, a trusted partner in innovative data visualization consulting services. We’ll walk you through the importance, challenges, and best practices of multi-cloud cost visualization, helping you use analytics to stay ahead of the curve.

Why Multi-Cloud Cost Visualization Matters Now More Than Ever

Enterprises today aren’t limited to a single cloud provider. Multi-cloud environments let businesses optimize deployments for cost-effectiveness, geographic proximity, availability, redundancy, and more. However, this flexibility adds complexity and makes expenses harder to track. Each provider has its own pricing structures, billing cycles, and cost metrics. Without a clear visualization platform, organizations risk losing critical budgetary control and missing opportunities to save.

At Dev3lop, our experience with data architecture patterns for microservices has shown that accurately aggregating cost data from multiple providers requires careful planning and insightful visualization. Customized dashboards not only illustrate current spend clearly but can also project future costs, giving management and budget owners the confidence to make informed decisions promptly.
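Projecting future costs can start very simply. The sketch below uses a naive run-rate method: it takes average daily spend to date and multiplies by the number of days in the month. It then compares the combined projection against a budget. The spend figures and budget are invented. Billing exports often lag by a day or more, so a real projection should account for data freshness.

```python
import calendar
from datetime import date


def project_month_end(spend_to_date, as_of):
    """Naive run-rate projection: average daily spend so far x days in the month."""
    days_in_month = calendar.monthrange(as_of.year, as_of.month)[1]
    daily_rate = spend_to_date / as_of.day
    return daily_rate * days_in_month


mtd_usd = {"aws": 18_600.0, "azure": 9_300.0, "gcp": 4_650.0}  # illustrative month-to-date spend
as_of = date(2025, 6, 15)
projected = {provider: project_month_end(spend, as_of) for provider, spend in mtd_usd.items()}

budget_usd = 60_000.0  # hypothetical monthly budget
over_budget = sum(projected.values()) > budget_usd
print(projected, over_budget)
```

A run rate ignores seasonality and one-off charges. Its value is that it is transparent, so budget owners can see exactly why a dashboard flags an overrun.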

Further, businesses are seeking stronger regulatory compliance and fairness in their data governance frameworks. Cost visualization complements purpose limitation enforcement in data usage by showing whether spend maps to approved purposes and business functions. Multi-cloud visualization isn’t a luxury; it’s a strategic necessity for enterprises pursuing cost-conscious growth in competitive industries.
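One way to connect spend to approved purposes is through resource tags. This minimal sketch assumes each cost line carries a `purpose` tag, which is a hypothetical tagging convention, and checks it against an approved list. Untagged or unapproved spend is flagged for review. The resources and costs are illustrative.

```python
# Hypothetical list of approved business purposes.
APPROVED_PURPOSES = {"customer-analytics", "billing-platform", "ml-training"}


def audit_purposes(cost_lines):
    """Split cost lines into those tagged with an approved purpose and those that are not."""
    approved, flagged = [], []
    for line in cost_lines:
        purpose = line.get("tags", {}).get("purpose")  # None if the tag is missing
        (approved if purpose in APPROVED_PURPOSES else flagged).append(line)
    return approved, flagged


lines = [
    {"provider": "aws", "resource": "emr-cluster-7", "cost": 410.0, "tags": {"purpose": "customer-analytics"}},
    {"provider": "gcp", "resource": "vm-sandbox-2", "cost": 95.0, "tags": {}},
    {"provider": "azure", "resource": "sql-db-3", "cost": 220.0, "tags": {"purpose": "marketing-experiment"}},
]
approved, flagged = audit_purposes(lines)
print([l["resource"] for l in flagged], sum(l["cost"] for l in flagged))
```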

The Core Challenges Facing Multi-Cloud Cost Management

Diverse Pricing Models and Complex Billing Systems

Cloud cost management is challenging even with a single provider, and with multiple providers the complexity grows quickly. Each platform, whether AWS, Azure, GCP, or others, uses distinct pricing models and billing metrics. Purchasing options include pay-as-you-go (on-demand), reserved instances, spot instances, or hybrid approaches. As these options accumulate, the complexity breeds confusion, oversights, and costly inefficiencies.
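The trade-off between on-demand and reserved pricing comes down to a short calculation. This sketch assumes a commitment billed for every hour at a discounted effective rate, while on-demand is billed only for hours used. It also assumes the common 730-hour month (365 × 24 / 12). The commitment is cheaper once utilization exceeds the ratio of the two rates. The rates are illustrative, not real list prices.

```python
HOURS_PER_MONTH = 730  # 365 * 24 / 12, a common monthly-hours approximation


def monthly_cost_on_demand(rate_per_hour, utilization):
    """Pay only for the fraction of hours the instance actually runs."""
    return rate_per_hour * HOURS_PER_MONTH * utilization


def monthly_cost_reserved(effective_rate_per_hour):
    """A commitment is billed for every hour, used or not."""
    return effective_rate_per_hour * HOURS_PER_MONTH


def break_even_utilization(on_demand_rate, reserved_rate):
    """Utilization above which the commitment is cheaper than on-demand."""
    return reserved_rate / on_demand_rate


on_demand, reserved = 0.20, 0.13  # illustrative USD/hour
print(f"break-even utilization: {break_even_utilization(on_demand, reserved):.0%}")
for u in (0.4, 0.9):
    print(u, round(monthly_cost_on_demand(on_demand, u), 2), round(monthly_cost_reserved(reserved), 2))
```

Visualizing each workload’s actual utilization against its break-even point makes commitment decisions easier to defend across providers.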

Without accurate, detailed visualizations, business leaders risk overlooking additive costs from seemingly minor deployments, such as commercially licensed database options or enhanced networking capabilities. To tackle these complexities efficiently, analytical visualizations crafted by domain experts, such as those offered by our firm, must present this complicated financial data clearly enough to enable decisive action.

Lack of Visibility Into Resource Utilization

Lack of clear insight into cloud resource usage directly undermines cost efficiency. Organizations often overspend, unaware that their workloads use significantly fewer resources than were provisioned. Inefficiencies such as idle virtual machines, oversized instances, and orphaned storage accounts become almost invisible without proper cost visualization dashboards. At the intersection of efficiency and analytics, Dev3lop understands the crucial role that sophisticated analytics play. Using techniques such as density contour visualization for multivariate distributions, data visualization experts can reveal hidden cost-saving opportunities across your cloud architecture.
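Before any advanced visualization, a simple utilization rule often surfaces the most obvious waste. The sketch below flags instances whose average CPU stays below a threshold and totals their monthly cost as potential savings. It is a simpler technique than the density contour approach mentioned above. The 5% threshold and the sample metrics are illustrative assumptions, not a provider recommendation. Orphaned storage would need a separate check, such as for unattached volumes.

```python
from statistics import mean


def find_underutilized(resources, cpu_threshold_pct=5.0):
    """Flag resources whose average CPU is below the threshold and sum their cost.

    The default threshold is an illustrative rule of thumb only.
    """
    flagged = [r for r in resources if mean(r["cpu_pct_samples"]) < cpu_threshold_pct]
    return flagged, sum(r["monthly_cost"] for r in flagged)


resources = [  # illustrative metrics gathered from several providers
    {"id": "aws:i-app-01", "cpu_pct_samples": [42.0, 55.0, 38.0], "monthly_cost": 140.0},
    {"id": "azure:vm-batch-02", "cpu_pct_samples": [1.2, 0.8, 2.0], "monthly_cost": 310.0},
    {"id": "gcp:vm-legacy-03", "cpu_pct_samples": [3.5, 4.1, 2.9], "monthly_cost": 95.0},
]
idle, potential_savings = find_underutilized(resources)
print([r["id"] for r in idle], potential_savings)
```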

Best Practices in Multi-Cloud Cost Visualization

Implementing an Aggregated View as a Single Source of Truth

Establishing an aggregated reporting system across cloud platforms provides a single pane of glass for visualizing expenses dynamically. This centralization is the foundation of a streamlined cost visualization strategy. At Dev3lop, we emphasize the importance of single source of truth implementations for critical data entities. With unified reporting, stakeholders gain consistent insight into cost behaviors and patterns over time, supporting operational efficiency, stronger governance, and long-term strategic planning.
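The core of a single source of truth for cloud costs is normalization. Each provider’s billing rows are mapped into one shared schema before aggregation. In this sketch, the field names in `FIELD_MAP` are hypothetical placeholders; real export schemas differ and should be verified against each provider’s documentation. The sketch also assumes all costs are already in USD.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class CostRecord:
    """Provider-neutral cost line used as the single source of truth."""
    provider: str
    service: str
    usage_date: date
    cost_usd: float


# Hypothetical field mappings; real billing export schemas differ and must be checked.
FIELD_MAP = {
    "aws": {"service": "product_code", "date": "usage_start", "cost": "unblended_cost"},
    "azure": {"service": "meter_category", "date": "date", "cost": "cost_in_usd"},
    "gcp": {"service": "service_description", "date": "usage_start_time", "cost": "cost"},
}


def normalize(provider, row):
    """Translate one provider-specific row into a CostRecord (assumes USD amounts)."""
    m = FIELD_MAP[provider]
    usage_day = date.fromisoformat(row[m["date"]][:10])  # keep the YYYY-MM-DD part
    return CostRecord(provider, row[m["service"]], usage_day, float(row[m["cost"]]))


raw = [
    ("aws", {"product_code": "AmazonEC2", "usage_start": "2025-06-01T00:00:00Z", "unblended_cost": "12.50"}),
    ("azure", {"meter_category": "Virtual Machines", "date": "2025-06-01", "cost_in_usd": "8.25"}),
    ("gcp", {"service_description": "Compute Engine", "usage_start_time": "2025-06-01T00:00:00", "cost": "4.00"}),
]
records = [normalize(p, r) for p, r in raw]

daily_total = defaultdict(float)
for rec in records:
    daily_total[rec.usage_date] += rec.cost_usd
print(dict(daily_total))
```

Once every row shares one shape, the same dashboards and queries work across all providers. Adding a provider then means adding one mapping, not a new report.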

Leveraging Real-Time Analytics and Customized Visualization Dashboards

In-depth data analytics and interactive visualizations enable faster, smarter decisions. Real-time visual analytics not only chart immediate cost behavior but also surface trends and anomalies as they emerge. Our expertise with leading BI and analytical tools such as Tableau allows us to build customized, intuitive dashboards tailored to stakeholder requirements. This supports more strategic and timely optimizations that reduce unnecessary spend.
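Anomaly surfacing can be sketched independently of any BI tool. The example below applies a trailing-window z-score: a day is flagged when its cost deviates from the mean of the previous `window` days by more than `z_threshold` standard deviations. The window length, threshold, and cost series are illustrative choices. A dashboard would then highlight the flagged days.

```python
from statistics import mean, stdev


def flag_anomalies(daily_costs, window=7, z_threshold=3.0):
    """Return (index, cost, z) for days that deviate strongly from the trailing window.

    `window` must be at least 2 because stdev needs two data points.
    """
    anomalies = []
    for i in range(window, len(daily_costs)):
        history = daily_costs[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            continue  # flat history: z-score is undefined
        z = (daily_costs[i] - mu) / sigma
        if abs(z) > z_threshold:
            anomalies.append((i, daily_costs[i], z))
    return anomalies


costs = [100, 104, 98, 101, 99, 103, 97, 102, 250, 101]  # illustrative daily USD
print(flag_anomalies(costs))
```

One design caveat: once a spike enters the trailing window, it inflates the standard deviation for the following days. Production systems often use robust statistics, such as the median and median absolute deviation, or exclude flagged days from the baseline.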

Moreover, by using interactive features to drill down into and aggregate data (strategies discussed in our blog about group-by aggregating and grouping data in SQL), organizations can analyze individual applications, regions, provider selections, and project budgets in detail. This helps management make impactful budgeting decisions.
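Drill-down and roll-up map directly onto SQL `GROUP BY`. The sketch below uses Python’s built-in `sqlite3` module with an in-memory database. It sums spend by project and provider, with the largest totals first. The `costs` table and its rows are hypothetical. Adding columns to the `GROUP BY` drills down, and removing them rolls up.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE costs (provider TEXT, region TEXT, project TEXT, cost_usd REAL)")
conn.executemany(
    "INSERT INTO costs VALUES (?, ?, ?, ?)",
    [  # illustrative cost lines
        ("aws", "us-east-1", "checkout", 420.0),
        ("aws", "us-east-1", "search", 180.0),
        ("azure", "eastus", "checkout", 260.0),
        ("gcp", "us-central1", "search", 140.0),
    ],
)

# Roll spend up by project and provider, largest first.
rows = conn.execute(
    """
    SELECT project, provider, SUM(cost_usd) AS total_usd
    FROM costs
    GROUP BY project, provider
    ORDER BY total_usd DESC
    """
).fetchall()
conn.close()

for row in rows:
    print(row)
```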

Custom Vs. Off-The-Shelf Visualization Solutions: Making the Right Call

Organizations often wonder whether an off-the-shelf visualization tool is the right approach or whether a customized solution aligned to their business needs is necessary. Pre-packaged cloud visualization services seem advantageous initially, offering speedy deployment and baseline functionality. However, these solutions often fail to address the unique intricacies and detailed cost calculations of multi-cloud environments.

In contrast, fully customized visualization solutions can be fitted precisely to an organization’s specific needs. At Dev3lop, we regularly help clients analyze custom vs. off-the-shelf applications. Our recommendation typically balances cost-effectiveness and customization. It enables tailored visualizations that incorporate exact tracking needs, usability, security compliance, and analytic functionality not available in generic visualization packages. This tailored approach can yield better cost-saving insights without sacrificing usability or resource efficiency.

Leveraging Skilled Resources and Innovation to Stay Ahead

Beyond visualization alone, multi-cloud spend optimization requires people who thoroughly understand both the technology and advanced data analytics. Investing in dedicated skill sets across your teams supports sustained cost control and continuous improvement of the multi-cloud environment. As experienced consultants within Austin’s tech ecosystem, we understand the vital role data analytics plays across industries, a topic we explore in our article on the impact of data analytics on the Austin job market.

Staying ahead also means integrating emerging technologies and building robust visualizations powered by real-time data feeds and dynamic analytics frameworks. Our developers routinely integrate advanced tools such as JavaScript-driven visualizations, recognizing, as detailed in our article on lesser-known facts about JavaScript, that visualization technology continually evolves. With strategic investments in the right talent and technology partners, your teams can keep gaining deeper insights and greater optimization.

Empower Strategic Visibility for Smarter Decision-Making

Multi-cloud cost visualization isn’t merely a technical afterthought; it’s an essential strategic competence for digitally driven enterprises. With insightful analytics, powerful visualizations, clear governance, and continuous optimization, organizations unlock clearer decision pathways, smarter budget allocation, and sustainable competitive advantage.

At Dev3lop, we offer specialized expertise to transform complex multi-cloud spending data into powerful, actionable insights. Ready to elevate your approach to multi-cloud cost visualization and analytics? Discover how we can enable smarter decisions today.
