by tyler garrett | May 14, 2025 | Data Processing

In an era defined by data-driven decision making, businesses grapple with increasingly complex and diverse data landscapes. As data pours in from countless applications, legacy databases, cloud storage solutions, external partnerships, and IoT devices, seamless integration becomes not merely beneficial but critical. Without a robust strategy and a reusable approach, integration projects can quickly spiral into complicated, costly endeavors fraught with inefficiencies, delays, and missed insights. A Data Integration Pattern Library addresses this problem: it is a curated collection of reusable solutions that simplify complexity, accelerate deployment timelines, and improve your ability to derive strategic insights from your data streams. As seasoned advisors in data analytics and innovation, we’ve seen firsthand how successful integration hinges on repeatable templates rather than reinventing the wheel each time. Let’s explore how a well-defined Data Integration Pattern Library can empower your organization.

Why Your Organization Needs a Data Integration Pattern Library

Complex data ecosystems have become common across industries, leading many organizations down a path filled with manual customization, duplicated work, and unnecessarily slow data delivery. Without standardization and clearly defined solutions, integration efforts tend to evolve into an endless cycle of inconsistency, resulting in increased technical debt and unclear data governance. To strategically utilize emerging technologies such as AI-enhanced analytics and Power BI solutions, maintaining clear data integration patterns is no longer simply desirable; it’s essential.

Developing a Data Integration Pattern Library establishes a structured foundation of reusable templates, categorically addressing typical integration challenges, enabling teams to rapidly configure proven solutions. Not only do these reusable patterns optimize delivery timeframes for integration solutions, but they also foster consistency, accuracy, and long-term maintainability. Organizations that adopt this approach frequently experience enhanced collaboration across teams, accelerated adoption of governance standards, and better informed strategic decision-making resulting from timely and reliable data insights.

A Data Integration Pattern Library further complements innovative techniques, such as those found in our article regarding ephemeral computing for burst analytics workloads, allowing teams to readily configure their integration pipelines with minimal friction and maximum scalability. Leveraging the consistency and reliability of reusable patterns positions your organization to address evolving data landscapes proactively and strategically rather than reactively and tactically.

Key Components of an Effective Pattern Library

An efficient Data Integration Pattern Library isn’t just a loose collection of templates. It strategically categorizes proven methods addressing common integration use cases. Each template typically includes documentation, visual diagrams, technology recommendations, and clear instructions on implementation and customization. This library acts as a centralized knowledge base, shortening the learning curve for existing staff and quickly onboarding new talent.
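As a rough illustration of what a catalog entry might look like, the sketch below models a template with the elements listed above (documentation, diagram, technology recommendations, customization notes). The class names, field names, and the in-memory lookup are assumptions made for illustration only; a real library would more likely live in a wiki, a repository, or a metadata catalog.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class IntegrationPattern:
    """One entry in a hypothetical pattern library catalog."""

    name: str
    use_case: str        # the integration problem this pattern addresses
    documentation: str   # path or link to the written guide
    diagram: str         # path or link to the visual diagram
    technologies: list[str] = field(default_factory=list)
    customization_notes: str = ""


class PatternLibrary:
    """In-memory catalog that lets teams look patterns up by use case."""

    def __init__(self) -> None:
        self._patterns: dict[str, IntegrationPattern] = {}

    def register(self, pattern: IntegrationPattern) -> None:
        # Reject duplicates so each pattern name stays a unique reference.
        if pattern.name in self._patterns:
            raise ValueError(f"pattern already registered: {pattern.name}")
        self._patterns[pattern.name] = pattern

    def find_by_use_case(self, keyword: str) -> list[IntegrationPattern]:
        # Case-insensitive substring match on the use-case description.
        needle = keyword.lower()
        return [p for p in self._patterns.values() if needle in p.use_case.lower()]
```

Registering a pattern such as a nightly batch load from a legacy database and then calling `find_by_use_case("legacy")` would return that entry, which is the kind of lookup a new team member might perform during onboarding.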

For maximum efficacy, patterns must cover multiple facets of a data integration strategy from centralized storage such as modern data warehouses—which we discuss extensively in our blog why data warehouses are critical for breaking free from manual reporting loops—to advanced semantic data governance patterns, detailed clearly in our article about semantic layers and why they’re critical. Patterns regularly evolve, aligning with new technologies and innovations, which is why continuous management of the pattern framework ensures relevancy and alignment to emerging standards and integration advances.

Another important component is to articulate clearly what each template achieves from a business perspective. Highlighting practical business outcomes and strategic initiatives fulfilled by each pattern helps bridge the gap between technology teams and executive decision-makers. Effective patterns clearly outline technical complexity issues, potential pitfalls, and recommended steps, minimizing hidden challenges and reducing the likelihood of running into costly data engineering anti-patterns along the way.

Implementing Data Integration Patterns in Your Existing Technology Landscape

Your data integration ecosystem is inevitably influenced by your organization’s existing infrastructure, often including legacy systems and processes that may seem outdated or restrictive. Instead of defaulting towards expensive rip-and-replace methodologies, organizations can integrate strategic pattern libraries seamlessly into their existing technology framework. We cover this extensively in a blog focused on innovating within legacy systems without forcibly replacing them entirely. Adopting a strategically developed pattern library provides an effective bridge between outdated systems and modern analytic capabilities, charting a cost-effective path toward integration excellence without abruptly dismantling mission-critical systems.

Leveraging reusable integration templates also simplifies integration with leading analytics platforms and visualization tools such as Power BI, facilitating smoother adoption and improved reporting consistency. With reduced friction around the integration process, businesses can quickly adopt critical analytic methodologies, streamline data pipeline workflows, and promptly identify valuable insights to inform remaining legacy system modernization efforts.

Moreover, pattern library implementation minimizes the risk and complexity of introducing advanced predictive techniques, including parameter-efficient approaches to time series forecasting. When clearly structured integration patterns support advanced analytics, organizations can continuously optimize their infrastructure for meaningful innovation, enhancing their competitive position in the marketplace without disrupting ongoing business-critical operations.

Accelerating Innovation Through Data Integration Templates

One of our core objectives with implementing a well-structured Data Integration Pattern Library is to accelerate time-to-insight and enable innovation. One powerful example we’ve explored extensively is how structured and reusable integration patterns contributed to what we’ve learned in building an AI assistant for client intake. By utilizing prestructured integrations, innovation teams can swiftly experiment, iterate, and scale sophisticated projects without the initial time-intensive groundwork typically associated with complex data combinations.

Additionally, enabling powerful yet straightforward repeatability inherently supports the innovative culture crucial to breakthroughs. Freeing your team from manually troubleshooting basic integrations repeatedly enables them to focus on creativity, experimentation, and strategic data use cases, rapidly testing groundbreaking ideas. Clean data, effectively addressed in our post on ensuring your data is accurate and reliable for trustworthy visualization, becomes easily obtainable when utilizing a consistent integration framework and approach.

In short, a reusable pattern library positions your enterprise not only for immediate success but also for long-term transformational innovation. When strategically implemented, readily accessible, and consistently updated, this library shortens the time from project initiation to strategic impact, positioning your organization as a leader guided by insights and accelerated innovation.

Sustaining and Evolving Your Integrated Data Patterns Over Time

Data ecosystems continually evolve: new technologies emerge, analytical demands shift, and integrations expand beyond initial use cases. Therefore, maintaining the vitality, completeness, and applicability of your Data Integration Pattern Library requires deliberate and continuous effort. Assigning clear ownership of your integration architecture and conducting regular reviews and audits ensures that patterns remain relevant and effective tools capable of addressing evolving demands.

Organizations practicing agile methodologies find this an excellent fit—pattern libraries adapt readily to agile and iterative project approaches. Regular reviews and iterative enhancements to individual data integration patterns proactively guard against stagnation and technical obsolescence. Encouraging user community involvement facilitates practical feedback and accelerates innovative improvement as organizational requirements evolve and adapt.

Your strategic integration library also aligns seamlessly with advanced architectures and strategic partnerships, positioning your organization to influence industry trends rather than just follow them. Continuously evolving your integration templates sets the stage for early adopter advantages, strategic flexibility, and innovation pilot projects with reduced barriers, continually shaping your organization’s digital leadership.

Conclusion: A Strategic Investment With Lasting Benefits

Implementing a Data Integration Pattern Library provides more than merely technical templates—it delivers strategic advantages through clarity, repeatability, and accelerated decision-making capabilities. Whether your organization engages in complex legacy-system integration, seeks robust analytic clarity through semantic layering, or explores innovative AI-driven business solutions, strategic patterns remain invaluable enablers. Investing strategically upfront in curated integration templates—clear, reusable, comprehensive, and consistently maintained—brings immeasurable value to your decision-making processes, innovation potential, and operational agility.

Now is the ideal time to position your business as an innovative leader proactively addressing the data integration challenges of tomorrow with strategic readiness today. Take control of your integration efforts with carefully structured, clearly articulated, reusable solutions—and unlock the transformative insights hidden within your diverse and complex data landscapes.

by tyler garrett | May 14, 2025 | Data Processing

In today’s fast-paced technological landscape, businesses rely heavily on data-driven insights to achieve competitive advantages and fuel innovation. However, rapid development cycles, evolving frameworks, and ever-changing data formats often cause version compatibility headaches. Legacy systems, storied yet indispensable, must continue operating seamlessly despite technological advancements. Version-aware data processing is the strategic solution enabling organizations to gracefully adapt and transform data flows to remain robust and backward-compatible. By approaching data from a version-aware perspective, companies can enhance agility, reduce long-term maintenance costs, and ensure smooth transitions without compromising business-critical analytics. In this guide, we’ll unpack the significance of version-aware data processing and delve into methodologies that simplify complex version compatibility issues, empowering decision-makers and technical leaders to strategically future-proof their data ecosystems.

Why Backward Compatibility Matters in Data Processing

Backward compatibility ensures that new data structures, formats, or APIs remain operable with older systems and schemas. Without it, data consumers such as real-time analytics, data visualization applications, prediction systems, and historical reporting tools would break, leading to costly downtime, reduced trust in analytics, and delayed business decisions. Designing for backward compatibility enhances your organization’s technical agility, allowing your IT infrastructure to evolve without causing disruptions for users or clients who depend on legacy data structures.

Furthermore, maintaining backward compatibility safeguards the historical insights that analytics depends on. Businesses commonly rely on years of historical data, spanning multiple data format variations, to generate accurate forecasting models, identify trends, and make informed decisions. Any oversight in managing version compatibility could produce inaccurate metrics, disrupt trend analyses, and misinform data-driven decisions. Maintaining data continuity and compatibility is thus key to ensuring long-term business resilience and accurate strategic decision-making.

Integrating version-aware practices within data processes elevates your organization’s robustness when handling historic and evolving data assets. Version-aware processing is not only about maintaining system interoperability; it’s also about creating a durable data strategy that acknowledges agile iteration of technologies without compromising analytical accuracy or historical understanding.

The Challenges of Versioning in Modern Data Pipelines

Modern data pipelines are complex environments, composed of several interconnected technologies and components—such as real-time streaming platforms, event-driven databases, serverless architectures, machine learning models, and analytics dashboards. Each part of this data ecosystem evolves separately and at speed, potentially leading to compatibility mismatches.

For instance, as described in our blog about machine learning pipeline design, deploying new model versions regularly presents compatibility challenges. Different variations of schema and pre-processing logic must remain aligned if older predictions and historical inferences remain valuable. Data processing structures may shift as business requirements evolve or as data teams adopt new transformation logic—this imposes demands for pipelines that proactively anticipate and handle legacy data schemas alongside new ones.

Further complicating the situation is the spread of data processing logic within modern isomorphic environments. In our article on isomorphic data processing, we highlight the value of shared logic between client-side and server-side infrastructures. While valuable for rapid development and maintenance, complex isomorphic patterns increase the risk of version misalignments across platforms if backward compatibility is neglected.

Coupled with issues of technical debt, unclear schema evolution policies, and insufficient testing against older datasets, these challenges can drastically impair your data platform’s capability to reliably inform strategic business decisions. To avoid these issues, businesses need to embed backward-compatible strategies right into their architecture to protect operations against unexpected disruptions caused by schema or code changes.

Best Practices for Version-Aware Data Processing

Semantic Versioning and Data Schemas

Adopting semantic versioning for your data schemas provides clarity around compatibility expectations. Clearly labeling data schema versions enables downstream data consumers and visualization applications to quickly establish compatibility expectations without confusion. By defining major, minor, and patch schema updates explicitly, technical and non-technical stakeholders alike will understand precisely how schema alterations influence their current or future implementations. This transparency encourages stable, maintainable data systems and improved team communication around data implementations.
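A minimal sketch of how such labels could be checked in code follows. It assumes one particular policy that your team may or may not adopt: a major bump signals a breaking change, a minor bump signals additive optional fields, and a patch bump signals no structural change. The function names are hypothetical.

```python
from typing import NamedTuple


class SchemaVersion(NamedTuple):
    major: int
    minor: int
    patch: int


def parse_version(text: str) -> SchemaVersion:
    """Parse 'MAJOR.MINOR.PATCH'; raises ValueError on malformed input."""
    major, minor, patch = (int(part) for part in text.split("."))
    return SchemaVersion(major, minor, patch)


def classify_change(old: str, new: str) -> str:
    """Name the most significant component that differs between two versions."""
    before, after = parse_version(old), parse_version(new)
    if before.major != after.major:
        return "major"
    if before.minor != after.minor:
        return "minor"
    if before.patch != after.patch:
        return "patch"
    return "none"


def is_compatible(consumer: str, producer: str) -> bool:
    """Assumed policy: versions interoperate only when the major number matches."""
    return parse_version(consumer).major == parse_version(producer).major
```

Under this policy a dashboard built against schema `2.1.0` would accept data labeled `2.4.3` but reject `3.0.0`, which gives downstream teams an explicit signal before anything breaks.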

Keeping Data Transformations Transparent

Transparency in data transformations is critical for achieving versioned backward compatibility while preserving data provenance and accuracy. When each transformation is documented and traceable, teams can see how data written under older models was derived and preserve business-critical analytical connections. Our article on explainable computation graphs emphasizes how clear visibility into historic transformations simplifies troubleshooting and aligning datasets post-update. Explaining transformations builds trust in data and strengthens the credibility of analytical insight.

Strategic Deployment of API Gateways and Interfaces

Careful orchestration of API gateways and interfaces supports compatibility between data providers and consumers, acting as a vital communication layer. APIs should deliberately limit breaking changes, communicate changes transparently to downstream consumers, and offer components that bridge old and new versions. API wrappers, shims, or versioned endpoints abstract the underlying data infrastructure, enabling legacy clients and dashboards to function reliably alongside updated implementations and ensuring business continuity as data ecosystems evolve.
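One way to picture a shim is as a chain of small upgrade functions, each lifting a record from one schema version to the next, so that newer code only ever handles the latest shape. The sketch below is illustrative only: the field names (`name`, `first_name`, `last_name`, `country`), the `schema_version` key, and the rule that unlabeled records count as version 1 are all assumptions, not part of any particular API.

```python
from typing import Any, Callable, Dict

Record = Dict[str, Any]

LATEST_VERSION = 3


def _v1_to_v2(record: Record) -> Record:
    # Hypothetical change: v2 splits "name" into first and last name.
    first, _, last = str(record["name"]).partition(" ")
    upgraded = {key: value for key, value in record.items() if key != "name"}
    upgraded.update(first_name=first, last_name=last, schema_version=2)
    return upgraded


def _v2_to_v3(record: Record) -> Record:
    # Hypothetical change: v3 adds an optional "country" field with a default.
    return {**record, "country": record.get("country", "unknown"), "schema_version": 3}


# Maps a source version to the function that upgrades it by one step.
UPGRADES: Dict[int, Callable[[Record], Record]] = {1: _v1_to_v2, 2: _v2_to_v3}


def upgrade_to_latest(record: Record) -> Record:
    """Apply upgrade steps in order until the record reaches LATEST_VERSION."""
    current = dict(record)  # copy so the caller's record is not mutated
    version = int(current.get("schema_version", 1))  # assumption: unlabeled means v1
    if version > LATEST_VERSION:
        raise ValueError(f"record version {version} is newer than this reader")
    while version < LATEST_VERSION:
        current = UPGRADES[version](current)
        version = int(current["schema_version"])
    return current
```

Keeping each step small means a new schema version only requires one additional function, and legacy producers can keep sending older records without changes on their side.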

Embracing Continuous Improvement in Version Compatibility

Your organization can apply the philosophy of continuous learning and improvement in data pipelines to further embed compatibility practices. Iterative and incremental development encourages constant feedback from data consumers, surfacing early signs of compatibility problems in evolving formats. Regular feedback loops and checks for version anomalies help minimize disruption and avoid costly mistakes when integrating new data capabilities or shifting to updated frameworks.

Continuous improvement also means ongoing team training and cultivating a forward-thinking approach to data management. Encourage data engineering and analytics teams to regularly review evolving industry standards for backward compatibility. Internal knowledge-sharing workshops, documentation improvements, and frequent iteration cycles can significantly strengthen your team’s capability to manage backward compatibility issues proactively, creating robust, adaptive, and resilient data infrastructures.

Leveraging Better Visualization and Communication to Support Compatibility

Clear, meaningful data visualization is instrumental in effectively communicating compatibility and schema changes across teams. Effective visualization, as explained in our article on the importance of data visualization in data science, enables rapid understanding of differences between schemas or compatibility across multiple versions. Visualization software, when leveraged appropriately, quickly identifies potential pitfalls or data inconsistencies caused by version incompatibilities, fostering quicker resolution and enhancing inter-team transparency on schema evolution.

Moreover, it’s vital that data visualizations are structured correctly to avoid data distortion. Following guidelines outlined in our content on appropriate scales and axes, companies can present data accurately despite compatibility considerations. Proper visualization standards bolster the ability of business leaders to confidently rely on analytics insights, maintaining accurate historical records and clearly highlighting the impact of schema changes. This transparency provides clarity, consistency, and stability amid complex backend data management operations.

Conclusion: Strategic Thinking Around Backward Compatibility

In today’s fast-paced, data-driven business environment, strategic thinking around version-aware data processing and backward compatibility is paramount. Organizations that proactively embed data version management within their data processing environments benefit from reduced operational downtimes, decreased technical debt, robust data analytics, easier long-term maintenance, and a clearer innovation pathway.

By adopting semantic schema versioning, promoting transparent data transformations, deploying strategic API structures, embracing continuous improvement, and utilizing robust data visualization standards, organizations significantly mitigate backward compatibility risks. Decision-makers who prioritize strategic backward compatibility enable their organizations to accelerate confidently through technology evolutions without compromising stability, accuracy, or data trust.

Empower your organization’s innovation and analytics capabilities by strategically adopting version-aware data processes—readying your business for a robust and flexible data-driven future.

by tyler garrett | May 14, 2025 | Data Processing

In today’s highly competitive data-driven landscape, accurate estimation of pipeline resources is crucial to delivering projects that meet critical business objectives efficiently. Estimates determine cost, timelines, and infrastructure scalability, and they directly affect an organization’s bottom line. Yet the complex interplay between processing power, data volume, algorithm choice, and integration requirements often makes accurate resource estimation an elusive challenge for even seasoned professionals. Decision-makers looking to harness the full potential of their data resources need expert guidance, clear strategies, and intelligent tooling to ensure efficient resource allocation. By leveraging advanced analytical approaches, integrating modern data pipeline management tools, and encouraging informed strategic decisions rather than purely instinctive choices, organizations can avoid common pitfalls in data pipeline resource management. In this comprehensive exploration, we’ll delve into key methodologies, powerful tools, and modern best practices for pipeline resource estimation, offering practical insights to support more efficient, smarter business outcomes.

Why Accurate Pipeline Estimation Matters

Accurate pipeline resource estimation goes well beyond simple project planning—it’s foundational to your organization’s overall data strategy. Misjudgments here can lead to scope creep, budget overruns, missed deadlines, and inefficient resource allocation. When your estimation methodologies and tooling are precise, you can confidently optimize workload distribution, infrastructure provisioning, and cost management. Conversely, poor estimation can cascade into systemic inefficiencies, negatively impacting both productivity and profitability. Effective resource estimation directly accelerates your ability to better leverage advanced analytical methodologies such as those demonstrated in our vectorized query processing projects, helping you ensure swift, economical, and high-performing pipeline executions. Moreover, precise estimation nurtures transparency, fosters trust among stakeholders, and clearly sets expectations—critical for aligning your teams around shared goals. Strategies that utilize rigorous methodologies for estimating resources are essential to not only avoiding potential problems but also proactively identifying valuable optimization opportunities that align perfectly with your organization’s broader strategic priorities.

Essential Methodologies for Pipeline Resource Estimation

Historical Analysis and Benchmarking

One primary technique for accurate pipeline estimation revolves around leveraging well-documented historical data analysis. By analyzing past project performances, your team can establish meaningful benchmarks for future work, while also identifying reliable predictors for project complexity, resource allocation, and pipeline performance timelines. Analytical queries and models developed using a robust database infrastructure, such as those supported through PostgreSQL consulting services, provide actionable insights derived from empirical real-world scenarios. Historical benchmarking helps proactively identify potential bottlenecks by aligning previous datasets, workflow patterns, and technical details to current estimation challenges. However, this requires robust, accurate data management and planned documentation. Organizations must consistently update existing datasets and institutionalize meticulous documentation standards. When effectively implemented, historical analysis becomes a cornerstone methodology in accurate, sustainable forecasting and strategic decision-making processes.
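To make the benchmarking idea concrete, the sketch below turns a handful of past runs into a typical and a conservative runtime estimate for a planned job. It assumes runtime scales roughly linearly with input size, which will not hold for every pipeline, and the function name and the `(input_gb, runtime_minutes)` record shape are hypothetical.

```python
from __future__ import annotations

from statistics import median, quantiles


def estimate_runtime(
    history: list[tuple[float, float]], planned_gb: float
) -> dict[str, float]:
    """Estimate runtime from past (input_gb, runtime_minutes) observations.

    Assumes runtime is roughly proportional to input size.
    """
    rates = [minutes / gb for gb, minutes in history if gb > 0]
    if len(rates) < 2:
        raise ValueError("need at least two past runs to benchmark")
    typical_rate = median(rates)
    # The last of the nine decile cut points is the 90th percentile.
    p90_rate = quantiles(rates, n=10, method="inclusive")[-1]
    return {
        "typical_minutes": typical_rate * planned_gb,
        "conservative_minutes": p90_rate * planned_gb,
    }
```

Reporting both numbers rather than a single figure makes the spread in historical performance visible to stakeholders, and the estimate improves only as long as the run history is kept current.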

Proof of Concept (POC) Validation

Before investing significantly in infrastructure or initiating large-scale pipeline development, the strategic use of proof-of-concept (POC) projects provides a significant advantage. Streamlining pipeline estimation begins with a controlled, efficient approach to experimentation and validation. Such trials offer clear, tangible insight into performance requirements, processing durations, and resource consumption rates, especially when conducted collaboratively with stakeholders. We recommend referencing our detailed approach to building client POCs in real time to streamline the evaluation stage of your pipeline planning. By conducting pilot programs effectively, stakeholders gain visibility into potential estimation inaccuracies or resource misalignments early in the process, providing key insights that refine the overall pipeline blueprint prior to full-scale implementation.

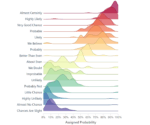

Statistical and Predictive Analytics Techniques

More advanced estimation approaches incorporate statistical modeling, predictive analytics, and machine learning frameworks to achieve highly accurate forecasts. Methods such as Linear Regression, Time-Series Analysis, Random Forest, and Gradient Boosting techniques offer scientifically sound approaches to pipeline resource predictions. These predictive methodologies, as discussed extensively in our previous article about machine learning pipeline design for production, allow organizations to rapidly generate sophisticated computational models that measure the impacts of changes in data volume, compute power, or concurrent jobs. Leveraging predictive analytics dramatically improves accuracy while also empowering your team to proactively uncover deeper strategic drivers behind resource consumption and pipeline performance. Such techniques notably increase your competitive advantage by introducing rigorous, data-centric standards into the resource estimation phase.
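As a small worked example of the simplest of these methods, the sketch below fits an ordinary least-squares line relating data volume to a resource measure such as runtime or CPU hours. It is a deliberately minimal, dependency-free illustration with hypothetical function names; production forecasting would typically use a statistics or machine learning library and more than one predictor.

```python
from __future__ import annotations


def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least squares for y = slope * x + intercept."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need at least two paired observations")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("all x values are identical; slope is undefined")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(slope: float, intercept: float, x: float) -> float:
    """Predicted resource usage for a new data volume x."""
    return slope * x + intercept
```

Here the slope can be read as the marginal cost of each additional unit of data and the intercept as fixed overhead, which is often a useful starting point before moving to tree-based models.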

Best Practices in Pipeline Resource Estimation

Continuous Collaboration and Communication

Effective estimation methods go hand-in-hand with strong collaboration practices. Teams should maintain open channels of communication to ensure continuous information flow around project scopes, new requirements, and technology challenges. Regularly scheduled standups, sprint reviews, and expectation management sessions offer good occasions to validate and update pipeline estimations dynamically. By integrating expert insights from data science professionals, something we address extensively in our guide on networking with data science professionals, organizations enhance cross-functional transparency, increase decision confidence, and achieve greater strategic alignment. Collaborating closely with subject matter experts also provides a proactive safeguard against creating unrealistic expectations, underestimating the necessary processing power, or neglecting best-practice data ethics. Because estimation accuracy hinges on frequent information verification among stakeholders, this collaboration also supports organizational readiness.

Understand Visualization Needs and Intended Audience

When refining pipeline resource estimates, consider who will interpret your forecasts. The clarity of resource allocation data visualizations dramatically influences stakeholder comprehension and their consequent strategic actions. Our blog entry emphasizes the importance of knowing your visualization’s purpose and audience, guiding you toward visualization choices that help decision-makers quickly understand resource allocation scenarios. Using tailor-made visualization tools and carefully presented dashboards ensures stakeholders accurately grasp the complexity, constraints, and drivers behind pipeline resource estimation. Emphasizing clear visualization enables stakeholders to make informed and effective strategic decisions, vastly improving resource allocation and pipeline efficiency.

Ethical and Strategic Considerations in Pipeline Estimation

It’s crucial to recognize the ethical dimension in pipeline resource estimation, particularly in data-centric projects. Accurately anticipating data privacy implications, bias risks, and responsible data usage protocols allows your estimation efforts to go beyond cost and timing alone. Drawing on ethical best practices, detailed in our analysis of ethical considerations of data analytics, organizations strengthen credibility and accountability with regulatory agencies, auditors, and end-customers. Adopting strategic, ethical foresight creates responsible governance practices that your team can rely upon to justify decisions transparently to both internal and external stakeholders. Focusing on responsible estimation helps you maintain compliance standards, mitigate reputational risks, and safeguard stakeholder trust throughout the pipeline lifecycle.

Embracing Smart Data-Driven Resource Estimations

While the importance of being data-driven may seem obvious, our experience has taught us this does not always equate to effective decision-making. Estimation accuracy requires targeted, rigorous use of data that directly addresses project-specific strategic needs. As highlighted in our post discussing why “data-driven decisions aren’t always smart decisions,” being truly data-smart demands critical assessment of relevant data contexts, assumptions, and strategic outcomes. Estimation methods must factor in comprehensive views of business requirements, scenario mapping, stakeholder alignment, and interdisciplinary coordination to maximize efficiency, something we discuss further in our resource-focused guide: Improved Resource Allocation. Leveraging smarter data-driven estimation techniques supports pipeline sustainability and organizational adaptability, both essential to better decision making.

Establishing a comprehensive and strategic pipeline resource estimation practice is a critical step toward creating empowered, agile, and innovative data-driven companies. Embracing modern tools, frameworks, and collaborative techniques positions your organization to unlock higher levels of insight, efficiency, and competitiveness across your data strategy initiatives.

by tyler garrett | May 14, 2025 | Data Processing

In an era characterized by data-driven innovation and rapid scalability, organizations face increasing demands to optimize their shared resources in multi-tenant environments. As multiple clients or business units leverage the same underlying infrastructure, managing resources effectively becomes paramount—not only for performance but also cost control, reliability, and customer satisfaction. Today’s powerful data tools demand sophisticated strategies to deal with resource contention, isolation concerns, and dynamic resource scaling. Becoming proficient at navigating these complexities is not merely valuable—it is essential. As experienced software consultants specializing in advanced MySQL consulting services and data-driven innovation, we understand that effective multi-tenant resource allocation requires more than technical expertise; it requires strategic thinking, precise methodology, and a well-crafted approach to technology management.

The Importance of Structured Architecture in Multi-Tenant Environments

At its core, multi-tenancy involves sharing computational or data resources across multiple discrete users or organizations—tenants—while preserving security, isolation, and performance. Achieving optimal multi-tenant resource allocation begins by defining a precise architectural blueprint. A clearly defined and structured architecture ensures each tenant experiences seamless access, robust isolation, and optimized resource usage. This architectural foundation also inherently supports scalability, allowing businesses to seamlessly ramp resources up or down based on real-time demand while guarding against deployment sprawl or resource hoarding.

Structured data architecture extends beyond database optimization to cover data partitioning, schema design, tenant isolation levels, and administrative workflows. A well-designed multi-tenant architecture works like a carefully drafted building blueprint, creating efficiencies at every level. Choosing a suitable structure, such as schema-per-tenant, a shared schema with tenant identifiers, or a custom schema design, can significantly streamline data management and strengthen performance, security, and analytic capabilities. We emphasize the critical importance of strategic data modeling as a necessary blueprint for achieving measurable data-driven success. Executed well, this approach enables clients to use their resources effectively, gain analytical clarity, and make smarter decisions.
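To make the shared-schema option concrete, here is a minimal sketch of a single table that carries a tenant identifier on every row. It uses Python’s built-in sqlite3 module purely as a stand-in for a production database, and the table name `orders`, its columns, and the helper names are hypothetical choices for illustration.

```python
import sqlite3


def make_shared_schema_db() -> sqlite3.Connection:
    """Create an in-memory database that uses a shared schema for all tenants."""
    conn = sqlite3.connect(":memory:")
    # Every row carries tenant_id, and the primary key starts with it,
    # so lookups scoped to one tenant can use the key's index.
    conn.execute(
        "CREATE TABLE orders ("
        " tenant_id TEXT NOT NULL,"
        " order_id INTEGER NOT NULL,"
        " amount REAL NOT NULL,"
        " PRIMARY KEY (tenant_id, order_id))"
    )
    return conn


def tenant_orders(conn: sqlite3.Connection, tenant_id: str) -> list:
    """Return only the rows that belong to the given tenant."""
    cur = conn.execute(
        "SELECT order_id, amount FROM orders"
        " WHERE tenant_id = ? ORDER BY order_id",
        (tenant_id,),  # parameterized, never string-formatted
    )
    return cur.fetchall()


conn = make_shared_schema_db()
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [("acme", 1, 10.0), ("acme", 2, 5.5), ("globex", 1, 99.0)],
)
print(tenant_orders(conn, "acme"))
```

In this layout every query must filter on the tenant identifier, which is why many teams route all access through a small helper like `tenant_orders` rather than writing ad hoc SQL. A schema-per-tenant design trades that discipline for stronger separation at the cost of more objects to manage.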

Resource Management Techniques: Isolation, Partitioning, and Abstraction

Efficient resource allocation in multi-tenant environments depends on three management strategies: isolation, partitioning, and abstraction. Resource isolation is foundational; tenants must remain individually secure and unaffected by other tenants’ resource use or changes. Virtualized or containerized environments and namespace segregation can provide logical isolation without sacrificing manageability. Effective isolation contains heavy resource usage or a security breach within the tenant where it occurs so that it does not spill over to others, which lets businesses host multiple tenants securely on a single infrastructure.
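One simple way to picture resource isolation at the application level is a per-tenant quota, where each tenant draws from its own budget and cannot consume another tenant’s share. The sketch below is an illustrative assumption, not a description of any particular platform; the class name `TenantQuota` and its methods are hypothetical.

```python
class TenantQuota:
    """Track resource units per tenant so one tenant cannot starve another."""

    def __init__(self, limits: dict):
        # limits maps tenant name -> maximum units that tenant may hold at once
        self.limits = dict(limits)
        self.used = {tenant: 0 for tenant in limits}

    def try_acquire(self, tenant: str, units: int = 1) -> bool:
        """Reserve units for a tenant; refuse if its own limit would be exceeded."""
        if self.used[tenant] + units > self.limits[tenant]:
            return False
        self.used[tenant] += units
        return True

    def release(self, tenant: str, units: int = 1) -> None:
        """Return units to the tenant's budget, never dropping below zero."""
        self.used[tenant] = max(0, self.used[tenant] - units)


quota = TenantQuota({"acme": 2, "globex": 1})
quota.try_acquire("acme")
quota.try_acquire("acme")
# acme is now at its limit, but globex is unaffected
print(quota.try_acquire("acme"), quota.try_acquire("globex"))
```

Real deployments usually enforce limits like this lower in the stack, for example through container resource limits, but the principle is the same: each tenant’s ceiling is checked independently.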

Furthermore, advanced partitioning techniques and abstraction layers help optimize data processing platforms dynamically and transparently. Partitioning by tenant or by data access frequency can substantially improve query performance and resource allocation efficiency. Abstraction, in turn, lets IT administrators and application developers apply targeted resource controls without continually rewriting underlying code or configurations. This aligns neatly with methodologies such as declarative data transformation, which lets businesses adapt data processing as requirements evolve, leading to more efficient resource allocation and lower management overhead.
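As a small illustration of partitioning by tenant, the sketch below maps a tenant identifier to a partition number with a stable hash. The function name `partition_for` and the choice of SHA-256 are assumptions made for this example; Python’s built-in `hash()` is avoided because its output for strings changes between processes.

```python
import hashlib


def partition_for(tenant_id: str, num_partitions: int) -> int:
    """Map a tenant to a partition index that is stable across processes."""
    if num_partitions <= 0:
        raise ValueError("num_partitions must be positive")
    digest = hashlib.sha256(tenant_id.encode("utf-8")).digest()
    # Use the first 8 bytes of the digest as an unsigned integer.
    return int.from_bytes(digest[:8], "big") % num_partitions


for tenant in ("acme", "globex", "initech"):
    print(tenant, partition_for(tenant, 8))
```

Callers only ever ask which partition a tenant lives in, so the mapping can later be swapped (for example, to pin a very large tenant to its own partition) without touching calling code. That is the abstraction benefit described above.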

Leveraging Adaptive Parallelism for Dynamic Scaling

In resource-intensive, data-driven infrastructures, adaptive parallelism has emerged as a strategic approach to efficient resource handling. It enables processing environments to scale resources dynamically based on real-time analytics and load conditions. Platforms can automatically adjust computing resources, running more or fewer parallel tasks and scaling horizontally or vertically to meet peak demand or quiet periods. For organizations that process substantial volumes of streaming data, such as integrating data from platforms like Twitter into big data warehouses, dynamic resource allocation helps keep performance consistent. Our recent insights on adaptive parallelism highlight how dynamically scaling resources can dramatically enhance data processing efficiency and management flexibility.

With adaptive parallelism, underlying technologies and resource allocation become more responsive and efficient, preserving optimal throughput with minimal manual intervention. Whether consolidating social media feeds or streaming analytical workloads to Google BigQuery, dynamic scaling ensures that resources are provisioned and allocated precisely according to necessity, providing seamless operational adaptability. Every decision-maker looking to optimize their shared resource environment should explore these dynamic strategies for immediate and sustainable benefit.
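The scaling decision at the heart of adaptive parallelism can be sketched as a function that turns current load into a target worker count. This is a deliberately simple, hypothetical policy; the function name `target_workers`, its parameters, and the default bounds are assumptions for illustration rather than any platform’s actual autoscaling rule.

```python
import math


def target_workers(
    queue_depth: int,
    per_worker_capacity: int,
    min_workers: int = 1,
    max_workers: int = 16,
) -> int:
    """Pick a worker count proportional to backlog, clamped to fixed bounds."""
    if per_worker_capacity <= 0:
        raise ValueError("per_worker_capacity must be positive")
    # Enough workers to drain the backlog, rounded up.
    needed = math.ceil(queue_depth / per_worker_capacity)
    return max(min_workers, min(max_workers, needed))


for depth in (0, 250, 10_000):
    print(depth, target_workers(depth, per_worker_capacity=100))
```

The lower bound keeps a warm worker available during quiet periods, and the upper bound caps cost and protects shared infrastructure from a single runaway workload.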

Enhancing Analytics through Strategic Tenant-Aware Data Systems

In multi-tenant settings, analytics functionality should never be overlooked. An effective tenant-aware analytical system gives organizations deep insight into performance patterns, resource utilization, customer behavior, and operational bottlenecks for each tenant. Proper resource allocation is not just about maximizing infrastructure efficiency; it’s also crucial for robust business intelligence and a better user experience. Businesses must strategically choose the right analytical frameworks and tools, such as dashboards from platforms like Google Data Studio. For deep integration scenarios, we recommend exploring options such as our guide on Embedding Google Data Studio visualizations within applications.

Tenant-aware data systems give analytics platforms access to detailed prioritization and usage data. Insights drawn from a well-managed analytics infrastructure help ensure each tenant receives resources matched to its predictive and analytical needs, creating a dynamic and responsive ecosystem. Effective multi-tenant analytics platforms can further incorporate advanced geospatial analyses, like those described in our recent exploration of geospatial tensor analyses designed for multidimensional location intelligence, enriching the contextual understanding of resource allocation patterns, usage trends, and business behaviors across the entire tenant ecosystem.
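A tenant-aware view of usage can start with something as simple as rolling raw usage events up by tenant and computing each tenant’s share of the total. The sketch below assumes events arrive as `(tenant, cpu_seconds)` pairs; that event shape and the function names are hypothetical.

```python
from collections import defaultdict


def usage_by_tenant(events) -> dict:
    """Sum usage per tenant from an iterable of (tenant, cpu_seconds) pairs."""
    totals = defaultdict(float)
    for tenant, cpu_seconds in events:
        totals[tenant] += cpu_seconds
    return dict(totals)


def usage_share(totals: dict) -> dict:
    """Convert per-tenant totals into fractions of overall usage."""
    grand_total = sum(totals.values())
    return {
        tenant: (value / grand_total if grand_total else 0.0)
        for tenant, value in totals.items()
    }


events = [("acme", 3.0), ("globex", 1.0), ("acme", 1.0)]
totals = usage_by_tenant(events)
print(totals)
print(usage_share(totals))
```

The same per-tenant totals can feed a dashboard or drive allocation decisions, for example by flagging tenants whose share grows much faster than their plan allows.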

Solutions for Handling High-Priority Issues: Building Smart Tooling Chains

The timely resolution of high-priority tenant issues is critical to successful multi-tenant resource allocation strategies. Prioritizing tenant incidents and quickly addressing performance problems or resource contention is key to maintaining customer satisfaction and service reliability. Proper tooling, incident management systems, and smart tooling chains streamline operations. For inspiration and practical insights, we recommend reviewing our approach to creating an efficient system for addressing high-priority issues through comprehensive tooling chains.

Smart tooling solutions empower organizations by providing integrated capabilities such as algorithmic detection of potential issues, automated alerts, elevated incident tracking, and AI-driven optimization. Such streamlined toolchains proactively identify constraints, enabling administrators to swiftly rectify any issues that arise, thus ensuring minimal disruptions and optimum performance standards. For organizations running multi-tenant systems, the ability to identify, escalate, address, and solve issues rapidly ensures the enduring health and agility of their shared processing environments, greatly contributing to overall operational efficiency and tenant satisfaction.

Bridging the Resource Gap: The Strategic Recruitment Advantage

As companies evolve toward sophisticated multi-tenant platforms, leadership teams often face resource gaps in managing increasingly complex data and analytics systems. Strategic talent acquisition becomes essential, and the order of hires matters. Interestingly, the most effective early data hires are not always data scientists; businesses must first establish proper contexts, structures, and data engineering foundations before rapidly expanding data science efforts. Our article on Why Your First Data Hire Shouldn’t Be a Data Scientist offers clarity and direction on building the right teams for resource-intensive environments.

To bridge resource gaps effectively, companies need clear strategic understanding of their platforms, data infrastructure optimization, and genuine requirements. Practical hires—such as data engineers, database specialists, or solutions architects—can build scalable platforms ready for future growth. Strategic hiring enhances resource optimization immensely, setting the stage for eventual analytical expansion and accelerating growth and profitability. Aligning technology gaps with skilled resources results in measurable operational outcomes and proves instrumental in driving revenue growth and boosting organizational performance.

by tyler garrett | May 13, 2025 | Data Processing

Data is growing exponentially, and with it comes the critical need for sound strategies that optimize processing power and accelerate analytics initiatives. Organizations amass vast volumes of structured and unstructured data every day, making it crucial to manage computational resources wisely. Dataset sampling techniques stand at the forefront of efficient data-driven innovation, enabling businesses to derive insightful analytics from smaller, yet highly representative snapshot datasets. As industry-leading data strategists, we understand that optimization through strategic sampling isn’t just good practice—it’s essential for maintaining agility, accuracy, and competitive advantage in today’s data-intensive landscape.

Understanding the Need for Optimized Dataset Sampling

In an era dominated by big data, organizations face the challenge not just of gathering information, and tons of it, but also of processing and using it in a timely and cost-effective manner. Complete analysis of vast datasets consumes significant computational resources, memory, and time, often beyond reasonable budgets and deadlines. It’s simply impractical and inefficient to process an entire mammoth-sized dataset every time stakeholders have questions. Thus, sampling techniques have become fundamental to optimizing data processing.

Data analysts and engineers increasingly rely on analytics project prioritization to deliver effectively, even within constrained budgets. Strategic allocation of resources, as discussed in our guide on how to prioritize analytics projects with limited budgets, underscores the importance of processing optimization. Sampling techniques ease the cost of full-dataset analysis by extracting subsets of data chosen to reflect the characteristics of the entire dataset, significantly reducing computational burdens while preserving analytic integrity.

This approach is especially valuable in contexts like real-time analytics, exploratory analysis, machine learning model training, or data-driven optimization tasks, where agility and accuracy are paramount. With well-crafted sampling techniques, businesses can rapidly derive powerful insights, adjust strategies dynamically, and maintain competitive agility without sacrificing analytical depth.

Key Dataset Sampling Techniques Explained

Simple Random Sampling (SRS)

Simple Random Sampling is perhaps the most straightforward yet effective technique for dataset optimization. This method selects data points entirely at random from the larger dataset, giving each entry an equal chance of selection. SRS is unbiased in principle, but only if the selection process is genuinely random; a poorly seeded generator or a sample drawn from a pre-filtered source can reintroduce hidden bias.

Randomness does not eliminate sampling error, but it keeps that error free of systematic bias and lets it shrink as the sample grows, so the subset tends to reflect population characteristics. This allows analytics teams to gain rapid insights without committing resources to the full dataset. Organizations keen on accuracy and precision should refer first to analytics strategies discussed in our guide about ensuring accurate data representation.
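A minimal sketch of simple random sampling, using only Python’s standard library, might look like the following. The function name `simple_random_sample` and the optional `seed` parameter are illustrative choices; the seed is there so that a sample can be reproduced for auditing.

```python
import random


def simple_random_sample(records, k: int, seed=None) -> list:
    """Draw k records without replacement, each with equal probability."""
    rng = random.Random(seed)  # private generator; does not disturb global state
    return rng.sample(list(records), k)


sample = simple_random_sample(range(1000), k=50, seed=42)
print(len(sample), sample[:5])
```

Fixing the seed makes the draw repeatable, which helps when a result needs to be re-derived later. Leaving it as `None` gives a fresh sample on each run.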

Stratified Sampling

Stratified sampling divides the dataset into distinct “strata,” or subgroups, so that the records within each subgroup share a specific characteristic. Samples are then drawn at random from every stratum, in proportion to each stratum’s size relative to the entire dataset.

This approach offers more precision than SRS because each subgroup of interest is proportionally represented, making it uniquely advantageous where data diversity or critical sub-segments significantly impact overall analytics and insights. Stratified sampling gives data practitioners more targeted analytical leverage, especially to support informed decision-making about resource allocation.
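Proportional stratified sampling can be sketched as grouping records by a key and then sampling the same fraction from every group. The function below is an illustrative assumption, not a prescribed implementation; in particular, taking at least one record per stratum is a design choice made here so that tiny subgroups are never dropped.

```python
import random
from collections import defaultdict


def stratified_sample(records, key, fraction: float, seed=None) -> list:
    """Sample the same fraction from every stratum defined by key(record)."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    rng = random.Random(seed)
    strata = defaultdict(list)
    for record in records:
        strata[key(record)].append(record)
    sample = []
    for members in strata.values():
        # At least one record per stratum (an assumption of this sketch);
        # this slightly over-represents very small strata.
        k = min(len(members), max(1, round(len(members) * fraction)))
        sample.extend(rng.sample(members, k))
    return sample


records = [("enterprise", i) for i in range(90)] + [("trial", i) for i in range(10)]
sample = stratified_sample(records, key=lambda r: r[0], fraction=0.1, seed=1)
print(len(sample), sum(1 for r in sample if r[0] == "trial"))
```

With a 90/10 split and a 10% fraction, the sample keeps the same 9-to-1 ratio, whereas a simple random sample of ten records could easily miss the smaller group altogether.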

Cluster Sampling

Cluster sampling splits data into naturally occurring clusters or groups, then randomly selects some of those clusters for analysis. Unlike stratified sampling, which draws individual data points from every subgroup, cluster sampling takes whole groups and leaves the rest untouched, simplifying logistics and reducing complexity when working with large-scale datasets.

Applied correctly, this approach delivers rapid analytics turnaround, especially where the dataset’s physical or logistical organization naturally lends itself to clusters. For example, geographical data often aligns naturally with cluster sampling, enabling quick assessments of localized data changes or trends without an exhaustive analysis.
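The difference from stratified sampling is easiest to see in code: cluster sampling picks whole groups at random and keeps every record in the chosen groups. The sketch below assumes one-stage cluster sampling with records shaped as `(city, value)` pairs; the function name and the data are hypothetical.

```python
import random
from collections import defaultdict


def cluster_sample(records, cluster_key, n_clusters: int, seed=None) -> list:
    """Randomly choose n_clusters groups and return all records in them."""
    rng = random.Random(seed)
    clusters = defaultdict(list)
    for record in records:
        clusters[cluster_key(record)].append(record)
    # Sample cluster labels, not individual records.
    chosen = rng.sample(list(clusters), n_clusters)
    return [record for label in chosen for record in clusters[label]]


cities = ["Austin", "Dallas", "Houston", "El Paso"]
records = [(city, i) for city in cities for i in range(5)]
sample = cluster_sample(records, cluster_key=lambda r: r[0], n_clusters=2, seed=0)
print(sorted({city for city, _ in sample}), len(sample))
```

Because entire clusters are included or excluded, the result is only as representative as the chosen clusters are of the whole. This is the usual trade-off for the lower collection cost.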

Advanced Sampling Techniques Supporting Data Analytics Innovation

Systematic Sampling

Systematic sampling selects every n-th data point from your dataset after choosing a random starting point. It maintains simplicity and efficiency, bridging the gap between pure randomness and structured representation. The technique works well as long as the data has no hidden cyclic pattern that lines up with the sampling interval; if it does, the sample can over-represent one phase of the cycle.

Systematic sampling is particularly valuable in automated data processing pipelines aimed at enhancing reliability and maintaining efficiency. Our insights discussed further in designing data pipelines for reliability and maintainability showcase systematic sampling as an intelligent stage within robust data engineering frameworks.
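Systematic sampling is short enough to express with a slice: pick a random offset within the first interval, then take every n-th record from there. The function name `systematic_sample` and its parameters are illustrative.

```python
import random


def systematic_sample(records: list, step: int, seed=None) -> list:
    """Take every step-th record, starting from a random offset in [0, step)."""
    if step <= 0:
        raise ValueError("step must be positive")
    rng = random.Random(seed)
    start = rng.randrange(step)  # random starting point within the first interval
    return records[start::step]


sample = systematic_sample(list(range(100)), step=10, seed=3)
print(sample)
```

If the records were, say, hourly readings and the step were 24, every sampled point would fall at the same hour of the day. This is the cyclic-pattern risk noted above, so the step should be checked against any known periodicity in the data.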

Reservoir Sampling

Reservoir sampling is indispensable when dealing with streaming or real-time datasets. It maintains a fixed-size, uniformly random sample of an incoming data stream in a single pass, even when the total size of the stream is unknown in advance.

This powerful sampling method optimizes resource management drastically, removing the necessity to store the entire dataset permanently, and benefiting scenarios with high volumes of transient data streams like IoT systems, market feeds, or real-time analytics applications. Leveraging reservoir sampling can drastically improve real-time analytics delivery, integrating efficiently with rapidly evolving AI- and machine-learning-driven analyses. Learn more about trusting AI systems and integrating robust software strategies effectively in our article covering trusting AI software engineers.
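The article does not name a specific variant, so the sketch below uses the classic single-pass approach commonly known as Algorithm R. It keeps the first `k` items, then replaces a random slot with decreasing probability as more items arrive, so every item seen so far has the same chance of being in the reservoir. The function name and `seed` parameter are illustrative.

```python
import random


def reservoir_sample(stream, k: int, seed=None) -> list:
    """Keep a uniform random sample of k items from a stream of unknown length."""
    rng = random.Random(seed)
    reservoir = []
    for i, item in enumerate(stream):
        if i < k:
            # Fill the reservoir with the first k items.
            reservoir.append(item)
        else:
            # Item i (0-based) is kept with probability k / (i + 1).
            j = rng.randint(0, i)  # inclusive on both ends
            if j < k:
                reservoir[j] = item
    return reservoir


# A generator stands in for a live feed; its length is never inspected.
stream = (reading for reading in range(10_000))
print(len(reservoir_sample(stream, k=100, seed=7)))
```

Memory use stays at `k` items no matter how long the stream runs, which is what makes the method a good fit for IoT feeds and similar unbounded sources.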

Adaptive Sampling

Adaptive sampling dynamically adjusts its strategy based on observed conditions or on early analytical results from prior sampling stages. When it encounters significant variation or “metric drift,” it changes its sampling criteria, for example by sampling more densely, to keep the sample representative throughout the analysis.

Additionally, adaptive sampling profoundly benefits data-quality monitoring efforts, extending beyond optimization to maintain continuous oversight of critical data metrics and populations. We discuss approaches to data quality and metrics variations comprehensively in our guide on metric drift detection and monitoring data health.
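There are many ways to make sampling adaptive, and the article does not prescribe one. As a minimal sketch under stated assumptions, the hypothetical policy below doubles the sampling rate when a batch’s mean drifts from a baseline by more than a tolerance and halves it when the metric is stable. The function name, the doubling and halving rule, and the default thresholds are all illustrative.

```python
def next_sampling_rate(
    current_rate: float,
    baseline_mean: float,
    batch_mean: float,
    tolerance: float = 0.1,
    min_rate: float = 0.01,
    max_rate: float = 1.0,
) -> float:
    """Raise the sampling rate when a metric drifts, lower it when stable."""
    if baseline_mean == 0:
        drift = abs(batch_mean)  # fall back to absolute change
    else:
        drift = abs(batch_mean - baseline_mean) / abs(baseline_mean)
    if drift > tolerance:
        proposed = current_rate * 2  # look more closely while things are changing
    else:
        proposed = current_rate / 2  # save resources while things are stable
    return max(min_rate, min(max_rate, proposed))


print(next_sampling_rate(0.1, baseline_mean=100.0, batch_mean=130.0))
print(next_sampling_rate(0.1, baseline_mean=100.0, batch_mean=101.0))
```

The bounds keep the rate from collapsing to zero during long quiet stretches or exceeding a full scan during turbulence. A production policy would likely use a more robust drift measure than a single mean.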

Practical Considerations and Best Practices for Sampling

Successfully executing dataset sampling doesn’t just rely on theoretical methods—it depends greatly on understanding data structures, business context, and analytical goals. Always clearly define your objectives and analytical questions before implementing sampling techniques. Misalignment between these elements might result in incorrect or biased interpretations and decisions.

Sampling best practices include comprehensive documentation and clearly defined selection criteria, which make results repeatable, support audit trails, and ease long-term maintenance. Treat sampling methods as integral parts of your broader data strategy, embedded within your organizational culture around data-driven innovation.

Consider partnering with expert consultants specializing in visualization and data interpretation, such as our industry-leading data visualization consulting services. Professional expertise combined with sampled insights can amplify the precision and clarity of your data storytelling and enhance strategic communication, driving business success.

Implementing Sampling Techniques for Analytics and Innovation in Austin, Texas

In a thriving technology hub like Austin, leveraging dataset sampling can offer exceptional insight generation and optimized processing power critical for sustained innovation. Texas businesses seeking competitive differentiation through data analytics will find immense value in exploring sampling techniques that improve speed, reduce cost, and deliver rapid results.

From startup accelerators to Silicon Hills’ enterprise giants, impactful analytics strategies can provide businesses invaluable growth opportunities. Explore our dedicated coverage on 11 ideas for using data analytics in Austin, Texas to further connect dataset sampling to local driving forces in analytics and innovation.

By embracing thoughtful, targeted sampling strategies, Austin-based ventures, enterprises, and public sector organizations can position themselves for future-ready analytics capabilities, effectively navigating data complexity while generating powerful, enlightening insights.

In conclusion, dataset sampling techniques provide invaluable pathways toward efficient, accurate, and agile analytics. Understanding, selecting, and optimizing these techniques lays the foundation supporting true data-driven decision-making and organizational resilience, allowing leadership to pursue business insights confidently and strategically.