by tyler garrett | Jun 12, 2025 | Data Processing

In today’s complex digital ecosystems, streaming applications have shifted from being beneficial tools to mission-critical platforms. Businesses increasingly rely on these real-time data integrations to deliver insights, automate processes, and predict operational outcomes. Yet, the growing dependency exposes organizations to significant risk—when one part of your streaming application falters, it can jeopardize stability across the entire system. Fortunately, adopting the Bulkhead Pattern ensures fault isolation, improving both reliability and resilience of streaming architectures. Want real-world proof of strategies that minimize downtime? Explore our insights on predicting maintenance impacts through data analysis, which effectively illustrates the importance of preemptive fault management in software infrastructures. Let’s dive into how the Bulkhead Pattern can streamline your path to uninterrupted performance and resilient data streaming environments.

Understanding the Bulkhead Pattern Concept

In construction and shipbuilding, a bulkhead is a partition that keeps a leak or failure in one compartment from spreading to the next, preserving the integrity of the entire structure. The concept translates directly into software design as the Bulkhead Pattern: components are isolated and compartmentalized so that the failure of one part does not cascade through the rest of the application infrastructure. By enforcing clear boundaries between application segments, developers and architects guard against unforeseen resource exhaustion and fault propagation, which is particularly critical in streaming applications characterized by high-speed, continuous data flows.

The Bulkhead Pattern not only maintains stability, but enhances overall resilience against faults by isolating troubled processes or streams. If a service undergoes unusual latency or fails, the impact remains confined to its dedicated bulkhead, preventing widespread application performance degradation. This makes it an ideal choice for modern applications, like those powered by robust backend frameworks such as Node.js. If your team is considering strengthening your architecture using Node.js, learn how our specialized Node.js consulting services help implement fault-tolerant designs that keep your streaming apps resilient and responsive.

Effectively adopting the Bulkhead Pattern requires precise identification of resource boundaries and knowledgeable design choices geared towards your application’s specific context. Done right, this approach delivers consistently high availability and maintains a graceful user experience—even during peak traffic or resource-intensive transactions.

When to Implement the Bulkhead Pattern in Streaming Apps

The Bulkhead Pattern is particularly beneficial for streaming applications where real-time data is mission-critical and uninterrupted service delivery is non-negotiable. If your streaming infrastructure powers essential dashboards, financial transactions, or live integrations, any downtime or inconsistent performance can result in poor user experience or lost business opportunities. Implementing a fault isolation strategy helps maintain predictable and stable service delivery during stream processing bottlenecks or unusual spikes in demand.

For example, your streaming application might run numerous streaming pipelines—each handling distinct tasks such as ingestion, transformation, enrichment, and visualization. Consider integrating the Bulkhead Pattern when there’s potential for a single heavy workload to adversely affect the overall throughput. Such scenarios are common, especially in data-intensive industries, where integrating effective temporal sequence visualizations or contextually enriched visualizations can significantly impact performance without fault isolation mechanisms in place.

Another clear indicator for employing a Bulkhead Pattern emerges when your team frequently faces challenges cleaning and merging divergent data streams. This scenario often occurs when businesses routinely deal with messy and incompatible legacy data sets, a process effectively handled through reliable ETL pipelines designed to clean and transform data. By creating logical isolation zones, your streaming application minimizes conflicts and latency, helping keep processing stable when handling intricate data flows.

Core Components and Implementation Techniques

The Bulkhead Pattern implementation primarily revolves around resource isolation strategies and carefully partitioned application structures. It’s necessary to identify and clearly separate critical components that handle intensive computations, transaction volumes, or complex data transformations. Achieving the optimal fault isolation requires skilled awareness of your application’s system architecture, resource dependencies, and performance interdependencies.

Begin by isolating concurrency—limiting concurrent resource access ensures resources required by one process do not hinder another. This is commonly managed through thread pools, dedicated connection pools, or controlled execution contexts. For an application that continuously processes streams of incoming events, assigning event-handling workloads to separate groups of isolated execution threads can significantly enhance reliability and help prevent thread starvation.
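As a rough illustration of concurrency isolation, the sketch below gives each workload its own bounded thread pool using Python’s standard `concurrent.futures`. The pool names, sizes, and the `slow_enrich` and `ingest` functions are hypothetical stand-ins, not part of any particular framework.

```python
import time
from concurrent.futures import ThreadPoolExecutor

# One dedicated, bounded pool per workload: these are the bulkheads.
# Names and sizes are illustrative assumptions.
BULKHEADS = {
    "ingestion": ThreadPoolExecutor(max_workers=2, thread_name_prefix="ingest"),
    "enrichment": ThreadPoolExecutor(max_workers=2, thread_name_prefix="enrich"),
}


def slow_enrich(event):
    """Hypothetical handler that simulates a dependency with unusual latency."""
    time.sleep(0.2)
    return f"enriched:{event}"


def ingest(event):
    """Hypothetical fast handler."""
    return f"ingested:{event}"


# Flood the enrichment bulkhead with slow work.
slow = [BULKHEADS["enrichment"].submit(slow_enrich, i) for i in range(6)]

# Ingestion owns its own threads, so it does not queue behind the slow tasks.
start = time.perf_counter()
fast = [BULKHEADS["ingestion"].submit(ingest, i) for i in range(6)]
ingested = [f.result(timeout=1) for f in fast]
ingest_seconds = time.perf_counter() - start

enriched = [f.result() for f in slow]
for pool in BULKHEADS.values():
    pool.shutdown()
```

Had both workloads shared a single two-thread pool, the ingestion tasks would have waited behind all six slow enrichment calls. With separate pools, the backlog stays inside the enrichment compartment.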

Another key approach is modular decomposition—clearly defining isolated microservices capable of scaling independently. Embracing modular separation allows distinct parts of the application to remain operational, even if another resource-intensive component fails. It is also imperative to consider isolating database operations in strongly partitioned datasets or leveraging dedicated ETL components for effective fault-tolerant data migration. Gain deeper insights on how organizations successfully adopt these techniques by reviewing our actionable insights resulting from numerous ETL implementation case studies.

Additionally, data streams frequently require tailored cross-pipeline data-sharing patterns and formats implemented through message-queuing systems or data brokers. Employing isolation principles within these data exchanges prevents cascading failures: even if one pipeline experiences issues, the others still produce meaningful results without business-critical interruptions.
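One way to picture isolation inside a data exchange is a bounded buffer per pipeline, sketched below with the standard-library `queue` module. The `PipelineChannel` class and the channel names are hypothetical; a production broker would offer its own mechanisms for this, and dropping overflow is only one of several possible policies.

```python
import queue


class PipelineChannel:
    """Bounded per-pipeline buffer; overflow is dropped and counted locally."""

    def __init__(self, name, capacity):
        self.name = name
        self.buffer = queue.Queue(maxsize=capacity)
        self.dropped = 0

    def publish(self, message):
        try:
            self.buffer.put_nowait(message)
            return True
        except queue.Full:
            # Shed load inside this compartment instead of blocking producers.
            self.dropped += 1
            return False


# Illustrative channel names and capacities.
channels = {
    "transform": PipelineChannel("transform", capacity=3),
    "visualize": PipelineChannel("visualize", capacity=3),
}

# The transform consumer has stalled, so nothing drains its buffer.
for i in range(10):
    channels["transform"].publish(i)

# The visualize channel has its own capacity and keeps accepting messages.
accepted = [channels["visualize"].publish(i) for i in range(3)]
```

The stalled pipeline fills and sheds its own buffer while the other channel remains fully available.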

Visualization Essentials—Clear Dashboarding for Fault Detection

Effective and clear dashboards represent an essential strategic tool enabling organizations to recognize faults early, assess their scope, and initiate efficient mitigations upon encountering streaming faults. Implementing the Bulkhead Pattern presents a perfect opportunity to refine your existing visual tooling, guiding prompt interpretation and effective response to system anomalies. Detailed visual encodings and thoughtful dashboard design facilitate instant identification of isolated segment performance, flag problem areas, and promote proactive intervention.

Choosing the right visualization techniques requires understanding proven principles such as the visual encoding channel effectiveness hierarchy. Prioritize quickly discernible visuals like gauge meters or performance dropline charts (see our detailed explanation about event dropline visualizations) pinpointing exactly where anomalies originate in the streaming process. Ensuring visualizations carry embedded context creates self-explanatory dashboards, minimizing response time during critical conditions.

Moreover, clutter-free dashboards simplify the detection of critical events. Implementing tested dashboard decluttering techniques simplifies diagnosing bulkhead-oriented system partitions exhibiting performance degradation. Keeping your visualizations streamlined enhances clarity, complements fault isolation efforts, reinforces rapid fault response, and significantly reduces downtime or degraded experiences among end users.

Database-Level Support in Fault Isolation

While the Bulkhead Pattern is predominantly associated with functional software isolation, efficient data management at the database level often emerges as the backbone for fully effective isolation strategies. Database isolation can range from implementing transaction boundaries and leveraging table partitioning strategies to creating dedicated databases for each service pipeline. Employing isolated databases significantly reduces interference and data contention, allowing your applications to signal problems, isolate faulty streams, and resume business-critical operations with minimal disruption.

When faults occur that necessitate data cleanup, isolation at the database level ensures safe remediation steps. Whether employing targeted deletion operations to remove contaminated records—such as those outlined in our resource on removing data effectively in SQL—or implementing data versioning to retain accurate historical state, database isolation facilitates fault recovery and maintains the integrity of unaffected application services.
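A minimal sketch of that remediation step, assuming each pipeline owns a separate database: here two in-memory SQLite databases stand in for dedicated per-pipeline stores. The table layout, `batch_id` column, and `purge_batch` helper are hypothetical.

```python
import sqlite3


def make_pipeline_db():
    """Each call creates a separate in-memory database (one per pipeline)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE events (id INTEGER PRIMARY KEY, batch_id TEXT, payload TEXT)"
    )
    return conn


orders_db = make_pipeline_db()
clicks_db = make_pipeline_db()

orders_db.executemany(
    "INSERT INTO events (batch_id, payload) VALUES (?, ?)",
    [("b1", "ok"), ("b2", "corrupt"), ("b2", "corrupt"), ("b3", "ok")],
)
clicks_db.executemany(
    "INSERT INTO events (batch_id, payload) VALUES (?, ?)",
    [("b2", "ok"), ("b2", "ok")],
)


def purge_batch(conn, batch_id):
    """Delete one contaminated batch inside a transaction; return rows removed."""
    with conn:  # commits on success, rolls back if an exception is raised
        cur = conn.execute("DELETE FROM events WHERE batch_id = ?", (batch_id,))
    return cur.rowcount


removed = purge_batch(orders_db, "b2")
orders_left = orders_db.execute("SELECT COUNT(*) FROM events").fetchone()[0]
clicks_left = clicks_db.execute("SELECT COUNT(*) FROM events").fetchone()[0]
```

Both pipelines happen to use the batch identifier `b2`, yet the cleanup touches only the orders store because the clicks data lives in a different database.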

Furthermore, database-level fault isolation improves data governance, enabling clearer and more precise audits, easier tracing of data lineage, simpler recovery, and greater user confidence. Ultimately, database-level fault management partnered with software-level Bulkhead Pattern solutions results in robust fault isolation and sustainably increased reliability across your streaming applications.

Final Thoughts: Why Adopt Bulkhead Patterns for Your Streaming App?

Employing the Bulkhead Pattern represents proactive technical leadership: it demonstrates a clear understanding and anticipation of potential performance bottlenecks and resource contention points in enterprise streaming applications. Beyond providing stable user experiences, it contributes to the bottom line by reducing service downtime, enabling proactive fault management, and preventing costly outages or processing interruptions. Companies that successfully integrate the Bulkhead Pattern gain agile responsiveness while maintaining high service quality and improving long-term operational efficiency.

Ready to leverage fault isolation effectively? Let our team of dedicated experts guide you on your next streaming application project to build resilient, fault-tolerant architectures positioned to meet evolving needs and maximize operational reliability through strategic innovation.

by tyler garrett | Jun 12, 2025 | Data Processing

Picture orchestrating a bustling city where thousands of tenants live harmoniously within a limited space. Each resident expects privacy, security, and individualized services, even as they share common infrastructures such as electricity, water, and transportation. When expanding this metaphor into the realm of data and analytics, a multi-tenant architecture faces similar challenges within digital environments. Enterprises today increasingly adopt multi-tenancy strategies to optimize resources, drive efficiencies, and remain competitive. Yet striking a balance between isolating tenants securely and delivering lightning-fast performance can appear daunting. Nevertheless, modern advancements in cloud computing, engineered databases, and dynamically scalable infrastructure make effective isolation without compromising speed not only achievable—but sustainable. In this article, we explore precisely how companies can reap these benefits and confidently manage the complexity of ever-growing data ecosystems.

Understanding Multi-Tenant Architecture: Simultaneous Efficiency and Isolation

Multi-tenancy refers to a software architecture pattern where multiple users or groups (tenants) securely share computing resources, like storage and processing power, within a single environment or platform. Centralizing workloads from different customers or functional domains under a shared infrastructure model generates significant economies of scale by reducing operational costs and resource complexity. However, this arrangement necessitates vigilant control mechanisms that ensure a high degree of tenant isolation, thus protecting each tenant from security breaches, unauthorized access, or resource contention impacting performance.

Primarily, multi-tenant frameworks can be categorized as either isolated-tenant or shared-tenant models. Isolated tenancy provides separate physical or virtual resources for each client, achieving strong isolation but demanding additional operational overhead and higher costs. Conversely, a shared model allows tenants to leverage common resources effectively. Here, the challenge is more pronounced: implementing granular access control, secure data partitioning, and intelligent resource allocation become paramount to achieve both cost-efficiency and adequate isolation.

A robust multi-tenancy architecture integrates best practices such as database sharding (distributing databases across multiple physical nodes), virtualization, Kubernetes-style orchestration for containers, and advanced access control methodologies. Granular privilege management, as seen in our discussion on revoking privileges for secure SQL environments, serves as a foundation in preventing data leaks and unauthorized tenant interactions. Leveraging cutting-edge cloud platforms further enhances these advantages, creating opportunities for effortless resource scaling and streamlined operational oversight.

Data Isolation Strategies: Protecting Tenants and Data Integrity

The bedrock of a successful multi-tenant ecosystem is ensuring rigorous data isolation practices. Such measures shield critical data from unauthorized tenant access, corruption, or loss while facilitating swift and seamless analytics and reporting functions. Several layers and dimensions of isolation must be factored in to achieve enterprise-grade security and performance:

Logical Data Partitioning

Logical partitioning, sometimes called “soft isolation,” leverages schema designs, row-level security, or tenant-specific tablespaces to separate data logically within a unified database. Modern cloud data warehouses like Amazon Redshift facilitate highly customizable logical partitioning strategies, allowing for maximum flexibility while minimizing infrastructure overhead. Our team’s expertise in Amazon Redshift consulting services enables implementing intelligent logical isolation strategies that complement your strategic performance goals.
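To make soft isolation concrete, the sketch below emulates row-level filtering at the application layer with SQLite, which has no built-in row-level security. The `TenantSession` wrapper, table, and tenant names are hypothetical; warehouses that support row-level security natively would enforce the same rule inside the database instead.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE metrics (tenant_id TEXT NOT NULL, name TEXT, value REAL)"
)
conn.executemany(
    "INSERT INTO metrics VALUES (?, ?, ?)",
    [
        ("acme", "revenue", 120.0),
        ("acme", "orders", 8.0),
        ("globex", "revenue", 999.0),
    ],
)


class TenantSession:
    """Hypothetical wrapper: every query is bound to one tenant_id."""

    def __init__(self, conn, tenant_id):
        self._conn = conn
        self._tenant_id = tenant_id

    def metrics(self):
        # The tenant filter is applied here, never supplied by the caller.
        rows = self._conn.execute(
            "SELECT name, value FROM metrics WHERE tenant_id = ? ORDER BY name",
            (self._tenant_id,),
        )
        return rows.fetchall()


acme_rows = TenantSession(conn, "acme").metrics()
globex_rows = TenantSession(conn, "globex").metrics()
```

All tenants share one table, but callers only ever receive a session scoped to their own tenant, so the filter cannot be forgotten in individual queries.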

Physical Data Isolation

In contrast, physical isolation involves distinct infrastructures or databases assigned explicitly to individual tenants, maximizing data safety but introducing increased complexity and resource demands. Deploying a data warehouse within your existing data lake infrastructure can effectively strike a cost-benefit balance, accommodating specifically sensitive use-cases while preserving scalability and efficiency.

Combining logical and physical isolation strategies enables enterprises to optimize flexibility and tenant-specific security needs. Such comprehensive approaches, known as multi-layered isolation methods, help organizations extend control frameworks across the spectrum of data governance and establish a scalable framework that aligns seamlessly with evolving regulatory compliance requirements.

Performance Tuning Techniques for Multi-Tenant Architectures

Achieving uncompromised performance amidst multi-tenancy necessitates precision targeting of both systemic and infrastructural optimization solutions. Engineers and technical leaders must strike the perfect balance between resource allocation, tenant prioritization, monitoring, and governance frameworks, reinforcing both speed and isolation.

Resource Allocation and Management

Proactive strategies around dynamic resource quotas and intelligent workload management significantly enhance performance stability. Cloud native solutions often embed functionalities wherein resources dynamically adapt to distinct tenant needs. Leveraging real-time analytics monitoring with intelligent automatic provisioning ensures consistently high responsiveness across shared tenant systems.
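A per-tenant quota can be sketched with a token bucket, a common rate-limiting technique. This is an illustration under assumptions, not a description of any specific cloud feature: the `TokenBucket` class, tenant names, and rates are hypothetical, and time is passed in explicitly so the behavior is easy to follow.

```python
class TokenBucket:
    """Simple token bucket; the caller supplies the current time in seconds."""

    def __init__(self, rate_per_sec, burst):
        self.rate = rate_per_sec
        self.capacity = burst
        self.tokens = float(burst)
        self.last = 0.0

    def allow(self, now, cost=1.0):
        # Refill in proportion to elapsed time, capped at the burst size.
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


# Illustrative quotas: each tenant draws from its own bucket.
quotas = {
    "acme": TokenBucket(rate_per_sec=1.0, burst=2),
    "globex": TokenBucket(rate_per_sec=5.0, burst=5),
}


def admit(tenant, now):
    return quotas[tenant].allow(now)


acme_burst = [admit("acme", 0.0) for _ in range(4)]  # exceeds acme's burst of 2
globex_ok = admit("globex", 0.0)                     # unaffected by acme's burst
acme_later = admit("acme", 1.0)                      # one token refilled after 1s
```

A tenant that exceeds its quota is throttled on its own, while other tenants keep their full allowance. Adjusting `rate_per_sec` at runtime would be one way to make the quota dynamic.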

Data Pipeline Optimization

Data agility matters significantly. A critical tenant workload handling strategy involves streamlined ETL processes. Effective ETL pipeline engineering can reduce data pipeline latency, accelerate tenant-specific insights, and maintain operational transparency. Likewise, adopting proven principles in ambient data governance will embed automated quality checkpoints within your multi-tenant infrastructure, significantly reducing delays and ensuring accessible, accurate tenant-specific analytics and reporting insights.

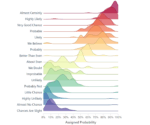

Chart Optimization via Perceptual Edge Detection

Beyond the data, intuitive visualization for accuracy and immediate insight requires methodical implementation of chart optimization techniques, such as perceptual edge detection in chart design. Enhancing visualization clarity ensures that analytics delivered are intuitive, insightful, rapidly processed, and precisely catered to unique tenant contexts.

The Role of Security: Protecting Tenants in a Shared Framework

Security considerations must permeate any discussion of multi-tenant workloads, given the increased complexity inherent in shared digital ecosystems. Secure architecture design includes stringent data access patterns, encrypted communication protocols, and advanced privacy frameworks. As cyber threats evolve, organizations must continuously apply best practices, as detailed in “Safeguarding Information in the Quantum Era,” placing heightened emphasis on privacy through quantum-safe cryptography, endpoint security, and channelized security control validation.

Establishing precise identity access management (IAM) guidelines, automated vulnerability monitoring, and proactive threat alert systems further secures multi-access infrastructures. Comprehensive user-level identification and defined access privileges diminish unnecessary exposure risks, ensuring security measures are deeply intertwined with multi-tenant strategies, not merely added afterward. Invest regularly in tailored implementations of leading-edge security mechanisms, and you’ll achieve a resilient security model that extends seamlessly across disparate tenant spaces without diminishing performance capabilities.

Innovation Through Multi-Tenant Environments: Driving Forward Your Analytics Strategy

Properly executed multi-tenant strategies extend beyond just resource optimization and security. They form a powerful foundation for innovation—accelerating development of impactful analytics, streamlining complex data integrations, and driving organizational agility. Enterprises navigating intricate data landscapes often face the challenge of harmonizing multiple data sources—this resonates with our approach detailed in “Golden Record Management in Multi-Source Environments,” shaping common frameworks to assemble disparate data streams effectively.

Successful multi-tenant analytics platforms promote continuous improvement cycles, often introducing advanced analytical solutions—such as seamlessly integrating TikTok’s analytics data into BigQuery—generating actionable insights that drive strategic decision-making across diverse organizational units or client segments. In short, an intelligently designed multi-tenant architecture doesn’t just offer optimized workload deployment—it serves as a powerful catalyst for sustained analytics innovation.

Conclusion: The Strategic Advantage of Proper Multi-Tenant Management

Effectively managing multi-tenant workloads is critical not only for platform stability and agility but also for sustained long-term organizational advancement. Leveraging advanced isolation mechanisms, intelligent resource optimization, infrastructure tuning, and disciplined security practices enables organizations to maintain impeccable performance metrics without sacrificing necessary tenant privacy or security.

A thoughtfully designed and implemented multi-tenancy strategy unlocks enormous potential for sustained efficiency, robust analytics innovation, enhanced customer satisfaction, and strengthened competitive positioning. Embrace multi-tenant models confidently, guided by strategic oversight, informed by proven analytical expertise, and grounded in data-driven solutions that transform enterprise challenges into lasting opportunities.

by tyler garrett | Jun 12, 2025 | Data Processing

In the fast-paced arena of data-driven decision-making, organizations can’t afford sluggish data analytics that hinder responsiveness and innovation. While computing power and storage capacity have exploded, just throwing processing horsepower at your analytics won’t guarantee peak performance. The savvy technical strategist knows there’s a hidden yet critical component that unlocks true speed and efficiency: data locality. Data locality, the strategic placement of data close to where processing occurs, is the secret weapon behind high-performance analytics. Whether you’re crunching numbers in real-time analytics platforms, training complex machine learning models, or running distributed data pipelines, mastering locality can significantly accelerate insights, lower costs, and deliver a competitive edge. Let’s explore how data locality principles can optimize your analytics infrastructure, streamline your data strategy, and drive transformative results for your organization.

What Exactly Is Data Locality?

Data locality, a concept closely related to ‘locality of reference’, is a fundamental principle in computing: place data physically close to the processing units that execute analytical workloads. The closer your data is to the compute resources performing the calculations, the less time is spent moving it and the faster your applications tend to run. This reduces latency, minimizes network congestion, and boosts throughput, ultimately enabling faster and more responsive analytics experiences.

Understanding and exploiting data locality principles involves optimizing how your software, infrastructure, and data systems interact. Consider a scenario where your analytics workloads run across distributed data clusters. Scattering data sets across geographically distant nodes can introduce unnecessary delays due to network overhead. Cloud, edge, and hybrid on-premise architectures all benefit immensely from locality-focused design. With well-engineered data locality, your team spends less idle time waiting on results and more energy iterating, innovating, and scaling analytics development.

Why Does Data Locality Matter in Modern Analytics?

In today’s landscape, where big data workloads dominate the analytics scene, performance bottlenecks can translate directly into lost opportunities. Every millisecond counts when serving real-time predictions, delivering personalized recommendations, or isolating anomalies. Poor data locality can cause bottlenecks, manifesting as latency spikes and throughput limitations, effectively throttling innovation and negatively impacting your organization’s competitive agility and profitability.

Imagine a streaming analytics pipeline responsible for real-time fraud detection in e-commerce. Delayed results don’t just inconvenience developers; thousands of dollars are potentially at risk if fraud monitoring data isn’t swiftly acted upon. Similar delays negatively affect machine learning applications where time-sensitive forecasts—such as those discussed in parameter efficient transfer learning—rely heavily on immediacy and responsiveness.

In contrast, optimized data locality reduces costs by mitigating inefficient, costly cross-region or cross-cloud data transfers and empowers your organization to iterate faster, respond quicker, and drive innovation. High-performance analytics fueled by locality-focused data architecture not only impacts bottom-line revenue but also boosts your capacity to adapt and evolve in a fiercely competitive technological marketplace.

How Getting Data Locality Right Impacts Your Bottom Line

Adopting a thoughtful approach towards data locality can have profound effects on your organization’s economic efficiency. Companies unaware of data locality’s significance might unknowingly be spending unnecessary amounts of time, resources, and budget attempting to compensate for performance gaps through sheer computing power or additional infrastructure. Simply put, poor optimization of data locality principles equates directly to wasted resources and missed opportunities with substantial revenue implications.

Analyzing operational inefficiencies—such as those identified in insightful articles like finding the 1% in your data that’s costing you 10% of revenue—often reveals hidden locality-related inefficiencies behind frustrating latency issues and escalating cloud bills. Implementing thoughtful data locality strategies ensures compute clusters, data warehouses, and analytics workloads are harmoniously aligned, minimizing latency and enhancing throughput. The overall result: rapid insight extraction, robust cost optimization, and streamlined infrastructure management.

Practitioners leveraging locality-focused strategies find that they can run advanced analytics at lower overall costs by significantly reducing cross-regional bandwidth charges, lowering data transfer fees, and consistently achieving higher performance from existing hardware or cloud infrastructures. A deliberate locality-driven data strategy thus offers compelling returns by maximizing the performance of analytics pipelines while carefully managing resource utilization and operational costs.

Data Locality Implementation Strategies to Accelerate Analytics Workloads

Architectural Decisions That Support Data Locality

One fundamental first step to effective data locality is clear understanding and informed architectural decision-making. When designing distributed systems and cloud solutions, always keep data and compute proximity in mind. Employ approaches such as data colocation, caching mechanisms, or partitioning strategies that minimize unnecessary network involvement, placing compute resources physically or logically closer to the datasets they regularly consume.

For instance, our analysis of polyrepo vs monorepo strategies outlines how effective organization of data and code bases reduces cross-dependencies and enhances execution locality. Architectures that leverage caching layers, edge computing nodes, or even hybrid multi-cloud and on-premise setups can enable stronger data locality and provide high-performance analytics without massive infrastructure overhead.
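The colocation and partitioning ideas in this section can be sketched as co-partitioning: two datasets are split by the same key with the same stable hash, so matching records land in the same partition and a join never has to look across partitions. The partition count, record shapes, and helper names below are illustrative assumptions.

```python
import zlib

NUM_PARTITIONS = 4  # illustrative


def partition_for(key):
    """Stable hash so every dataset maps a given key to the same partition."""
    return zlib.crc32(key.encode("utf-8")) % NUM_PARTITIONS


def partition(records, key_field):
    parts = {p: [] for p in range(NUM_PARTITIONS)}
    for record in records:
        parts[partition_for(record[key_field])].append(record)
    return parts


customers = [
    {"customer_id": "c1", "name": "Ada"},
    {"customer_id": "c2", "name": "Grace"},
    {"customer_id": "c3", "name": "Edsger"},
]
orders = [
    {"order_id": 101, "customer_id": "c2"},
    {"order_id": 102, "customer_id": "c1"},
    {"order_id": 103, "customer_id": "c2"},
    {"order_id": 104, "customer_id": "c3"},
]

customer_parts = partition(customers, "customer_id")
order_parts = partition(orders, "customer_id")

# Join partition by partition; each lookup stays inside one partition.
joined = []
for p in range(NUM_PARTITIONS):
    names = {c["customer_id"]: c["name"] for c in customer_parts[p]}
    for order in order_parts[p]:
        joined.append((order["order_id"], names[order["customer_id"]]))
```

In a distributed setting, each partition pair would live on the same node, so the join runs without shuffling records over the network.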

Software & Framework Selection for Enhanced Locality

Choosing software frameworks and tools purposely designed with data locality at the center also greatly enhances analytics agility. Platforms with built-in locality optimizations such as Apache Spark and Hadoop leverage techniques like locality-aware scheduling to minimize data movement, greatly increasing efficiency. Likewise, strongly typed programming languages—as shown in our guide on type-safe data pipeline development—facilitate better manipulation and understanding of data locality considerations within analytics workflows.

Tools granting fine-grained control over data sharding, clustering configuration, and resource allocation are indispensable in achieving maximum locality advantages. When choosing analytics tools and frameworks, ensure locality options and configurations are clearly defined—making your strategic analytics solution robust, responsive, efficient, and highly performant.
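The locality-aware scheduling mentioned above can be illustrated with a deliberately simplified greedy scheduler. This is not how Spark or Hadoop implement it; the `assign_tasks` function, block names, and node names are hypothetical, and real schedulers also weigh rack locality, wait times, and fairness.

```python
def assign_tasks(block_locations, free_slots):
    """Assign one task per block, preferring a node that holds the block.

    block_locations: {block: [nodes holding a replica]}
    free_slots: {node: number of free task slots}
    Returns {block: (node, "local" | "remote")}.
    """
    slots = dict(free_slots)
    plan = {}
    # Place the most constrained blocks (fewest replicas) first.
    for block in sorted(block_locations, key=lambda b: len(block_locations[b])):
        local = [n for n in block_locations[block] if slots.get(n, 0) > 0]
        if local:
            node, locality = max(local, key=lambda n: slots[n]), "local"
        else:
            candidates = [n for n, free in slots.items() if free > 0]
            if not candidates:
                raise RuntimeError("no free slots left")
            # Falling back to a remote node means the block must be transferred.
            node, locality = max(candidates, key=lambda n: slots[n]), "remote"
        slots[node] -= 1
        plan[block] = (node, locality)
    return plan


blocks = {"b1": ["n1", "n2"], "b2": ["n1"], "b3": ["n1"], "b4": ["n3"]}
free = {"n1": 1, "n2": 1, "n3": 2}

plan = assign_tasks(blocks, free)
local_count = sum(1 for _, locality in plan.values() if locality == "local")
```

In this toy cluster, three of the four tasks run where their data already lives. Only `b3` has to read remotely, because its single replica sits on a node with no free slot left.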

The Long-term Impact: Creating a Culture Around Data Locality

Beyond immediate performance gains, embracing data locality principles cultivates a culture of informed and strategic data practice within your organization. This cultural shift encourages analytical pragmatism, proactive evaluation of technology choices, and establishes deeper technical strategy insights across your technology teams.

By embedding data locality concepts into team knowledge, training, design processes, and even internal discussions around data governance and analytics strategy, organizations ensure long-term sustainability of their analytics investments. Communicating effectively, evangelizing locality benefits, and regularly creating data-driven case studies that win over internal stakeholders all foster sustainable decision-making grounded in measured impact, not anecdotal promises.

This data-centric culture around locality-aware analytical systems allows businesses to respond faster, anticipate challenges proactively, and innovate around analytics more confidently. Investing in a data locality-aware future state isn’t merely technical pragmatism—it positions your organization’s analytics strategy as forward-thinking, cost-effective, and competitively agile.

Ready to Embrace Data Locality for Faster Analytics?

From quicker insights to cost-effective infrastructure, thoughtful implementation of data locality principles unlocks numerous advantages for modern organizations pursuing excellence in data-driven decision-making. If you’re ready to make data faster, infrastructure lighter, and insights sharper, our experts at Dev3lop can guide your organization with comprehensive data warehousing consulting services in Austin, Texas.

Discover how strategic data locality enhancements can transform your analytics landscape. Keep data local, keep analytics fast—accelerate your innovation.

by tyler garrett | Jun 12, 2025 | Data Processing

In the fast-paced, data-centric business landscape of today, leaders stand at the crossroads of complex decisions affecting systems reliability, efficiency, and data integrity. Understanding how data moves and how it recovers from errors can mean the difference between confidence-driven analytics and uncertainty-plagued reports. Whether you’re refining sophisticated analytics pipelines, building real-time processing frameworks, or orchestrating robust data architectures, the debate on exactly-once versus at-least-once processing semantics is bound to surface. Let’s explore these pivotal concepts, dissect the nuanced trade-offs involved in error recovery strategies, and uncover strategic insights for better technology investments. After reading this, you’ll have an expert angle for making informed decisions to optimize your organization’s analytical maturity and resilience.

The Basics: Exactly-Once vs At-Least-Once Semantics in Data Processing

To build resilient data pipelines, decision-makers must understand the fundamental distinction between exactly-once and at-least-once processing semantics. At-least-once delivery guarantees that every message or event is processed at least one time, even if this means occasionally processing the same message more than once after an error. Although robust and simpler to implement, this approach can produce duplicate data, so downstream analytics must handle deduplication explicitly. Conversely, exactly-once semantics ensure that the effect of each data point is applied precisely one time: no more, no less. Achieving exactly-once processing is complex and resource-intensive, as it requires stateful checkpoints, transaction logs, and deduplication mechanisms designed into your pipelines from the start.

The deciding factor often hinges upon what use cases your analytics and data warehousing teams address. For advanced analytics applications outlined in our guide on types of descriptive, diagnostic, predictive, and prescriptive analytics, accuracy and non-duplication become paramount. A financial transaction or inventory system would naturally gravitate toward the guarantee that exactly-once processing provides. Yet many operational monitoring use cases effectively utilize at-least-once semantics coupled with downstream deduplication, accepting slightly more complexity at the query or interface layer in exchange for simpler upstream processing.
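The difference between the two approaches is easiest to see with a lost acknowledgment. The sketch below simulates at-least-once delivery and compares a naive consumer with an idempotent one that deduplicates by message ID. The delivery loop, message shape, and handler names are hypothetical and stand in for a real broker and consumer.

```python
def deliver_at_least_once(messages, handler, lose_first_ack_for):
    """Redeliver a message whenever its acknowledgment is (simulated as) lost."""
    deliveries = 0
    for msg in messages:
        attempt = 0
        while True:
            attempt += 1
            deliveries += 1
            handler(msg)
            ack_lost = msg["id"] in lose_first_ack_for and attempt == 1
            if not ack_lost:
                break
    return deliveries


messages = [
    {"id": "m1", "amount": 10},
    {"id": "m2", "amount": 5},
    {"id": "m3", "amount": 7},
]

# Naive consumer: applies every delivery, so redelivery double counts.
naive = {"total": 0}


def naive_handler(msg):
    naive["total"] += msg["amount"]


# Idempotent consumer: remembers processed IDs and skips duplicates.
seen_ids = set()
dedup = {"total": 0}


def idempotent_handler(msg):
    if msg["id"] in seen_ids:
        return
    seen_ids.add(msg["id"])
    dedup["total"] += msg["amount"]


naive_deliveries = deliver_at_least_once(messages, naive_handler, {"m2"})
dedup_deliveries = deliver_at_least_once(messages, idempotent_handler, {"m2"})
```

Both consumers receive four deliveries for three messages. The naive total is inflated by the repeated `m2`, while the idempotent consumer arrives at the correct sum, which is the downstream deduplication trade-off described above.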

The Cost of Reliability: Complexity vs Simplicity in Pipeline Design

Every architectural decision has attached costs—exactly-once implementations significantly amplify the complexity of your data workflows. This increase in complexity correlates directly to higher operational costs: significant development efforts, rigorous testing cycles, and sophisticated tooling. As a business decision-maker, you need to jointly consider not just the integrity of the data but the return on investment (ROI) and time-to-value implications these decisions carry.

With exactly-once semantics, your teams need powerful monitoring, tracing, and data quality validation frameworks ingrained into your data pipeline architecture to identify, trace, and rectify any issues proactively. Advanced features like checkpointing, high-availability storage, and idempotency mechanisms become non-negotiable. Meanwhile, the at-least-once approach provides relative simplicity in upstream technical complexity, shifting the deduplication responsibility downstream. It can lead to a more agile, streamlined pipeline delivery model, with teams able to iterate rapidly, plugging easily into your existing technology stack. However, this inevitably requires smarter analytics layers or flexible database designs capable of gracefully handling duplicate entries.

Performance Considerations: Latency & Throughput Trade-Off

Decision-makers often wonder about the implications on performance metrics like latency and throughput when choosing exactly-once over at-least-once processing semantics. Exactly-once processing necessitates upstream and downstream checkpointing, acknowledgment messages, and sophisticated downstream consumption coordination—resulting in added overhead. This can increase pipeline latency, potentially impacting performance-critical applications. Nevertheless, modern data engineering advances, including efficient stream processing engines and dynamic pipeline generation methodologies, have dramatically improved the efficiency and speed of exactly-once mechanisms.

In our experience deploying pipelines for analytical and operational workloads, exactly-once mechanisms can be streamlined through careful integration and optimization, bringing latency within acceptable ranges for many real-time use cases. Yet for high-throughput applications where latency is already pushing critical limits, choosing simpler at-least-once semantics with downstream deduplication might allow a more performant, simplified data flow. Such scenarios demand smart data architecture practices like those described in our detailed guide on automating impact analysis for schema changes, helping businesses maintain agile, responsive analytics environments.

Error Recovery Strategies: Designing Robustness into Data Architectures

Error recovery design can significantly influence whether exactly-once or at-least-once implementation is favorable. Exactly-once systems rely on well-defined state management and cooperative stream processors capable of performing transactional restarts to recover from errors without duplication or data loss. Innovative architectural models, even at scale, leverage stateful checkpointing that enables rapid rollback and restart mechanisms. The complexity implied in such checkpointing and data pipeline dependency visualization tools often necessitates a significant upfront investment.
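
The checkpoint-and-restart idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how any particular stream processor implements it: the event source is a replayable list, the only state is a running total, and the helper names (`save_checkpoint`, `load_checkpoint`, `run`) are hypothetical. The key point is that the offset and the state are written together in one atomic step, so a restart never sees one without the other.

```python
import json
import os
import tempfile


def save_checkpoint(path, offset, state):
    """Write offset and state together, then swap the file into place."""
    tmp_path = path + ".tmp"
    with open(tmp_path, "w") as f:
        json.dump({"offset": offset, "state": state}, f)
        f.flush()
        os.fsync(f.fileno())
    os.replace(tmp_path, path)  # rename is atomic on POSIX file systems


def load_checkpoint(path):
    """Return (offset, state), or a fresh start if no checkpoint exists."""
    if not os.path.exists(path):
        return 0, {"total": 0}
    with open(path) as f:
        data = json.load(f)
    return data["offset"], data["state"]


def run(events, path, crash_at=None):
    """Process events from the last checkpoint; optionally simulate a crash."""
    offset, state = load_checkpoint(path)
    for i in range(offset, len(events)):
        if crash_at is not None and i == crash_at:
            raise RuntimeError("simulated crash")
        state["total"] += events[i]
        save_checkpoint(path, i + 1, state)
    return state["total"]


events = [3, 4, 5, 6]
checkpoint_path = os.path.join(tempfile.mkdtemp(), "checkpoint.json")

try:
    run(events, checkpoint_path, crash_at=2)  # fails before the third event
except RuntimeError:
    pass

# The restart resumes at offset 2, so no event is counted twice or skipped.
recovered_total = run(events, checkpoint_path)
print(recovered_total == sum(events))
```

Checkpointing after every event keeps the sketch simple but would be costly at scale. Real systems usually checkpoint periodically, and the guarantee covers only the checkpointed state: side effects sent to external systems still need transactions or idempotent writes.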

In at-least-once processing, error recovery leans on simpler methods such as message replay upon failures. This simplicity translates into more straightforward deployment cycles. The downside, again, is the risk of duplicated data, which necessitates comprehensive deduplication strategies downstream in storage, analytics, or reporting layers. If your focus centers on consistent resilience and strict business compliance, exactly-once semantics handle error recovery cleanly, albeit with higher infrastructure cost and complexity. Conversely, where constrained budgets or short implementation cycles weigh heavily, at-least-once processing combined with intelligent deduplication offers agility and rapid delivery.

Data Governance and Control: Navigating Regulatory Concerns

Compliance and regulatory considerations shape technical requirements profoundly. Exactly-once systems intrinsically mitigate risks associated with data duplication and reduce the potential for compliance infractions caused by duplicated transactions. Well-engineered exactly-once pipelines simplify adherence to regulatory environments that require rigorous traceability and audit trails, such as financial services or healthcare, where data integrity is mission-critical. Exactly-once semantics also align closely with successful implementation of data sharing technical controls, maintaining robust governance frameworks around data lineage, provenance, and audit capabilities.

However, in some analytics and exploratory scenarios, strict compliance requirements may be relaxed in favor of speed, innovation, and agility. Here, selecting at-least-once semantics could allow quicker pipeline iterations with reduced initial overhead—provided there is sufficient downstream oversight ensuring data accuracy and governance adherence. Techniques highlighted in our expertise-focused discussion about custom vs off-the-shelf solution evaluation frequently assist our clients in making informed selections about balancing data governance compliance needs against innovative analytics agility.

Choosing the Right Approach for Your Business Needs

At Dev3lop, we’ve guided numerous clients in choosing optimal processing semantics based on clear, strategic evaluations of their business objectives. Exactly-once processing might be indispensable if your organization handles transactions in real time and demands stringent consistency, precise reporting, and dependable analytics insights. We support clients with techniques such as explanatory visualizations and annotations, making analytics trustworthy to executives who depend heavily on accurate and duplicate-free insights.

Alternatively, if you require rapid development cycles and minimal infrastructure management overhead, and can accept reasonable downstream complexity, at-least-once semantics afford powerful opportunities. By aligning your architectural decisions closely with your organizational priorities, from analytics maturity and budget constraints to compliance considerations and operational agility, you ensure an optimized trade-off that maximizes your business outcomes. Whichever semantic strategy fits best, our data warehousing consulting services in Austin, Texas, provide analytics leaders with deep expertise, practical insights, and strategic recommendations emphasizing innovation, reliability, and measurable ROI.

by tyler garrett | Jun 12, 2025 | Data Processing

In today’s data-driven landscape, performance bottlenecks become painfully obvious, especially when handling datasets larger than system memory. As your analytics workload grows, the gap between the sheer volume of data and the speed at which your hardware can access and process it becomes a significant barrier to real-time insights and strategic decision-making. This phenomenon, commonly known as the “Memory Wall,” confronts technical teams and decision-makers with critical performance constraints. Understanding this challenge—and architecting data strategies to overcome it—can transform organizations from reactive players into proactive innovators. Let’s dissect the implications of managing working sets larger than RAM and explore pragmatic strategies to scale beyond these limitations.

Understanding the Memory Wall and Its Business Impact

The Memory Wall refers to the widening performance gap between CPU speeds and memory access times, a gap that is magnified when your working data set no longer fits within available RAM. Historically, CPU performance improved steadily while memory latency improved far more slowly. As data-driven workloads continue expanding, organizations quickly realize that datasets surpassing available memory create major performance bottlenecks. Whenever the working set exceeds your system’s RAM, a share of accesses must fall back to much slower disk storage. This reliance can grind otherwise responsive applications to a halt, severely impacting real-time analytics crucial to agile decision-making. Consequently, decision-makers face not only degraded performance but also diminished organizational agility, incurring considerable operational and strategic costs.
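
A back-of-the-envelope calculation shows why even a small share of storage accesses hurts so much. The Python sketch below computes the average access time as a weighted mix of RAM and storage latency. The two latency figures are illustrative order-of-magnitude assumptions, not measurements of any specific hardware, and the function name `effective_access_ns` is hypothetical.

```python
def effective_access_ns(hit_ratio, ram_ns, storage_ns):
    """Average access time when a fraction `hit_ratio` of reads is served from RAM."""
    return hit_ratio * ram_ns + (1.0 - hit_ratio) * storage_ns


RAM_NS = 100          # assumed RAM access latency, in nanoseconds
STORAGE_NS = 100_000  # assumed storage read latency (100 microseconds)

for hit_ratio in (1.0, 0.99, 0.90):
    avg = effective_access_ns(hit_ratio, RAM_NS, STORAGE_NS)
    slowdown = avg / RAM_NS
    print(f"hit ratio {hit_ratio:.2f}: {avg:,.0f} ns on average, about {slowdown:.0f}x RAM")
```

Under these assumed figures, sending just 1% of accesses to storage makes the average access roughly eleven times slower than RAM alone, and 10% makes it roughly a hundred times slower.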

For example, data-intensive business applications—like construction management tools integrated via a robust Procore API—might witness reduced effectiveness when memory constraints become apparent. Timely insights generated through real-time analytics can quickly elude your grasp due to slow data access times, creating delays, miscommunication, and potential errors across collaborating teams. This bottleneck can impede data-driven initiatives, impacting everything from forecasting and scheduling optimization to resource management and client satisfaction. In worst-case scenarios, the Memory Wall limits crucial opportunities for competitive differentiation, dampening innovation momentum across the enterprise.

Symptoms of Memory Wall Constraints in Data Systems

Recognizing symptoms early can help mitigate the challenges posed when working sets surpass the available RAM. The most common sign is a dramatic slowdown that coincides with larger data sets. When a dataset no longer fits comfortably in RAM, your system must constantly fetch data from storage devices, leading to increased response times and sharply reduced throughput. Additionally, frequent paging, the transfer of data blocks between memory and storage, becomes a noticeable performance bottleneck that organizations must carefully monitor and mitigate.

Another symptom is increased pressure on your network and storage subsystems, as frequent data fetching from external storage layers multiplies stress on these infrastructures. Applications that once responded quickly, like interactive visual analytics or fast-turnaround reporting, suddenly experience long load times, delays, or even complete timeouts. To visualize such potential bottlenecks proactively, organizations can adopt uncertainty visualization techniques for statistical data. These visual techniques help teams identify bottlenecks in advance and adjust their infrastructure before problems force a reaction.

Businesses relying heavily on smooth and continuous workflows, for instance, managers utilizing platforms enriched with timely analytics data or those dependent on accelerated data processing pipelines, will feel the Memory Wall acutely. Ultimately, symptoms include not just technical consequences but organizational pain—missed deadlines, compromised project timelines, and dissatisfied stakeholders needing quick decision-making reassurance.

Strategic Approaches for Tackling the Memory Wall Challenge

Overcoming the Memory Wall requires thoughtful, strategic approaches that leverage innovative practices optimizing data movement and access. Embedding intelligence into data workflows provides a concrete pathway to improved performance. For instance, advanced data movement techniques, such as implementing payload compression strategies in data movement pipelines, can drastically enhance throughput and reduce latency when your datasets overflow beyond RAM.
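
As a small illustration of payload compression, the Python sketch below serializes a batch of records to JSON and compresses it with the standard library's `zlib` module before it would be moved between pipeline stages. The record layout and the helper names `compress_payload` and `decompress_payload` are hypothetical; the achievable ratio depends heavily on how repetitive your data is.

```python
import json
import zlib


def compress_payload(records, level=6):
    """Serialize records to JSON bytes and return (raw, compressed)."""
    raw = json.dumps(records).encode("utf-8")
    return raw, zlib.compress(raw, level)


def decompress_payload(blob):
    """Reverse compress_payload: decompress, then parse the JSON."""
    return json.loads(zlib.decompress(blob).decode("utf-8"))


# Hypothetical, highly repetitive sensor records.
records = [
    {"sensor": "pump-1", "status": "ok", "reading": i % 10}
    for i in range(1000)
]

raw, packed = compress_payload(records)
restored = decompress_payload(packed)

print(f"raw: {len(raw)} bytes, compressed: {len(packed)} bytes")
print("round trip intact:", restored == records)
```

Compression trades CPU time for fewer bytes moved, so it tends to pay off when the pipeline is bound by I/O or network bandwidth rather than by compute.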

Moreover, adopting computational storage solutions, where processing occurs at storage level—a strategy deeply explored in our recent article Computational Storage: When Processing at the Storage Layer Makes Sense—can become integral in bypassing performance issues caused by limited RAM. Such architectures strategically reduce data movement by empowering storage systems with compute capabilities. This shift significantly minimizes network and memory bottlenecks by processing data closer to where it resides.

Additionally, implementing intelligent caching strategies, alongside effective memory management techniques like optimized indexing, partitioning, and granular data access patterns, allows businesses to retrieve relevant subsets rapidly rather than fetching massive datasets. Advanced strategies leveraging pipeline-as-code: infrastructure definition for data flows help automate and streamline data processing activities, equipping organizations to scale past traditional RAM limitations.
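
One simple form of granular data access is to stream a dataset in bounded chunks rather than loading it whole. The Python sketch below aggregates a CSV source this way, using only the standard library. The column names, the tiny in-memory sample, and the helpers `iter_chunks` and `aggregate_by_key` are assumptions made for illustration; in practice the source would be a file or query result far larger than RAM.

```python
import csv
import io
from collections import defaultdict


def iter_chunks(rows, chunk_size):
    """Group an iterator of rows into lists of at most chunk_size rows."""
    chunk = []
    for row in rows:
        chunk.append(row)
        if len(chunk) == chunk_size:
            yield chunk
            chunk = []
    if chunk:
        yield chunk


def aggregate_by_key(csv_file, key, value, chunk_size=2):
    """Sum `value` per `key`, holding only one chunk of rows in memory at a time."""
    totals = defaultdict(float)
    reader = csv.DictReader(csv_file)  # reads lazily, row by row
    for chunk in iter_chunks(reader, chunk_size):
        for row in chunk:
            totals[row[key]] += float(row[value])
    return dict(totals)


# Small in-memory stand-in for a large file.
sample = io.StringIO(
    "region,sales\n"
    "east,10\n"
    "west,5\n"
    "east,2.5\n"
    "west,1\n"
    "north,4\n"
)
totals = aggregate_by_key(sample, "region", "sales")
print(totals)
```

Memory use here is bounded by the chunk size plus the number of distinct keys, not by the size of the input, which is the property that lets this pattern scale past available RAM.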

Modernizing Infrastructure to Break the Memory Wall

Modernizing your enterprise infrastructure can substantially lower performance walls. Scalable cloud infrastructure, for instance, lets you provision memory and compute resources on demand, well beyond what a single on-premises server typically offers. Cloud platforms and serverless computing allocate resources dynamically, helping your workload stay supported as dataset size grows. Similarly, embracing distributed metadata management architecture offers effective long-term solutions. This approach breaks down monolithic workloads into smaller units processed simultaneously across distributed systems, dramatically improving responsiveness.

Additionally, investments in solid-state drives (SSDs) and Non-Volatile Memory Express (NVMe) storage technologies offer substantially faster data retrieval compared to legacy storage methods. NVMe enables high-speed data transfers even when memory constraints hinder a traditional architecture, which softens the penalty when data must be read from storage instead of RAM. Hence, upgrading data storage systems and modernizing infrastructure becomes non-negotiable for data-driven organizations seeking robust scalability and enduring analytics excellence.

Strategic partnering also makes sense: rather than constantly fighting infrastructure deficiencies alone, working with expert consultants specializing in innovative data solutions ensures infrastructure modernization. As highlighted in our popular article, Consultants Aren’t Expensive, Rebuilding IT Twice Is, experts empower organizations with methods, frameworks, and architectures tailored specifically for large data workloads facing Memory Wall challenges.

Cultivating Collaboration Through Working Sessions and Training

Overcoming the Memory Wall isn’t purely a technological challenge; it also requires targeted collaboration and training across IT and analytics teams. By cultivating a culture of informed collaboration, organizations can anticipate issues related to large working sets. Well-facilitated working sessions reduce miscommunication in analytics projects, streamline problem-solving, and align distributed stakeholders on shared infrastructure and data management practices, which makes Memory Wall constraints far easier to address.

Throughout the organization, enhanced training for IT and development staff in memory optimization, distributed system design, and analytics infrastructure improvement fosters proactive resource monitoring and allocation strategies. Encouraging the continuous adoption of optimization best practices—like ensuring prompt updates of visual analytics software or adopting efficient techniques, such as Tableau’s quick-win date buckets—can offer impactful incremental improvements that significantly enhance user experience, even as data continues scaling upwards.

This structured approach to training promotes agile responsiveness as data grows, encouraging constant innovation and improvement. By equipping teams to understand, anticipate, and tackle Memory Wall challenges, decision-makers ensure resilience and continue driving business value from data, both of which organizations need for competitive differentiation in today’s fast-paced technology landscape.

Conclusion: Breaking Through the Memory Wall

Organizations that proactively understand and strategically overcome the Memory Wall can effectively scale their data-driven operations and analytics capabilities. By implementing smart technology practices, modernizing infrastructure, and fostering proactive internal collaboration, businesses can break through memory constraints. Addressing these problems strategically helps teams turn seemingly challenging bottlenecks into business opportunities: clearer pathways for innovation, increased organizational agility, and powerful competitive differentiation.

Ready to tackle your organization’s Memory Wall challenges head-on? Partnering with experienced consultants who specialize in data, analytics, and innovation is key. Discover how Dev3lop can elevate your organizational agility—let’s collaborate to transform your data challenges into strategic advantages.