by tyler garrett | Jun 12, 2025 | Data Processing

In a digital-first world, multimedia underpins a growing share of business decisions. Whether you’re streaming high-definition video, analyzing user-generated photos, or processing audio files for marketing insights, extracting and managing metadata from binary blobs is essential. Metadata enhances searchability, enables targeted analytics, improves user experiences, and even strengthens security and compliance. As businesses grow their reliance on sophisticated multimedia assets, understanding how to engineer smarter multimedia pipelines can give your company a significant competitive edge. Through advanced pipelines, you can rapidly extract precise metadata, enrich your media assets, and stay ahead of industry demands. Let’s explore how you can unlock maximum value by systematically mining the metadata hidden within binary blobs.

Understanding Binary Blobs in Multimedia

Before exploring metadata extraction strategies, it’s crucial to clearly understand binary blobs, or Binary Large Objects. Binary blobs are files that typically contain multimedia data such as images, audio, or video stored in databases or distributed storage systems. Unlike structured textual data, binary blobs don’t inherently reveal insights or information; they require extraction of embedded metadata. Understanding binary blobs is fundamental for designing effective data warehousing solutions, as they often form part of larger analytical pipelines.

Multimedia pipelines process these binary files through automation: they systematically parse video frames, audio waveforms, photo metadata, and associated file headers. Equipped with high-quality metadata (file format, creation dates, geolocation coordinates, resolution, bitrate, codec information, author information, and licensing details), analytics teams can build better AI models, richer content recommendation platforms, and more targeted advertising initiatives, and can write compliance checks tailored to their industry’s regulations and standards.

The complexity of handling multimedia blobs requires specialized skills, from accurately interpreting headers and file properties to dealing with potential anomalies in data structures. Effective multimedia pipelines are agile, capable of handling diverse file types ranging from compressed JPEG images to high-resolution video files, ultimately ensuring better business intelligence and more informed decision-making processes.
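
As a rough illustration of what interpreting headers involves, the sketch below guesses a blob's format from its leading "magic" bytes and reads the pixel dimensions from a PNG header. The function names and the small signature table are hypothetical choices made for this example, not part of any particular library, and a production pipeline would cover many more formats.

```python
import struct
from typing import Tuple

# Leading "magic" bytes for a few common formats (a small illustrative subset).
MAGIC_SIGNATURES = {
    b"\x89PNG\r\n\x1a\n": "png",
    b"\xff\xd8\xff": "jpeg",
    b"GIF87a": "gif",
    b"GIF89a": "gif",
}


def sniff_format(blob: bytes) -> str:
    """Guess the file format from the first bytes of a blob."""
    for signature, name in MAGIC_SIGNATURES.items():
        if blob.startswith(signature):
            return name
    # RIFF containers store their subtype at bytes 8..12 (for example "WAVE").
    if blob[:4] == b"RIFF" and blob[8:12] == b"WAVE":
        return "wav"
    return "unknown"


def png_dimensions(blob: bytes) -> Tuple[int, int]:
    """Read (width, height) from the IHDR chunk of a PNG blob."""
    if sniff_format(blob) != "png":
        raise ValueError("not a PNG blob")
    # Layout: 8-byte signature, 4-byte chunk length, 4-byte chunk type "IHDR",
    # then width and height as big-endian unsigned 32-bit integers.
    if len(blob) < 24 or blob[12:16] != b"IHDR":
        raise ValueError("truncated or malformed PNG header")
    width, height = struct.unpack(">II", blob[16:24])
    return width, height
```

Raising on malformed headers, rather than returning a default, keeps anomalies visible to whatever error handling the surrounding pipeline provides.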

Metadata Extraction: Leveraging Automation Effectively

Automation is the cornerstone when it comes to extracting metadata efficiently. Manual extraction of multimedia metadata at scale is unrealistic due to time constraints, human error risks, and high costs. Leveraging automated extraction pipelines allows organizations to rapidly and accurately parse important information from binary files, significantly speeding up downstream analytics and decision-making.

Automated multimedia pipelines can employ advanced scripting, APIs, sophisticated parsing algorithms, and even artificial intelligence to rapidly process large volumes of multimedia data. For instance, employing cloud-based vision APIs or open-source libraries enables automatic extraction of geolocation, timestamps, camera information, and copyrights from images and videos. Similarly, audio files can yield metadata that reveals duration, bit rate, sample rate, encoding format, and even transcription details. These automation-driven insights help businesses tailor their offerings, optimize customer interactions, fulfill compliance requirements, and fuel critical business analytics.
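
For audio, a minimal sketch of this kind of extraction can be written with Python's standard `wave` module, assuming the blob is an uncompressed PCM WAV file. The helper names `wav_metadata` and `make_silent_wav` are made up for this example; the second one only exists so the sketch can run without a real file.

```python
import io
import wave


def wav_metadata(blob: bytes) -> dict:
    """Extract basic technical metadata from an uncompressed WAV blob."""
    with wave.open(io.BytesIO(blob), "rb") as wav:
        frames = wav.getnframes()
        rate = wav.getframerate()
        channels = wav.getnchannels()
        sample_width = wav.getsampwidth()  # bytes per sample
    return {
        "channels": channels,
        "sample_rate_hz": rate,
        "bits_per_sample": sample_width * 8,
        "duration_s": frames / rate if rate else 0.0,
        # PCM bit rate follows directly from rate, channels, and sample size.
        "bit_rate_bps": rate * channels * sample_width * 8,
    }


def make_silent_wav(seconds: float = 1.0, rate: int = 8000) -> bytes:
    """Build a small mono 16-bit WAV in memory for demonstration."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(rate)
        wav.writeframes(b"\x00\x00" * int(seconds * rate))
    return buffer.getvalue()
```

Calling `wav_metadata(make_silent_wav(2.0))` returns a dictionary describing a two-second mono clip. Compressed formats such as MP3 or AAC need a dedicated parser or a third-party library.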

However, not all pipelines are created equal. Ensuring efficient automation requires insightful planning, careful understanding of project requirements and stakeholder expectations, as well as establishing robust debugging and quality assurance measures. Smart automation not only speeds up metadata extraction but also frees resources for innovation, expansion, and strategic thinking.

Best Practices in Multimedia Metadata Extraction

While automation is the foundation of pipeline efficiency, adhering to best practices ensures accuracy, reduces errors, and streamlines operations. Let’s explore several best practices to consider:

Prioritize Metadata Schema Design

Before extraction begins, carefully define metadata schemas or structured data templates. Clearly defining schema ensures uniformity and easier integration into existing analytics frameworks. Consider relevant industry standards and formats when defining schemas, as aligning your metadata structures with widely accepted practices reduces transition friction and enhances compatibility. Partnering with seasoned professionals specializing in multimedia analytics also pays off, ensuring your schema properly supports downstream data warehousing and analysis needs.
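
One lightweight way to pin down such a schema is a typed record that every extractor must produce. The sketch below is only an illustration: the `MediaMetadata` class and its field names are assumptions for this example, and a real schema would follow whichever industry standard your organization adopts.

```python
from dataclasses import asdict, dataclass
from datetime import datetime
from typing import Optional

ALLOWED_MEDIA_TYPES = {"image", "audio", "video"}


@dataclass(frozen=True)
class MediaMetadata:
    """Hypothetical common schema for records emitted by every extractor."""
    blob_id: str
    media_type: str                       # one of ALLOWED_MEDIA_TYPES
    file_format: str                      # for example "png" or "wav"
    size_bytes: int
    created_at: Optional[datetime] = None  # must be timezone-aware if set
    width_px: Optional[int] = None
    height_px: Optional[int] = None
    duration_s: Optional[float] = None
    codec: Optional[str] = None
    license: Optional[str] = None

    def __post_init__(self):
        # Validate at construction time so bad records never reach the warehouse.
        if self.media_type not in ALLOWED_MEDIA_TYPES:
            raise ValueError(f"unknown media_type: {self.media_type!r}")
        if self.size_bytes < 0:
            raise ValueError("size_bytes must be non-negative")
        if self.created_at is not None and self.created_at.tzinfo is None:
            raise ValueError("created_at must be timezone-aware")

    def to_row(self) -> dict:
        """Flatten to a plain dict suitable for loading into a table."""
        row = asdict(self)
        if self.created_at is not None:
            row["created_at"] = self.created_at.isoformat()
        return row
```

Optional fields default to `None`, so an image record and an audio record can share one table without inventing placeholder values.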

Ensure Robust Error Handling and Logging

Errors can creep into automated processes, particularly when dealing with diverse multimedia formats. Implement comprehensive logging mechanisms and clear error diagnostics strategies—your technical team can leverage best-in-class data debugging techniques and tools to quickly identify and correct issues. Robust error-handling capabilities provide confidence in pipeline data quality, saving valuable resources by minimizing manual troubleshooting and potential reprocessing operations.
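
A minimal sketch of this idea, assuming each extractor is a plain function from bytes to a dictionary: failures are logged and collected instead of aborting the whole batch. The `extract_all` name and the shape of the failure records are illustrative assumptions.

```python
import logging
from typing import Callable, Iterable, List, Tuple

logger = logging.getLogger("media_pipeline")


def extract_all(
    blobs: Iterable[Tuple[str, bytes]],
    extractor: Callable[[bytes], dict],
) -> Tuple[List[dict], List[dict]]:
    """Run an extractor over (blob_id, blob) pairs, isolating failures."""
    results: List[dict] = []
    failures: List[dict] = []
    for blob_id, blob in blobs:
        try:
            metadata = extractor(blob)
        except Exception as exc:
            # Broad on purpose: malformed media can fail in many different ways.
            logger.warning("extraction failed for %s: %s", blob_id, exc)
            failures.append(
                {"blob_id": blob_id, "error": type(exc).__name__, "detail": str(exc)}
            )
            continue
        results.append({"blob_id": blob_id, **metadata})
    return results, failures
```

Keeping the failure list separate from the results makes it straightforward to route bad blobs to a review queue or a later reprocessing run.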

Optimize Pipelines through Recursive Structures

Multimedia data is often organized hierarchically: container formats such as MP4, for example, nest boxes inside other boxes, so extraction needs recursive techniques. Handling recursive data demands precision, preemptive troubleshooting, and optimization. For details on tackling these challenges, consider exploring our comprehensive article on managing hierarchical data and recursive workloads. Success hinges on agility, smart architecture, and deliberate choices informed by deep technical insight.
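
To make the recursion concrete, here is a small sketch that walks the nested box structure used by MP4-style files (the ISO base media file format). Each box starts with a 4-byte big-endian size and a 4-byte type. The list of container types is deliberately a small subset, and `walk_boxes` and `make_box` are names invented for this example.

```python
import struct
from typing import Iterator, Optional, Tuple

# Box types that contain other boxes (an illustrative subset).
CONTAINER_BOXES = {b"moov", b"trak", b"mdia", b"minf", b"stbl"}


def walk_boxes(
    data: bytes, start: int = 0, end: Optional[int] = None, depth: int = 0
) -> Iterator[Tuple[int, str, int]]:
    """Yield (depth, box_type, payload_size), descending into containers."""
    end = len(data) if end is None else end
    offset = start
    while offset + 8 <= end:
        size, box_type = struct.unpack(">I4s", data[offset:offset + 8])
        header = 8
        if size == 1:
            # A size of 1 means a 64-bit size follows the type field.
            if offset + 16 > end:
                raise ValueError(f"truncated box header at offset {offset}")
            size = struct.unpack(">Q", data[offset + 8:offset + 16])[0]
            header = 16
        elif size == 0:
            # A size of 0 means the box runs to the end of the enclosing range.
            size = end - offset
        if size < header or offset + size > end:
            raise ValueError(f"malformed box at offset {offset}")
        yield depth, box_type.decode("latin-1"), size - header
        if box_type in CONTAINER_BOXES:
            yield from walk_boxes(data, offset + header, offset + size, depth + 1)
        offset += size


def make_box(box_type: bytes, payload: bytes = b"") -> bytes:
    """Build a box with a 32-bit size header, for demonstration only."""
    return struct.pack(">I4s", 8 + len(payload), box_type) + payload
```

Bounding each recursive call by the parent's byte range means a corrupt size field raises an error instead of letting the parser read past its container.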

Addressing Seasonality and Scalability in Multimedia Pipelines

For businesses that rely heavily on multimedia content, seasonal events can severely affect processing workloads. Multimedia uploads often fluctuate with market trends, special events, or seasonal effects such as holidays or industry-specific peaks. Properly architecting pipelines to handle seasonality effects is crucial, requiring deliberate capacity planning, foresighted algorithmic adaptation, and strategic scaling capabilities.

Cloud architectures, containerization, and scalable microservices are modern solutions often employed to accommodate fluctuating demand. These infrastructure tools can support high-performance ingestion of binary blob metadata during peak times, while also dynamically scaling to save costs during lulls. Businesses that understand these seasonal cycles and leverage adaptable infrastructure outperform competitors by minimizing processing delays or downtimes.

Moreover, considering scalability from the beginning helps avoid costly overhauls or migrations. Proper planning, architecture flexibility, and selecting adaptable frameworks ultimately save substantial technical debt, empowering companies to reinvest resources into innovation, analysis, and strategic initiatives.

Integrating Binary Blob Metadata into Your Data Strategy

Once extracted and cleaned, metadata should contribute directly to your business analytics and data strategy ecosystem. Integrated appropriately, metadata from multimedia pipelines enriches company-wide BI tools, advanced analytics practices, and automated reporting dashboards. Careful integration of metadata aligns with strategic priorities, empowering business decision-makers to tap into deeper insights. Remember that extracting metadata isn’t simply a technical exercise—it’s an essential step to leveraging multimedia as a strategic resource.

Integrating metadata enhances predictive capabilities, targeted marketing initiatives, and user-centered personalization solutions. Particularly in today’s data-driven landscape, the strategic importance of metadata has significantly increased. As you consider expanding your data analytics capability, explore our insights on the growing importance of strategic data analysis to unlock competitive advantages.

Additionally, integrating metadata from binary blobs augments API-driven business services—ranging from advanced recommendation engines to multimedia asset management APIs—further driving innovation and business value. If your team requires support integrating multimedia metadata into quick-turnaround solutions, our article on quick API consulting engagements shares valuable recommendations.

Conclusion: Turning Metadata into Industry-Leading Innovation

Multimedia metadata extraction isn’t merely a nice-to-have feature—it’s a strategic necessity. Empowering pipelines to reliably extract, handle, and integrate metadata from a broad array of binary blobs positions your organization for innovation, clearer analytic processes, and superior marketplace visibility. By thoughtfully embracing automation, error handling, scalability, and integration best practices, you gain a valuable asset that directly informs business intelligence and fosters digital transformation.

Your multimedia strategy becomes more agile and decisive when you view metadata extraction as foundational, not optional. To take your analytics operations and multimedia pipelines to the next level, consider partnering with experts focused on analytics and innovation who can ensure your pipelines are efficient, accurate, and scalable—boosting your position as an industry leader.

Tags: Multimedia Pipelines, Metadata Extraction, Binary Blobs, Automation, Data Analytics, Technical Strategy

by tyler garrett | Jun 12, 2025 | Data Processing

Imagine a bustling city where modern skyscrapers coexist with aging structures, their foundations creaking under the weight of time. Legacy batch systems in your technology stack are much like these outdated buildings—once strong and essential, now becoming restrictive, functional yet increasingly costly. Analogous to the powerful strangler fig in nature—slowly enveloping an aging host to replace it with something far sturdier—modern software engineering has adopted the “Strangler Fig” refactoring pattern. This strategy involves incrementally replacing legacy software systems piece by piece, until a robust, scalable, and future-ready structure emerges without disrupting the foundational operations your business relies on. In this article, we introduce decision-makers to the idea of using the Strangler Fig approach for modernizing old batch systems, unlocking innovation in analytics, automation, and continuous delivery, ultimately sustaining the agility needed to outpace competition.

Understanding Legacy Batch Systems and Their Challenges

Businesses heavily relying on data-intensive operations often find themselves tied to legacy batch systems: old-school applications that process large volumes of data in scheduled, discrete batches. Born from the constraints of previous IT architectures, these applications have historically delivered reliability and consistency. However, today’s agile enterprises find these systems inherently limiting because they introduce latency, impose rigid workflows, and encourage a siloed organizational structure. Consider the typical challenges associated with outdated batch systems: delayed decision-making due to overnight data processing, rigid integration points, difficult scalability, and limited visibility into real-time business performance.

As businesses aim for innovation through real-time analytics and adaptive decision-making, these limitations become expensive problems. The growing burden of maintaining legacy systems can have compounding negative effects, from keeping expert resources tied up maintaining dated applications to hindering the organization’s ability to respond promptly to market demands. Furthermore, adopting modern analytical practices, such as the techniques highlighted in our guide on embedding statistical context in data visualizations, can become impractical under traditional batch architectures. This lack of agility can significantly hamper the organization’s ability to leverage valuable insights quickly and accurately.

What is the Strangler Fig Refactoring Pattern?

Inspired by the way a strangler fig gradually envelops its host tree, the Strangler Fig pattern offers a proven method of modernizing a legacy system incrementally. Rather than taking a risky “big bang” approach that rewrites or migrates the entire system at once, the Strangler Fig strategy identifies small, manageable components that can be replaced one at a time by more flexible, scalable, and sustainable solutions. Work is routed to each new component once it is ready, while everything else continues to run on the legacy system. Each replacement steadily moves data processing toward real-time systems and cloud-native infrastructure with little or no downtime.
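
The routing idea at the heart of the pattern can be sketched as a thin facade that sends each job type either to the legacy batch code or to its replacement. Everything here, including the `StranglerFacade` class and the shape of a job, is a hypothetical illustration rather than a prescribed design.

```python
from typing import Callable, Dict

JobHandler = Callable[[dict], dict]


class StranglerFacade:
    """Route each job to its new implementation once that job type is migrated."""

    def __init__(self, legacy: JobHandler):
        self._legacy = legacy
        self._migrated: Dict[str, JobHandler] = {}

    def migrate(self, job_type: str, handler: JobHandler) -> None:
        """Start sending this job type to the new handler."""
        self._migrated[job_type] = handler

    def rollback(self, job_type: str) -> None:
        """Send this job type back to the legacy system."""
        self._migrated.pop(job_type, None)

    def run(self, job: dict) -> dict:
        # Anything not yet migrated keeps flowing through the legacy path.
        handler = self._migrated.get(job["type"], self._legacy)
        return handler(job)
```

Because callers only ever talk to the facade, each job type can be migrated, or rolled back, without changing any upstream scheduler or downstream consumer.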

This incremental strategy ensures the business can continue utilizing existing investments, manage risks effectively, and gain real-time performance benefits as each piece is upgraded. Furthermore, Strangler Fig refactoring aligns perfectly with modern agile development practices, facilitating iterative enhancement and rapid deployment cycles. Successful implementations can harness adaptive resource management suggested in our exploration of adaptive parallelism in data processing, enhancing scalability and cost efficiency through dynamic resource allocation.

The Strategic Benefits of Strangler Fig Refactoring

Employing the Strangler Fig pattern provides substantial strategic advantages beyond addressing technical debt. First among these is risk management—gradual refactoring significantly reduces operational risks associated with large-scale transformations because it enables testing incremental changes in isolated modules. Companies can ensure that key functionalities aren’t compromised while continuously improving their system, allowing smoother transitions and improving internal confidence among stakeholders.

Additionally, Strangler Fig implementations promote improved analytics and real-time insights, allowing faster, smarter business decisions. Modernizing your legacy solutions incrementally means your organization begins accessing enhanced analytical capabilities sooner, driving more informed decisions across departments. By addressing common issues such as those highlighted in our report on dashboard auditing mistakes, modern refactoring patterns simplify dashboard maintenance and promote analytical rigor, supporting a deeper, more responsive integration between innovation and business strategy.

Ultimately, the Strangler Fig model aligns technical migrations with overarching business strategy—allowing migration efforts to be prioritized according to direct business value. This balanced alignment ensures technology leaders can articulate clear, quantifiable benefits to executives, making the business case for technology modernization both transparent and compelling.

Steps to Implement an Effective Strangler Fig Migration and Modernization Process

1. Identify and isolate modules for gradual replacement

The first critical step involves assessing and enumerating critical components of your batch processing system, evaluating their complexity, interdependencies, and business importance. Select low-risk yet high-impact modules for initial refactoring. Database components, particularly segments reliant on outdated or inefficient data stores, often become prime candidates for modernization—transforming batch-intensive ETL jobs into modern parallelized processes. For example, our insights on improving ETL process performance furnish valuable strategies enabling streamlined transformations during incremental migrations.

2. Establish clear boundaries and communication guidelines

These boundaries allow independent upgrade phases during incremental changeovers. Well-defined APIs and data contracts ensure smooth interoperability, safeguarding the system during ongoing replacement stages. Moreover, clear documentation and automated testing provide actionable metrics and health checks that compare each new component against its legacy counterpart, supporting smooth handovers.
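
One common way to compare a new component against its legacy counterpart is a shadow (parallel) run: both process the same job, the legacy result is still the one returned, and any disagreement is recorded. The sketch below assumes handlers are plain functions; `shadow_run` and the mismatch record format are invented for illustration.

```python
from typing import Callable, List

JobHandler = Callable[[dict], dict]


def shadow_run(
    job: dict,
    legacy: JobHandler,
    candidate: JobHandler,
    mismatches: List[dict],
) -> dict:
    """Return the legacy result while recording how the candidate differs."""
    expected = legacy(job)
    try:
        actual = candidate(job)
    except Exception as exc:
        # A crash in the candidate must never affect production output.
        mismatches.append({"job": job, "expected": expected, "error": repr(exc)})
        return expected
    if actual != expected:
        mismatches.append({"job": job, "expected": expected, "actual": actual})
    return expected
```

An empty mismatch list over a representative period is one possible signal that a module is ready to take over from the legacy path.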

3. Introduce parallel, cloud-native and real-time solutions early in the refactoring process

Replacing batch-oriented processing with adaptive, parallel, real-time architectures early allows for proactive performance optimization, as previously explored in our blog post about dynamic scaling of data resources. This early transition toward cloud-native platforms fosters responsiveness, adaptability, and enhanced scalability.

The Role of Modern Technologies, Analytics, and Machine Learning in Migration Strategies

In adapting legacy batch systems, organizations gain remarkable leverage by utilizing advanced analytics, machine learning, and data visualization approaches. Enhanced real-time analytics directly contributes to smarter, faster decision-making. For instance, advanced visualizations such as ternary plots for compositional data, covered in our explanatory guide, can provide a nuanced understanding of complex analytical contexts affected by legacy system limitations.

Furthermore, embracing machine learning enhances capabilities in fraud detection, forecasting, and anomaly detection, all significantly limited by traditional batch-oriented data models. As illustrated in our article covering how machine learning enhances fraud detection, incorporating analytics and ML-enabled solutions into modernized architectures helps organizations build predictive, proactive strategies, dramatically improving risk mitigation and agility.

Moving Forward: Aligning Your Data and Technology Strategy

Harnessing Strangler Fig refactoring methods positions organizations for sustained strategic advantage. The modernization of your existing systems elevates analytics and data-enabled decision-making from operational overhead to insightful strategic advantages. With commitment and expertise, teams can achieve modern, real-time analytics environments capable of transforming vast data into clearer business intelligence and agile, informed leadership.

To support this transition effectively, consider engaging with external expertise, such as our offerings for specialized MySQL consulting services. Our team has extensive experience modernizing legacy data architectures, facilitating optimized performance, heightened clarity in your analytics, and assured incremental transitions.

Just like the natural evolution from legacy structures into modern scalable systems, intelligently planned incremental refactoring ensures that your data ecosystem’s modernization creates longevity, agility, and scalability—foundational elements driving continued innovation, sustainable growth, and enhanced competitive positioning.

by tyler garrett | Jun 12, 2025 | Data Processing

Stream processing—where data flows continuously and demands instant analysis—is the heartbeat of modern, real-time data ecosystems. As decision-makers in today’s dynamic business landscapes, your organization’s ability to interpret data at the speed it arrives directly impacts competitive advantage. Within this powerful streaming universe, understanding windowing strategies becomes mission-critical. Choosing between tumbling and sliding window techniques can influence everything from customer experience to operational efficiency. This in-depth exploration empowers you with the strategic insights necessary to confidently select the optimal streaming window approach, ensuring seamless and meaningful data analytics at scale.

Understanding Streaming Windows and Their Role in Real-Time Analytics

In today’s digitally interconnected, sensor-rich world, real-time insights gleaned from stream processing shape both operational practices and strategic vision. At its core, stream processing involves analyzing data continuously as it flows, rather than after it is stored. To facilitate effective data analysis, technologies such as Apache Kafka (through Kafka Streams), Apache Flink, and Amazon Kinesis offer powerful methods to define “windows”: discrete time intervals within which data points are organized, aggregated, and analyzed.

These windows allow businesses to slice incoming streaming data into manageable segments to conduct accurate, timely, and meaningful analytics. To derive maximum value, it’s crucial to clearly understand the two most common window types—tumbling and sliding—and the nuanced distinctions between them that affect business outcomes. Tumbling and sliding windows both aggregate data, but their fundamental differences in structure, analysis, and applicability significantly influence their suitability for various business use cases. The strategic foundational concept behind pipeline configuration management with environment-specific settings highlights the role streaming windows play in robust, sustainable data architectures.

Decision-makers keen on achieving real-time intelligence, actionable analytics, and operational responsiveness must precisely grasp the advantages and disadvantages of tumbling versus sliding windows, enabling informed choices that align with their organization’s key objectives and analytical needs.

Diving into Tumbling Windows: Structure, Use Cases, and Benefits

Structure of Tumbling Windows

Tumbling windows are characterized by distinct, non-overlapping time intervals. Each data element belongs to exactly one window, and these windows—often defined by consistent, evenly-spaced intervals—provide a clear and predictable approach to aggregations. For example, imagine stream processing configured to a 10-minute tumbling window; data points are grouped into precise ten-minute increments without any overlap or duplication across windows.
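
A minimal sketch of tumbling-window assignment in plain Python, assuming events arrive as `(timestamp_in_seconds, value)` pairs and that windows are aligned to multiples of the window size. The function name is hypothetical, and real stream processors also deal with late and out-of-order data, which this sketch ignores.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def tumbling_window_sums(
    events: Iterable[Tuple[int, float]], size_s: int
) -> Dict[int, float]:
    """Sum values per non-overlapping window, keyed by window start time."""
    sums: Dict[int, float] = defaultdict(float)
    for ts, value in events:
        # Integer division maps every timestamp to exactly one window.
        window_start = (ts // size_s) * size_s
        sums[window_start] += value
    return dict(sorted(sums.items()))
```

With a 600-second (10-minute) window, events at 0 s and 599 s land in the window starting at 0, while an event at 600 s opens the next window.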

Use Cases Best Suited to Tumbling Windows

The straightforward nature of tumbling windows especially benefits use cases centered around time-bounded metrics such as hourly transaction sums, daily user logins, or minute-by-minute sensor readings. Industries like finance, logistics, manufacturing, and IoT ecosystems often leverage tumbling windows to achieve clarity, transparency, and ease of interpretation.

Tumbling windows also work seamlessly with immutable data structures, such as those found in modern content-addressable storage solutions for immutable data warehousing. They ensure a clear and accurate historical aggregation perfect for tasks like compliance reporting, auditing, SLA monitoring, and batch-oriented analyses of streaming data events.

Benefits of Adopting Tumbling Windows

Tumbling windows provide distinct advantages that streamline data processing. These windows impose clear boundaries, which simplifies analytics, troubleshooting, and alert definitions. Data scientists, analysts, and business intelligence engineers particularly value tumbling windows for their ease of implementation, transparent time boundaries, and reduced complexity in statistical modeling or reporting tasks. Additionally, because each event is processed in exactly one window, organizations embracing tumbling windows may observe lower computational overhead, making them resource-efficient and a natural fit for standardized or batch-oriented analyses.

Analyzing Sliding Windows: Structure, Applicability, and Strategic Advantages

Structure of Sliding Windows

In contrast, sliding windows (also called moving windows) feature overlapping intervals, enabling continuous recalculations with a rolling mechanism. Consider a five-minute sliding window that moves forward every minute: every incoming data point falls into five overlapping windows, fueling constant recalculations and a continuous analytical perspective. Terminology varies by platform; Kafka Streams, for example, calls this fixed-size, fixed-advance form a hopping window.
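
The overlap can be made concrete with a short sketch that lists every window a timestamp belongs to, assuming windows start at multiples of the slide interval and cover the half-open range from start to start plus size. The function names are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, List


def sliding_window_starts(ts: int, size_s: int, slide_s: int) -> List[int]:
    """Return the start times of all windows [start, start + size_s) containing ts."""
    starts = []
    start = (ts // slide_s) * slide_s  # latest window that has already opened
    while start > ts - size_s:
        starts.append(start)
        start -= slide_s
    return starts[::-1]


def sliding_window_counts(
    timestamps: Iterable[int], size_s: int, slide_s: int
) -> Dict[int, int]:
    """Count events per overlapping window, keyed by window start time."""
    counts: Counter = Counter()
    for ts in timestamps:
        counts.update(sliding_window_starts(ts, size_s, slide_s))
    return dict(sorted(counts.items()))
```

For a 300-second window sliding every 60 seconds, each event is counted five times, once per overlapping window. That duplication is the extra cost sliding windows carry compared with tumbling windows.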

Scenarios Where Sliding Windows Excel

The overlapping structure of sliding windows is perfect for scenarios requiring real-time trend monitoring, rolling averages, anomaly detection, or fault prediction. For instance, network security analytics, predictive equipment maintenance, or customer experience monitoring greatly benefit from sliding windows’ real-time granularity and the enriched analysis they offer. Sliding windows allow organizations to rapidly catch emerging trends or immediately respond to changes in stream patterns, providing early warnings and actionable intelligence reliably and promptly.

When integrated with complex analytical capabilities such as custom user-defined functions (UDFs) for specialized data processing or innovations in polyglot visualization libraries creating richer insights, sliding windows significantly increase a business’s agility in understanding dynamic incoming data. The ongoing evaluations conducted through sliding windows empower teams to detect and respond rapidly, facilitating proactive operational tactics and strategic decision-making.

Benefits That Sliding Windows Bring to Decision Makers

The strategic adoption of sliding windows comes with immense competitive leverage—heightened responsiveness and advanced anomaly detection. Sliding windows enable continuous recalibration of metrics within overlapping intervals for exceptional real-time insight levels. This enables rapid intervention capabilities, revealing short-term deviations or emerging trends not easily captured by fixed-period tumbling windows. Organizations choosing a sliding window model remain a step ahead through the ability to observe immediate data shifts and maintain critical visibility into continuous operational performance.

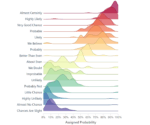

Comparing Tumbling vs Sliding Windows: Key Decision Factors

Both windowing approaches present strengths tailored to different analytical priorities, operational demands, and strategic objectives. To pick your perfect match effectively, consider factors including latency requirements, resource consumption, complexity of implementation, and tolerance to data redundancy.

Tumbling windows offer simplicity, ease of interpretation, clearer boundaries, and minimal operational overhead, while sliding windows present an essential dynamic responsiveness ideal for detecting emerging realities rapidly. Scenario-specific questions—such as “Do we prefer stable reporting over real-time reactivity?” or “Are we more concerned about predictive alerts or retrospective analysis?”—help align strategic priorities with the optimal windowing approach.

Tapping into vital supplementary resources, like understanding logical operators in SQL for optimized queries or ensuring proper methodology in data collection and cleansing strategies, further magnifies the benefits of your chosen streaming window model. Additionally, effective project collaboration reinforced by robust project governance can help eliminate uncertainty surrounding stream processing strategy execution, as emphasized in our guide to effective project management for data teams.

Empowering Real-Time Decisions with Advanced Windowing Strategies

Beyond tumbling and sliding windows, real-time scenarios may sometimes call for session windows (which group events separated by less than a chosen inactivity gap rather than by fixed clock intervals) or hybrid strategies that combine several window types. Advanced scenarios like migrating real-time Facebook ad interactions to BigQuery, akin to our client scenario detailed in this guide on how to send Facebook data to Google BigQuery using Node.js, illustrate the expansive possibilities achievable through creative stream processing.
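
Session windows are usually defined by a gap of inactivity between events rather than by the clock. The sketch below groups timestamps for a single key (for example, one user) into sessions; the function name and the convention that a gap strictly larger than `gap_s` starts a new session are assumptions made for this example.

```python
from typing import Iterable, List, Tuple


def session_windows(timestamps: Iterable[int], gap_s: int) -> List[Tuple[int, int, int]]:
    """Group timestamps into sessions; return (first_ts, last_ts, event_count)."""
    sessions: List[List[int]] = []
    for ts in sorted(timestamps):
        if sessions and ts - sessions[-1][-1] <= gap_s:
            # Still within the inactivity gap: extend the current session.
            sessions[-1].append(ts)
        else:
            # Gap exceeded (or first event): open a new session.
            sessions.append([ts])
    return [(s[0], s[-1], len(s)) for s in sessions]
```

Unlike tumbling or sliding windows, the number and length of session windows depend entirely on the data, which suits activity such as ad clicks or browsing sessions.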

Strategically leveraging expertise from professional service providers can consequently turn technical window selections into strategic organizational decisions. At Dev3lop, our AWS consulting services leverage proven architectural frameworks to pinpoint optimal data windowing strategies, deployment approaches, and platform integrations customized for your unique objectives and enterprise ecosystem.

Empowered by thoughtful strategic insight, technical precision, and collaborative implementation practices, your organization can ensure streaming analytics functions synchronously with broader data ecosystems—securing long-lasting competitive advantage in a data-driven marketplace.

by tyler garrett | Jun 12, 2025 | Data Processing

Imagine guiding an orchestra through a complex symphony—each musician performing their part seamlessly, harmonized into a coherent masterpiece. The Saga Pattern brings this orchestration to software architectures, enabling organizations to confidently manage long-running transactions distributed across multiple services. In today’s dynamic digital landscape, orchestrating such transactions reliably is mission-critical, particularly for businesses heavily reliant on microservices, cloud platforms, and efficient data handling strategies. From financial services to streaming entertainment, effective coordination of distributed systems separates industry leaders from followers. Let’s explore the Saga Pattern, a tactical approach enabling your enterprise to execute, monitor, and gracefully roll back complex transactions across rapidly evolving technical frameworks.

Understanding the Saga Pattern: Distributed Transactions Simplified

The Saga Pattern is an architectural approach designed to manage distributed system transactions where traditional transaction methods, like the ACID guarantees provided by relational databases, become impractical or inefficient. Distributed microservice architectures, prevalent in modern enterprise IT, often struggle with long-running, multi-step processes that span multiple systems or services. Here, classic transaction management often falters, leading to increased complexity, data inconsistencies, and higher operational overhead.

The Saga Pattern is explicitly crafted to solve these issues. Rather than managing a large monolithic transaction across multiple services, it breaks the transaction into a sequence of smaller, easier-to-manage local transactions. Each step has an associated compensating action that undoes its effect if a later step fails or the transaction is cancelled. By structuring distributed transactions this way, businesses can ensure robust operation and maintain workflow consistency despite failures at any point within the transaction sequence.
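
The mechanics of compensation can be sketched in a few lines, assuming every step is a pair of plain functions (an action and its compensating action) that share a context dictionary. The `run_saga` function and `SagaFailed` exception are hypothetical names, and a real implementation would also persist saga state and retry compensations that fail.

```python
from typing import Callable, List, Tuple

Action = Callable[[dict], None]
Step = Tuple[str, Action, Action]  # (name, action, compensating action)


class SagaFailed(Exception):
    """Raised after a failed step has triggered compensation."""


def run_saga(steps: List[Step], context: dict) -> None:
    """Run steps in order; on failure, compensate completed steps in reverse."""
    completed: List[Tuple[str, Action]] = []
    for name, action, compensate in steps:
        try:
            action(context)
        except Exception as exc:
            # Undo only what actually completed, most recent step first.
            for _done_name, undo in reversed(completed):
                undo(context)
            raise SagaFailed(f"step {name!r} failed: {exc}") from exc
        completed.append((name, compensate))
```

Note that compensation is a new forward action (for example, issuing a refund), not a database rollback, so other services may briefly observe the intermediate state.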

Implementing the Saga Pattern significantly enhances operational resilience and flexibility, making it a go-to solution for software architects dealing with complex data workflows. For example, in architectures that use PostgreSQL databases and consulting services, developers can integrate robust transactional capabilities with enhanced flexibility, providing critical support for innovative data-driven solutions.

Types of Saga Patterns: Choreography Versus Orchestration

There are two main types of Saga Patterns: choreography-based and orchestration-based sagas. While both approaches aim toward streamlined transaction execution, they differ significantly in structure and implementation. Understanding these differences enables informed architectural decisions and the selection of the ideal pattern for specific business use cases.

Choreography-based Sagas

In choreography-based sagas, each service participates autonomously by completing its operation, publishing an event, and allowing downstream systems to decide the next steps independently. Think of choreography as jazz musicians improvising: a coherent performance born from autonomy and spontaneity. This structure promotes low coupling and ensures services remain highly independent. However, event management grows more complex as the number of participating services increases, making system-wide visibility and debugging harder.
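
A toy, in-memory sketch of choreography: services know nothing about one another and react only to events on a shared bus. The `EventBus` class, the event names, and the three order-handling services are all invented for illustration; a production system would use a durable message broker instead of synchronous function calls.

```python
from collections import defaultdict
from typing import Callable, Dict, List


class EventBus:
    """Minimal synchronous publish/subscribe bus for demonstration."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Callable[[dict], None]]] = defaultdict(list)
        self.log: List[str] = []  # ordered record of published event types

    def subscribe(self, event_type: str, handler: Callable[[dict], None]) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> None:
        self.log.append(event_type)
        for handler in list(self._handlers[event_type]):
            handler(payload)


def wire_services(bus: EventBus, card_ok: bool = True) -> None:
    """Register three independent services that react only to events."""

    def reserve_stock(order: dict) -> None:
        bus.publish("StockReserved", order)

    def charge_card(order: dict) -> None:
        bus.publish("PaymentCompleted" if card_ok else "PaymentFailed", order)

    def release_stock(order: dict) -> None:
        # Compensating action, triggered by the failure event.
        bus.publish("StockReleased", order)

    bus.subscribe("OrderPlaced", reserve_stock)
    bus.subscribe("StockReserved", charge_card)
    bus.subscribe("PaymentFailed", release_stock)
```

No component holds the full workflow here: the sequence exists only as the chain of subscriptions, which is why tracing a failure in a choreographed saga usually depends on a shared event log.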

Orchestration-based Sagas

Orchestration-based sagas, on the other hand, introduce a centralized logic manager, often referred to as a “Saga orchestrator.” This orchestrator directs each service, explicitly communicating the next step in the transaction flow and handling compensation logic during rollbacks. This centralized approach provides greater clarity over state management and transaction sequences, which simplifies debugging, improves visibility into complex transaction execution, and supports system-wide error handling. For businesses building complex data enrichment pipelines, orchestration-based sagas often represent a more tactical choice due to enhanced control and transparency.

Advantages of Implementing the Saga Pattern

Adopting the Saga Pattern provides immense strategic benefits to technology leaders intent on refining their architectures to ensure higher reliability and maintainability:

Improved Scalability and Flexibility

The Saga Pattern naturally supports horizontal scaling and resilience in distributed environments. Because each microservice commits its own local transaction rather than holding locks across services, services can scale independently, letting organizations react swiftly to fluctuating workloads while reducing operational overhead and increasing business agility.

Error Handling and Data Consistency

By defining a compensating action for each step, businesses preserve consistency even in complex, multi-service transactions. If a step fails at any point in the sequence, the saga runs the compensations for the steps that already completed, semantically undoing their effects. This reduces the risk of erroneous business states and avoids persistent data inconsistencies.

Enhanced Observability and Troubleshooting

Orchestrated Sagas empower architects and engineers with invaluable real-time metrics and visibility of transactional workflows. Troubleshooting becomes significantly easier because transaction logic paths and responsibility reside within organized orchestrations, enabling faster detection and resolution of anomalies.
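One way to picture this visibility is a saga log that the orchestrator appends to at every step transition. The sketch below is an assumption about how such a log could look; the `SagaLog` class, its method names, and the status values are hypothetical and not taken from any particular framework.

```python
from dataclasses import dataclass, field


@dataclass
class SagaLog:
    """Hypothetical append-only record of step transitions per saga."""

    entries: list = field(default_factory=list)

    def record(self, saga_id, step, status):
        self.entries.append({"saga_id": saga_id, "step": step, "status": status})

    def last_status(self, saga_id):
        # Most recent entry for this saga, or None if it was never seen.
        for entry in reversed(self.entries):
            if entry["saga_id"] == saga_id:
                return entry
        return None

    def failed_sagas(self):
        # Saga ids with at least one failed step, in first-failure order.
        seen = []
        for entry in self.entries:
            if entry["status"] == "failed" and entry["saga_id"] not in seen:
                seen.append(entry["saga_id"])
        return seen


log = SagaLog()
log.record("order-1", "reserve_stock", "completed")
log.record("order-2", "reserve_stock", "completed")
log.record("order-1", "charge_card", "failed")
log.record("order-1", "reserve_stock", "compensated")

print(log.failed_sagas())
# ['order-1']
print(log.last_status("order-1")["status"])
# compensated
```

Because every transition passes through one component, a question like “which sagas failed, and where did they end up?” becomes a simple query instead of a cross-service investigation.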

Consider data visualization principles such as removing chart junk and maximizing the data-ink ratio: stripping away what is unnecessary makes the message clearer. Similarly, eliminating unnecessary complexity in transaction management with Saga Patterns brings better clarity, observability, and efficiency to data transactions.

Real-World Use Cases: Leveraging Saga Patterns for Industry Success

Within finance, retail, entertainment, logistics, and even the music industry, organizations successfully employ Saga Patterns to manage transactions at scale. For instance, Saga Patterns become critical in supply chain management, where multi-step transactions must integrate with advanced predictive analytics, as discussed in our insights on mastering demand forecasting processes and enhanced supply chain management using predictive analytics.

Similarly, forward-thinking entertainment businesses, such as Austin’s vibrant music venues, use advanced analytics to engage audiences strategically. Organizations orchestrate ticket purchasing, customer analytics, marketing initiatives, and loyalty programs through distributed systems coordinated with methods like the Saga Pattern. Discover how organizations enhance their approach in “How Austin’s Music Scene leverages data analytics.”

Whether for fintech businesses managing complex transactions across financial portfolios or for e-commerce platforms executing multi-step order fulfillment pipelines, adopting Saga Patterns gives decision-makers the right architecture to consistently deliver robust, timely transactions at scale.

Successfully Adopting Saga Patterns in Your Organization

Embarking on a Saga Pattern architecture requires careful orchestration. Beyond technical understanding, organizations must embrace a culture of innovation and continuous improvement, expressed through effective DevOps and automation practices. Tools built around visibility, metrics gathering, decentralized monitoring, and event-driven capabilities become critical to successful Saga Pattern adoption.

Moreover, having experienced technology partners helps significantly. Organizations can streamline deployments, optimize efficiency, and build expertise through focused engagements with technical partners customized to enterprise demands. Technology leaders intending to transition toward Saga Pattern architectures should regularly evaluate their IT ecosystem and invest strategically in consulting partnerships, specifically firms specialized in data analytics and software architectures—like Dev3lop.

This holistic approach ensures your technical implementations align with your organization’s growth strategy and operational model, ultimately driving success through enhanced reliability, scalability, and transactional confidence.

Conclusion: Empowering Complex Transactions with Orchestrated Excellence

In an ever more connected digital enterprise landscape, where business-critical data transactions traverse multiple systems and interfaces, embracing Saga Patterns becomes a powerful strategic advantage. Whether choreographed or orchestrated, this pattern empowers organizations to execute and manage sophisticated distributed transactions confidently and efficiently, overcoming traditional transactional pitfalls and positioning themselves advantageously in a competitive digital economy.

Dev3lop LLC is committed to empowering enterprises by providing clear, personalized pathways toward innovative solutions, as exemplified by our newly redeveloped website release announcement article: “Launch of our revised website, offering comprehensive Business Intelligence services.”

By understanding, adapting, and thoughtfully implementing the powerfully flexible Saga Pattern, your organization can orchestrate long-running transactions with ease—delivering greater reliability, competitive agility, and deeper data-driven insights across the enterprise.

Tags: Saga Pattern, Distributed Transactions, Microservices Architecture, Data Orchestration, Transaction Management, Software Architecture

by tyler garrett | Jun 12, 2025 | Data Processing

JSON has become the lingua franca of data interchange on the web. Lightweight and flexible, JSON is undeniably powerful. Yet this very flexibility often encases applications in a schema validation nightmare—what software engineers sometimes call “JSON Hell.” Semi-structured data, with its loosely defined schemas and constantly evolving formats, forces teams to reconsider their validation strategy. At our consulting firm, we understand the strategic implications of managing such complexities. We empower our clients not just to navigate but excel in challenging environments where data-driven innovation is key. Today, we share our insights into schema definition and validation techniques that turn these JSON payloads from daunting challenges into sustainable growth opportunities.

The Nature of Semi-Structured Data Payloads: Flexible Yet Chaotic

In software engineering and data analytics, semi-structured data captures both opportunities and headaches. Unlike data stored strictly in relational databases, semi-structured payloads such as JSON allow for great flexibility, accommodating diverse application requirements and rapid feature iteration. Teams often embrace JSON payloads precisely because they allow agile software development, supporting multiple technologies and platforms. However, the very same flexibility that drives innovation can also create substantial complexity in validating and managing data schemas. Without robust schema validation methods, teams risk facing rapidly multiplying technical debt and unexpected data inconsistencies.

For organizations involved in data analytics or delivering reliable data-driven services, uncontrolled schema chaos can lead to serious downstream penalties. Analytics and reporting accuracy depends largely on high-quality and well-defined data. Any neglected irregularities or stray fields propagated in JSON payloads multiply confusion in analytics, forcing unnecessary debugging and remediation. Ensuring clean, meaningful, and consistent semi-structured data representation becomes critical not only to application stability but also to meaningful insights derived from your datasets.

Furthermore, as discussed in our previous post detailing The Role of Data Analytics in Improving the Delivery of Public Services in Austin, maintaining consistent and reliable datasets is pivotal when informing decision-making and resource allocation. Understanding the implications of semi-structured data architectures is a strategic necessity—transforming JSON chaos into well-oiled and controlled schema validation strategies secures your business outcome.

Schema Design: Establishing Clarity in Loose Structures

Transforming JSON payloads from problems into strategic assets starts with clearly defined schema specifications. While JSON doesn’t inherently enforce schemas the way traditional SQL tables do (a topic we cover extensively in our article titled CREATE TABLE: Defining a New Table Structure in SQL), modern development teams increasingly use schema validation to impose the necessary structural constraints.

The primary goal of schema validation is ensuring data correctness and consistency throughout data ingestion, processing, and analytics pipelines. A JSON schema describes exactly what a JSON payload should include: it specifies accepted fields, data types, formats, allowed values, and constraints. Using JSON Schema, a popular vocabulary for schema representation, enables precise validation of incoming API requests, sensor data, or streaming event payloads, immediately filtering out malformed or inconsistent messages.
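As an illustration of what such a schema looks like, the sketch below defines a hypothetical sensor payload using a handful of real JSON Schema keywords (`type`, `required`, `properties`, `enum`, `minimum`, `maximum`). The `validate` function is a deliberately tiny, hand-written checker covering only those keywords so the example runs on the standard library alone; in practice you would hand the same schema to a full validator.

```python
# Hypothetical schema for a sensor reading, written with JSON Schema keywords.
SENSOR_SCHEMA = {
    "type": "object",
    "required": ["device_id", "temperature_c", "status"],
    "properties": {
        "device_id": {"type": "string"},
        "temperature_c": {"type": "number", "minimum": -50, "maximum": 150},
        "status": {"type": "string", "enum": ["ok", "degraded", "offline"]},
    },
}

_PY_TYPES = {"object": dict, "string": str, "number": (int, float), "boolean": bool}


def validate(instance, schema, path="$"):
    """Return a list of error strings; an empty list means the payload is valid.

    Supports only the small keyword subset used in SENSOR_SCHEMA.
    """
    errors = []
    expected = schema.get("type")
    if expected is not None:
        matches = isinstance(instance, _PY_TYPES[expected])
        # In Python, bool is a subclass of int, so exclude it from "number".
        if expected == "number" and isinstance(instance, bool):
            matches = False
        if not matches:
            return [f"{path}: expected {expected}, got {type(instance).__name__}"]
    if "enum" in schema and instance not in schema["enum"]:
        errors.append(f"{path}: {instance!r} is not one of {schema['enum']}")
    is_number = isinstance(instance, (int, float)) and not isinstance(instance, bool)
    if is_number and "minimum" in schema and instance < schema["minimum"]:
        errors.append(f"{path}: {instance} is less than minimum {schema['minimum']}")
    if is_number and "maximum" in schema and instance > schema["maximum"]:
        errors.append(f"{path}: {instance} is greater than maximum {schema['maximum']}")
    if isinstance(instance, dict):
        for name in schema.get("required", []):
            if name not in instance:
                errors.append(f"{path}.{name}: required field is missing")
        for name, subschema in schema.get("properties", {}).items():
            if name in instance:
                errors.extend(validate(instance[name], subschema, f"{path}.{name}"))
    return errors


good = {"device_id": "a1", "temperature_c": 21.5, "status": "ok"}
bad = {"device_id": 7, "temperature_c": 900, "status": "rebooting"}

print(validate(good, SENSOR_SCHEMA))
# []
for message in validate(bad, SENSOR_SCHEMA):
    print(message)
```

Each error carries the path of the offending field, which is what lets a pipeline reject a message and still explain why.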

A strong schema validation strategy provides clarity and reduces cognitive burdens on developers and data analysts, creating a shared language that explicitly defines incoming data’s shape and intent. Furthermore, clearly defined schemas improve technical collaboration across stakeholder teams, making documentation and understanding far easier. Schema specification aligns teams and reduces ambiguity in systems integration and analysis. For development teams leveraging hexagonal design patterns, precise schema interfaces are similarly crucial. Our prior article on the benefits of Hexagonal Architecture for Data Platforms: Ports and Adapters emphasizes clearly defining schemas around data ingress for robust and flexible architectures—reducing coupling, promoting testability, and improving maintainability.

Validation Techniques and Tools for JSON Payloads

Leveraging schema definitions without suitable validation tooling is a recipe for frustration. Fortunately, modern JSON schema validation tooling is mature and widely available, significantly simplifying developer work and ensuring data consistency throughout the lifecycle.

A number of powerful validation tools exist for semi-structured JSON data. The JSON Schema specification sets a clear and comprehensive standard for describing payloads, and validators implement it. Popular JSON Schema validators like AJV (Another JSON Schema Validator), Json.NET Schema, and JSV offer robust, performant validation that integrates easily into existing CI/CD pipelines and runtime environments. Schema validators not only catch malformed payloads but also provide actionable feedback and error details, accelerating debugging and improving overall system resilience.

Validation should also be integrated thoughtfully with production infrastructure and automation. Just as resource-aware design enhances fairness in shared processing frameworks (see our previously discussed guidelines on Multi-Tenant Resource Allocation in Shared Processing Environments), schema validation protects the reliability of data ingestion pipelines. API gateways or middleware can perform schema checks at the edge, rejecting invalid inputs before they reach downstream components such as data warehouses, analytics layers, and reporting tools, thus preserving system health and preventing data corruption.
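A minimal sketch of such an ingestion gate follows. The `check_event` rules and the field names `event_id` and `amount` are hypothetical; any validator that returns a list of problems could be plugged in through the `check` parameter.

```python
import json


def check_event(payload):
    """Hypothetical schema check: returns a list of problems (empty if valid)."""
    if not isinstance(payload, dict):
        return ["payload must be a JSON object"]
    problems = []
    if not isinstance(payload.get("event_id"), str):
        problems.append("event_id must be a string")
    amount = payload.get("amount")
    if isinstance(amount, bool) or not isinstance(amount, (int, float)):
        problems.append("amount must be a number")
    return problems


def ingest(raw_messages, check=check_event):
    """Split raw JSON strings into accepted payloads and rejected records."""
    accepted, rejected = [], []
    for raw in raw_messages:
        try:
            payload = json.loads(raw)
        except json.JSONDecodeError as exc:
            rejected.append({"raw": raw, "errors": [f"invalid JSON: {exc.msg}"]})
            continue
        problems = check(payload)
        if problems:
            rejected.append({"raw": raw, "errors": problems})
        else:
            accepted.append(payload)
    return accepted, rejected


messages = [
    '{"event_id": "e1", "amount": 10}',
    '{"event_id": 2, "amount": 10}',
    "{not json",
]
accepted, rejected = ingest(messages)
print(len(accepted), len(rejected))
# 1 2
print(rejected[0]["errors"])
# ['event_id must be a string']
```

This sketch keeps rejected messages alongside their errors rather than silently dropping them, so that bad inputs never reach downstream systems but can still be inspected or reported back to the sender.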

User Experience and Error Communication: Bridging Technology and Understanding

An often-overlooked aspect of schema validation implementation revolves around the clear and actionable communication of validation errors to end users and developers alike. Schema errors aren’t merely technical implementation details—they affect user experience profoundly. By clearly conveying validation errors, developers empower users and partners to remediate data problems proactively, reducing frustration and enhancing system adoption.

Design the validation mechanism so that error messages explicitly state the expected schema requirements and precisely identify the problematic fields. For payloads intended for analytical visualization, such as those explored in our blog post on Interactive Legends Enhancing User Control in Visualizations, validation clarity translates directly into more responsive interactive experiences. Users and analysts relying on data-driven insights can trust the platform, confidently diagnosing and adjusting payloads without guesswork.
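One possible shape for such error output is sketched below. The response layout, the helper names, and the `legend.position` field are assumptions made for illustration; the idea is simply that each entry names the field, the requirement, and the value actually received.

```python
def describe_error(field_path, expected, received):
    """Build one error entry naming the field, the requirement, and the actual value."""
    return {
        "field": field_path,
        "expected": expected,
        "received": repr(received),
        "message": f"Field '{field_path}' must be {expected}; received {received!r}.",
    }


def build_error_response(errors):
    """Wrap individual errors in a response body a client can act on."""
    return {
        "status": "rejected",
        "error_count": len(errors),
        # Sort by field so the same bad payload always yields the same report.
        "errors": sorted(errors, key=lambda e: e["field"]),
    }


response = build_error_response([
    describe_error("legend.position", "one of ['top', 'bottom']", "middle"),
    describe_error("chart.width", "a positive integer", -20),
])

for entry in response["errors"]:
    print(entry["message"])
# Field 'chart.width' must be a positive integer; received -20.
# Field 'legend.position' must be one of ['top', 'bottom']; received 'middle'.
```

Returning every problem at once, rather than stopping at the first, lets the sender fix a payload in a single round trip.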

Good UX design combined with clear schema validation conveys meaningful insights instantly, guiding corrective action without excessive technical support overhead. Importantly, clarity in error communication also supports adoption and trustworthiness throughout the entire stakeholder ecosystem, from internal developers to external partners, streamlining troubleshooting processes and fostering successful integration into enterprise or public service delivery contexts.

Ethical Considerations: Schemas as Safeguards in Data Privacy and Bias Prevention

Finally, schema validation goes beyond merely technical correctness—it also provides essential ethical safeguards in increasingly sophisticated data analytics systems. Stringent schema validation can enforce data privacy by explicitly defining acceptable data collection scopes, specifically preventing unauthorized or accidental inclusion of sensitive fields in payload structures. This validation enforcement plays a fundamental role in privacy-conscious analytics, an important consideration explored extensively in our article on Ethical Considerations of Data Analytics: Issues of Privacy, Bias, and the Responsible Use of Data.
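In JSON Schema terms, this kind of scope enforcement is usually expressed by listing the permitted properties and setting `additionalProperties` to `false`. The standalone sketch below shows the same idea with plain sets; the field names in `ALLOWED_FIELDS` and `SENSITIVE_FIELDS` are hypothetical examples, not a recommended list.

```python
ALLOWED_FIELDS = {"user_id", "page", "duration_ms"}  # hypothetical collection scope
SENSITIVE_FIELDS = {"ssn", "email", "date_of_birth"}  # hypothetical deny list


def check_collection_scope(payload):
    """Return (ok, unexpected, sensitive) for a flat JSON object.

    ok is True only when every key is inside the allowed collection scope.
    """
    keys = set(payload)
    unexpected = sorted(keys - ALLOWED_FIELDS)
    sensitive = sorted(keys & SENSITIVE_FIELDS)
    return (not unexpected, unexpected, sensitive)


print(check_collection_scope({"user_id": "u1", "page": "/home"}))
# (True, [], [])
print(check_collection_scope({"user_id": "u1", "email": "a@example.com", "debug": 1}))
# (False, ['debug', 'email'], ['email'])
```

Separating “unexpected” from “sensitive” lets a pipeline reject both while flagging the second kind for privacy review.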

Well-defined schema validation also helps organizations proactively avoid unintentional data biases and inaccuracies. By enforcing precise constraints on acceptable values and inputs, schema validation significantly reduces exposure to subtle bias being introduced into datasets. Filtering at ingestion allows data scientists and analysts to confidently interpret and leverage data insights without risking downstream effects from unintended systemic biases or manipulated data.

Maintaining upfront schema validation practices thus becomes not only an architectural best practice but also an ethical responsibility. As professional technology strategists, we continually advocate deeply embedding schema validation frameworks into your systems design—ensuring not only data quality and operational stability but also responsible data stewardship and compliance.

Conclusion: Escaping JSON Hell Through Strategic Schema Validation

Semi-structured JSON payloads offer significant flexibility but can quickly turn chaotic without solid schema validation strategies. By investing in robust tools, thoughtfully designed schemas, clear error communication strategies, and ethically cognizant policies, your organization can transform schema validation from an afterthought into a strategic asset. At Dev3lop, we combine technical strategy and innovation with solid practical implementation experience, supporting enterprise-level data analytics, data architecture, and data-driven decision-making. Engage with our expertise, from consulting on complex JSON schema definitions to building advanced analytics infrastructures supported by offerings like our MySQL Consulting Services, and let’s avoid JSON Hell together.