by tyler garrett | Jul 22, 2025 | Real-Time Streaming Systems

In the era of relentless digital acceleration, decision-makers are under mounting pressure to leverage every data point—instantly. The competitive landscape demands more than just machine learning; it requires the ability to extract, transform, and act upon raw data in real time. At Dev3lop, we help organizations transcend static batch processes, unlocking new frontiers with advanced analytics and consulting solutions that empower teams with rapid online ML scoring. This article dives deep into the art and science of real-time feature extraction—and why it is the bridge between data and decisive, profitable action.

The Strategic Imperative for Real-Time Feature Extraction

Feature extraction sits at the core of any data-driven initiative, selectively surfacing signals from the noise for downstream machine learning models. Traditionally, this process has operated offline—delaying insight and sometimes even corrupting outcomes with outdated or ‘zombie’ data. In high-velocity domains—such as financial trading, fraud detection, and digital marketing—this simply doesn’t cut it. Decision-makers must architect environments that promote feature extraction on the fly, ensuring the freshest, most relevant data drives each prediction.

Real-time feature engineering reshapes enterprise agility. For example, complex cross-system identification, such as Legal Entity Identifier integration, enhances model scoring accuracy by keeping entity relationships current at all times. When new data points are combined with data streaming and in-memory processing technologies, the window between data generation and business insight narrows dramatically. This isn’t just about faster decisions; it’s about smarter, context-rich decision making that competitors can’t match.

Architecting Data Pipelines for Online ML Scoring

The journey from data ingestion to online scoring hinges on sophisticated pipeline engineering. This entails more than just raw performance; it requires orchestration of event sourcing, real-time transformation, and stateful aggregation, all while maintaining resilience and data privacy. Drawing on lessons from event sourcing architectures, organizations can reconstruct feature state from an immutable log of changes, promoting both accuracy and traceability.
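To make the event-sourcing idea concrete, here is a minimal sketch of rebuilding feature state by replaying an immutable log. The `Event` fields and the feature names (`txn_count`, `total_amount`, `last_seen`) are illustrative assumptions, not part of any particular platform.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class Event:
    """One immutable entry in the change log (field names are hypothetical)."""
    user_id: str
    amount: float
    timestamp: float  # seconds since epoch


def replay_features(log: Iterable[Event]) -> Dict[str, Dict[str, float]]:
    """Rebuild per-user features by folding over the log from the beginning."""
    features: Dict[str, Dict[str, float]] = {}
    for event in log:
        state = features.setdefault(
            event.user_id,
            {"txn_count": 0.0, "total_amount": 0.0, "last_seen": 0.0},
        )
        state["txn_count"] += 1
        state["total_amount"] += event.amount
        state["last_seen"] = max(state["last_seen"], event.timestamp)
    return features


log = [
    Event("u1", 20.0, 100.0),
    Event("u2", 5.0, 101.0),
    Event("u1", 30.0, 105.0),
]
features = replay_features(log)
```

Because the log is never mutated, replaying it again yields the same feature state, which is what makes the approach traceable.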

To thrive, pipeline design must anticipate recursive structures and data hierarchies, which are notorious hazards in hierarchical workloads. Teams must address challenges like join performance, late-arriving data, and schema evolution, often building proof-of-concept solutions collaboratively in real time, as explained in greater depth in our approach to real-time client workshops. By combining robust engineering with continuous feedback, organizations can iterate rapidly and keep their online ML engines running at peak efficiency.

Visualizing and Interacting With Streaming Features

Data without visibility is seldom actionable. As pipelines churn and ML models score, operational teams need intuitive ways to observe and debug features in real time. Effective unit visualization, such as visualizing individual data points at scale, unearths patterns and anomalies long before dashboards catch up. Advanced, touch-friendly interfaces—see our work in multi-touch interaction design for tablet visualizations—let stakeholders explore live features, trace state changes, and drill into the events that shaped a model’s current understanding.

These capabilities aren’t just customer-facing gloss; they’re critical tools for real-time troubleshooting, quality assurance, and executive oversight. By integrating privacy-first approaches, rooted in the principles described in data privacy best practices, teams can democratize data insight while protecting sensitive information—meeting rigorous regulatory requirements and bolstering end-user trust.

Conclusion: Turning Real-Time Features Into Business Value

In today’s fast-paced, data-driven landscape, the capacity to extract, visualize, and operationalize features in real time is more than an engineering feat; it’s a competitive necessity. Executives and technologists who champion real-time feature extraction enable their organizations not only to keep pace with shifting markets, but to outpace them, transforming raw streams into insights, and insights into action. At Dev3lop, we marshal a full spectrum of modern capabilities, from cutting-edge visualization to robust privacy practices and advanced machine learning deployment. To explore how our Tableau consulting services can accelerate your data initiatives, connect with us today. The future belongs to those who act just as fast as their data moves.

Thank you for your support, follow DEV3LOPCOM, LLC on LinkedIn and YouTube.

by tyler garrett | Jul 18, 2025 | Real-Time Streaming Systems

In the fast-evolving landscape of data-driven decision-making, tracking time-based metrics reliably is both an art and a science. As seasoned consultants at Dev3lop, we recognize how organizations today—across industries—need to extract actionable insights from streaming or frequently updated datasets. Enter sliding and tumbling window metric computation: two time-series techniques that, when mastered, can catalyze both real-time analytics and predictive modeling. But what makes these methods more than just data engineering buzzwords? In this guided exploration, we’ll decode their value, show why you need them, and help you distinguish best-fit scenarios—empowering leaders to steer data strategies with confidence. For organizations designing state-of-the-art analytics pipelines or experimenting with AI consultant-guided metric intelligence, understanding these windowing techniques is a must.

The Rationale Behind Time Window Metrics

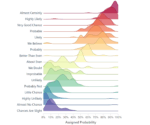

Storing all state and recalculating every metric—a natural reflex in data analysis—is untenable at scale. Instead, “windowing” breaks continuous streams into manageable, insightful segments. Why choose sliding or tumbling windows over simple aggregates? The answer lies in modern data engineering challenges—continuous influxes of data, business needs for near-instant feedback, and pressures to reduce infrastructure costs. Tumbling windows create fixed, non-overlapping intervals (think: hourly sales totals); sliding windows compute metrics over intervals that move forward in time as new data arrives, yielding smooth, up-to-date trends.

Applying these methods allows for everything from real-time fraud detection (webhooks and alerts) to nuanced user engagement analyses. Sliding windows are ideal for teams seeking to spot abrupt behavioral changes, while tumbling windows suit scheduled reporting needs. Used judiciously, they become the backbone of streaming analytics architectures—a must for decision-makers seeking both agility and accuracy in their metric computation pipelines.

Architectural Approaches: Sliding vs Tumbling Windows

What truly distinguishes sliding from tumbling windows is their handling of time intervals and data overlap. Tumbling windows are like batches: they partition time into consecutive, fixed-duration blocks (e.g., “every 10 minutes”). Each event lands in one, and only one, window, which makes aggregates like counts and sums straightforward. Sliding windows, meanwhile, advance in increments smaller than the window length, so consecutive windows overlap and each data point may count in multiple windows. This approach delivers granular, real-time trend analysis at the cost of additional computation and storage.
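The difference can be sketched in a few lines of Python. This is an illustration under simple assumptions (non-negative integer timestamps, windows of the form [start, start + size), sliding-window starts aligned to multiples of the slide), not how any specific engine implements windowing; the function names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Optional


def tumbling_window_start(ts: int, size: int) -> int:
    """Each timestamp belongs to exactly one window [start, start + size)."""
    return ts - (ts % size)


def sliding_window_starts(ts: int, size: int, slide: int) -> List[int]:
    """Starts of every window [start, start + size) that contains ts."""
    starts = []
    start = ts - (ts % slide)  # latest aligned window start at or before ts
    while start > ts - size:
        starts.append(start)
        start -= slide
    return sorted(starts)


def count_per_window(
    timestamps: Iterable[int], size: int, slide: Optional[int] = None
) -> Dict[int, int]:
    """Count events per window start; tumbling when slide is None."""
    counts: Dict[int, int] = defaultdict(int)
    for ts in timestamps:
        if slide is None:
            counts[tumbling_window_start(ts, size)] += 1
        else:
            for start in sliding_window_starts(ts, size, slide):
                counts[start] += 1
    return dict(counts)


events = [1, 4, 12, 25]
tumbling = count_per_window(events, size=10)
sliding = count_per_window(events, size=10, slide=5)
```

With a window of 10 and a slide of 5, every event is counted in two windows, which is the extra computation and storage the sliding approach pays for.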

Selecting between these models depends on operational priorities. Tumbling windows may serve scheduled reporting or static dashboards, while sliding windows empower live anomaly detection. At Dev3lop, we frequently architect systems where both coexist, using AI agents or automation to route data into the proper computational streams. For effective windowing, understanding your end-user’s needs and visualization expectations is essential. Such design thinking ensures data is both actionable and digestible—whether it’s an operations manager watching for outages or a data scientist building a predictive model.

Real-World Implementation: Opportunities and Pitfalls

Implementing sliding and tumbling windows in modern architectures (Spark, Flink, classic SQL, or cloud-native services) isn’t without its pitfalls: improper window sizing can obscure valuable signals or flood teams with irrelevant noise. Handling time zones, out-of-order events, and malformed data streams are real-world headaches, as complex as any Unicode or multi-language processing task. Strategic window selection, combined with rigorous testing, delivers trustworthy outputs for business intelligence.
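One common way to cope with out-of-order events is to keep windows open for a grace period and set aside anything that arrives after that. The sketch below assumes a simple watermark (largest event time seen minus an allowed lateness); the class name and its attributes are hypothetical, and production engines offer richer versions of this idea.

```python
from typing import Dict, List, Optional


class TumblingCounter:
    """Counts events per tumbling window, tolerating bounded out-of-order data."""

    def __init__(self, size: int, allowed_lateness: int) -> None:
        self.size = size
        self.allowed_lateness = allowed_lateness
        self.max_event_time: Optional[int] = None
        self.open_windows: Dict[int, int] = {}
        self.closed_windows: Dict[int, int] = {}
        self.late_events: List[int] = []

    def add(self, ts: int) -> None:
        if self.max_event_time is None or ts > self.max_event_time:
            self.max_event_time = ts
        # Watermark: we assume no event older than this will be accepted.
        watermark = self.max_event_time - self.allowed_lateness
        start = ts - (ts % self.size)
        if start + self.size <= watermark:
            # The window for this event has already been finalized.
            self.late_events.append(ts)
        else:
            self.open_windows[start] = self.open_windows.get(start, 0) + 1
        # Finalize every open window that ends at or before the watermark.
        for open_start in sorted(self.open_windows):
            if open_start + self.size <= watermark:
                self.closed_windows[open_start] = self.open_windows.pop(open_start)


counter = TumblingCounter(size=10, allowed_lateness=5)
for event_time in [1, 12, 3, 27, 8]:
    counter.add(event_time)
```

In this run the event at time 3 arrives out of order but is still counted, while the event at time 8 arrives after its window was finalized and is set aside for separate handling.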

Instant feedback loops (think: transaction monitoring, notification systems, or fraud triggers) require tight integration between streaming computation and pipeline status—often relying on real-time alerts and notification systems to flag anomalies. Meanwhile, when updating historic records or maintaining slowly changing dimensions, careful orchestration of table updates and modification logic is needed to ensure data consistency. Sliding and tumbling windows act as the “pulse,” providing up-to-the-moment context for every digital decision made.

Making the Most of Windowing: Data Strategy and Innovation

Beyond foundational metric computation, windowing unlocks powerful data innovations. Sliding windows, in tandem with transductive transfer learning models, can help operationalize machine learning workflows where label scarcity is a concern.

Ultimately, success hinges on aligning your architecture with your business outcomes. Window size calibration, integration with alerting infrastructure, and the selection of stream vs batch processing all affect downstream insight velocity and accuracy. At Dev3lop, our teams are privileged to partner with organizations seeking to future-proof their data strategy—whether it’s building robust streaming ETL or enabling AI-driven agents to operate on real-time signals. To explore how advanced windowing fits within your AI and analytics roadmap, see our AI agent consulting services or reach out for a strategic architectural review.


by tyler garrett | Jul 18, 2025 | Real-Time Streaming Systems

In today’s data-fueled world, the shelf life of information is shrinking rapidly. Decisions that once took weeks now happen in minutes or even seconds. That’s why distinguishing between “Hot Path” and “Cold Path” data architecture patterns is more than a technical detail: it’s a business imperative. At Dev3lop, we help enterprises not just consume data, but transform it into innovation pipelines. Whether you’re streaming millions of social media impressions or fine-tuning machine learning models for predictive insights, understanding these two approaches unlocks agility and competitive advantage. Let’s dissect the architecture strategies that determine whether your business acts in the moment or gets left behind.

What is the Hot Path? Fast Data for Real-Time Impact

The Hot Path is all about immediacy: turning raw events into actionable intelligence in milliseconds. When you need real-time dashboards, AI-driven recommendations, or fraud alerts, this is the architecture pattern at play. Designed for ultra-low latency, a classic Hot Path will leverage event streaming platforms and stream processing services (think Apache Kafka, Apache Flink, or Azure Stream Analytics) to analyze, filter, and enrich data as it lands. Yet Hot Path systems aren’t just for tech giants; organizations adopting them for media analytics see results like accelerated content curation and audience insights. Explore this pattern in action by reviewing our guide on streaming media analytics and visualization patterns, a powerful demonstration of how Hot Path drives rapid value creation.

Implementing Hot Path solutions requires careful planning: you need robust data modeling, scalable infrastructure, and expert tuning, often involving SQL Server consulting services to optimize database performance during live ingestion. But the results are profound: more agile decision-making, higher operational efficiency, and the ability to capture transient opportunities as they arise. Hot Path architecture brings the digital pulse of your organization to life—the sooner data is available, the faster you can respond.

What is the Cold Path? Deep Insight through Batch Processing

The Cold Path, by contrast, operates at the heart of analytics maturity—where big data is aggregated, historized, and digested at scale. This pattern processes large volumes of data over hours or days, yielding deep insight and predictive power that transcend moment-to-moment decisions. Batch ETL jobs, data lakes, and cloud-based warehousing systems such as Azure Data Lake or Amazon Redshift typically power the Cold Path. Here, the focus shifts to data completeness, cost efficiency, and rich model-building rather than immediacy. Review how clients use Cold Path pipelines on their way from gut feelings to predictive models—unlocking strategic foresight over extended time horizons.

The Cold Path excels at integrating broad datasets—think user journeys, market trends, and seasonal sales histories—to drive advanced analytics initiatives. Mapping your organization’s business capabilities to data asset registries ensures that the right information is always available to the right teams for informed, long-term planning. Cold Path doesn’t compete with Hot Path—it complements it, providing the context and intelligence necessary for operational agility and innovation.

Choosing a Unified Architecture: The Lambda Pattern and Beyond

Where does the real power lie? In an integrated approach. Modern enterprises increasingly adopt hybrid, or “Lambda,” architectures, which blend Hot and Cold Paths to deliver both operational intelligence and strategic depth. In a Lambda system, raw event data is processed twice: immediately by the Hot Path for real-time triggers, and later by the Cold Path for high-fidelity, full-spectrum analytics. This design lets organizations harness the best of both worlds—instantaneous reactions to critical signals, balanced by rigorous offline insight. Visualization becomes paramount when integrating perspectives, as illustrated in our exploration of multi-scale visualization for cross-resolution analysis.
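As a rough illustration of the "processed twice" idea, the sketch below computes a batch view over events before a cutoff, a speed view over events since the cutoff, and merges the two at query time. The event tuples, the cutoff value, and the function names are assumptions made for the example.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple

PageEvent = Tuple[str, int]  # (page, event time in seconds)


def build_view(events: Iterable[PageEvent]) -> Dict[str, int]:
    """Count events per page; used for both the batch and the speed view."""
    return dict(Counter(page for page, _ in events))


def merge_views(batch_view: Dict[str, int], speed_view: Dict[str, int]) -> Dict[str, int]:
    """Serving-side merge: batch totals plus increments since the batch cutoff."""
    merged = dict(batch_view)
    for page, delta in speed_view.items():
        merged[page] = merged.get(page, 0) + delta
    return merged


events = [("page_a", 10), ("page_b", 20), ("page_a", 30), ("page_a", 45)]
batch_cutoff = 40  # the Cold Path has processed everything before this time

batch_view = build_view(e for e in events if e[1] < batch_cutoff)
speed_view = build_view(e for e in events if e[1] >= batch_cutoff)
merged = merge_views(batch_view, speed_view)
```

When the next batch run completes, the cutoff moves forward and the speed view for the covered period can be discarded.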

Data lineage and security are additional cornerstones of any robust enterprise architecture. Securing data in motion and at rest is essential, and advanced payload tokenization techniques for secure data processing can help safeguard sensitive workflows, particularly in real-time environments. As organizations deploy more AI-driven sentiment analysis and create dynamic customer sentiment heat maps, these models benefit from both fresh Hot Path signals and the comprehensive context of the Cold Path—a fusion that accelerates innovation while meeting rigorous governance standards.

Strategic Enablers: Integrations and Future-Proofing

The future of real-time architecture is convergent, composable, and connected. Modern business needs seamless integration not just across cloud platforms, but also with external services and social networks. For example, getting value from Instagram data might require advanced ETL pipelines—learn how with this practical guide: sending Instagram data to Google BigQuery using Node.js. Whatever your use case—be it live analytics, machine learning, or advanced reporting—having architectural agility is key. Partnering with a consultancy that can design, optimize, and maintain synchronized Hot and Cold Path solutions will future-proof your data strategy as technologies and business priorities evolve.

Real-time patterns are more than technical options; they are levers for business transformation. From instant content recommendations to strategic AI investments, the ability to balance Hot and Cold Path architectures defines tomorrow’s market leaders. Ready to architect your future? Explore our SQL Server consulting services or reach out for a custom solution tailored to your unique data journey.


by tyler garrett | Jul 17, 2025 | Real-Time Streaming Systems

As organizations strive to harness real-time data for competitive advantage, stateful stream processing has become a cornerstone for analytics, automation, and intelligent decision-making. At Dev3lop LLC, we empower clients to turn live events into actionable insights—whether that’s personalizing user experiences, detecting anomalies in IoT feeds, or optimizing supply chains with real-time metrics. Yet, scaling stateful stream processing is far from trivial. It requires a strategic blend of platform knowledge, architectural foresight, and deep understanding of both data velocity and volume. In this article, we’ll demystify the core concepts, challenges, and approaches necessary for success, building a bridge from technical nuance to executive priorities.

Understanding Stateful Stream Processing

Stateful stream processing refers to handling data streams where the outcome of computation depends on previously seen events. Unlike stateless processing, where every event is independent, stateful systems track contextual information, enabling operations like counting, sessionization, aggregates, and joins across event windows. This is crucial for applications ranging from fraud detection to user session analytics. Modern frameworks and services such as Apache Flink, Apache Beam, and Google Cloud Dataflow enable enterprise-grade stream analytics, but decision-makers must be aware of the underlying complexities, especially regarding event time semantics, windowing, consistency guarantees, and managing failure states for critical business processes.
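Sessionization is a compact example of why state matters: whether an event starts a new session depends on when the same user was last seen. The sketch below assumes events arrive sorted by time and uses an inactivity gap to split sessions; the function name and the gap value are illustrative.

```python
from typing import Dict, Iterable, List, Tuple


def sessionize(events: Iterable[Tuple[str, int]], gap: int) -> Dict[str, List[List[int]]]:
    """Group (user_id, timestamp) events into per-user [start, end] sessions.

    Assumes events are ordered by timestamp. A new session starts when the
    time since the user's previous event exceeds `gap`.
    """
    sessions: Dict[str, List[List[int]]] = {}
    for user_id, ts in events:
        user_sessions = sessions.setdefault(user_id, [])
        if user_sessions and ts - user_sessions[-1][1] <= gap:
            user_sessions[-1][1] = ts  # extend the current session
        else:
            user_sessions.append([ts, ts])  # open a new session
    return sessions


events = [("u1", 0), ("u1", 10), ("u2", 12), ("u2", 20), ("u1", 100)]
sessions = sessionize(events, gap=30)
```

The `sessions` dictionary is the state here; in a distributed engine it would be stored per key and checkpointed so that it survives failures.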

If you’re exploring the nuances between tumbling, sliding, and other windowing techniques, or seeking comprehensive insights on big data technology fundamentals, understanding these foundational blocks is vital. At scale, even small design decisions in these areas can have outsized impacts on system throughput, latency, and operational maintainability. This is where trusted partners—like our expert team—help architect solutions aligned to your business outcomes.

Architecting for Scale: Key Patterns and Trade-Offs

Scaling stateful stream processing isn’t just about adding more servers—it’s about making smart architectural choices. Partitioning, sharding, and key distribution are fundamental to distributing stateful workloads while ensuring data integrity and performance. Yet, adapting these patterns to your business context demands expertise. Do you use a global state, localized state per partition, or a hybrid? How do you handle backpressure, out-of-order data, late arrivals, or exactly-once guarantees?
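Key-based partitioning can be sketched as follows: a stable hash sends every event for a given key to the same partition, so each partition can keep purely local state. This is a simplified illustration; the partition count and function names are assumptions, and real platforms add rebalancing and fault tolerance on top.

```python
import hashlib
from collections import Counter
from typing import Iterable, List


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition using a hash that is stable across processes."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions


def count_by_partition(keys: Iterable[str], num_partitions: int) -> List[Counter]:
    """Keep one local counter per partition; a key only ever touches one of them."""
    state: List[Counter] = [Counter() for _ in range(num_partitions)]
    for key in keys:
        state[partition_for(key, num_partitions)][key] += 1
    return state


keys = ["user-1", "user-2", "user-1", "user-3", "user-1"]
state = count_by_partition(keys, num_partitions=4)
```

A cryptographic hash is used here only because Python's built-in `hash()` for strings varies between processes; a skewed key distribution would still produce hot partitions regardless of the hash chosen.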

In practice, sophisticated pipelines may involve stream-table join implementation patterns or incorporate slowly changing dimensions as in modern SCD handling. Integrating these with cloud platforms amplifies the need for scalable, resilient, and compliant designs—areas where GCP Consulting Services can streamline your transformation. Critically, your team needs to weigh operational trade-offs: processing guarantees vs. performance, simplicity vs. flexibility, and managed vs. self-managed solutions. The right blend fuels sustainable innovation and long-term ROI.

Integrating Business Value and Data Governance

Powerful technology is only as valuable as the outcomes it enables. State management in stream processing creates new opportunities for business capability mapping and regulatory alignment. By organizing data assets smartly, with a robust data asset mapping registry, organizations unlock reusable building blocks and enhance collaboration across product lines and compliance teams. Furthermore, the surge in real-time analytics brings a sharp focus on data privacy—highlighting the importance of privacy-preserving record linkage techniques for sensitive or regulated scenarios.

From enriching social media streams for business insight to driving advanced analytics in verticals like museum visitor analytics, your stream solutions can be fine-tuned to maximize value. Leverage consistent versioning policies with semantic versioning for data schemas and APIs, and ensure your streaming data engineering slots seamlessly into your broader ecosystem—whether driving classic BI or powering cutting-edge AI applications. Let Dev3lop be your guide from ETL pipelines to continuous, real-time intelligence.

Conclusion: Orchestrating Real-Time Data for Innovation

Stateful stream processing is not simply an engineering trend but a strategic lever for organizations determined to lead in the data-driven future. From real-time supply chain optimization to personalized customer journeys, the ability to act on data in motion is rapidly becoming a competitive imperative. To succeed at scale, blend deep technical excellence with business acumen—choose partners who design for reliability, regulatory agility, and future-proof innovation. At Dev3lop LLC, we’re committed to helping you architect, implement, and evolve stateful stream processing solutions that propel your mission forward—securely, efficiently, and at scale.


by tyler garrett | Jun 30, 2025 | Data Visual

Imagine visualizing the invisible, tracing the paths of transactions that ripple across global networks instantly and securely. Blockchain transaction visualization unlocks this capability, transforming abstract data flows into clear, navigable visual stories. Decision-makers today face an urgent need to understand blockchain activity to capture value, enhance regulatory compliance, and make strategic decisions confidently. By effectively mapping distributed ledger transactions, businesses can gain unprecedented transparency into their operations and enjoy richer analytic insights—turning cryptic ledger entries into vibrant opportunities for innovation.

Understanding Blockchain Transactions: Insights Beyond the Ledger

Blockchain technology, known for its decentralized and tamper-resistant properties, carries tremendous potential for transparency. Each blockchain transaction is a cryptographically secure event stored permanently across multiple distributed nodes, building an immutable ledger. Yet the inherent complexity of transactions and the vast scale of ledger data present substantial challenges when extracting meaningful insights rapidly. Here, visualization emerges as an essential approach to simplify and clarify blockchain insights for strategic understanding.

By leveraging effective visualization techniques, stakeholders can examine intricate transaction relationships, pinpoint high-value exchanges, and uncover patterns indicative of market behaviors or fraudulent activities. When decision-makers can follow the flow of resources through intuitive visual interfaces, their strategic decisions rest on evidence they can inspect directly. Using analytical tools and methodologies specifically designed for blockchain, businesses can quickly transform distributed ledger complexity into actionable intelligence, thus generating concrete business value from distributed insights.

At Dev3lop, our expertise in Node.js consulting services helps integrate robust visualization systems seamlessly into cutting-edge blockchain analytics workflows, enhancing the speed and precision of strategic decision-making across your organization.

Choosing the Right Visualization Techniques for Blockchain Data

Not every visualization approach works effectively for blockchain data. Optimal visualization demands understanding the specific nature and purpose of the data you’re analyzing. Transaction maps, heat maps, Sankey diagrams, network graphs, and even contour plot visuals—like the ones we’ve explained in our blog on contour plotting techniques for continuous variable domains—offer tremendous analytical power. Network graphs illustrate complex relationships among addresses, wallets, and smart contracts, allowing analysts to recognize influential nodes and assess transactional risk accurately.

Sankey diagrams, in particular, can visualize resource movements across crypto platforms clearly, allowing stakeholders to instantly grasp inflows and outflows at multiple addresses or distinguish between factors influencing wallet activities. Heat maps enable stakeholders to detect areas of high blockchain usage frequency, easily identifying geographic or temporal transaction trends. Creating the right visualization structure demands strategic thought: are stakeholders most interested in confirming transaction authenticity, systematic fraud detection, monitoring compliance adherence, or understanding market dynamics?
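Before a Sankey diagram or network graph can be drawn, raw transactions usually need to be aggregated into weighted links. The sketch below shows one way to do that; the (sender, receiver, amount) tuple layout and the `source`/`target`/`value` field names are assumptions chosen to resemble what many charting libraries expect.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple, Union

Transaction = Tuple[str, str, float]  # (sender, receiver, amount)


def aggregate_flows(transactions: Iterable[Transaction]) -> List[Dict[str, Union[str, float]]]:
    """Sum transferred amounts per (sender, receiver) pair into link records."""
    totals: Dict[Tuple[str, str], float] = defaultdict(float)
    for sender, receiver, amount in transactions:
        totals[(sender, receiver)] += amount
    return [
        {"source": sender, "target": receiver, "value": value}
        for (sender, receiver), value in sorted(totals.items())
    ]


transactions = [("A", "B", 1.5), ("A", "B", 0.5), ("B", "C", 1.0)]
links = aggregate_flows(transactions)
```

The same link list can feed a network graph, where `value` becomes the edge weight.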

The strategic alignment with visualization type and analytics goal becomes pivotal. For organizations managing constrained data resources, our blog post about prioritizing analytics projects with limited budgets provides valuable strategic guidance to ensure investments align powerfully with organizational outcomes.

Enhancing Fraud Detection and Security through Visual Analytics

Security and fraud prevention rank as top priorities for blockchain users, particularly for enterprises integrating distributed ledger technology into critical business processes. Transaction visualization significantly strengthens the effectiveness of security measures. Identifying suspicious transactions quickly and easily through visual analysis reduces organizational risk and saves resources otherwise dedicated to manual investigative processes. Patterns and outliers revealed via visualization highlight transactions with unusual transfer volumes or repeated activity from suspicious sources clearly.
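As one simple way to surface "unusual transfer volumes" for highlighting in a chart, the sketch below flags amounts that sit far from the median, measured in units of the median absolute deviation (MAD). The threshold of 5 is an arbitrary assumption for illustration, and real fraud detection would combine many more signals.

```python
from statistics import median
from typing import List, Sequence


def flag_unusual_amounts(amounts: Sequence[float], threshold: float = 5.0) -> List[float]:
    """Return amounts whose distance from the median exceeds threshold * MAD."""
    if not amounts:
        return []
    center = median(amounts)
    mad = median(abs(a - center) for a in amounts)
    if mad == 0:
        # All typical values are identical; anything different stands out.
        return [a for a in amounts if a != center]
    return [a for a in amounts if abs(a - center) > threshold * mad]


amounts = [10.0, 12.0, 11.0, 9.0, 10.0, 500.0]
flagged = flag_unusual_amounts(amounts)
```

A median-based measure is used here because a single very large transfer would distort a mean and standard deviation computed over the same data.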

Furthermore, visual analytics tools powered by Node.js solutions can be implemented for tracking blockchain events in real-time, supported by platforms well-suited for processing large data streams. Adopting effective processing window strategies for streaming analytics, as described in our published insights, positions analytics teams to detect fraudulent irregularities rapidly in live transactional datasets.

Visualization also aids regulatory compliance by enabling comprehensive chain-of-custody insights into funds traveling across dispersed networks. Enterprises can track compliance adherence visually and share transparent reports instantly, dramatically improving trust and accountability across complex digital ecosystems.

Advanced Visualization Strategies: Real-Time Blockchain Monitoring

Real-time blockchain monitoring represents the future of strategic blockchain visualization within analytics frameworks. Decision-makers require immediate accuracy and clarity when evaluating distributed ledger activities, and advanced visualization methods make this possible. Real-time dashboards built on efficient query techniques, such as the approach we detailed in efficient filtering of multiple values using the SQL IN operator, equip analysts with live transaction feeds represented visually. Instantaneous visualization helps businesses react quickly to dynamic market shifts or regulatory requirements.
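For instance, a dashboard that follows a watchlist of addresses might filter transactions with a parameterized IN clause. The sketch below uses Python's built-in `sqlite3` module with an in-memory database; the table name, column names, and sample rows are hypothetical.

```python
import sqlite3
from typing import List, Sequence


def transactions_for_addresses(conn: sqlite3.Connection, addresses: Sequence[str]) -> List[str]:
    """Return transaction ids whose address appears in the watchlist."""
    if not addresses:
        return []
    # One "?" placeholder per value keeps the query parameterized.
    placeholders = ", ".join("?" for _ in addresses)
    query = (
        "SELECT tx_id FROM transactions "
        f"WHERE address IN ({placeholders}) ORDER BY tx_id"
    )
    return [row[0] for row in conn.execute(query, list(addresses))]


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE transactions (tx_id TEXT, address TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO transactions VALUES (?, ?, ?)",
    [("t1", "addr_a", 1.0), ("t2", "addr_b", 2.0), ("t3", "addr_c", 3.0), ("t4", "addr_a", 4.0)],
)
matches = transactions_for_addresses(conn, ["addr_a", "addr_c"])
```

Only the placeholder string is interpolated into the SQL text; the address values themselves are passed as parameters.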

Enabling real-time monitoring demands powerful, reliable infrastructure and streamlined data movement: as we’ve previously demonstrated by helping businesses send Sage API data to Google BigQuery, robust integration services provide stable platforms for scalable blockchain analytics. Engineers adept at big data analytics and cloud environments, outlined further in our article on hiring engineers focused on improving your data environment, bolster your analytics strategy by constructing streamlined analytics pipelines that instantly bring blockchain insights from decentralized nodes to decision-maker dashboards.

Navigating Global Complexity: Visualization at Scale

Blockchain systems span multiple global locations, which complicates distributed operations, transaction timing, and location-specific analytics. Decision-makers managing cross-border blockchain applications encounter issues in comparing and analyzing transaction timestamps consistently, a challenge we covered extensively in our post about handling time zones in global data processing.

Effective blockchain visualization reconciles these global complexities by offering intuitive visual representations, synchronizing time zones dynamically, and presenting coherent perspectives no matter how wide-ranging or globally dispersed the data may be. Platforms capable of intelligently aggregating data from geographically decentralized blockchain nodes enhance reliability, speed, and clarity, thereby minimizing confusion across global teams.
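A typical first step toward consistent timestamps is to normalize everything to UTC before comparing or plotting. The sketch below assumes timestamps arrive as ISO 8601 strings that carry an explicit UTC offset; the function name and sample values are illustrative.

```python
from datetime import datetime, timezone
from typing import List


def to_utc(iso_timestamp: str) -> datetime:
    """Parse an ISO 8601 timestamp with an explicit offset and convert it to UTC."""
    parsed = datetime.fromisoformat(iso_timestamp)
    if parsed.tzinfo is None:
        # A naive timestamp is ambiguous, so refuse to guess its zone.
        raise ValueError("timestamp must include a UTC offset")
    return parsed.astimezone(timezone.utc)


def sort_by_utc(iso_timestamps: List[str]) -> List[str]:
    """Order timestamps from different zones by the instant they represent."""
    return sorted(iso_timestamps, key=to_utc)


chicago = to_utc("2025-06-30T09:00:00-05:00")
berlin = to_utc("2025-06-30T16:00:00+02:00")
ordered = sort_by_utc(["2025-06-30T10:00:00+02:00", "2025-06-30T02:00:00-05:00"])
```

The two sample timestamps look seven hours apart on the wall clock but refer to the same instant once converted.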

Seamless integration between visual analytics and global blockchain systems ensures businesses stay competitive in international arenas, confidently interpret cross-border ledger activities, and leverage blockchain data effectively in their strategic decision-making processes.

Leveraging Blockchain Visualization for Competitive Advantage

With sophisticated blockchain transaction visualization in place, organizations achieve unprecedented strategic clarity and operational insight—unlocking significant competitive advantages across marketplaces. Visualizing your distributed ledger data enhances forecasting accuracy, identifies customer segments clearly, and reveals new business opportunities. We’ve detailed similar strategies previously in our article illustrating how market basket analysis helps identify complementary products.

Visualization also serves as a powerful communication tool internally. When blockchain data is translated into visually comprehensible insights, even non-technical executives can quickly grasp previously obscure ledger trends. This boosts organizational agility, expedites data-driven responses, and helps organizations position themselves for market leadership.

Strategic decision-making fueled by clear blockchain data visualizations drives lasting innovation, operational efficiency, and robust competitive performance. Leaders who embrace blockchain transaction visualization commit their organization to greater transparency, sustained innovation, and stronger growth potential in an increasingly blockchain-centric economy.

From strategy definition through visualization execution at Dev3lop, our expertise bridges the gap between industry-defining analytics insight and blockchain’s transformative power, ensuring your organization leads confidently through a digitally decentralized future.
