by tyler garrett | Jun 11, 2025 | Data Visual

In the era of digitization, data has become the lifeblood of corporations aiming to innovate, optimize processes, and strategically enhance decision-making. Corporate communication teams depend heavily on visualizations—charts, graphs, and dashboards—to simplify complexity and present narratives in compelling ways. Yet, with the persuasive power of visual storytelling comes an imperative ethical responsibility to ensure accurate, transparent depiction of data. Unfortunately, misleading charts can distort interpretations, significantly affecting stakeholders’ decisions and trust. Understanding visualization ethics and pitfalls becomes crucial not merely from a moral standpoint but also as an essential strategic practice to sustain credibility and drive informed business decisions.

The Impact of Misleading Data Visualizations

Corporate reports, presentations, and dashboards serve as fundamental sources of actionable insights. Organizations utilize these tools not only internally, aiding leaders and departments in identifying opportunities, but also externally to communicate relevant information to investors, regulators, and customers. However, visualization missteps—intentional or unintentional—can drastically mislead stakeholders. A single improperly scaled chart or an ambiguous visual representation can result in costly confusion or misinformed strategic decisions.

Moreover, in an era of heightened transparency and social media scrutiny, misleading visualizations can severely damage corporate reputation. Misinterpretations may arise from basic design errors, improper label placements, exaggerated scales, or selective omission of data points. Therefore, corporations must proactively build internal standards and guidelines to reduce the risk of visual miscommunication and ethical pitfalls within reports. Adopting robust data quality testing frameworks helps ensure that underlying data feeding visualizations remains credible, accurate, and standardized.

Ensuring ethical and truthful visual representations involves more than just good intentions. Companies need experts who understand how visual design interacts with cognitive perceptions. Engaging professional advisors through data visualization consulting services provides a way to establish industry best practices and reinforce responsible visualization cultures within teams.

The Common Pitfalls in Corporate Chart Design (and How to Avoid Them)

Incorrect Use of Scale and Axis Manipulation

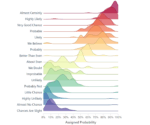

Axis and scale choices are a common yet subtle way visualizations become misleading, whether by intent or by accident. When charts exaggerate a minor difference by truncating the axis or failing to begin at a logical zero baseline, they distort reality and magnify trivial variations.

To counter this, visualization creators within corporations should standardize axis scaling policies, using full-scale axis contexts wherever practical to portray true proportionality. Transparency in axis labeling, clear legends, and scale standardization protect stakeholders from incorrect assumptions. Enterprise teams can utilize data element standardization across multiple domains, which helps establish uniform consistency in how data components are applied and visually presented.
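
One way to make the distortion from a truncated axis concrete is Edward Tufte’s "lie factor": the size of the effect shown in the graphic divided by the size of the effect in the data. The sketch below assumes a simple two-bar chart drawn from the axis minimum upward; the function name and the sample figures are illustrative and not part of any charting library.

```python
def lie_factor(first: float, second: float, axis_min: float = 0.0) -> float:
    """Ratio of the visual change between two bars to the actual change in the data.

    Bars are assumed to be drawn from `axis_min` up to their value, so a
    truncated axis shrinks both bars and inflates the visible difference.
    """
    if first == 0 or first == second:
        raise ValueError("need a non-zero first value and a non-zero change")
    if axis_min >= min(first, second):
        raise ValueError("axis_min must sit below both values")
    data_effect = (second - first) / first
    first_height = first - axis_min
    second_height = second - axis_min
    visual_effect = (second_height - first_height) / first_height
    return visual_effect / data_effect


# Hypothetical figures: a metric moves from 96 to 100 (roughly a 4% change).
honest = lie_factor(96, 100, axis_min=0)      # zero baseline
truncated = lie_factor(96, 100, axis_min=90)  # axis starts at 90
print(f"zero baseline: {honest:.1f}, truncated at 90: {truncated:.1f}")
```

A value near 1 means the drawing is proportional to the data. In this made-up case the axis starting at 90 makes the change look sixteen times larger than it is, which is the kind of threshold an internal axis policy could flag.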

Cherry-picking Data Points and Omitting Context

Organizations naturally strive to highlight favorable stories from their data, yet selectively omitting unfavorable data points or outliers misrepresents actual conditions. Context removal compromises integrity and disguises genuine challenges.

Transparency should always take precedence. Including explanatory footnotes, offering interactive visual tools for stakeholder exploration, and clearly communicating underlying assumptions help stakeholders understand both the positive spins and inherent limitations of data representations.

Investing in systems to manage data transparency, such as pipeline registry implementations, ensures decision-makers fully comprehend metadata and environmental context associated with presented data, keeping visualization integrity intact.

Misuse of Visual Encoding and Graphical Elements

Visual encoding errors happen often: color schemes may unintentionally manipulate emotional interpretation, or specialized visualization types such as 3D charts may distort the perception of relative sizes. Fancy visuals are appealing, but without conscientious use they can inadvertently mislead viewers.

Consistent visual encoding and simplified clarity should inform design strategies. Using known visualization best practices, comprehensive stakeholder training, and industry-standard visualization principles promote visualization reliability. Additionally, market-facing charts should minimize overly complex graphical treatments unless necessary. Readers must quickly grasp the intended message without misinterpretation risks. Partnering with visualization experts provides design guidance aligned with ethical visualization practices, aligning innovation ambitions and ethical transparency effectively.

Establishing Ethical Data Visualization Practices within Your Organization

Businesses focused on innovation cannot leave visualization ethics merely to individual discretion. Instead, organizations must embed visual data ethics directly into corporate reporting governance frameworks and processes. Training programs centered on ethical data-stage management and responsible visualization design patterns systematically reinforce desired behaviors within your analytics teams.

Adopting internal guidelines with clear standards aligned to industry best practices, and putting in place the technology infrastructure needed to apply those standards to real-time data, is imperative. Even established analytics methods such as market basket analysis depend on clear visual guidelines to be interpreted correctly.

When handling more complex data types—for example, streaming data at scale—having predefined ethical visualization rules provides consistent guardrails that aid users’ understanding and uphold integrity. This not only bolsters corporate credibility but also builds a strong, trustworthy brand narrative.

Technical Tools and Processes for Visualization Integrity

Establishing ethical data visualization requires effective integration of technical solutions coupled with vigorous, reliable processes. Technology-savvy corporations should integrate automated validation protocols and algorithms that swiftly flag charts that deviate from predefined ethical standards or typical patterns.

Data professionals should systematically leverage data quality software solutions to apply automated accuracy checks pre-publications. Tools capable of intelligently identifying designs violating standards can proactively reduce the potential for misinformation. Moreover, integrating easily verifiable metadata management approaches ensures visualizations are cross-referenced transparently with underlying data flows.
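
As a rough illustration of what an automated pre-publication check might look like, the sketch below lints a simplified chart description against a handful of rules. Everything here is hypothetical: the `ChartSpec` fields, the `lint_chart` function, and the rules themselves stand in for whatever standards an organization actually adopts.

```python
from dataclasses import dataclass


@dataclass
class ChartSpec:
    """Hypothetical, simplified description of a chart awaiting publication."""
    title: str
    chart_type: str          # e.g. "bar", "line", "pie"
    y_axis_min: float
    has_axis_labels: bool
    is_3d: bool = False
    source_note: str = ""


def lint_chart(spec: ChartSpec) -> list[str]:
    """Return warnings for rules this sketch treats as house standards."""
    warnings = []
    if spec.chart_type == "bar" and spec.y_axis_min != 0:
        warnings.append("bar chart does not start at a zero baseline")
    if not spec.has_axis_labels:
        warnings.append("axes are unlabeled")
    if spec.is_3d:
        warnings.append("3D rendering can distort relative sizes")
    if not spec.source_note:
        warnings.append("missing data source or context note")
    return warnings


draft = ChartSpec("Q2 revenue", "bar", y_axis_min=90.0, has_axis_labels=False, is_3d=True)
for warning in lint_chart(draft):
    print(f"{draft.title}: {warning}")
```

A check like this could run in a review step before a report is published; it catches only mechanical rule violations, so it complements rather than replaces human review.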

Organizational reliance on consistent infrastructure practices—such as clearly documented procedures for Update Orchestrator Services and other IT processes—ensures both the reliability of data aggregation strategies behind visualizations and upfront system transparency, further supporting visualization ethical compliance. Smart technology utilization paired with clear procedural frameworks generates seamless business reporting designed for transparency, accuracy, and ethical practices.

Continuous Improvement and Corporate Ethical Commitment

Visualization ethical standards aren’t static checkpoints; they are ongoing concerns that require continuous effort, alignment, and evolution. Companies should regularly audit their data reporting and visualization practices, evolving standards based on stakeholder feedback, emerging market norms, and newly adopted technologies. Digital visualization innovation continuously evolves to support improved accuracy, fidelity, and clear communication; maintaining ethical standards therefore requires proactive adaptability.

Adherence to visualization ethics creates long-term brand value, trust, and clarity. Leaders must remain diligent, reinforcing responsibility in visualization design principles and ethics. Organizations displaying consistent ethical commitment are viewed positively by both markets and industry leaders, fortifying long-term competitiveness. To sustainably embed visualization ethics within corporate culture, commit to ongoing structured education, regular assessments, and continual improvements. Partnering with skilled consulting organizations specializing in data visualization helps organizations mitigate risk exposure and reinforces the trust that underpins corporate innovation strategy.

Conclusion: Charting a Clear Ethical Path Forward

Data visualizations wield remarkable power to inform and influence, translating complex insights into meaningful decisions. Yet such persuasive influence must remain ethically bounded by truthfulness and accuracy. Misleading visualization designs may provide short-term, superficial gains by obscuring unfavorable realities, but such practices pose greater reputational and operational risks in the long term.

The integrity of how companies represent visualized data significantly influences overall corporate perception and success. Only through conscious dedication, clear procedures, technology investments, and fostering organizational cultures dedicated to transparency can companies ensure design decisions consistently reflect truthful, accountable information.

By recognizing corporate responsibility in visualization ethics and committing resources toward sustained improvements, organizations secure long-term trust, protect brand integrity, and innovate responsibly. Stakeholders deserve clear, truthful visualizations across every corporate communication to make the best collective decisions driving your business forward.

For more strategic direction in ethical data visualizations and solutions optimized for innovation and accuracy, contact an experienced visualization consultant; consider exploring comprehensive data visualization consulting services for expert guidance on refining your corporate reporting practices.


by tyler garrett | Jun 9, 2025 | Data Processing

Imagine your analytics system as a tightly choreographed dance performance. Every performer (data event) needs to enter the stage precisely on cue. But real-world data seldom obeys our neatly timed schedules. Late-arriving data, events that report well beyond their expected window, can cause significant bottlenecks, inaccuracies, and frustration – complicating decisions and potentially derailing initiatives reliant on precise insights. In an ever-evolving digital age, with businesses leaning heavily on real-time, predictive analytics for critical decision-making, your capability to effectively handle late-arriving events becomes pivotal. How can your team mitigate this issue? How can your company reliably harness value from temporal data despite delays? As experts who help clients navigate complex data challenges—from advanced analytics to sophisticated predictive modeling—our aim is to maintain clarity amidst the chaos. Let’s dive into proven strategic methods, useful tools, and best practices for processing temporal data effectively, even when events show up fashionably late.

Understanding the Impact of Late-Arriving Events on Analytics

Late-arriving events are a common phenomenon in data-driven businesses. These events occur when data points or metrics, intended to be part of a chronological timeline, are received much later than their expected time or deadline. The delay may stem from many causes: connectivity latency, slow sensor communication, third-party API delays, or batch processes designed to run at predetermined intervals. Whatever the origin, understanding the impact of these delayed events on your analytics initiatives is crucial.

Ignoring or mishandling this late-arriving data can lead decision-makers astray, resulting in inaccurate reports and analytics outcomes that adversely influence your business decisions. Metrics such as customer engagement, real-time personalized offers, churn rate predictions, or even sophisticated predictive models could lose accuracy and reliability, misguiding strategic decisions and budget allocations.

For example, suppose your business implements predictive models designed to analyze customer behaviors based on sequential events. An event’s delay—even by minutes—can lead to models constructing incorrect narratives about the user’s journey. Real-world businesses risk monetary loss, damaged relationships with customers, or missed revenue opportunities from inaccurate analytics.

Clearly, any analytics practice built upon temporal accuracy needs a proactive strategy. At our consulting firm, clients often face challenges like these; understanding exactly how delays impact analytical processes empowers them to implement critical solutions such as improved data infrastructure scalability and real-time analytics practices.

Key Strategies to Handle Late-Arriving Temporal Data

Establish Effective Data Windows and Buffer Periods

Setting up clearly defined temporal windows and buffer times acts as an immediate defensive measure to prevent late-arriving data from upsetting your critical analytical computations. By carefully calibrating the expected maximum possible delay for your dataset, you effectively ensure completeness before initiating costly analytical computations or predictive analyses.

For instance, let’s say your dataset typically arrives in real-time but occasionally encounters external delays. Defining a specific latency threshold or “buffer period” (e.g., 30 minutes) allows you to hold off event-driven workflows just long enough to accept typical late contributions. This controlled approach balances real-time responsiveness with analytical accuracy.
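
A minimal sketch of the buffer idea, assuming hourly windows and the 30-minute buffer used in the example above. The names `WINDOW`, `BUFFER`, and `aggregate` are illustrative; stream processors such as Apache Flink and Apache Beam express similar ideas through watermarks and allowed lateness, but none of their APIs are used here.

```python
from collections import Counter
from datetime import datetime, timedelta, timezone

WINDOW = timedelta(hours=1)      # assumed window size
BUFFER = timedelta(minutes=30)   # assumed tolerance for late arrivals


def window_start(ts: datetime) -> datetime:
    """Truncate a timestamp to the start of its hourly window."""
    return ts.replace(minute=0, second=0, microsecond=0)


def aggregate(events):
    """Count events per window, accepting arrivals until window end + BUFFER."""
    counts = Counter()
    too_late = []
    for event_time, arrival_time in events:
        start = window_start(event_time)
        closes_at = start + WINDOW + BUFFER
        if arrival_time <= closes_at:
            counts[start] += 1
        else:
            too_late.append((event_time, arrival_time))
    return counts, too_late


def at(hour: int, minute: int) -> datetime:
    return datetime(2025, 6, 9, hour, minute, tzinfo=timezone.utc)


events = [
    (at(10, 15), at(10, 16)),  # on time
    (at(10, 50), at(11, 20)),  # late, but inside the buffer
    (at(10, 55), at(11, 45)),  # arrives after the window has closed
]
counts, too_late = aggregate(events)
print(counts[at(10, 0)], len(too_late))
```

Events that miss the buffer are returned rather than silently dropped, so a pipeline can route them to a correction or backfill step. A longer buffer captures more late data at the cost of slower results.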

By intelligently architecting buffer periods, you develop reasonable and robust pipelines resilient against unpredictable delays, as described in-depth through our guide on moving from gut feelings to predictive models. Once established, timely, accurate insights provide better decision support, ensuring forecasts and analytical processes remain trustworthy and reliable despite the underlying complexity of data arrival timings.

Leverage Event Time and Processing Time Analytics Paradigms

Two important paradigms that support your strategic approach when addressing temporal data are event-time and processing-time analytics. Event-time analytics organizes and analyzes data based on when events actually occurred, rather than when they are received or categorized. Processing-time, conversely, focuses strictly on when data becomes known to your system.

When late-arriving events are common, relying solely on processing-time could lead your analytics frameworks to produce skewed reports. However, shifting to event-time analytics allows your frameworks to maintain consistency in historical reports, recognizing the occurrence order irrespective of arrival delays. Event-time analytics offers critically important alignment in analytics tasks, especially for predictive modeling or customer journey analyses.
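
The difference is easy to see with two events, one of which is delayed in transit. This sketch assumes each event carries both an `occurred_at` and a `received_at` timestamp; those field names and the `hourly_counts` helper are hypothetical.

```python
from collections import Counter
from datetime import datetime, timezone


def hourly_counts(events, key: str) -> dict:
    """Count events per hour, bucketing on the chosen timestamp field."""
    counts = Counter()
    for event in events:
        bucket = event[key].replace(minute=0, second=0, microsecond=0)
        counts[bucket.strftime("%H:00")] += 1
    return dict(counts)


def at(hour: int, minute: int) -> datetime:
    return datetime(2025, 6, 9, hour, minute, tzinfo=timezone.utc)


events = [
    {"occurred_at": at(10, 58), "received_at": at(11, 3)},   # delayed in transit
    {"occurred_at": at(11, 10), "received_at": at(11, 11)},
]

by_event_time = hourly_counts(events, "occurred_at")
by_processing_time = hourly_counts(events, "received_at")
print(by_event_time)
print(by_processing_time)
```

Bucketing on `received_at` moves the delayed event into the 11:00 hour and leaves 10:00 empty, while bucketing on `occurred_at` keeps each event in the hour in which it happened.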

Our company’s advanced analytics consulting services focus on guiding businesses through exactly these complex temporality issues, helping decision-makers grasp the strategic importance of the distinction between event time and processing time. Making this shift lets your business derive accurate insight even when late events show up unexpectedly.

Essential Data Engineering Practices to Manage Late Data

Augmenting the Data Processing Pipeline

Developing an efficient, fault-tolerant data processing pipeline is foundational to proactively managing late-arriving events. A critical first step is ensuring your data ingestion pipeline supports rapid scalability and real-time or near-real-time streaming capability. By adopting scalable persistence layers and robust checkpointing capabilities, you preserve the capability to seamlessly integrate late-arriving temporal data into analytical computations without losing accuracy.

Leveraging a reliable SQL infrastructure for querying and analyzing temporal data also becomes vital. Our expertise includes helping clients understand core database concepts through our comprehensive tutorials, such as the resource on SQL syntax best practices, optimizing efficiency in managing complex real-time and historical data.

Additionally, employing architectures such as Lambda and Kappa architectures enables your organization to seamlessly manage fast streaming and batch data processes while effectively handling late data arrivals. These paradigms, emphasizing scalable and reliable pipelines, ensure significant reductions in processing bottlenecks generated by delayed inputs, ultimately positioning you firmly at the forefront of analytics effectiveness.

Semantic Type Recognition and Automation

Embracing semantic understanding simplifies the robust application of automation within your data processing framework. Semantic type recognition helps your system automatically determine how best to interpret, sort, restructure, and intelligently reprocess late-arriving temporal events. As explained in our piece on automated semantic type recognition, this capability can dramatically reduce human intervention, boosting the efficiency of your analytics workflows.

Semantic automation also enables reliable integration of identity graphs optimized for holistic customer insights. Our consulting teams have strongly recommended identity graph construction to businesses so that they can manage late-arriving customer event data and achieve clearer analytical insights and optimized marketing strategies.

Integrating semantic automation proactively mitigates the inherent chaos caused by late event data, strengthening your analytics framework and improving overall confidence in your data.

Advanced Visualization and Reporting Techniques for Late Data

Effective visualization techniques enhance clarity, particularly when managing complex temporal datasets with late-arriving events. Applying interactive, hierarchical visualization techniques like Voronoi Treemaps provides innovative approaches capable of dynamically adjusting visualizations as new events or adjustments emerge. These visual approaches ensure a crystal-clear understanding of data distribution, event timing, and interdependencies, even when data arrival times differ.

Advanced visualization techniques not only help your data analysts and stakeholders quickly comprehend and act upon insights from complex temporal data; they also help your team anticipate unexpected data adjustments and incorporate them into analytical results. With proactive reporting indicators built into dashboards, your team can navigate late data transparently, minimizing uncertainty and maximizing productivity and insight.

Visualization, reporting, and dashboarding strategies backed by solid understanding and creative processes allow your organization to extract genuine value from temporal analytics, positioning your business powerfully ahead in strategic data decision-making.

Positioning Your Business for Success with Late-Arriving Data

by tyler garrett | Jun 9, 2025 | Data Processing

In the age of big data, modern businesses rely heavily on collecting, storing, and analyzing massive amounts of information. Data deduplication has emerged as a vital technology in managing this growing demand, achieving cost reductions and performance efficiency. Yet, as enterprises balance the benefits of deduplication with the complexities of execution, such as increased compute resources, decision-makers uncover the nuanced economic dimensions surrounding this technology. Understanding these trade-offs between storage savings and computational demands can open strategic doors for organizations aiming for agility, cost-efficiency, and streamlined data operations. As experts in data, analytics, and innovation, we at Dev3lop offer our insights into how organizations can leverage these dynamics strategically to gain competitive advantage.

How Data Deduplication Optimizes Storage Efficiency

Every business segment, from healthcare and finance to retail and technology, wrestles with exponential data growth. Repetitive or redundant datasets are common pitfalls as organizations continually generate, share, backup, and replicate files and databases. This inefficiency taxes storage infrastructures, eating away at limited resources and inflating costs. However, data deduplication dramatically shrinks this overhead, identifying and removing redundant chunks of data at block, byte, or file-level—the ultimate goal being that each unique piece of information is stored only once.

Let’s consider a common scenario: without deduplication, repeated backups or replicated data warehouses significantly multiply infrastructure costs. Storage systems quickly grow bloated, requiring frequent and expensive expansions. By implementing deduplication technologies into your existing workflow—particularly if you heavily utilize Microsoft SQL Server consulting services and data warehouse management—you transform your architecture. Storage constraints are minimized, scaling becomes agile and cost-effective, and hardware refresh intervals can be prolonged. Deduplication, therefore, is more than just an optimization; it’s a strategic implementation that propels resilient and budget-friendly IT environments.

The Compute Cost of Data Deduplication: Understanding Processing Demands

While storage savings offered by deduplication present an alluring advantage, one must remember that this benefit doesn’t come entirely free. Data deduplication processes—such as fingerprint calculations, hash comparisons, and block comparison—are compute-intensive operations. They demand CPU cycles, RAM allocation, and can introduce complexity to ingestion workflows and real-time analytics processes, even impacting ingestion patterns such as those discussed in our guide on tumbling window vs sliding window implementation in stream processing.
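
To show where the compute goes, here is a minimal sketch of block-level deduplication that fingerprints fixed-size blocks with SHA-256. The function names and the tiny block size are assumptions made for illustration; production systems often use variable-size chunking and persistent indexes, which this sketch does not attempt.

```python
import hashlib


def deduplicate(data: bytes, block_size: int = 4096):
    """Split data into fixed-size blocks and keep one copy of each distinct block."""
    store = {}    # fingerprint -> block bytes (each unique block stored once)
    recipe = []   # ordered fingerprints needed to rebuild the original
    for offset in range(0, len(data), block_size):
        block = data[offset:offset + block_size]
        fingerprint = hashlib.sha256(block).hexdigest()  # CPU cost paid per block
        store.setdefault(fingerprint, block)
        recipe.append(fingerprint)
    return store, recipe


def restore(store, recipe) -> bytes:
    """Rebuild the original bytes from the block store and the recipe."""
    return b"".join(store[fingerprint] for fingerprint in recipe)


payload = b"A" * 8 + b"B" * 8 + b"A" * 8
store, recipe = deduplicate(payload, block_size=8)
print(len(recipe), "blocks referenced,", len(store), "blocks stored")
```

Every block is hashed whether or not it turns out to be a duplicate, so the compute cost scales with the data ingested while the storage saving depends on how repetitive that data is.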

When deduplication is applied to streaming or real-time analytics workflows, computational overhead can negatively impact fresh data ingestion and latency-sensitive operations. Compute resources required by deduplication might necessitate significant reallocation or upgrades, meaning your decisions around deduplication must reconcile anticipated storage reductions against potential increases in compute expenses. As analytical insights increasingly shift towards real-time streams and live dashboards, understanding this trade-off becomes crucial to protect against declining performance or higher costs associated with infrastructure expansions.

Making strategic decisions here is not about discarding deduplication but about knowing when and how deeply to apply this capability within your continuity planning. For businesses needing strategic guidance on these nuanced implementations, our tailored hourly consulting support can clarify risk thresholds and performance optimization metrics.

Data Accuracy and Deduplication: Precision versus Performance

An overlooked part of deduplication economics is how these processes impact data quality and subsequently analytical accuracy. Storage-optimized deduplication processes may occasionally misalign timestamping or metadata contexts, causing combination and matching errors—particularly when designing auditable data platforms that rely heavily on time-series or event-driven structures. Proper algorithms and meticulous implementations are required to avoid introducing unintended complexity or reduced accuracy into analytical processes.

For example, organizations implementing event sourcing implementation for auditable data pipelines depend heavily on precise sequence alignment and chronological context. Deduplication can inadvertently blur critical details due to assumptions made during redundancy identification—resulting in serious analytical inaccuracies or compliance risks. Therefore, deduplication algorithms need careful examination and testing to ensure they offer storage efficiencies without compromising analytical outcomes.
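
The risk can be illustrated with a small example in which the choice of deduplication key decides whether a genuine repeat event survives. The event fields and the `dedupe` helper are hypothetical and stand in for whatever identity rule a real pipeline uses.

```python
def dedupe(events, key):
    """Keep the first event seen for each key value, preserving order."""
    seen = set()
    kept = []
    for event in events:
        identity = key(event)
        if identity not in seen:
            seen.add(identity)
            kept.append(event)
    return kept


events = [
    {"event_id": "e1", "user": "u42", "action": "add_to_cart", "ts": "2025-06-09T10:00:00Z"},
    # Same event delivered twice by the transport layer:
    {"event_id": "e1", "user": "u42", "action": "add_to_cart", "ts": "2025-06-09T10:00:00Z"},
    # A genuine second action five minutes later:
    {"event_id": "e2", "user": "u42", "action": "add_to_cart", "ts": "2025-06-09T10:05:00Z"},
]

by_payload = dedupe(events, key=lambda e: (e["user"], e["action"]))
by_event_id = dedupe(events, key=lambda e: e["event_id"])
print(len(by_payload), len(by_event_id))
```

Matching on content alone collapses all three records into one and erases the second action from the audit trail, whereas matching on a stable event identifier removes only the redelivery.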

As a strategic leader actively using data for decision-making, it’s essential to validate and refine deduplication techniques continuously. Consider implementing best practices like rigorous data lineage tracking, comprehensive metadata tagging, and precise quality testing to balance deduplication benefits against potential analytical risks.

Architectural Impact of Deduplication on Data Platforms

Beyond the core economics of storage versus computation, the architectural implications of deduplication significantly influence organizational agility. The timing (whether deduplication occurs inline as data arrives or offline as a post-processing action) can dramatically change architectural strategies. Inline deduplication conserves storage more aggressively but increases upfront compute requirements, affecting real-time data ingestion efficiency and resource allocation. Offline deduplication defers the compute load but temporarily requires extra storage to hold data until it is processed, which can disrupt operations such as data-intensive ingestion and analytics workflows.

Moreover, architectural decisions transcend just “where” deduplication occurs—data teams need to consider underlying code management strategies, infrastructure agility, and scalability. Organizations exploring flexible code management methods, as explained in our article on polyrepo vs monorepo strategies for data platforms code management, will find the choice of deduplication patterns intertwined directly with these longer-term operational decisions.

The takeaway? Strategic architectural thinking matters. Identify clearly whether your organization values faster ingestion, lower storage costs, near real-time processing, or long-term scale. Then, align deduplication strategies explicitly with these core priorities to achieve sustained performance and value.

Data Visualization and Deduplication: Shaping Your Analytical Strategy

Data visualization and dashboarding strategies directly benefit from efficient deduplication through reduced data latency, accelerated query responses, and cost-effective cloud visual analytics deployments. Effective use of deduplication means data for visualization can be accessed more quickly and processed efficiently. For instance, as we discussed in our comparison of data visualization techniques, fast dashboards and visualizations require timely data availability and strong underlying infrastructure planning.

However, to further capitalize on deduplication techniques effectively, it’s vital first to assess your dashboards’ strategic value, eliminating inefficiencies in your visualization methodology. If misaligned, needless dashboards drain resources and blur strategic insights—prompting many teams to ask how best to kill a dashboard before it kills your strategy. Proper implementation of deduplication becomes significantly more effective when fewer redundant visualizations clog strategic clarity.

Finally, providing new stakeholders and visualization consumers with compelling guides or onboarding experiences such as described in our article on interactive tour design for new visualization users ensures they effectively appreciate visualized data—now supported by quick, efficient, deduplication-driven data pipelines. Thus, the economics and benefits of deduplication play a pivotal role in maintaining analytical momentum.

A Strategic Approach to Deduplication Trade-off Decision Making

Data deduplication clearly delivers quantifiable economic and operational benefits, offering substantial storage efficiency as its central appeal. Yet organizations must grapple effectively with the computational increases and architectural considerations it brings, avoiding pitfalls through informed analyses and strategic implementation.

The decision-making process requires careful evaluation, testing, and validation. First, evaluate upfront and operational infrastructural costs weighed against storage savings. Second, ensure deduplication aligns with your analytical accuracy and architectural resilience. Ultimately, measure outcomes continuously through clear KPIs and iterative refinements.
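
The first step, weighing costs against storage savings, comes down to simple arithmetic. The sketch below assumes a flat per-terabyte storage price and a fixed monthly compute overhead; all figures are invented for illustration and do not come from any real price list.

```python
def monthly_net_saving(logical_tb: float, dedup_ratio: float,
                       storage_cost_per_tb: float, extra_compute_cost: float) -> float:
    """Storage cost avoided by deduplication minus the compute cost it adds, per month."""
    if dedup_ratio < 1:
        raise ValueError("dedup_ratio is logical size / stored size and must be >= 1")
    stored_tb = logical_tb / dedup_ratio
    storage_saving = (logical_tb - stored_tb) * storage_cost_per_tb
    return storage_saving - extra_compute_cost


# Hypothetical workload: 100 TB of logical data, $20 per TB-month, $600 extra compute.
highly_redundant = monthly_net_saving(100, dedup_ratio=4.0,
                                      storage_cost_per_tb=20.0, extra_compute_cost=600.0)
mostly_unique = monthly_net_saving(100, dedup_ratio=1.2,
                                   storage_cost_per_tb=20.0, extra_compute_cost=600.0)
print(f"4:1 ratio: {highly_redundant:+.2f}  1.2:1 ratio: {mostly_unique:+.2f}")
```

With these made-up numbers, a 4:1 ratio comes out ahead while a 1.2:1 ratio loses money, which is why measuring the actual deduplication ratio on representative data matters before committing.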

At Dev3lop, we specialize in helping companies craft robust data strategies grounded in these insights. Through strategic engagements and a deep foundation of industry expertise, we assist clients in navigating complex trade-offs and achieving their goals confidently.

Ready to elevate your data deduplication decisions strategically? Our experienced consultants are here to support targeted exploration, tailored implementations, and impactful results. Contact Dev3lop today and start shifting your priorities from simple storage economics to actionable strategic advantage.

by tyler garrett | Jun 9, 2025 | Data Processing

Imagine you’re an analytics manager reviewing dashboards in London, your engineering team is debugging SQL statements in Austin, and a client stakeholder is analyzing reports from a Sydney office. Everything looks great until you suddenly realize numbers aren’t lining up—reports seem out of sync, alerts are triggering for no apparent reason, and stakeholders start flooding your inbox. Welcome to the subtle, often overlooked, but critically important world of time zone handling within global data processing pipelines. Time-related inconsistencies have caused confusion, errors, and countless hours spent chasing bugs for possibly every global digital business. In this guide, we’re going to dive deep into the nuances of managing time zones effectively—so you can avoid common pitfalls, keep your data pipelines robust, and deliver trustworthy insights across global teams, without any sleepless nights.

The Importance of Precise Time Zone Management

Modern companies rarely function within a single time zone. Their people, customers, and digital footprints exist on a global scale. This international presence means data collected from different geographic areas will naturally have timestamps reflecting their local time zones. However, without proper standardization, even a minor oversight can lead to severe misinterpretations, inefficient decision making, and operational hurdles.

At its core, handling multiple time zones accurately is no trivial challenge—one need only remember the headaches that accompany daylight saving shifts or determining correct historical timestamp data. Data processing applications, streaming platforms, and analytics services must take special care to record timestamps unambiguously, ideally using coordinated universal time (UTC).

Consider how important precisely timed data is when implementing advanced analytics models, like the fuzzy matching algorithms for entity resolution that help identify duplicate customer records from geographically distinct databases. Misalignment between datasets can result in inaccurate entity recognition, risking incorrect reporting or strategic miscalculations.

Proper time zone handling is particularly critical in event-driven systems or related workflows requiring precise sequencing for analytics operations, such as guaranteeing accuracy in solutions employing exactly-once event processing mechanisms. To drill deeper, explore our recent insights on exactly-once processing guarantees in stream processing systems.

Common Mistakes to Avoid with Time Zones

One significant error we see repeatedly in our work providing data analytics strategy and MySQL consulting services at Dev3lop is reliance on local system timestamps without specifying the associated time zone explicitly. This common practice assumes implicit knowledge and leads to ambiguity. In most database and application frameworks, timestamps without time zone context eventually cause headaches.
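
The ambiguity is easy to reproduce with Python’s standard `datetime` module. In this sketch the `require_aware` guard is a hypothetical helper, and the fixed offsets of +10:00 and -05:00 are illustrative stand-ins for Sydney and Austin in June.

```python
from datetime import datetime, timedelta, timezone


def require_aware(ts: datetime) -> datetime:
    """Reject timestamps that carry no UTC offset instead of guessing one."""
    if ts.tzinfo is None or ts.utcoffset() is None:
        raise ValueError(f"naive timestamp rejected: {ts.isoformat()}")
    return ts


naive = datetime(2025, 6, 9, 9, 0)  # 09:00, but in which office?

# Fixed offsets chosen for illustration only.
as_sydney = naive.replace(tzinfo=timezone(timedelta(hours=10)))
as_austin = naive.replace(tzinfo=timezone(timedelta(hours=-5)))

gap = as_austin - as_sydney
print(f"same wall-clock reading, {gap} apart")
```

The same "09:00" refers to two instants fifteen hours apart depending on the reader’s assumption, which is why a guard like `require_aware` at ingestion is cheaper than untangling the data later.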

Another frequent mistake is assuming all servers or databases use uniform timestamp handling practices across your distributed architecture. A lack of uniform practices or discrepancies between layers within your infrastructure stack can silently introduce subtle errors. A seemingly minor deviation—from improper timestamp casting in database queries to uneven handling of daylight saving changes in application logic—can escalate quickly and unnoticed.

Many companies also underestimate the complexity involved with historical data timestamp interpretation. Imagine performing historical data comparisons or building predictive models without considering past daylight saving transitions, leap years, or policy changes regarding timestamp representation. These oversights can heavily skew analysis and reporting accuracy, causing lasting unintended repercussions. Avoiding these pitfalls means committing upfront to a coherent strategy of timestamp data storage, consistent handling, and centralized standards.
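The daylight saving pitfalls described above can be reproduced in a few lines. The following is a sketch in standard-library Python using the 2025 United States transitions as an example; the chosen zone and dates are assumptions for illustration only.

```python
from datetime import datetime, timezone
from zoneinfo import ZoneInfo

NY = ZoneInfo("America/New_York")

# US clocks moved forward on 2025-03-09, so this local "day" was only 23 hours long.
before = datetime(2025, 3, 8, 12, 0, tzinfo=NY)
after = datetime(2025, 3, 9, 12, 0, tzinfo=NY)

# Subtracting two datetimes that share a tzinfo is wall-clock arithmetic in Python.
wall_clock_hours = (after - before).total_seconds() / 3600

# Converting both to UTC first yields the real elapsed time.
elapsed_hours = (
    after.astimezone(timezone.utc) - before.astimezone(timezone.utc)
).total_seconds() / 3600

# When clocks move back (2025-11-02), 01:30 local time happens twice.
# The `fold` attribute selects the first (0) or second (1) occurrence.
first_0130 = datetime(2025, 11, 2, 1, 30, tzinfo=NY)
second_0130 = datetime(2025, 11, 2, 1, 30, fold=1, tzinfo=NY)

print(wall_clock_hours, elapsed_hours)
print(first_0130.utcoffset(), second_0130.utcoffset())
```

A duration metric computed from local wall-clock values would be off by an hour here, and the repeated 01:30 reading cannot be ordered correctly without the offset.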

For a deeper understanding of missteps we commonly see our clients encounter, review this article outlining common data engineering anti-patterns to avoid.

Strategies and Best Practices for Proper Time Zone Handling

The cornerstone of proper time management in global data ecosystems is straightforward: standardize timestamps to UTC upon data ingestion. This keeps time data consistent, easy to integrate with external sources, and simple for downstream analytics platforms to consume. Additionally, store the original offset or time zone identifier alongside each UTC timestamp, so the value can be translated back to local event time when end users need it.
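As a rough illustration of that ingestion rule, the sketch below normalizes a local wall-clock reading to UTC and keeps the source zone and offset next to it. `StoredEvent`, `ingest`, and `to_local` are hypothetical names introduced here, and the sketch assumes the incoming string is a naive ISO 8601 local time whose IANA zone is known.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from zoneinfo import ZoneInfo


@dataclass(frozen=True)
class StoredEvent:
    """Hypothetical storage record: canonical UTC instant plus local context."""
    occurred_at_utc: datetime
    source_zone: str          # IANA zone name, e.g. "Europe/Berlin"
    utc_offset_minutes: int   # offset that was in effect at event time


def ingest(local_wall_time: str, zone_name: str) -> StoredEvent:
    """Attach the known zone to a naive local reading, then normalize to UTC."""
    local = datetime.fromisoformat(local_wall_time).replace(tzinfo=ZoneInfo(zone_name))
    offset = local.utcoffset()
    return StoredEvent(
        occurred_at_utc=local.astimezone(timezone.utc),
        source_zone=zone_name,
        utc_offset_minutes=int(offset.total_seconds() // 60),
    )


def to_local(event: StoredEvent) -> datetime:
    """Translate the stored instant back to the original local time for display."""
    return event.occurred_at_utc.astimezone(ZoneInfo(event.source_zone))


event = ingest("2025-06-11T09:30:00", "Europe/Berlin")
print(event.occurred_at_utc.isoformat(), event.utc_offset_minutes)
print(to_local(event).isoformat())
```

Keeping the zone name as well as the numeric offset is a design choice: the offset records what was true at event time, while the zone name lets later conversions follow that region's rules.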

Centralize your methodology and codify timestamp handling logic within authoritative metadata solutions. Consider creating consistent time zone representations by maintaining time zone identifiers in “code tables” or domain tables; check our article comparing “code tables vs domain tables implementation strategies” for additional perspectives on managing reference and lookup data robustly.

Maintain clear documentation of your time-handling conventions across your entire data ecosystem, so that global teams share a consistent understanding, and lean on robust documentation practices that support metadata-driven governance. Learn more in our deep dive on data catalog APIs and metadata access patterns, which provide programmatic control suitable for distributed teams.

Finally, remain vigilant during application deployment and testing phases, especially when running distributed components in different geographies. Simulation-based testing and automated regression test cases for time-dependent logic are essential before deployment: by faithfully reproducing global usage scenarios, you catch bugs before they reach production, where remediation is usually significantly more complex.
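One way to approach such regression tests is a small table of known instants and the results expected in each region. The sketch below is an assumption-laden example: `local_business_date` is a hypothetical function standing in for whatever time-dependent logic your pipeline contains, and the cases were picked to cross date boundaries and a daylight saving transition.

```python
from datetime import date, datetime, timezone
from zoneinfo import ZoneInfo


def local_business_date(instant_utc: datetime, zone_name: str) -> date:
    """Hypothetical logic under test: the calendar date an event falls on locally."""
    if instant_utc.tzinfo is None:
        raise ValueError("expected a timezone-aware datetime")
    return instant_utc.astimezone(ZoneInfo(zone_name)).date()


# (instant in UTC, zone, expected local date)
REGRESSION_CASES = [
    # Late evening UTC is already the next day in Tokyo.
    (datetime(2025, 6, 11, 23, 30, tzinfo=timezone.utc), "Asia/Tokyo", date(2025, 6, 12)),
    # Early morning UTC is still the previous day in Los Angeles.
    (datetime(2025, 6, 11, 2, 0, tzinfo=timezone.utc), "America/Los_Angeles", date(2025, 6, 10)),
    # Shortly after the 2025 spring-forward transition in New York.
    (datetime(2025, 3, 9, 7, 30, tzinfo=timezone.utc), "America/New_York", date(2025, 3, 9)),
]


def run_regression_cases() -> list:
    """Return the failing cases; an empty list means everything passed."""
    failures = []
    for instant, zone, expected in REGRESSION_CASES:
        actual = local_business_date(instant, zone)
        if actual != expected:
            failures.append((instant, zone, expected, actual))
    return failures


print(run_regression_cases())
```

Because every case passes a fixed instant instead of reading the system clock, the suite gives the same answer on a build server in any geography.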

Leveraging Modern Tools and Frameworks for Time Zone Management

Fortunately, organizations aren’t alone in the battle with complicated time zone calculations. Modern cloud-native data infrastructure, globally distributed databases, and advanced analytics platforms have evolved powerful tools for managing global timestamp issues seamlessly.

Data lakehouse architectures, in particular, bring together the schema governance and elasticity of data lakes with structured view functionality akin to traditional data warehousing practices. When configured with schema enforcement rules, these systems can enforce timestamp standardization and unambiguous metadata handling. For transitioning teams wrestling with heterogeneous time data, migrating to an integrated data lakehouse approach can genuinely streamline interoperability and consistency. Learn more about these practical benefits from our detailed analysis on the “data lakehouse implementation bridging lakes and warehouses”.

Similarly, adopting libraries that handle time zones consistently, such as Luxon or date-fns (common replacements for Moment.js) in JavaScript applications, or Joda-Time and the date-time APIs built into Java 8 and later in Java-based apps, can significantly reduce manual overhead and offset-handling errors within your teams. Always aim for standardized libraries that explicitly handle intricate details like daylight saving transitions and historical time zone shifts.

Delivering Global Personalization Through Accurate Timing

One crucial area where accurate time zone management shines brightest is delivering effective personalization strategies. As companies increasingly seek competitive advantage through targeted recommendations and contextual relevance, knowing exactly when your user interacts within your application or website is paramount. Timestamp correctness transforms raw engagement data into valuable insights for creating genuine relationships with customers.

For businesses focusing on personalization and targeted experiences, consider strategic applications built upon context-aware data policies. Accurate timing lets you apply strict rules, conditions, and filters based on timestamps and user locations to tailor experiences precisely. See our recent exploration of “context-aware data usage policy enforcement” to learn more about these cutting-edge strategies.
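A timestamp-and-location rule of this kind might look like the following sketch, which checks whether a given instant falls inside a user's local messaging window. The function name, the 09:00 to 20:00 window, and the example zones are all assumptions made for illustration.

```python
from datetime import datetime, time, timezone
from zoneinfo import ZoneInfo


def in_local_send_window(
    instant_utc: datetime,
    user_zone: str,
    start: time = time(9, 0),
    end: time = time(20, 0),
) -> bool:
    """Return True if the instant falls within [start, end) in the user's local time."""
    local_time = instant_utc.astimezone(ZoneInfo(user_zone)).time()
    return start <= local_time < end


# One fixed instant evaluated for users in three different regions.
now_utc = datetime(2025, 6, 11, 14, 0, tzinfo=timezone.utc)
for zone in ("America/Chicago", "Europe/Berlin", "Australia/Sydney"):
    print(zone, in_local_send_window(now_utc, zone))
```

The same UTC instant is mid-morning for one user and midnight for another, so the rule has to be evaluated per user zone instead of once per campaign.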

Coupled with accurate timestamp handling, personalized analytics dashboards, real-time triggered messaging, targeted content suggestions, and personalized product offers become trustworthy automated recommendations that reflect actual consumer behavior based on time-sensitive metrics and events. For more insights into enhancing relationships through customized experiences, visit our article “Personalization: The Key to Building Stronger Customer Relationships and Boosting Revenue”.

Wrapping Up: The Value of Strategic Time Zone Management

Mastering globalized timestamp handling within your data processing frameworks protects the integrity of analytical insights, product reliability, and customer satisfaction. By uniformly embracing standards, leveraging modern frameworks, documenting thoroughly, and systematically avoiding common pitfalls, teams can mitigate confusion effectively.

Our extensive experience guiding complex enterprise implementations and analytics projects has shown us that ignoring timestamp nuances and global data handling requirements ultimately causes severe, drawn-out headaches. Plan deliberately from the start by embracing strong timestamp choices, unified standards, rigorous testing strategies, and careful integration into your data governance frameworks.

Let Your Data Drive Results—Without Time Zone Troubles

With clear approaches, rigorous implementation, and strategic adoption of good practices, organizations can confidently ensure global timestamp coherence. Data quality, reliability, and trust depend heavily on precise time management strategies. Your organization deserves insightful and actionable analytics—delivered on schedule, around the globe, without any headaches.

by tyler garrett | Jun 9, 2025 | Data Processing

Picture this: your business is thriving, your user base is growing, and the data flowing into your enterprise systems is swelling exponentially every single day. Success, however, can quickly turn into chaos when poorly planned data architecture choices begin to falter under the growing pressures of modern analytics and real-time demands. Enter the critical decision: to push or to pull? Choosing between push and pull data processing architectures might seem purely technical, but it is a strategic choice that affects the responsiveness, scalability, and clarity of your analytics and operations. Let’s demystify these two architecture strategies, uncover their pros and cons, and help you decide what your organization needs to transform raw data into actionable intelligence.

Understanding the Basics of Push and Pull Architectures

At its most fundamental level, the distinction between push and pull data processing architectures rests in who initiates the data transfer. In a push architecture, data streams are proactively delivered to subscribers or consumers as soon as they’re available, making it ideal for building real-time dashboards with Streamlit and Kafka. Think of it like news alerts or notifications on your mobile phone—content is actively pushed to you without any manual prompting. This predefined data flow emphasizes immediacy and operational efficiency, setting enterprises up for timely analytics and real-time decision-making.

Conversely, pull architectures place the initiation of data retrieval squarely onto consumers. In essence, users and analytical tools query data directly when they have specific needs. You can visualize pull data architectures as browsing through an online library, selecting and retrieving only the information that’s directly relevant to your current query or analysis. This model prioritizes efficiency, cost management, and lower ongoing demand on processing resources, since data transfer takes place only when explicitly requested. It fits well into data analytics scenarios that require deliberate, on-demand access.

While each architecture has its rightful place in the ecosystem of data processing, understanding their application domains and limitations helps make a smart strategic decision about your organization’s data infrastructure.
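The difference in who initiates the transfer can be sketched in a few lines of Python. `PushFeed` and `PullStore` are hypothetical in-memory stand-ins, not the API of Kafka or any other product; a real system would add networking, persistence, and backpressure.

```python
from typing import Callable, Dict, List

Record = Dict[str, int]


class PushFeed:
    """Producer-initiated: every subscriber is called as soon as a record arrives."""

    def __init__(self) -> None:
        self._subscribers: List[Callable[[Record], None]] = []

    def subscribe(self, handler: Callable[[Record], None]) -> None:
        self._subscribers.append(handler)

    def publish(self, record: Record) -> None:
        for handler in self._subscribers:
            handler(record)


class PullStore:
    """Consumer-initiated: records wait in the store until someone asks for them."""

    def __init__(self) -> None:
        self._records: List[Record] = []

    def append(self, record: Record) -> None:
        self._records.append(record)

    def fetch_since(self, offset: int, limit: int = 100) -> List[Record]:
        return self._records[offset:offset + limit]


# Push: the consumer registers once and then receives data without asking.
received: List[Record] = []
feed = PushFeed()
feed.subscribe(received.append)
feed.publish({"order_id": 1})

# Pull: nothing reaches the consumer until it issues a request.
store = PullStore()
store.append({"order_id": 1})
store.append({"order_id": 2})
page = store.fetch_since(offset=1)

print(received, page)
```

In the push case the producer's `publish` call does the work of delivery; in the pull case the consumer decides when and how much to read by choosing `offset` and `limit`.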

The Strengths of Push Data Processing

Real-Time Responsiveness

Push data processing architectures excel in bolstering rapid response-time capabilities by streaming data directly to users or analytical systems. Enterprises requiring instantaneous data availability for precise operational decisions gravitate toward push architectures to stay ahead of the competition. For instance, utilizing push architectures is crucial when working on tasks like precise demand prediction and forecasting, enabling timely responses that inform automated inventory management and pricing strategies promptly.

Event-Driven Innovation

A key strength of push architectures comes from their ability to facilitate event-driven processing, supporting responsive business transformations. Leveraging event-driven architecture helps unlock innovations like real-time machine learning models and automated decision-making support systems—key capabilities that define cutting-edge competitive advantages in industries ranging from logistics to e-commerce. By efficiently streaming relevant data immediately, push architectures align seamlessly with today’s fast-paced digital transformations, influencing customer experiences and driving operational efficiency on demand.

Guaranteeing Precise Delivery

Push-based streaming platforms can be configured to provide exactly-once processing guarantees in stream processing systems, typically by combining reliable delivery with idempotent or transactional writes. This significantly reduces duplicate records and data loss, creating the reliability enterprises need for critical applications like financial reporting, automated compliance monitoring, and predictive analytics. With dependable delivery semantics, push data processing cements itself as a go-to option for mission-critical systems and real-time analytics.
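One common way to get an exactly-once effect is to accept that a message may be delivered more than once and make the consumer idempotent. The sketch below shows that idea only; `IdempotentLedger` and the event IDs are hypothetical, and a production system would persist the seen IDs atomically with the state change.

```python
from typing import List, Set, Tuple


class IdempotentLedger:
    """Applies each event at most once, even if the stream redelivers it."""

    def __init__(self) -> None:
        self.balance = 0
        self._seen_ids: Set[str] = set()

    def apply(self, event_id: str, amount: int) -> bool:
        """Return True if the event changed state, False if it was a duplicate."""
        if event_id in self._seen_ids:
            return False
        self._seen_ids.add(event_id)
        self.balance += amount
        return True


# "evt-1" arrives twice, as can happen after a retry or a consumer restart.
deliveries: List[Tuple[str, int]] = [("evt-1", 100), ("evt-2", -30), ("evt-1", 100)]

ledger = IdempotentLedger()
applied = [ledger.apply(event_id, amount) for event_id, amount in deliveries]

print(applied, ledger.balance)
```

The duplicate delivery is detected by its ID and ignored, so the final balance reflects each event exactly once.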

The Advantages Found Within Pull Data Processing

On-Demand Data Flexibility

Pull architectures offer unmatched flexibility by driving data consumption based on genuine business or analytic needs. This means that rather than passively receiving their data, analysts and software systems actively request and retrieve only what they need, precisely when they need it. This approach significantly streamlines resources and ensures cost-effective scalability. As a result, pull-based architectures are commonly found powering exploratory analytics and ad-hoc reporting scenarios—perfect for businesses aiming to uncover hidden opportunities through analytics.

Simplicity in Data Integration and Analytics

Pull architectures naturally align well with traditional analytic workloads and batch-driven processing. Analysts and business decision-makers commonly rely on user-driven data retrieval for analytical modeling, research, and insightful visualizations. From business intelligence to deep analytical exploration, pull architectures allow enterprise analytics teams to carefully filter and select datasets relevant to specific decision contexts—helping organizations enhance their insights without experiencing information overload. After all, the clarity facilitated by pull architectures can substantially boost the effectiveness and quality of decision-making by streamlining data availability.

Predictable Resource Management & Lower Costs

Perhaps one of the key advantages of choosing pull architectures revolves around their clear, predictable resource cost structure. Infrastructure costs and resource consumption often follow simplified and transparent patterns, reducing surprises in enterprise budgets. As opposed to the demands of always-active push workflows, pull data systems remain relatively dormant except when queried. This inherently leads to optimized infrastructure expenses, yielding significant long-term savings for businesses where scalability, controlling data utilization, and resource predictability are paramount concerns. Thus, organizations gravitating toward pull strategies frequently enjoy greater flexibility in resource planning and cost management.

Choosing Wisely: Which Architecture Fits Your Needs?

The push or pull architecture decision largely depends on a comprehensive understanding of your organizational priorities, real-time processing requirements, analytics sophistication, and business model complexity. It’s about matching data processing solutions to clearly defined business and analytics objectives.

Enterprises looking toward event-driven innovation, real-time operational control, advanced AI, or automated decision-making typically find substantial value in the immediacy provided by push architectures. Consider environments where high-value analytics rely on rapidly available insights—transitioning toward push could provide transformative effects. To master the complexities of real-time data ecosystems effectively, it’s essential to leverage contemporary best practices, including modern Node.js data processing techniques or semantic capabilities such as semantic type recognition, enabling automated, rapid analytics.

Alternatively, pull data processing structures typically optimize environments heavily reliant on ad-hoc analytics, simpler data reporting needs, and relaxed analytics timelines. Organizations operating within established data maturity models that thrive on manual assessment or clearly defined analytical workflows typically find pull data frameworks both efficient and cost-effective.

Developing a Balanced Approach: Hybrid Architectures

As data analytics matures, strategic thinkers have recognized that neither push nor pull alone completely satisfies complex enterprise needs. Increasingly, balanced hybrid data architectures utilizing both push and pull elements are emerging as powerful evolution paths, harmonizing real-time analytics with batch processing capabilities and situational, economical data use. This balanced strategy uniquely fuels targeted analytics opportunities and unlocks robust data visualizations, key for strengthening your organization’s decision-making culture (read more about data visualization in business here).

By strategically combining push responsiveness for swifter time-to-value and decision speed alongside pull’s resource-efficient analytics flexibility, organizations unlock a specialized data analytics capability uniquely tailored to their evolving business landscape. Leaning into a hybrid data architecture strategy often requires expert guidance, which is precisely the sort of innovation partnering offered by specialists in data analytics consulting or specialized AI agent consulting services. Leveraging such expertise helps guarantee precisely the coherent architecture your organization needs—scalable, sustainable, and strategic.
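A hybrid design can be sketched as a single ingestion path that always retains records for later queries and pushes only the urgent ones immediately. `HybridPipeline`, the urgency rule, and the sample records are assumptions made for illustration, not a reference architecture.

```python
from typing import Callable, Dict, List

Record = Dict[str, float]


class HybridPipeline:
    """Stores every record for pull-style queries; pushes only urgent ones."""

    def __init__(
        self,
        is_urgent: Callable[[Record], bool],
        on_urgent: Callable[[Record], None],
    ) -> None:
        self._is_urgent = is_urgent
        self._on_urgent = on_urgent
        self._history: List[Record] = []

    def ingest(self, record: Record) -> None:
        self._history.append(record)      # always available to pull later
        if self._is_urgent(record):
            self._on_urgent(record)       # pushed to the handler right away

    def query(self, predicate: Callable[[Record], bool]) -> List[Record]:
        return [record for record in self._history if predicate(record)]


alerts: List[Record] = []
pipeline = HybridPipeline(
    is_urgent=lambda record: record["amount"] >= 1000,  # assumed urgency rule
    on_urgent=alerts.append,
)

for amount in (25.0, 4200.0, 310.0):
    pipeline.ingest({"amount": amount})

# Analysts pull on demand; only the large transaction was pushed as an alert.
small_orders = pipeline.query(lambda record: record["amount"] < 500)
print(alerts, small_orders)
```

The push path stays narrow and cheap because it carries only the records that need an immediate reaction, while everything else waits for an explicit query.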

Conclusion: Strategically Align Your Architecture to Your Ambitions

The choice between push vs pull data processing architectures represents more than mere technical details—it’s integral to supporting your organization’s strategic goals and enabling operational excellence. Thoughtful, strategic decision-making is paramount because these choices profoundly influence not only data handling efficiencies but the very capability of your business to innovate wisely and harness powerful, timely analytics proactively.

Whether you choose to embrace a purely push or purely pull approach, or tap into hybrid data processing architectures, approaching these foundational decisions strategically shapes not only your immediate analytics agility but your enterprise-scale opportunities to competitively thrive now and into the future.

Tags: Push Data Processing, Pull Data Processing, Real-Time Analytics, Data Integration, Data Architecture, Hybrid Data Architecture